Question: Are OpenAI, Anthropic, and Google building autonomous AI agents like OpenClaw?

Quick Answer: Yes — and as of April 2026, all three have shipped GA-quality products. OpenAI launched Workspace Agents as the no-code successor to custom GPTs (April 2026), built on top of its Frontier enterprise platform (February 2026) and powered by the Codex agent infrastructure. Anthropic moved Claude Cowork + Skills from research preview to general availability on April 9, 2026, alongside its new Managed Agents layer for enterprise governance. Google announced Workspace Intelligence at Cloud Next 2026 (April 22) — a semantic layer that gives Gemini agents shared context across email, files, chats, and projects — plus the rebranded Gemini Enterprise Agent Platform (formerly Vertex AI). All three have adopted MCP (10,000+ servers, 97 million monthly SDK downloads) as the interoperability standard. With 89% of business teams already using AI agents and the average organization running 12, the question isn't whether agents are coming to your business — it's whether you'll be ready when the major platforms make them the default operating mode.

OpenClaw Was the Match. The Big Labs Are the Wildfire.

In January 2026, an Austrian engineer's hobby project called OpenClaw hit 160,000 GitHub stars in weeks — proving that autonomous AI agents weren't a research abstraction anymore. They were tools real people could deploy on their laptops to execute real tasks across real systems.

As we detailed in our analysis of the OpenClaw wake-up call, that moment exposed something most organizations weren't ready to hear: your employees are already using autonomous AI agents, whether you've sanctioned them or not.

But here's what happened next — and what most coverage missed.

Within weeks of OpenClaw's viral explosion, the three most powerful AI companies on earth accelerated their own agent strategies. Not because OpenClaw threatened their business. Because OpenClaw proved the demand was real, the technology was mature enough, and the workforce wasn't going to wait for corporate approval.

89% of business teams now use AI agents. The average organization runs 12. They're planning to increase to 20 within two years. And 93% of leaders believe that companies who successfully scale agents in the next 12 months will gain an edge over industry peers.

OpenClaw was the match. OpenAI, Anthropic, and Google are building the wildfire — with industrial-grade fuel and corporate-approved fire lanes.

What Each Lab Is Actually Shipping

The easiest way to misunderstand this moment is to think these companies are building chatbots with extra features. They're not. They're building autonomous systems that see your screen, control your browser, write your code, and coordinate with other agents — with varying degrees of human oversight.

Here's what's actually live and shipping right now.

OpenAI: Workspace Agents, Frontier, and Operator

OpenAI's agent strategy now spans three layers, all shipped between February and April 2026.

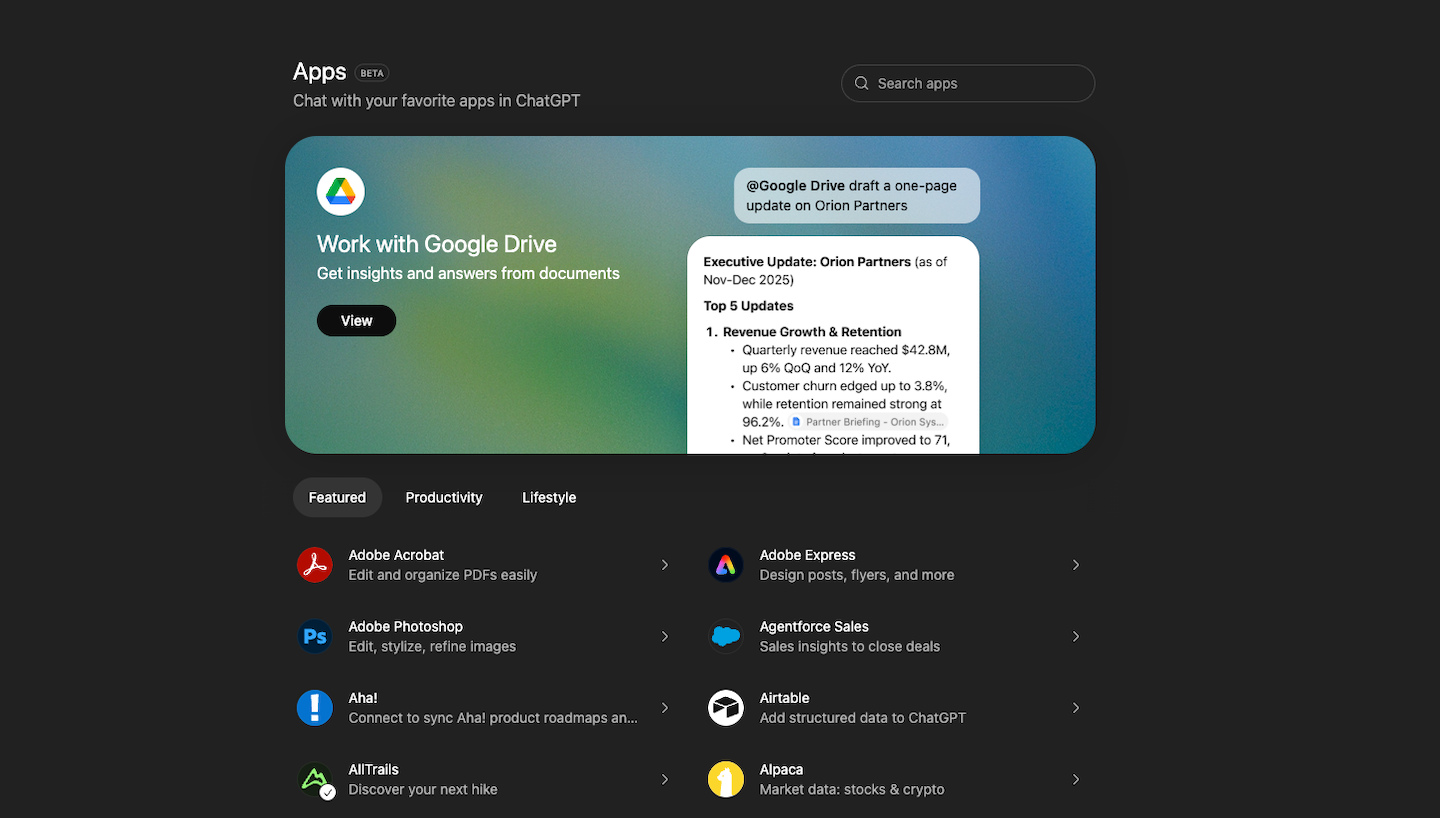

Workspace Agents (April 2026) is the no-code, in-product layer for ChatGPT business customers — the successor to custom GPTs. It plugs directly into Slack, Salesforce, HubSpot, and other enterprise tools, executing multi-step workflows on permission-scoped context. Free through May 6, 2026, then credit-based.

Frontier (February 2026) is the enterprise platform underneath: shared business context, execution environments, evaluation frameworks, and permission systems for managing fleets of AI coworkers rather than single chat windows.

Operator is the underlying browser-automation engine: a Computer-Using Agent (CUA) that navigates web pages by viewing pixels, clicking buttons, and entering text. It reportedly reaches an 87% success rate on complex browser tasks such as booking international travel, ordering groceries from recipe ingredients, and managing procurement workflows. Operator runs in Watch Mode (suggestions) or Takeover Mode (autonomous execution), with automatic pauses on sensitive sites like email and banking.

The strategic move: OpenAI has decided the future of ChatGPT-for-work is fleets of permissioned agents, not single chat windows. Custom GPTs were not enough.

Anthropic: Cowork, Skills, and Managed Agents

Anthropic took a different path: it built around the knowledge work itself rather than around a standalone agent.

Claude Cowork went GA on April 9, 2026 (after a research preview launch in January). It's an agentic environment inside the Claude Desktop app where you give Claude a goal, and it works on your computer — local files, applications, browser — to deliver a finished output. Researchers, analysts, ops teams, legal, and finance teams are the explicit target. Available now on macOS and Windows for paying subscribers.

Skills are the unit of capability inside Cowork. Each Skill is a discrete, reusable behavior — a way Claude knows how to do a specific kind of work (close monthly books, write a customer brief, prepare for a sales call). Skills compose: Claude picks the right one for the task and stitches them together when the work spans multiple domains.

Managed Agents (also April 9, 2026) is the enterprise layer: governed agents with plug-ins for finance, engineering, and design, deployed under the same controls Microsoft already uses for Claude on Azure AI Foundry. These are the agents IT departments will sanction.

The capability ceiling is still striking. Claude Opus 4.6 with a 1-million-token context window has independently discovered over 500 previously unknown zero-day vulnerabilities in open-source software, in several cases inventing novel detection methods when conventional tools failed. Claude Code (the developer-focused harness) drives multi-file feature builds with sub-agents working in parallel. Cowork brings that same pattern to non-developers.

Google: Workspace Intelligence and the Gemini Enterprise Agent Platform

Google's full-stack bet against OpenAI and Anthropic landed at Cloud Next 2026 on April 22.

Workspace Intelligence is the headline: a new semantic layer that maps emails, chats, files, collaborators, and active projects into shared context that Gemini-powered agents can act on across the suite. Sheets gets natural-language spreadsheet building plus third-party imports from HubSpot and Salesforce. Docs can generate infographics grounded in business data. Slides generates editable decks in one pass using company templates. Drive adds Drive Projects as a shared context hub. The rollout covers Business Starter through Enterprise Plus — Workspace customers get this as a tier upgrade, not a separate product.

Gemini Enterprise Agent Platform is what Vertex AI was rebranded into at the same event. Agentspace got absorbed into the unified Gemini Enterprise product. Workspace Studio is the agent-building canvas for developers and citizen builders. A2A protocol (now adopted by 150 organizations) is Google's bid to be the agent-to-agent communication standard.

Project Mariner still exists as the browser-control sub-component — the agent that handles up to 10 concurrent tasks on cloud-based virtual machines, scoring 83.5% on the WebVoyager benchmark. But Mariner is no longer the headline: it's now one capability among many inside the Gemini Agent strategy, with Mariner Studio (the visual builder) shipping in Q2 and an agent marketplace in Q4.

The MCP Moment: One Standard to Connect Them All

If individual agents are the engine, MCP (Model Context Protocol) is the highway system that lets them go anywhere.

Originally created by Anthropic, MCP was donated to the Linux Foundation in February 2026 — establishing it as a vendor-neutral open standard alongside contributions from OpenAI and Block. The adoption numbers tell the story:

- 10,000+ active public MCP servers covering everything from developer tools to Fortune 500 deployments

- 97 million+ monthly SDK downloads across Python and TypeScript

- Adopted by ChatGPT, Gemini, Microsoft Copilot, Cursor, Replit, and Visual Studio Code

- Enterprise infrastructure support from AWS, Cloudflare, Google Cloud, and Microsoft Azure

What does this mean practically? MCP solves the integration problem that has historically killed AI deployments. Instead of building custom connectors for every tool and data source — the kind of fragile plumbing that breaks when software updates — MCP creates a standardized protocol that lets agents access any system with an MCP server. Your Google Drive. Your Slack. Your GitHub. Your Postgres database.
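To make the "standardized protocol" idea concrete: MCP messages are JSON-RPC 2.0, and an agent discovers and invokes a server's tools through the `tools/list` and `tools/call` methods. The sketch below only builds those request messages with Python's standard library. The tool name `search_files` and its arguments are hypothetical, and transport, session initialization, and error handling are left out.

```python
import json
from itertools import count

# JSON-RPC 2.0 request ids should be unique within a session.
_request_ids = count(1)


def build_tool_list() -> str:
    """Serialize the `tools/list` request an agent sends to discover tools."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": "tools/list"}
    return json.dumps(message)


def build_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize a `tools/call` request that invokes one named tool."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool and arguments; a real server advertises its own tools
# in its response to `tools/list`.
print(build_tool_list())
print(build_tool_call("search_files", {"query": "Q3 forecast"}))
```

Because every server accepts the same message shape, pointing an agent at a different system changes the tool name and arguments, not the plumbing.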

For mid-market companies, this is the most important development in the entire agent landscape. Because the biggest barrier to scaling AI agents isn't the AI itself. It's the 957 applications the average enterprise manages, with only 27% connected. MCP is the infrastructure that starts closing that gap.

From Watch Mode to Takeover: The Autonomy Spectrum

Here's the critical difference between what the big labs are building and what OpenClaw proved possible: guardrails.

OpenClaw grants root-level permissions by default. It operates with persistent access across your messaging platforms, file system, and financial tools — without requiring approval for individual actions. That's powerful. It's also terrifying from a governance perspective.

The major platforms are building the same capabilities with fundamentally different safety architectures:

OpenAI's approach is modal. Operator's Watch Mode keeps humans informed; Takeover Mode lets the agent execute — but on sensitive sites, it automatically pauses and hands control back. The agent is trained to decline certain tasks entirely, including banking transactions and high-stakes decisions.
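A modal gate of this kind can be sketched in a few lines. This is an illustration of the pattern only, not OpenAI's implementation: the `Mode` and `Decision` names, the `decide` function, and the list of sensitive hosts are all assumptions made for the example.

```python
from enum import Enum
from urllib.parse import urlparse


class Mode(Enum):
    WATCH = "watch"        # agent proposes, a human carries out the action
    TAKEOVER = "takeover"  # agent carries out the action itself


class Decision(Enum):
    SUGGEST = "suggest"
    EXECUTE = "execute"
    PAUSE_FOR_HUMAN = "pause_for_human"


# Hypothetical policy list; a real deployment would load this from config.
SENSITIVE_HOSTS = {"mail.example.com", "bank.example.com"}


def decide(mode: Mode, url: str) -> Decision:
    """Return what the agent may do for an action that targets `url`."""
    host = urlparse(url).hostname or ""
    # Sensitive sites always hand control back, whatever the mode.
    if host in SENSITIVE_HOSTS:
        return Decision.PAUSE_FOR_HUMAN
    if mode is Mode.WATCH:
        return Decision.SUGGEST
    return Decision.EXECUTE


print(decide(Mode.TAKEOVER, "https://shop.example.com/cart"))
print(decide(Mode.TAKEOVER, "https://bank.example.com/transfer"))
```

The design choice worth noticing is that the sensitive-site check runs before the mode check, so switching an agent to autonomous execution never widens what it can do on those sites.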

Anthropic's approach is classification-based. ASL-3 safety classifiers run in real-time to detect and block potential misuse. Cowork's agent execution operates in isolated sandbox environments — Docker containers and virtual machines that prevent uncontrolled system access. Managed Agents adds enterprise-grade controls layered on top: deployment policies, audit trails, role-based permissions. Six dedicated cybersecurity probes monitor for misuse of enhanced capabilities.

Google's approach is architectural. Project Mariner runs on cloud-based VMs, not your local machine — creating a physical separation between agent actions and your actual systems. Real-time user intervention means you can stop, redirect, or modify any agent action mid-execution.

The pattern is clear: each company is racing to maximize capability while minimizing unsupervised risk. They're building the brakes alongside the engine — something the open-source community has been slower to prioritize.

For business leaders, this matters because it changes the risk calculation. The question is no longer "should we allow AI agents?" but "which safety architecture fits our risk tolerance?" And that's a question your AI governance framework needs to answer now, not after something breaks.

Your Employees Are Already Living in This Future

While you're evaluating agent strategy, your workforce has already made their choice.

The numbers are unambiguous: 89% of business teams now use AI agents, with the average organization running 12 agents and planning to increase to roughly 20 within two years. Organizations are deploying them through three channels: 36% activate embedded agents inside existing platforms, 34% build custom agents, and 30% adopt pre-built SaaS agents.

The results are measurable. Organizations report 10-30% increases in sales and conversions. 72% of employees feel more productive. One major bank, Bradesco, freed up 17% of employee capacity and cut lead times by 22% through agentic AI deployment.

But here's the gap that should concern every mid-market leader: 64% of organizations worry they won't hit their agent implementation goals. And the reason isn't technology. It's integration.

The average enterprise manages 957 applications — but only 27% are connected. Even organizations further along in agentic transformation, with larger app estates averaging 1,057 applications, have connectivity rates of just 32%. You can have the most capable AI agents in the world, but if they can't access your systems, they're just expensive chatbots.
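A quick back-of-the-envelope check, using only the figures quoted above, shows what those percentages mean in absolute numbers of applications. The function name is ours, and rounding to whole applications is an assumption.

```python
def connected_apps(total_apps: int, connected_share: float) -> int:
    """Approximate count of integrated applications, rounded to whole apps."""
    return round(total_apps * connected_share)


average = connected_apps(957, 0.27)    # average enterprise
advanced = connected_apps(1057, 0.32)  # organizations further along

print(average, 957 - average)     # prints: 258 699
print(advanced, 1057 - advanced)  # prints: 338 719
```

By this arithmetic, the more advanced organizations connect more applications (about 338 versus 258) yet still leave slightly more unconnected (about 719 versus 699), because their estates are larger.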

This is the same pattern we've seen with every wave of AI adoption. The 63% problem — where most AI initiatives fail at the human and organizational level — doesn't disappear because the AI got more capable. It intensifies. More powerful agents operating in more systems means more potential failure points, more change management challenges, and more organizational resistance to manage.

The companies getting this right treat agent deployment as a data and integration program first, with AI as a secondary consideration. They're not asking "which agent should we use?" They're asking "which workflows have the data connectivity to actually support agents?" That's a fundamentally different — and more productive — starting point.

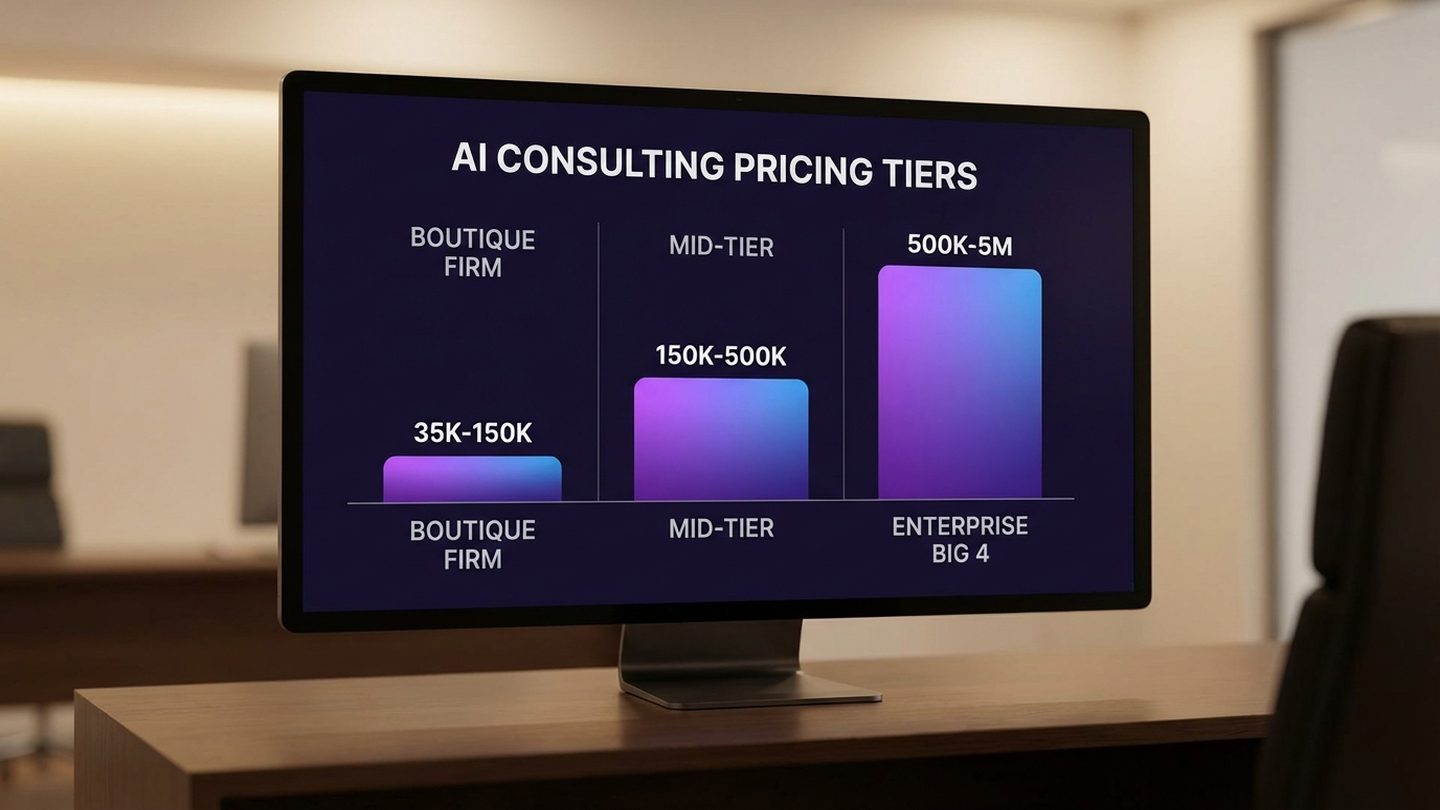

The Pricing Earthquake: From Seats to Outcomes

The agent arms race isn't just changing how work gets done. It's rewriting how software companies make money — and creating a once-in-a-decade opportunity for mid-market software buyers.

41% of enterprise SaaS companies are now implementing hybrid pricing models that combine baseline subscriptions with performance-based components. Gartner projects that 40% of enterprise SaaS will include outcome-based pricing elements by the end of 2026 — up from 15% just two years ago.

The logic is straightforward. When an AI agent does the work of five users, per-seat pricing collapses. Companies like Intercom and Forethought are already moving away from user-based subscriptions toward models where customers pay only when AI features deliver results.
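The arithmetic behind that collapse can be sketched as follows. Every price here is hypothetical (a $100 monthly seat, a $1 charge per completed outcome); only the five-to-one ratio comes from the text above.

```python
def per_seat_bill(seats: int, price_per_seat: int) -> int:
    """Monthly bill in dollars under per-seat pricing."""
    return seats * price_per_seat


def outcome_bill(outcomes: int, price_per_outcome: int) -> int:
    """Monthly bill in dollars when the vendor charges per completed outcome."""
    return outcomes * price_per_outcome


SEAT_PRICE = 100    # hypothetical: dollars per seat per month
OUTCOME_PRICE = 1   # hypothetical: dollars per completed outcome

before = per_seat_bill(5, SEAT_PRICE)  # five human users
after = per_seat_bill(1, SEAT_PRICE)   # one seat supervising an agent
revenue_drop = 1 - after / before

# Outcome volume at which an outcome-priced plan costs the same as five seats.
break_even = before // OUTCOME_PRICE

print(before, after, f"{revenue_drop:.0%}", break_even)  # prints: 500 100 80% 500
```

Under these assumed prices the vendor loses 80% of its per-seat revenue for the same work, which is why a charge tied to outcomes becomes attractive to sellers as well as buyers.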

This connects directly to the $300 billion SaaS valuation crash we analyzed in our piece on the reinvention question every business must answer. That crash wasn't a market correction. It was the market pricing in a structural shift: the era of seat-based software economics is ending.

For mid-market companies, this creates three immediate opportunities:

Renegotiation leverage. If your current vendors are still charging per-seat while agents reduce the number of humans needed for certain workflows, you have leverage to renegotiate. Especially as competitors offer outcome-based alternatives.

AI-native tools at lower cost. Tools that were previously priced for enterprise budgets are becoming accessible through consumption-based models. You pay for what the agent actually accomplishes, not for a license that may or may not deliver value.

Vendor lock-in reduction. MCP and similar standards mean agents can work across platforms. The switching costs that kept you locked into expensive software suites are dropping as interoperability increases.

The companies that move fastest to audit their software stack through an agent lens — identifying which tools charge per seat for work that agents can do — will capture the most savings.

What Mid-Market Leaders Should Do in the Next 90 Days

The agent arms race creates urgency, but it doesn't require panic. Here's a practical three-phase approach for the next quarter.

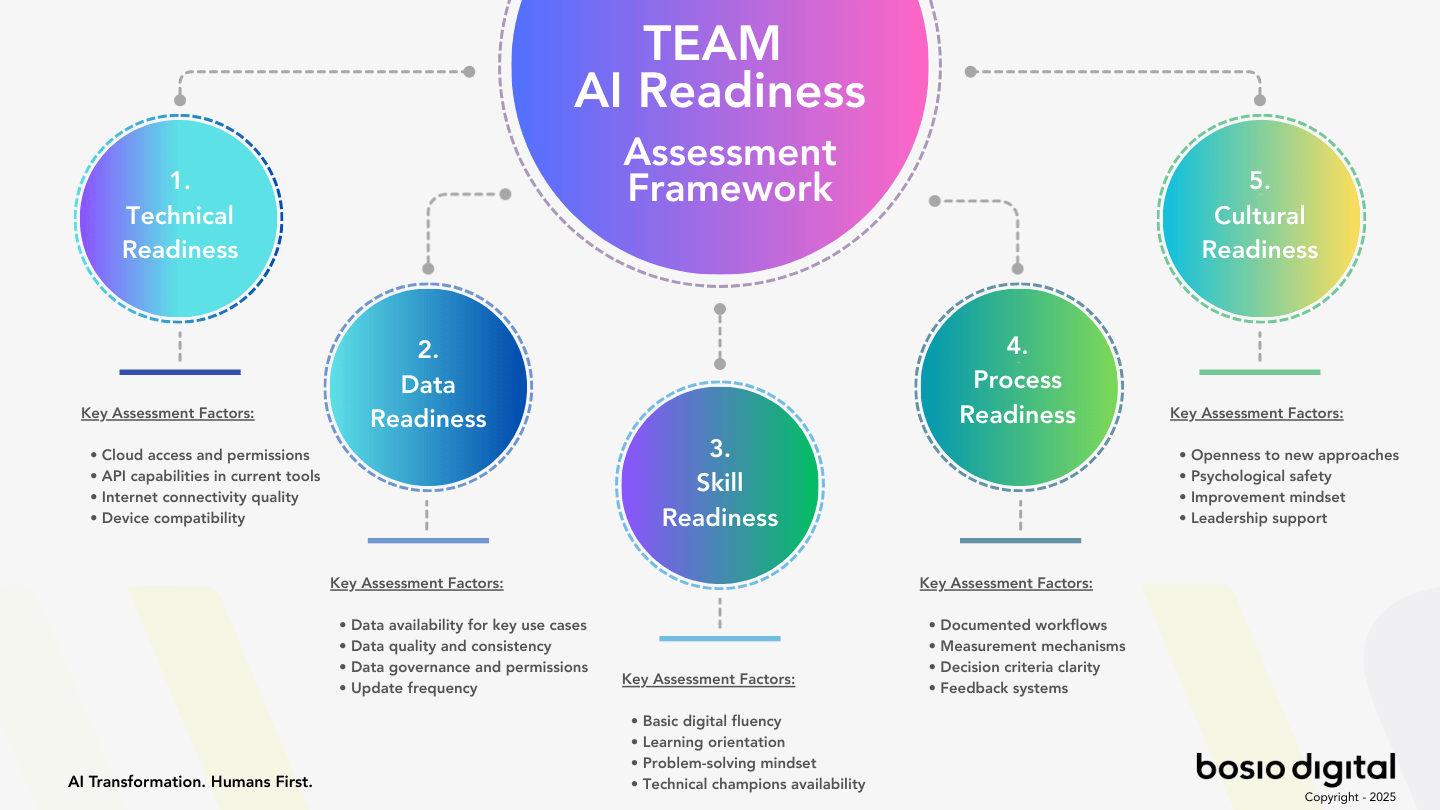

Phase 1: Audit and Map (Days 1-30)

Understand what's already happening. Survey your teams to discover which AI agents they're already using — officially and unofficially. If 89% of business teams use agents and personal AI accounts are already creating hidden liability, you need visibility before you can govern.

Map your integration landscape. Which of your systems have APIs? Which support MCP? Where are the data silos that will prevent agents from being effective? The 27% connectivity rate is your biggest constraint — identify the 3-5 most critical integrations first.

Assess your governance readiness. Do you have policies that address autonomous AI actions — not just chatbot usage? There's a significant difference between an employee using ChatGPT for writing and an agent executing financial transactions across your systems.

Phase 2: Pilot One Workflow (Days 31-60)

Choose one high-value, low-risk workflow for a sanctioned agent deployment. Good candidates: meeting scheduling and follow-up, research and competitive analysis, content drafting and review, data entry across systems, customer inquiry routing.

Pick the right platform. Based on your existing stack: Microsoft ecosystem → Copilot agents. Google Workspace → Workspace Intelligence + Gemini Enterprise. ChatGPT-led teams → Workspace Agents (and Frontier for governance). Knowledge work across documents and apps → Claude Cowork + Skills. Developer workflows → Claude Code. Browser-automation tasks → Operator (OpenAI) or Mariner (Google).

Measure everything. Time saved, error rates, employee satisfaction, security incidents. You need data to make the case for scaling — and to identify risks before they compound.

Phase 3: Build Your Agent Governance Playbook (Days 61-90)

Define your autonomy boundaries. Which actions require human approval? Where can agents operate independently? The major platforms offer different safety architectures — match them to your risk tolerance.

Establish credential isolation. Agents should have their own credentials, separate from human user accounts. This creates audit trails and prevents the credential-sharing risks that any AI-ready organization has to address.

Create your agent approval process. Before any new agent is deployed, it should pass through a lightweight review: what data can it access, what actions can it take, and who is responsible when something goes wrong.
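One lightweight way to run that review is to capture the three questions, plus the credential-isolation rule, in a small record that gets checked before deployment. The sketch below is a minimal illustration: the `AgentReview` fields, the `review_problems` function, and the example account names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentReview:
    agent_name: str
    service_account: str              # credential owned by the agent itself
    data_scopes: tuple[str, ...]      # what data it can access
    allowed_actions: tuple[str, ...]  # what actions it can take
    owner: str                        # who answers when something goes wrong


def review_problems(review: AgentReview, human_accounts: set[str]) -> list[str]:
    """Return the reasons a review should be sent back; empty means it passes."""
    problems = []
    if review.service_account in human_accounts:
        problems.append("agent shares credentials with a human account")
    if not review.data_scopes:
        problems.append("no data scopes declared")
    if not review.allowed_actions:
        problems.append("no allowed actions declared")
    if not review.owner.strip():
        problems.append("no accountable owner named")
    return problems


humans = {"alice", "bob"}
draft = AgentReview("shadow-bot", "alice", (), ("send_email",), " ")
for problem in review_problems(draft, humans):
    print(problem)
```

Keeping the check this small is deliberate: the point is a gate a team will actually run for every new agent, not an exhaustive risk assessment.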

The goal isn't to build a comprehensive AI-ready organization overnight. It's to establish minimum viable governance that lets your team capture productivity gains while containing risks — before the major platforms make agents the default mode, not the opt-in feature.

The Humans First Principle Still Applies

Here's what gets lost in the arms race narrative: this is still fundamentally a human transformation.

The companies that will navigate this moment successfully aren't the ones with the best agent technology or the biggest AI budgets. They're the ones that understand something the technology companies tend to overlook: AI agents don't replace organizational complexity. They amplify it.

More powerful agents mean more decisions about trust, oversight, and responsibility. More autonomous systems mean more conversations with employees about what their roles are becoming. More integration points mean more change management, more training, and more patience.

Every major AI deployment study confirms what we've observed consistently: the technology works. The implementation challenge is human. That's why 64% of organizations worry about hitting their agent goals — not because the agents aren't capable, but because the organizations aren't ready.

The agent arms race is real. OpenAI, Anthropic, and Google are building tools that will transform how your business operates. But the competitive advantage won't come from which agent platform you choose. It will come from how well you help your people work alongside agents that can now see their screens, access their systems, and execute tasks on their behalf.

That's not a technology question. That's a leadership one.

Frequently Asked Questions

What is the AI agent arms race and why does it matter for businesses?

The AI agent arms race refers to the accelerating competition between OpenAI (Workspace Agents and Frontier, with Operator as the underlying browser engine), Anthropic (Cowork + Skills, Managed Agents, and Claude Code), and Google (Workspace Intelligence and the Gemini Enterprise Agent Platform, with Project Mariner as a sub-component) to build autonomous AI systems that execute real-world tasks — not just answer questions. It matters because 89% of business teams already use AI agents, and as of April 2026 all three vendors have shipped GA products that make autonomous agents the default operating mode for business software. Companies that don't prepare risk falling behind competitors who adopt sanctioned agent workflows earlier.

How does OpenAI's Operator actually work?

Operator is a Computer-Using Agent (CUA) that navigates web browsers by capturing screenshots, identifying interactive elements, and executing clicks and keystrokes — mimicking human interaction. It achieves 87% success rates on complex tasks like booking travel, managing procurement, and coordinating purchases across multiple websites. It operates in Watch Mode (suggestions) and Takeover Mode (autonomous execution), with automatic safety pauses on sensitive sites.

What is Anthropic's Cowork and how is it different from a chatbot?

Anthropic shipped Claude Cowork to general availability on April 9, 2026. It is an agentic environment inside the Claude Desktop app where Claude works on your computer, files, and applications to complete a goal end-to-end. Cowork uses Skills (discrete, reusable capabilities) that compose for tasks spanning multiple domains. Claude Code is the developer-focused harness that drives multi-file feature builds with parallel sub-agents. Claude Opus 4.6 supports a 1-million-token context window and has independently discovered over 500 zero-day software vulnerabilities, a capability that goes far beyond conversation.

What is MCP and why should business leaders care about it?

MCP (Model Context Protocol) is an open standard — now governed by the Linux Foundation — that lets AI agents connect to external tools and data sources through a universal protocol. With 10,000+ active servers and 97 million monthly SDK downloads, MCP has been adopted by ChatGPT, Gemini, Copilot, and Cursor. For businesses, MCP means agents can work across your entire software stack without custom integrations, dramatically reducing the connectivity barrier that currently limits agent effectiveness.

How is the AI agent revolution affecting software pricing?

AI agents are accelerating the shift from per-seat to outcome-based software pricing. When agents do the work of multiple users, charging per seat becomes unsustainable. Currently, 41% of enterprise SaaS companies are implementing hybrid pricing models, and Gartner projects 40% of enterprise SaaS will include outcome-based elements by the end of 2026. This creates significant opportunities for mid-market buyers to renegotiate contracts and access AI-native tools at lower costs.

What should mid-market companies do first to prepare for AI agents?

Start with a 30-day audit: survey teams to discover which agents they're already using (officially and unofficially), map your integration landscape to identify which systems support agent connectivity, and assess whether your governance policies address autonomous AI actions. Then pilot one high-value, low-risk workflow with a sanctioned agent in days 31-60 before building your agent governance playbook in days 61-90.

Are AI agents safe for business use?

The major platforms build safety differently: OpenAI uses modal safety (Operator's Watch vs Takeover modes with automatic pauses) and permission-scoped Workspace Agents. Anthropic uses classification-based safety (ASL-3 classifiers, sandboxed Cowork execution, Managed Agents for enterprise controls). Google uses architectural safety (Mariner runs on cloud VMs separate from local systems; Workspace Intelligence respects existing Workspace permissions). All three include human-in-the-loop requirements for sensitive actions. The safety architectures are significantly more robust than open-source alternatives like OpenClaw, but no system is risk-free — governance policies and credential isolation remain essential.

Sources

- 2026 Connectivity Benchmark Report — MuleSoft/Salesforce, 2026 (89% of teams use AI agents, avg org runs 12, 957 apps per enterprise, 27% connected, 64% worry about implementation goals)

- Agentic AI in the Enterprise — Capgemini/OneReach AI, 2026 (93% of leaders believe scaling agents = competitive edge, Bradesco 17% capacity freed)

- OpenAI Operator Analysis: 87% Success Rate on Complex Browser Tasks — Wedbush Securities/TokenRing, January 2026 (Operator CUA performance data)

- Claude Opus 4.6 Discovers 500+ Zero-Day Vulnerabilities — Axios, February 5, 2026 (Claude security capabilities)

- Claude on Azure AI Foundry: Secure Governed Agents — Microsoft Azure Blog, February 2026 (enterprise deployment with minimal oversight)

- Introducing Claude Opus 4.6 and Claude Code Agent Teams — Anthropic, February 2026 (multi-agent orchestration, MCP 10,000+ servers, 97M+ SDK downloads, Linux Foundation donation)

- Google's Project Mariner Handles 10 Concurrent Tasks on Cloud VMs — TechCrunch, May 2025 (Project Mariner architecture and capabilities)

- SaaS Pricing Trends: 41% Implementing Hybrid Models — Monetizely, 2026 (outcome-based pricing shift)

- 40% of Enterprise SaaS to Include Outcome-Based Pricing by End of 2026 — Gartner/BetterCloud, 2026 (pricing projections)

- AI Agent Statistics: 10-30% Increases in Sales and Conversions — Master of Code, 2026 (agent deployment results)

- Intercom and Forethought Moving to Outcome-Based Pricing — Aquant, 2026 (pricing model shifts)

- $300B SaaS Valuation Crash — SaaStr/Fortune, February 3, 2026 (market impact)

- Agentic AI Projected to Reach $1.3 Trillion by 2029 — OneReach/Gartner, 2026 (market projections)

- Cowork: Claude Code Power for Knowledge Work — Anthropic, January 2026 (Cowork research preview launch)

- Anthropic Launches Managed Agents and Claude Cowork GA — April 9, 2026 (Cowork GA, Skills, Managed Agents enterprise launch)

- Introducing Workspace Intelligence — Google Workspace Blog, April 22, 2026 (semantic layer for Gemini agents at Cloud Next 2026)

- OpenAI Unveils Workspace Agents, Successor to Custom GPTs — VentureBeat, April 23, 2026 (Workspace Agents launch, Codex agent infrastructure)

- Google Cloud Next 2026: AI Agents, A2A Protocol, Workspace Studio — The Next Web, April 2026 (Vertex AI rebrand, A2A protocol adoption)