Question

Why aren't most companies seeing ROI from their AI investment?

Quick Answer

McKinsey reported in April 2026 that more than 80% of companies investing in AI are not yet seeing impact on the bottom line. The reason is not the technology — it is the architecture. Organizations that see AI ROI made three decisions before deploying agents: they encoded organizational knowledge into the AI system before automating anything, they built reusable skills that compound expertise rather than accumulating disconnected tools, and they designed governance and oversight into the architecture from the start rather than adding it after problems appeared. Most organizations skipped these steps and deployed AI into unchanged workflows. More tools did not fix the problem because the problem was never the tools.

McKinsey published a podcast in early April 2026 with a finding that should have stopped every executive who listened to it. More than 80% of companies investing in AI report little or no impact on the bottom line. The pattern is consistent across the firms doing the most spending. The technology arrived. The returns did not.

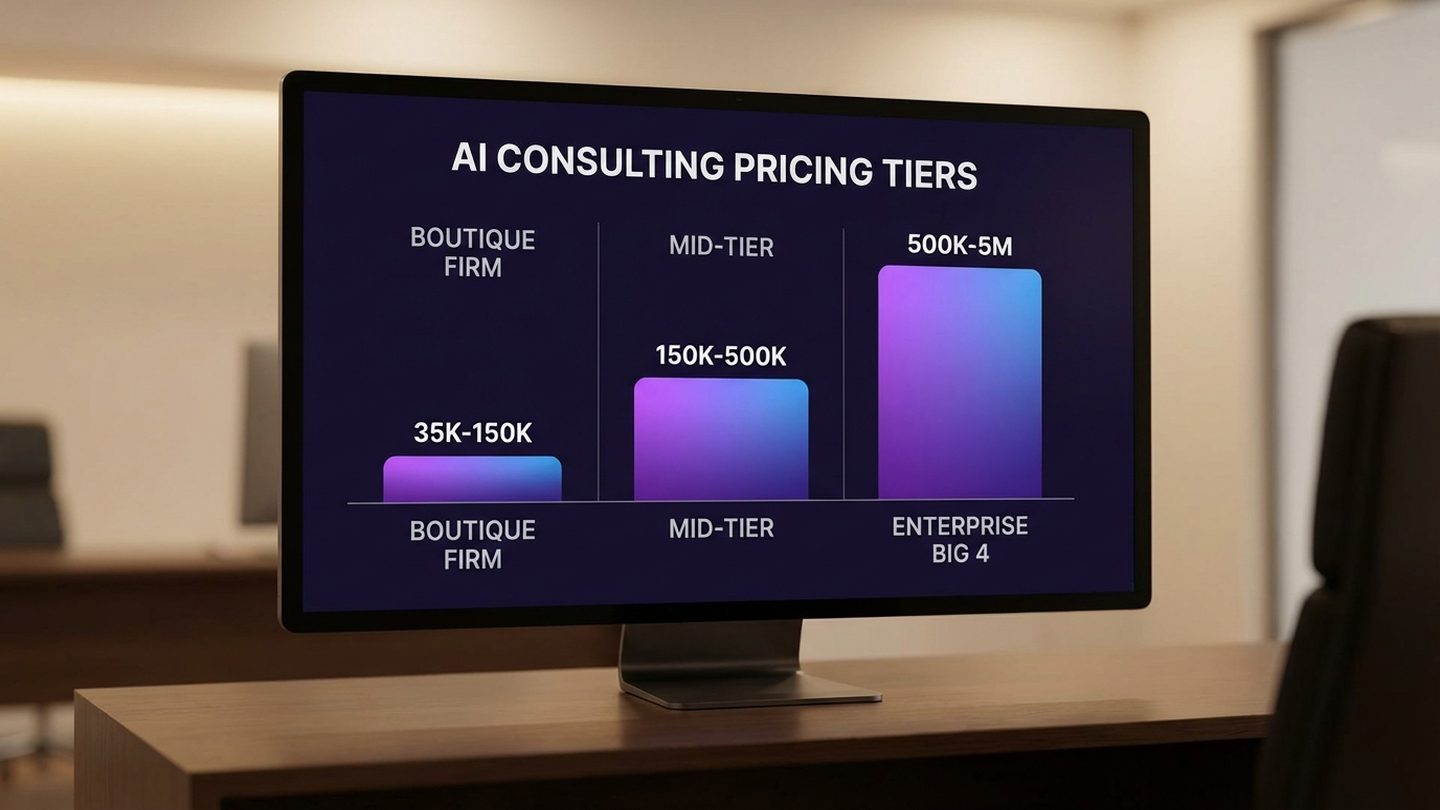

A separate PwC study, published on April 13, less than two weeks later, put a sharper edge on the same picture: three-quarters of AI's economic gains are being captured by just 20% of companies. Two of the world's most influential advisory firms, looking at the same market from different angles, arrived at the same conclusion: the AI investment paradox is real, it is concentrated, and it is structural.

The question that matters is not why AI is not working. The data is clear that, for most organizations, it is not. The question is what the 20% who are seeing returns built that the other 80% did not.

This article is about that. It takes McKinsey's diagnosis seriously and answers the question they leave open. The answer is architectural — not more tools, not more agents, not more spend. A small set of decisions made early, before the first automation went live.

The McKinsey Number That Should Make Every Leader Stop

The McKinsey podcast — released April 2, 2026, with senior partner Alexis Krivkovich on the mic alongside Lucia Rahilly and Roberta Fusaro — names the problem precisely. Companies expected massive transformation, invested accordingly, and most are not yet seeing it. The technology landed in the organization. The operating model did not change to absorb it.

The headline number is the 80%. The deeper finding is that the gap between AI capability and AI value is no longer about the AI. The capability is sufficient. The reason most organizations are not seeing returns is that AI was added to existing workflows without rethinking the workflows. It was deployed alongside people doing the same work in the same way, with the same approval chains, the same handoffs, the same review cycles. The technology accelerated some steps. The end-to-end process barely moved.

This is not a "give it more time" problem. The companies in the 20% that are seeing returns started under the same conditions as everyone else. They are not running on better models. They are not benefiting from a head start the rest of the market cannot access. They made different decisions about what to build, and they made those decisions early — before the first agent went into production.

That is the part of the McKinsey finding that goes underreported. Most coverage of the 80% stat treats the gap as a maturity problem that will close with time. The PwC study suggests otherwise: if 20% of companies are already capturing 75% of the economic gains, the leaders are compounding their advantage, and the gap is more likely widening than narrowing. Time alone is not closing it. Architecture is what closes it.

Free Assessment · 10–15 min

Which Side of the 80/20 Split Is Your Organization On?

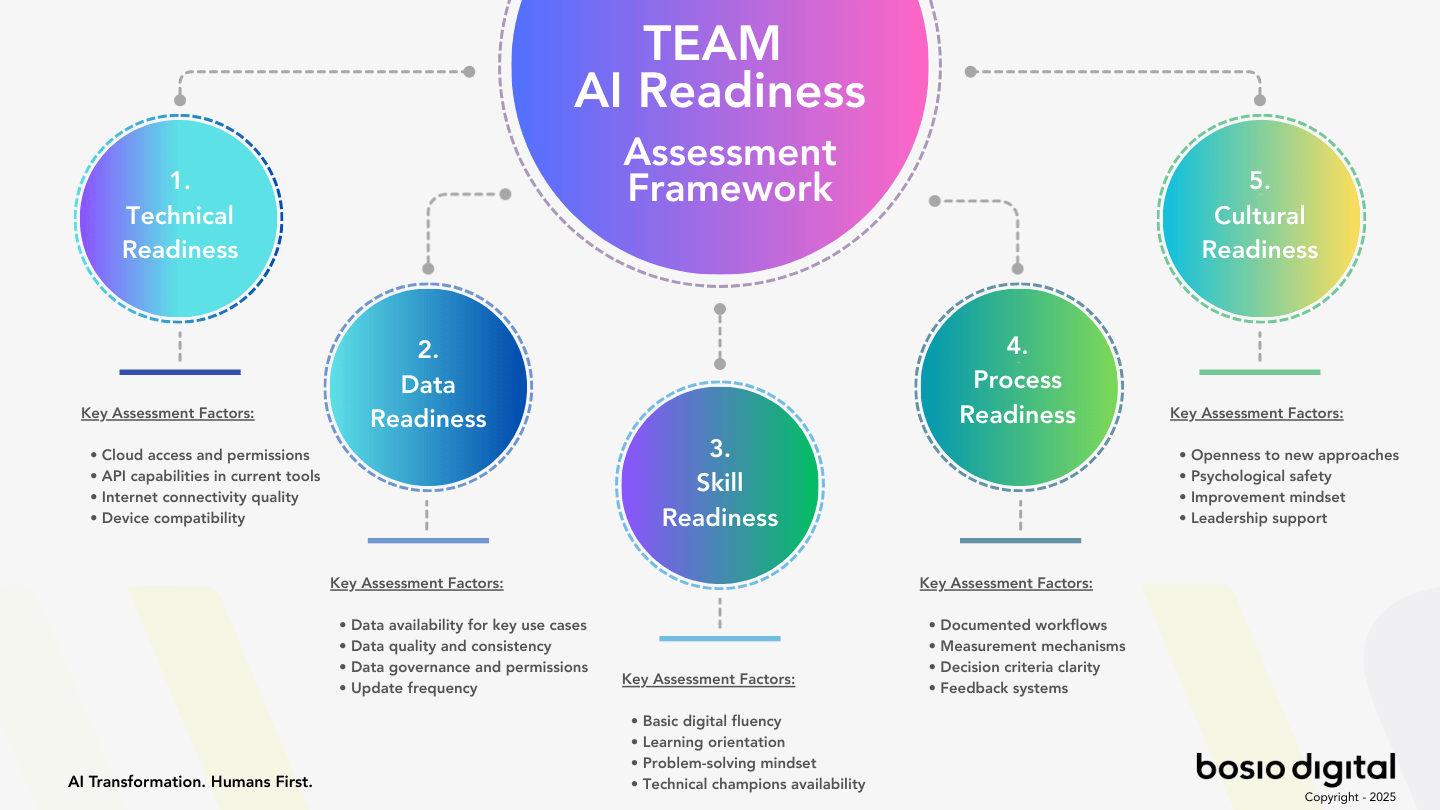

The bosio Architecture Assessment scores your AI readiness across five dimensions in 10–15 minutes and tells you, concretely, what stands between you and the 20% that are seeing returns.

What McKinsey Got Right (And What They Left Out)

McKinsey's diagnosis is accurate. Workflows have not changed. Leadership has not changed. Culture has not changed. The technology arrived as a layer on top of an organization that kept operating the way it always did. They are right about all of this, and the consulting world has converged on the same general conclusion.

What the McKinsey podcast does not do, and what the PwC study and Deloitte's State of AI in the Enterprise report do not do either, is tell a leader what to build first. They describe the gap between the 20% and the 80% in language that names the problem without naming the response. The result is an executive class that knows AI is not delivering, knows the diagnosis, and still cannot point to the specific architectural decisions that separate the companies in the 20% from everyone else.

That is the gap this article fills.

But isn't this just an execution problem?

The most common response to the 80% finding — and the one most consulting firms reach for — is that the failure is about execution. Better change management. Stronger C-suite sponsorship. More patience. A 3–5 year horizon instead of a 12-month one. All of these are real. None of them are wrong.

They also miss the more uncomfortable point. Execution problems show up as variance — some teams perform well, some perform badly, the average is mediocre. The 80% finding is not variance. It is the consistent result of a market-wide pattern: organizations deploying AI on top of architectures that were not designed to absorb it. Better execution on the same wrong architecture produces better-managed mediocre results.

Architecture decisions look like execution problems from the outside. From the inside, they look like decisions made — or skipped — at the start of the build, before any team had a chance to execute well or badly. The 20% did not just execute the same plan more diligently. They made a different plan.

Decision 1 — Context Before Agents

The first decision the 20% made was the most skipped one in AI deployment.

Before deploying any automation, they encoded what their organization actually knows — workflows, expertise, decision history, client knowledge, brand voice, pricing logic, escalation paths — into the AI system. The AI started knowing their business before it was asked to do anything for the business. Most organizations skipped this step. They deployed AI tools that knew nothing specific about the company running them, and then they wondered why the outputs felt generic.

The bosio.digital framing for this is straightforward: your AI does not know your business, and until you change that, every stage of automation built on top of it will underperform. The technology may be capable. The knowledge architecture is not there.

What context actually means in practice is mundane and unglamorous. It is the customer segments your team actually sells to, captured in a structure the AI can read every time it works. It is the language you use in client communications versus internal memos. It is the past five proposals that won, the past three that lost, and the reasoning behind each. It is the decisions made last quarter that should not be relitigated this quarter, and the priorities that should be remembered without being repeated. None of this exists in a generic AI tool. All of it has to be deliberately encoded in a form the AI can access.
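To make "encoded in a form the AI can access" concrete, here is a minimal sketch in Python. It assumes a hypothetical schema (the `ContextLayer` and `ProposalRecord` classes and their fields are illustrative, not a product or library API) and shows only the core idea: organizational knowledge lives in one structured place and is rendered into a preamble that every AI request starts from.

```python
from dataclasses import dataclass, field


@dataclass
class ProposalRecord:
    """One past proposal and the reasoning behind its outcome (hypothetical schema)."""
    title: str
    won: bool
    reasoning: str


@dataclass
class ContextLayer:
    """A hypothetical, minimal context layer: organizational knowledge kept in
    one structured place so every AI session starts from it, not from zero."""
    customer_segments: list[str] = field(default_factory=list)
    client_voice: str = ""
    internal_voice: str = ""
    proposals: list[ProposalRecord] = field(default_factory=list)
    settled_decisions: list[str] = field(default_factory=list)

    def to_preamble(self) -> str:
        """Render the whole layer as plain text to prepend to any AI request."""
        lines = ["## Customer segments"]
        lines += [f"- {s}" for s in self.customer_segments]
        lines += ["## Voice",
                  f"Client-facing: {self.client_voice}",
                  f"Internal: {self.internal_voice}"]
        lines.append("## Past proposals")
        for p in self.proposals:
            outcome = "WON" if p.won else "LOST"
            # Keep the reasoning, not just the result: that is the reusable part.
            lines.append(f"- [{outcome}] {p.title}: {p.reasoning}")
        lines.append("## Decisions not to relitigate")
        lines += [f"- {d}" for d in self.settled_decisions]
        return "\n".join(lines)
```

In practice the same knowledge would more likely live in maintained documents or a folder structure than in code; the point of the sketch is that the layer is written once and loaded every session, rather than retyped into individual prompts.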

The companies in the 20% built this layer first. The companies in the 80% almost universally skipped it. The architectural pattern this creates — context that compounds rather than starting over every session — is the foundation everything else is built on. Skip the foundation, and the rest of the stack is fragile.

For most mid-market organizations, the first 6–10 weeks of an AI strategy should not produce a single deployed agent. It should produce a context layer that captures organizational knowledge in a maintainable, reusable form. That is what the 20% spent their first weeks building. Most companies spent the same weeks deploying tools instead.

Decision 2 — Skills, Not Tool Stacks

The second decision was about how the 20% organized capability. Instead of accumulating AI tools for different tasks — a sales agent, a marketing agent, a finance agent, a compliance agent — they built reusable skills that encode domain expertise once and make it accessible everywhere it is needed. One skill that knows your compliance workflow. One that knows your client voice. One that knows your pricing logic. The same skill is used by whatever agent needs it.

This pattern was given a name in November 2025, when two Anthropic engineers, Barry Zhang and Mahesh Murag, told the AI Engineer Code Summit: "Don't build agents, build skills instead." They argued that the AI market has spent two years competing on agent count when the real differentiator was always domain knowledge. The talk was technically a developer-facing argument. Strategically, it described what the 20% had already put into practice.

The reason skills outperform tool stacks is mechanical, not philosophical. A specialized agent is a separately maintained system with its own context, its own drift, and its own learning curve. Twelve specialized agents means twelve separate places where organizational knowledge can be wrong, twelve places where it has to be updated when something changes, and twelve places where improvements in one do not transfer to the others. A skill, by contrast, is encoded once. When it is refined, every future use of that skill benefits. The improvements compound across uses, not just within a single agent.

The architectural argument for this, and what it looks like when an organization actually builds it, is laid out in detail in our article on why skills-first architecture outperforms agent-first. The short version: agents are the runtime, skills are the asset. The 20% understood that the asset was always going to be the body of organizational expertise the AI could draw on. The 80% are still buying runtimes.

This decision matters more over time, not less. A skills library accumulates value with every refinement. A tool stack accumulates technical debt. By month 18, the gap between the two paths is structural — and very hard to close without rebuilding what was already deployed. The accumulation of agents without coherent architecture is the visible symptom of the deeper problem: the organization optimized for the wrong unit.
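The skills-versus-agents mechanics described in this section can be sketched in a few lines of Python. Everything here is hypothetical and illustrative (`Skill`, `SkillLibrary`, and `Agent` are not any vendor's API): agents hold only the names of skills and look them up at call time, so a single refinement reaches every agent that uses the skill.

```python
from dataclasses import dataclass, field


@dataclass
class Skill:
    """A hypothetical skill: one encoded piece of domain expertise, versioned."""
    name: str
    instructions: str
    version: int = 1

    def refine(self, new_instructions: str) -> None:
        """Refine the skill in exactly one place and bump its version."""
        self.instructions = new_instructions
        self.version += 1


@dataclass
class SkillLibrary:
    """The shared asset: one copy of each skill, keyed by name."""
    skills: dict[str, Skill] = field(default_factory=dict)

    def add(self, skill: Skill) -> None:
        self.skills[skill.name] = skill

    def get(self, name: str) -> Skill:
        return self.skills[name]


@dataclass
class Agent:
    """An agent is just a runtime: it holds skill names, not copies of expertise."""
    role: str
    skill_names: list[str]
    library: SkillLibrary

    def brief(self) -> list[str]:
        # Skills are looked up at call time, so refinements apply immediately.
        lines = []
        for name in self.skill_names:
            skill = self.library.get(name)
            lines.append(f"{name} v{skill.version}: {skill.instructions}")
        return lines


if __name__ == "__main__":
    lib = SkillLibrary()
    lib.add(Skill("pricing-logic", "Quote fixed fees for scoped work."))
    sales = Agent("sales", ["pricing-logic"], lib)
    finance = Agent("finance", ["pricing-logic"], lib)
    lib.get("pricing-logic").refine("Quote fixed fees; add 10% for rush work.")
    print(sales.brief())    # both agents now see version 2
    print(finance.brief())
```

The design choice is the point: because agents reference skills instead of copying them, there is one place to update rather than twelve.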

Subscribe to our AI Briefing!

AI Insights That Drive Results

Join 500+ leaders getting actionable AI strategies twice a month. No hype, just what works.

Decision 3 — Governance Built In, Not Bolted On

The third decision was about who is in charge of what, and when.

The 80% deployed AI agents and added governance after problems surfaced. An agent did something it should not have done; an approval got skipped; a customer received an output that did not reflect the company's voice. The fix, almost universally, was to add an oversight layer on top of the existing automation. Approval workflows. Audit trails. Escalation paths. Bolted on.

The 20% designed those things in from the start. Before any agent went live, the architecture answered three questions: which decisions can the AI make autonomously, which require human approval before action, and which require human review after action. The answers varied by domain. The discipline of having answered them at all is what separated the two groups.
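One way to make those three answers explicit before anything goes live is a small policy table. The sketch below is a hypothetical Python illustration (the action names and the `POLICY` mapping are invented for the example, and real answers vary by domain): each action maps to one of the three oversight tiers, and any action nobody has classified yet fails closed into human approval before action.

```python
from enum import Enum


class Oversight(Enum):
    AUTONOMOUS = "AI acts on its own"
    APPROVE_BEFORE = "human approves before the action"
    REVIEW_AFTER = "human reviews after the action"


# Hypothetical policy for one domain, agreed before any agent goes live.
POLICY: dict[str, Oversight] = {
    "draft_internal_summary": Oversight.AUTONOMOUS,
    "schedule_meeting": Oversight.REVIEW_AFTER,
    "send_client_proposal": Oversight.APPROVE_BEFORE,
}


def oversight_for(action: str) -> Oversight:
    """Look up the pre-agreed tier. Unclassified actions fail closed:
    they require human approval before anything happens."""
    return POLICY.get(action, Oversight.APPROVE_BEFORE)


def run(action: str, approved_by_human: bool = False) -> str:
    """Apply the tier to a single action and report what happened."""
    tier = oversight_for(action)
    if tier is Oversight.APPROVE_BEFORE and not approved_by_human:
        return f"{action}: blocked, waiting for human approval"
    if tier is Oversight.REVIEW_AFTER:
        return f"{action}: done, queued for human review"
    return f"{action}: done"
```

Failing closed is a design assumption, not the only option, but it matches the idea that autonomy expands only after the trust framework exists.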

This is not a compliance discussion. It is an architectural one. Anthropic's own research on trustworthy agent deployment showed that organizations granting agents more autonomy over time — moving from "approve every action" to "intervene when needed" — required the trust framework to exist before the autonomy expanded. Trust was not a feeling. It was a layered set of controls that made the autonomy expansion possible without losing oversight. The companies that did not build the trust framework cannot expand autonomy safely. They have to keep humans in every step, which is exactly the bottleneck AI was supposed to solve.

The Deloitte State of AI in the Enterprise 2026 report (the "Untapped Edge" study, January 2026, surveying 3,235 leaders across 24 countries) made the gap visible: roughly 74% of organizations plan to deploy agentic AI within two years, but only 21% report a mature governance model for those agents. The gap between deployment intent and governance readiness is the architectural debt the 80% are accumulating right now. It will look like an execution problem in 18 months. It is being designed in today.

The "Humans Above the Loop" Operating Model

The McKinsey podcast introduces the term that may be the most useful piece of vocabulary in the entire AI conversation: humans above the loop.

The distinction is precise. Humans in the loop participate in the AI's process. They review steps, approve outputs, fill gaps between agent tasks. They are still doing work that requires their time and attention; the AI just does some of the work alongside them. Humans above the loop participate in the AI's outcomes. The agent handles the full process end-to-end. The human role is to judge what the system produced, not to execute steps within it.

This is where the meaningful ROI lives. As long as humans are in the loop, AI is helpful but bottlenecked on human availability. Above the loop, the constraint shifts. Humans become the judgment layer for outcomes the system runs autonomously. The leverage is enormous — and the risk is enormous, which is why the third architectural decision matters so much. Without trustworthy governance, you cannot move humans above the loop without losing control of the outcomes.

What McKinsey describes as a future state, the 20% are already operating in selected domains. Not for every workflow. The interesting move is which workflows they chose. They moved humans above the loop in domains where the cost of an error was bounded and the volume of routine outputs was high: drafting, screening, scheduling, first-pass analysis, data preparation. They kept humans in the loop in domains where errors had unbounded consequences: strategic communication, legal commitments, irreversible actions. This is not a binary. It is a gradient that requires architecture to navigate.

The 80% cannot navigate it because the architecture is not there. They are stuck running everything with humans in the loop, which limits the scaling benefit of AI to the patience of the humans involved. This is why their ROI is flat. The technology can do more. The architecture cannot let it.
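The in-the-loop versus above-the-loop gradient can be written down as an explicit placement rule. This is a hypothetical sketch: the `Workflow` fields, the cost ceiling, and the volume threshold are assumptions chosen for illustration, not figures from McKinsey or from the companies in the 20%. It encodes the pattern described above: humans move above the loop only where the worst-case error is bounded and cheap, the action is reversible, and routine volume is high.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Workflow:
    name: str
    worst_case_error_cost: Optional[float]  # None means unbounded (hypothetical field)
    reversible: bool
    outputs_per_week: int


def placement(wf: Workflow, cost_ceiling: float = 5_000.0, min_volume: int = 50) -> str:
    """Hypothetical rule: above the loop only where errors are bounded, cheap,
    and reversible, and where routine volume makes human steps the bottleneck."""
    bounded = (wf.worst_case_error_cost is not None
               and wf.worst_case_error_cost <= cost_ceiling)
    if bounded and wf.reversible and wf.outputs_per_week >= min_volume:
        return "above the loop"
    return "in the loop"
```

Everything that fails any condition defaults to humans in the loop, which mirrors how the 20% kept strategic communication, legal commitments, and irreversible actions under direct supervision.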

The shift from in-the-loop to above-the-loop is not a technology purchase. It is an architecture decision (context, skills, governance) that makes humans-above-the-loop operationally safe in specific domains. Once that architecture exists, the operating model can shift. Our practitioner's map of AI for business traces the broader maturity arc of AI integration, from chat to context to automation to compounding intelligence. Humans above the loop is what the later stages of that arc actually look like in practice.

What the Other 20% Look Like in Practice

Here is the part of the article that requires honest framing.

bosio.digital has been running this architecture — context, skills, governance, layered into a single operating system for our own firm — since the start of 2026. The same system we deliver to clients runs every day inside our own business. We did not invent this pattern; the 20% the McKinsey data describes were doing it before we systematized it. We built the version we run. We adopted it for ourselves first because it was the only honest way to recommend it to anyone else.

What it looks like in practice is unglamorous. It is a folder structure that captures organizational knowledge — brand voice, client context, financial logic, content production workflows, service delivery patterns, compliance constraints — in a maintainable, queryable form. It is a library of skills that encode the specific way our firm approaches specific kinds of work. It is a governance layer that defines, for each kind of task, what the system does autonomously and what requires human review before action. The architecture is small. The discipline of maintaining it is what makes it work.

The reason this matters for a leader reading this article is not that we built it. The reason is that the architecture itself is buildable in 8–12 weeks for a mid-market organization. It is not a transformation program. It is a knowledge project, a design project, and a maintenance discipline — done in that order. Most of the work is editorial: deciding what the organization knows that should be encoded, deciding what governance applies where, deciding what the AI should do automatically and what it should escalate. The technical part is small.

This is also why the 20% can pull ahead so reliably. The architectural decisions are not gated by capital, by tooling, by access to a specific platform. They are gated by organizational will to do the editorial work most companies treat as a documentation chore. The companies that take that work seriously get to humans-above-the-loop in selective domains. The companies that do not stay stuck running everything with humans in the loop, watching their AI investments produce activity instead of outcomes.

The McKinsey data — 80% not seeing impact — is what happens when the editorial work does not get done. The 20% number is what happens when it does.

The Question to Ask Before Your Next AI Investment

The instinct, when reading a stat like the 80%, is to ask: am I in the 80% or the 20%? The honest answer is that most organizations cannot tell — because the difference is not visible from the inside. The 80% experience is "we are using AI everywhere and not seeing it on the bottom line." The 20% experience can look the same in the first six months, before the architectural difference shows up in the numbers.

The better question is not which side you are on. It is what you are building right now that determines which side you will be on by the end of 2026.

Here are three diagnostic questions, each answerable by any leader, that surface the architectural decisions before they turn into architectural debt.

1. Does your AI know your business, or does it start from zero every session?

If a new employee could use your AI system on day one and produce outputs that sound like your organization, you have built context. If not, you have not. Most organizations have not — and they will not get ROI until they do. This is the most-skipped first step in AI deployment.

2. Is your organizational expertise encoded in reusable skills, or locked in prompts and individual accounts?

The expertise that matters most in your organization — the way your senior people handle hard situations, the patterns in your best client communications, the decision logic that took years to develop — is either encoded into reusable skills your AI can apply consistently, or it is sitting in individual people's heads and personal Custom GPTs. The first compounds with the organization. The second leaves when the people leave.

3. Do you have governance designed in, or are you waiting to see what goes wrong?

If your answer to "what are we allowed to let the AI do autonomously?" is "we will figure it out as we go," you are in the 80%. If you can name, for any specific workflow, exactly what the AI decides on its own, what requires human approval before action, and what gets human review after action, you are in the 20%. Governance is what makes humans-above-the-loop possible. Without it, you cannot capture the leverage that produces ROI.

Start Building

Audit Whether You're in the 80% or the 20%

Paste this prompt into any AI tool. The diagnostic surfaces the three architectural decisions that separate the two groups — and tells you specifically where your organization is exposed.

I want to assess whether my organization is set up to capture AI ROI or to be in the 80% that isn't seeing returns. Ask me these questions one at a time:

1. If a new employee used your AI system on day one with no instructions, would the outputs sound like your organization or like a generic consultant?
2. When a senior person in your firm produces unusually good work (a winning proposal, a tough client conversation, a clean financial analysis), is the underlying logic captured anywhere your AI can use it?
3. For your three highest-leverage AI workflows, can you name exactly what the AI does autonomously, what requires human approval before action, and what requires human review after action?
4. If your most experienced person left tomorrow, what percentage of their judgment would remain accessible to the rest of the team through your AI system?
5. What is your AI investment producing right now: activity (more outputs, faster) or outcomes (a measurable change in the business)?

After I answer all 5, give me:
- Honest diagnosis: 80% or 20% (with specific evidence from my answers)
- The single architectural decision I should make first to move toward the 20%
- A 30-day plan to start building that decision into our operating model

The diagnostic shows you the gap. Closing it is a different project. See where you stand across all five readiness dimensions.

The Architectural Choice

The McKinsey 80% is not a story about AI failure. It is a story about organizations that bought a technology and skipped the architecture. The PwC corroboration — 20% capturing 75% of the gains — is the same story from the inverse angle. The companies that are seeing returns built three things first. The companies that are not deployed AI on top of operating models that were never designed to absorb it.

This connects to a question that is bigger than AI. The leaders we work with who are clearest about their AI ROI also tend to be clearest about their business model — what it depends on, what it assumes about the world, where it might be exposed. AI is not the only technology shift forcing the question. It is the most visible one. The human-side reasons AI projects fail are real, but they often track back to a more uncomfortable underlying truth: the operating model was assuming something that is no longer true.

The architectural decisions that separate the 20% from the 80% are buildable. The window for being early on them is closing as the gap between the two groups widens. The question is not whether you have an AI strategy. The question is whether what you are building today will be in the right group six months from now.


Related reading: Google Search Central published its AI Optimization Guide on May 15, 2026, formalizing what mid-market leaders should and should not pay agencies to do. Our read on what it means for AI citation strategy and architecture investment.

Frequently Asked Questions

Why is AI ROI so hard to measure for most businesses?

Most organizations measure AI by activity — how many tools deployed, how many prompts run, how many agents in production — rather than by outcomes. Activity is easy to measure and feels like progress. Outcomes require defining what would have to change in the business for the AI to be working: cycle time, error rate, throughput, decision quality, customer outcomes. Until those metrics are defined upfront, AI investments produce activity that looks productive without producing returns. The 20% define the outcome metrics before they deploy the AI.
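A minimal sketch of "define the outcome metrics before deploying" in Python, assuming hypothetical metric names and targets: the check accepts only outcome metrics agreed upfront and deliberately has no input for activity counts such as tools deployed, prompts run, or agents in production.

```python
from dataclasses import dataclass


@dataclass
class OutcomeMetric:
    """A hypothetical outcome metric, defined before the AI is deployed."""
    name: str
    baseline: float
    target: float
    lower_is_better: bool = False

    def met(self, current: float) -> bool:
        # Compare the current value against the target agreed upfront.
        return current <= self.target if self.lower_is_better else current >= self.target

    def change(self, current: float) -> float:
        # Movement relative to the pre-deployment baseline.
        return current - self.baseline


def ai_is_working(metrics: list[OutcomeMetric], current: dict[str, float]) -> bool:
    """True only if every predefined outcome metric hit its target.
    Activity counts are intentionally not an input."""
    return all(m.met(current[m.name]) for m in metrics)
```

For example, a cycle-time metric with a baseline of 10 days and a target of 7 is met at 6 days, but if a second agreed metric (say, error rate) misses its target, the check reports that the AI is not yet working.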

What is an agentic organization and how do I know if I'm building one?

An agentic organization is one where AI handles end-to-end processes autonomously and humans focus on judgment about outcomes rather than execution of steps. The shift is structural: workflows are reimagined, governance is architected in from the start, and humans operate above the loop on most routine work. You know you're building one when your AI system has access to organizational context that lets it act with knowledge of the business, when reusable skills encode the way the organization works, and when governance is architected to expand AI autonomy safely. Without these, you have AI tools deployed inside an organization that has not become agentic.

What does "humans above the loop" mean in practice?

Humans above the loop means people judge outcomes the AI produces rather than executing tasks within an AI-supported process. In practice, this looks like reviewing a batch of agent-drafted client communications and deciding which to send rather than drafting each one with AI assistance. It looks like approving an agent-prepared analysis that already reached a recommendation rather than supervising the analysis steps. The human role shifts from doing to deciding. This shift only works when the architecture — context, skills, governance — is trustworthy enough that humans can step back from execution without losing control of outcomes.

What's the difference between AI tools and AI architecture?

AI tools are individual products an organization buys and deploys: a sales agent, a marketing automation, a Custom GPT, a workflow integration. AI architecture is the underlying framework that determines how those tools connect to organizational knowledge, share expertise, and operate under governance. Most organizations have AI tools without AI architecture. The tools work; the system does not compound. The 20% have both. The architecture is what makes the tools' value accumulate over time rather than expire when the next tool replaces the last one.

How long does it take to start seeing real ROI from AI investment?

For organizations that build the architecture first — context, skills, governance — meaningful ROI typically appears within 3–6 months in selected workflows, not enterprise-wide. The architectural foundation takes 8–12 weeks to establish for a mid-market company. The first ROI shows up in the workflows that get refactored to operate with humans above the loop in low-risk domains. Enterprise-wide returns take longer because they require workflow redesign at scale, which is a multi-quarter program. Organizations that skip the architecture and deploy tools first typically report flat ROI for 12–18 months, then begin the rebuilding work that should have happened first.

What should I build first before deploying AI agents?

Build the context layer first. Encode what the organization knows — workflows, expertise, decision history, brand voice, client knowledge — in a maintainable, queryable form before any agent goes live. Most organizations can do this in 6–10 weeks if they treat it as a knowledge project rather than an IT project. The benefit compounds: every agent built on top of a real context layer produces better outputs from the first session, requires less correction, and contributes to the same shared organizational intelligence rather than fragmenting it.

How do I know if my AI deployment is in the 80% or the 20%?

The simplest test: open a new AI session in your organization with no context-setting. Ask it to draft something typical for your business. If the output sounds like it could have come from any organization in your industry, you are in the 80%. If it reflects your specific voice, priorities, and operating context without you providing them, you are likely in the 20% — at least for that workflow. The 20% is rarely uniform across an organization. Most companies that are doing this well have selected domains where the architecture is in place and other domains where it is not yet. The question is whether you have any domain at all where the architecture is built.

Sources

- McKinsey, "AI is everywhere. The agentic organization isn't — yet." Podcast, April 2, 2026 (Alexis Krivkovich, Lucia Rahilly, Roberta Fusaro). The 80% finding; the "humans above the loop" framework.

- PwC, AI Performance Study, April 13, 2026. Independent corroboration: 20% of companies are capturing 75% of AI's economic gains.

- Deloitte, "The State of AI in the Enterprise: The Untapped Edge," January 21, 2026. 3,235 leaders surveyed across 24 countries; 74% plan agentic AI deployment within two years; only 21% report mature governance frameworks.

- McKinsey, "State of Organizations 2026," February 2026. Finding that 75% of roles require fundamental reshaping.

- McKinsey, "State of AI 2025," November 2025. Global survey of 1,993 respondents; 23% report scaling agentic AI somewhere in the enterprise.

- Writer, "Enterprise AI Adoption 2026," April 7, 2026. Survey of 2,400 respondents; 79% of organizations report challenges scaling AI.

- Barry Zhang and Mahesh Murag (Anthropic), "Don't Build Agents, Build Skills Instead," AI Engineer Code Summit, November 21, 2025. The skills-first architecture argument.

- bosio.digital, "Stop Building Agents. Build Skills." The architectural depth on Decision 2.

- bosio.digital, "Trustworthy AI Agents." The architectural depth on Decision 3 (governance).

- bosio.digital, "AI Agent Sprawl." The failure pattern that produces the 80%.

- bosio.digital, "The Current State of AI for Business: A Practitioner's Map." The four-stage maturity arc; humans above the loop maps to the later stages.

- bosio.digital internal AI operating system. Production reference implementation of the context, skills, and governance architecture, operating since the start of 2026.