Question

What should you ask an AI consulting firm before signing a contract?

Quick Answer

The most important questions to ask an AI consulting firm before signing are: who specifically will do the work (not who will sell it), how they define success across all three dimensions — technical performance, business impact, and user adoption — what their specific methodology is for human-side change management, and whether they can connect you with a mid-market reference from the last 18 months. Most mid-market AI engagements fail not because the technology underperforms, but because the wrong firm was selected before a single line of work began. Source: Deloitte State of AI in the Enterprise 2026; Anthropic 81,000-person qualitative study.

Eighty-eight percent of organizations now use AI in at least one business function. Fewer than one-third have successfully scaled it across the enterprise. That gap — between AI adoption and AI transformation — is where consulting firms make their money, and where mid-market companies lose theirs.

The Deloitte State of AI in the Enterprise report does not bury the finding: most organizations are not getting what they expected from their AI investments. The technology is rarely the problem. The selection of who guides the work almost always is.

Before you sign anything — before you approve a proposal, before you shake a hand over a pilot engagement, before you book a kickoff call — there are eight questions that separate the firms that will change what your organization is capable of from the ones that will leave you with an expensive slide deck and a strategy no one knows how to execute.

Most mid-market companies never ask them. Not because they're uninformed, but because the consulting sales process is specifically designed to route around them. The pitch is polished. The case studies are curated. The team you meet in the room will not be the team in the room once the contract is signed.

This is not a cynical observation. It is the most common feedback we hear from companies that have been through at least one failed or stalled AI engagement. They knew something was off during the sales process. They didn't have the specific questions to name it.

This article lays out those questions. (For a comprehensive guide to all your AI consulting options, from Big 4 to boutique to fractional, see our complete guide to choosing the right AI consulting partner.)

Why Generic AI Experience Gets You the Wrong Firm

The first mistake mid-market companies make when evaluating AI consulting firms is asking the wrong question at the top of the conversation.

"Do you have experience with AI transformation?" is not a useful question in 2026. Every firm in the market will say yes. The Big 4 have rebranded entire practices around it. The boutique shops have pivoted their pitch decks. Even generalist digital agencies have added an "AI consulting" service line. The experience claim is table stakes. It tells you almost nothing about fit.

The question underneath the question is: experience doing what, with whom, in what size of organization, and with what outcome?

This distinction matters more for mid-market companies than it does for enterprise. The reasons are structural. Enterprise organizations have internal IT departments, change management resources, project management offices, and the budget to sustain a multi-year engagement even when it's going slowly. Mid-market companies — typically 50 to 500 employees — have none of that infrastructure. The consulting firm isn't supplementing your capacity. In most cases, they are your AI implementation capacity.

That changes everything about what you need from them.

An enterprise engagement can absorb six months of discovery before a single workflow changes. A mid-market engagement cannot. The firm needs to know how to move from diagnosis to execution in weeks, not quarters. They need to be able to work with messy data, undertrained staff, and leadership teams that are skeptical or divided. And they need to understand that when surveys report 42% of organizations citing a lack of skilled professionals and implementation complexity as their top AI challenges, those organizations are describing your exact situation.

The second mistake is confusing strategy with implementation. Some of the most respected names in AI consulting excel at one and quietly subcontract the other, or simply stop at the edge of execution and call it a "roadmap delivery." The handoff gap — where strategy ends and implementation begins — is where most mid-market engagements fall apart. It's also the gap that never appears in the case study.

The eight questions that follow are designed to find these gaps before you're inside them.

The Questions That Get the Answers You Actually Need

These eight questions are not gotchas. They are the questions a sophisticated buyer asks because they've either been through an expensive mistake or they've been thoughtful enough to avoid one. Any firm worth hiring will welcome them. They narrow the field quickly.

1. "Who specifically will do the work once we sign — and can I meet them before we sign?"

This is the question that makes sales teams uncomfortable, and that discomfort is the point.

The bait-and-switch is not unique to AI consulting, but it's particularly costly in this space. A partner or principal leads the pitch. Their track record is referenced in the proposal. The case studies they describe are ones they personally led. You sign based on the credibility of that person and their team.

Then the engagement kicks off and the junior analyst is running your discovery sessions while the partner resurfaces for quarterly check-ins.

This is not a character failing. It's a business model. At large consulting firms, partners sell and analysts deliver. The ratio can be extreme: one senior person managing four to seven active engagements simultaneously, with execution delegated entirely down.

The right answer to this question is specific. The firm names the person who will run your discovery phase and the person who will lead the implementation sessions, and offers to connect you with both before the contract is signed. If the answer involves assurances that you'll have "senior oversight" or "partner involvement at key milestones," that is not the same as senior delivery. It tells you the senior people you met will not be the ones doing the work.

2. "Walk me through how you measure success — all three dimensions."

If a consulting firm can only describe one dimension of success, they are only managing one dimension of your engagement. And that means two dimensions of failure are invisible to them until the engagement ends.

The three dimensions of a successful AI implementation are technical performance, business impact, and user adoption. Most consulting firms can speak fluently to the first. Many can speak to the second with some prompting. Almost none have a structured methodology for the third — and the third is where most mid-market implementations fall short.

Anthropic's qualitative study of 81,000 people, the largest multilingual AI research study ever conducted, found that the top concerns employees have about AI are unreliability and hallucinations (26.7%), job displacement (22.3%), loss of autonomy and control (21.9%), and cognitive atrophy from over-reliance (16.3%). Only the first touches technical performance, and even that one plays out as a trust problem: people who don't trust the output stop using the tool. The other three have nothing to do with how well the system performs. All four surface as user adoption problems. A firm that doesn't have a methodology for navigating those four fears is going to hand you a technically excellent system that your people won't use.

Ask this question and then listen without jumping in. Count how many sentences they spend on technical metrics versus how many they spend on adoption and behavior change. The ratio tells you more than the content.

3. "What is your specific methodology for the human side of the implementation?"

"Change management" is a phrase that means almost nothing on its own. Every consulting firm uses it. Most of them mean: we'll do some communication planning, maybe a training session, and remind leadership to send an all-hands email. That is not a methodology. That is the absence of a methodology with a respectable label on it.

A real methodology for human-side AI transformation answers specific questions: How do you assess human readiness before the work begins, not during it? How do you identify and build AI champions inside the organization rather than depending on top-down mandates? How do you address the specific fears — job displacement, loss of control, skill atrophy — that research shows drive resistance? What's your process when a pilot succeeds technically but adoption stays flat?

The reason this matters so much for mid-market companies specifically is scale. At an enterprise organization, you can absorb the fact that 20% of employees resist change — there are enough early adopters to drive momentum regardless. At a 200-person company, 20% resistance is company-wide drag. You cannot afford a methodology that waits for adoption to happen organically.

If the answer stays general — "we take a people-first approach" — follow up with: "Can you give me a concrete example of how that played out in a recent mid-market engagement?" Watch what happens to the specificity of the answer when you push.

4. "What do the first four weeks look like? Show me the deliverable list."

Consulting engagements have a well-known early phase: discovery. The firm comes in, conducts interviews, reviews documentation, and maps the current state. This phase is valuable. It is also the phase most likely to extend indefinitely when there is no clear deliverable gate.

A discovery phase that runs three to five weeks and produces a presentation is not the same as one that produces a prioritized implementation roadmap, an assessed risk register, a champion identification output, and the first pilot workflow selected and scoped — all within the same timeframe.

Mid-market companies cannot afford extended discovery phases. Leadership is watching, employees are skeptical, and every week of discovery without visible progress erodes the initiative's internal support.

Ask for the deliverable list and ask what week each deliverable is due. A firm that resists this question — that says deliverables depend on what they find in discovery — has not run enough mid-market engagements to have a pattern for what they typically find. That is a risk you're absorbing.

The Questions About What Happens After Week Four

5. "Describe your handoff process."

This question surfaces the most expensive problem in AI consulting, and most clients never ask it until they're inside it.

A significant number of AI consulting engagements — particularly at larger firms — are scoped as strategy-and-pilot deliveries. The firm designs the approach, runs a controlled pilot, validates the concept, and produces a roadmap for scaling. Then the engagement ends. The roadmap sits in a slide deck. Your internal team, which does not yet have the skills or the context to execute it, now owns the next phase.

That is not a transformation. That is a very expensive proof of concept followed by an internal execution problem you have to solve on your own.

The question to ask directly: "After the initial engagement, what are you still accountable for?" If the answer is "nothing — our engagement is complete at handoff," that is honest. It is also significant information. The gap between "we deliver a roadmap" and "we're accountable for the outcome" is where most mid-market AI investments disappear.

6. "Can you connect me with a mid-market company you've worked with in the last 18 months?"

Not an enterprise client. Not a logo from a Fortune 500 that appears on the website. A company with fewer than 500 employees, in roughly your industry, that went through an implementation similar to what you're discussing, in the last 18 months.

The "last 18 months" qualifier matters. The AI landscape has changed significantly in the last two years. A successful implementation from 2022 used different tools, different models, and addressed different organizational challenges than one from 2025. The lessons are not directly transferable.

The "mid-market" qualifier is equally important. The skills required to run a successful AI transformation at a 150-person professional services firm are not the same skills required at a 50,000-person corporation. A firm that has only worked at enterprise scale and is now pitching the mid-market is learning on your engagement.

The response to this question is itself the answer. A firm with real mid-market depth will have three names ready, offer to make introductions without conditions, and those references will have specific things to say. A firm without that depth will have reasons why they can't share references easily.

The Intellectual Honesty Tests

7. "What would you specifically not recommend for us right now?"

This is the intellectual honesty test, and it is the one question that a firm in pure sales mode cannot answer well.

Every organization walking into an AI consulting engagement has options: different tools, different starting points, different scopes. Some of those options are genuinely right for where the organization is. Some are not yet appropriate given the state of the data, the team's readiness, or the competitive pressure the business is actually under.

A firm that wants to help you will tell you which options they'd advise against — and why. That "why" will be specific to your situation. It might sound like: "Given what you've described about your data infrastructure, starting with an automated workflow layer is going to create reliability problems before you've built trust. We'd start with an augmentation layer instead, even though it's a smaller initial engagement." That is a firm thinking about your outcome, not their revenue.

A firm that cannot answer this question — that pivots back to capabilities, or says "it really depends on your needs," or finds a way to reframe every option as viable — is in sales mode. The absence of a specific recommendation against something is a signal.

8. "How do you handle the situation where our data isn't ready?"

Data unreadiness is not an exception. It is the standard condition for mid-market AI implementations. Gartner found that 63% of organizations either do not have or are unsure whether they have the right data management practices for AI — and predicts that through 2026, organizations will abandon 60% of AI projects unsupported by AI-ready data. A March 2026 Cloudera and Harvard Business Review study was even more stark: only 7% of enterprises say their data is completely ready for AI adoption. That number is almost certainly lower at companies without dedicated data teams.

Any consulting firm with real mid-market experience has walked into this situation many times. A firm with a real methodology for data readiness can describe what the assessment looks like before implementation begins, how they prioritize which data problems to solve first, what workarounds they use when high-quality data can't be produced quickly, and how they avoid building on data foundations that will create quality problems downstream.

A firm without this methodology will assure you they'll work through data issues as they arise. That is true. It also means your engagement timeline and budget absorb the cost of their learning.

Practically speaking, the data readiness conversation is often where the real scope of an engagement gets defined. If a consulting firm can run you through their data assessment process before the proposal is final — even informally — that tells you they have a process. That is what you want.


Read the Room During the Answers

The eight questions above are designed to get specific, useful information. But the way a firm responds to them is as informative as the content of the answers.

Notice how the sales team responds when you ask to meet the delivery team before signing. Does the pace of the conversation change? Is there a reason given for why that's difficult to arrange? Or do they immediately say, "Of course, let me introduce you to the lead"?

Notice the difference between an answer that gets more specific when you push and one that stays at the same level of generality regardless of how you follow up. Specific answers are a sign of actual experience. Generality that persists under questioning is a sign that the experience being described is secondhand: a case study read, not a project led.

Notice how they respond to the "what would you not recommend" question. The pause before that answer is interesting. A confident firm with intellectual integrity will pause because they're actually thinking about your situation. A firm in sales mode will pause because they're figuring out how to redirect.

The selection process is a sample of the working relationship. How the firm handles pressure, ambiguity, and uncomfortable questions in the pitch tells you how they'll handle those same things in the engagement. You have the right to use the selection process as a diagnostic.

One more thing to notice: how do they talk about your people? McKinsey's 2025 State of AI report found that high-performing organizations — the ones that actually scale AI across the enterprise — are three times more likely to have senior leaders demonstrating ownership of and commitment to AI initiatives. A consulting firm that talks exclusively about technology deployment and barely mentions organizational readiness, champion development, or cultural integration is telling you where their expertise stops. The firms that understand what makes AI transformation succeed at the mid-market level talk about people at least as much as they talk about platforms.

What a Real AI Consulting Partner Sounds Like

We built our own AI operating system from scratch before taking a single client engagement. Not because it was required — but because we refused to advise clients on something we hadn't built for ourselves. Twenty-five years of digital transformation consulting with global brands and twenty-five years of contemplative practice taught us something the Big 4 still haven't internalized: AI transformation is a human transformation. The technology is the easy part. Understanding how people actually change — their fears, their resistance, their tremendous capacity for growth — that is the hard part, and it is the part most firms skip.

That is why our methodology starts with a principle we call "Humans First." Not as a tagline, but as an operating philosophy. AI should elevate human talent, not threaten it. Every framework we build, every workflow we design, every change management plan we execute starts from the question: does this work with human nature, or against it?

When a client asks "who does the work," the answer is Sascha and Laura — founder-led, every phase, every engagement, no exceptions. When they ask about our change management methodology, we describe the specific framework built around Anthropic's research on employee AI concerns — addressing unreliability fears, job displacement anxiety, and the loss of autonomy that drives resistance even when the platform choice is right. We test and refine it in our own operations before applying it anywhere else. When they ask for a mid-market reference, we name the companies and make the introductions the same day.

This is what the questions above should surface: not a perfect firm, but an honest one. A firm whose scope matches your size. A firm whose senior people actually deliver the work. A firm that understands humans deeply — not just technology. Boutique expertise, enterprise capabilities, and the intellectual honesty to tell you what not to do.

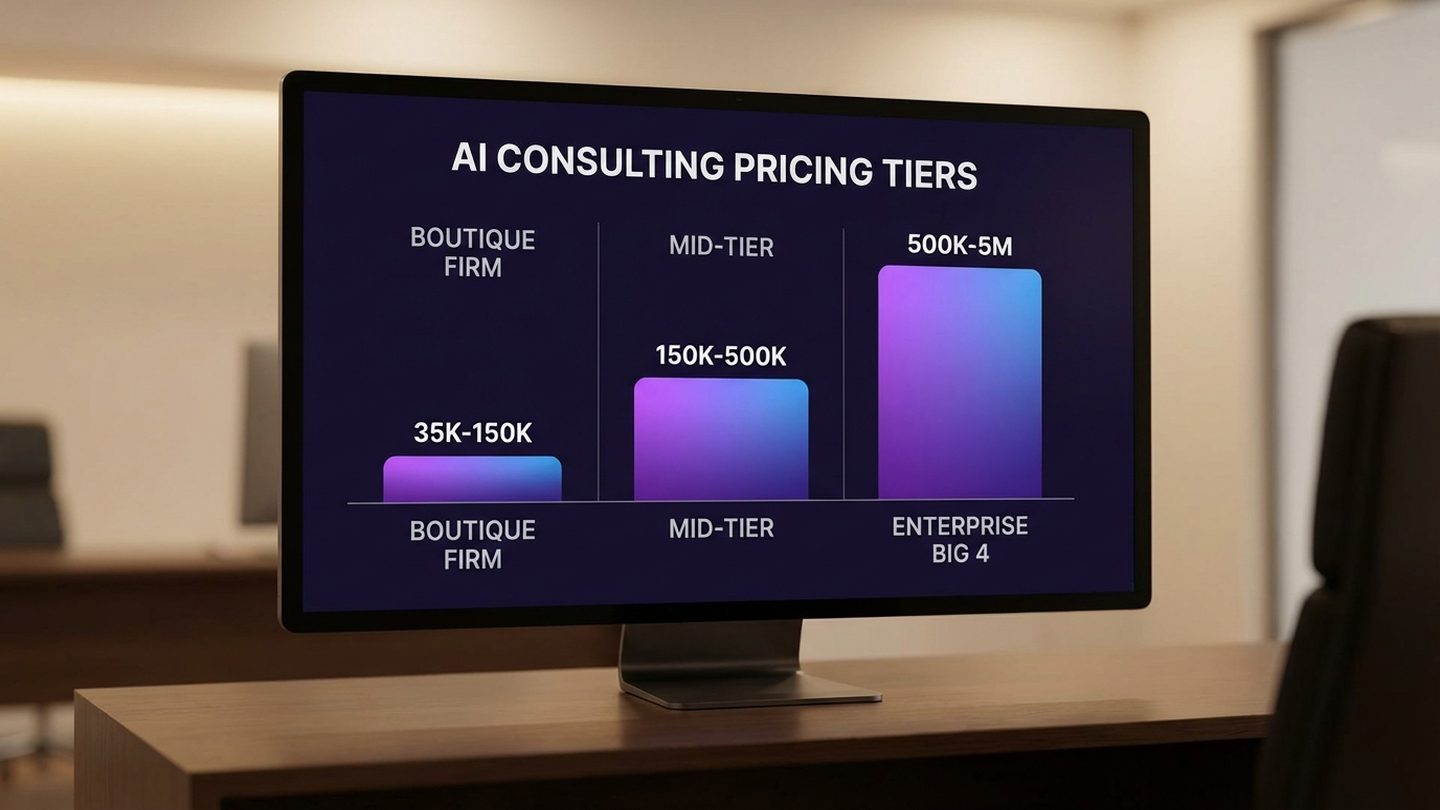

The AI consulting market is projected to grow from $14 billion in 2026 to over $116 billion by 2035. That growth means a lot of new entrants, a lot of rebranded practices, and a lot of firms that have learned the right language faster than they've built the right experience. The questions in this article exist to help you tell the difference before you sign, not after. Alongside them, understanding what AI consulting actually costs, and what drives the number, is the foundation for evaluating any proposal you receive.

The context you give your AI implementation partner shapes every decision they'll make on your behalf. We've written about why business-specific AI context is the most important infrastructure decision a mid-market company can make. We've written about what the race between OpenAI, Anthropic, and Google means for your technology decisions right now. And we've written about why the NVIDIA OpenClaw moment is a mandate, not just a news story. But none of that strategic clarity matters if the firm you hire to execute it isn't right for your organization.

The questions come first.

The Firm You Choose This Quarter Sets Your Trajectory for the Next Three Years

The goal of the selection process is not to find the cheapest firm, or the most credentialed, or the one with the most impressive client list. The goal is to find the one that can actually execute the specific transformation your organization needs — with your data, your people, your timeline, and your constraints.

A few practical notes on running this process well:

Ask the questions in person or on video, not in writing. Written responses give firms time to polish answers that would otherwise reveal gaps. The pause, the redirect, the look toward a colleague when you ask who specifically does the work — these are not captured in an email.

Ask the same questions to every firm you're evaluating. The contrast between answers is often more useful than any individual answer in isolation. The firm that immediately names a mid-market reference and offers an introduction before the call ends is more legible when you've just sat through a firm that spent three minutes explaining why they couldn't share client contacts.

Weight the "what would you not recommend" question heavily. In our experience, it is the single most reliable signal of intellectual integrity and genuine mid-market expertise. A firm that can look at your situation and confidently recommend against a path you were excited about — because they've seen that path fail at your scale — is a firm that has the operational knowledge and the confidence to act in your interest. That combination is rare enough to be a signal when you find it.

The transformation you're building deserves the right partner to build it with. Your people are the heroes of this transformation — the consulting firm's job is to set them up to succeed, not to deliver a roadmap and disappear.

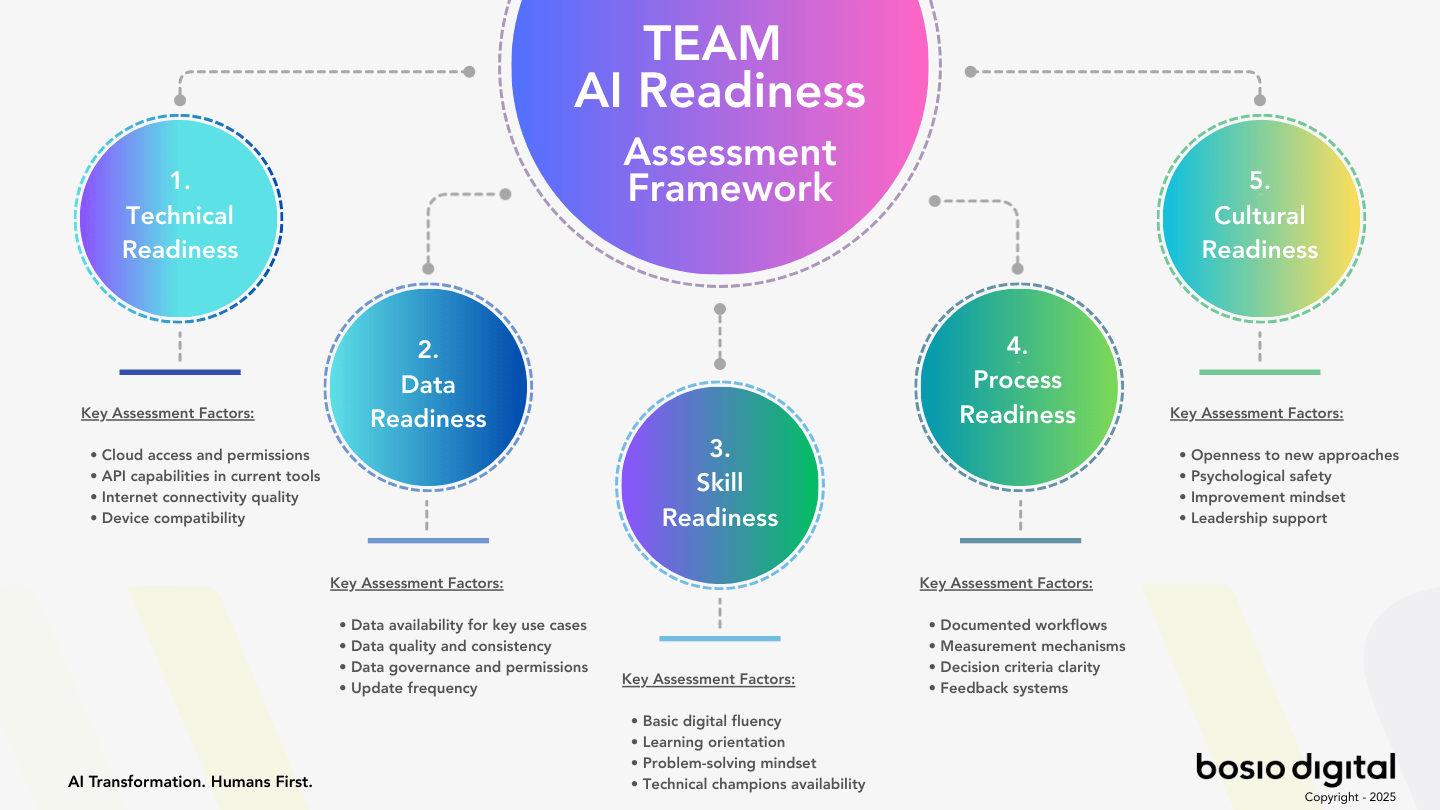

If you're evaluating firms right now and want a structured way to assess your organization's readiness before the first conversation, our free AI Readiness Assessment evaluates where you stand across five dimensions: Technical infrastructure, Data maturity, Skills readiness, Process alignment, and Cultural preparedness. It takes ten minutes and gives you the baseline every consulting conversation should start from.

The questions come before the contract. And the right partner will welcome every single one of them.

Frequently Asked Questions

What are the most important questions to ask an AI consulting firm?

The most critical questions focus on four areas: who actually delivers the work (not who sells it), how success is measured across all three dimensions of technical performance, business impact, and user adoption, what specific methodology exists for change management and human readiness, and whether the firm can provide mid-market references from recent engagements. These questions surface the gaps that standard RFP processes consistently miss.

How do mid-market AI consulting engagements typically fail?

The most common failure modes are the bait-and-switch staffing model (senior people sell, junior people deliver), the handoff gap (the firm delivers strategy and the client is left to execute without support), inadequate human readiness methodology (technology deploys, adoption doesn't follow), and data unreadiness that wasn't assessed before the engagement began. Eighty-eight percent of organizations use AI in at least one function, but fewer than one-third have successfully scaled it. That gap reflects failures of implementation more than failures of technology.

What's the difference between a Big 4 AI consulting engagement and a boutique firm for mid-market companies?

Big 4 and mid-tier firms excel at strategy, frameworks, and the early diagnostic phase. Their challenge at the mid-market level is senior attention: the people who sell the work are rarely the people who execute it, and their delivery model was built for enterprise clients with large internal teams. Boutique firms typically offer more senior delivery capacity and tighter implementation timelines — research suggests boutique mid-market engagements run 2–6 weeks versus 8–12 weeks at larger firms, with fees 20–40% lower — but need to be evaluated carefully on technical depth and whether their experience is genuinely mid-market or repurposed enterprise work.

How do I evaluate whether an AI consulting firm has real mid-market experience?

Ask for a reference from a company with under 500 employees in a similar industry from the last 18 months — not an enterprise logo. Ask what specific challenges came up in that engagement that wouldn't have appeared in an enterprise context. The answers reveal whether the firm has genuinely navigated mid-market constraints — limited data infrastructure, small internal teams, leadership skepticism, constrained timelines — or whether they're presenting enterprise experience as transferable.

Should I ask about an AI consulting firm's data readiness methodology?

Yes, and the earlier in the conversation, the better. Gartner found that 63% of organizations either do not have or are unsure whether they have the right data management practices for AI, and predicts that through 2026, organizations will abandon 60% of AI projects unsupported by AI-ready data. Most mid-market companies have data that is siloed, inconsistently formatted, or incomplete. A firm with real mid-market experience has encountered this many times and has a structured process for assessing data readiness before scope is finalized. A firm without that process will absorb the cost of figuring it out into your engagement timeline and budget.

What red flags should I watch for during the AI consulting sales process?

The most significant red flags are: inability to name who specifically will deliver the work before you sign; vague answers to the change management methodology question that don't become more specific when pushed; resistance to sharing mid-market references; inability to name something they would not recommend for your specific situation; and handoff language that ends the firm's accountability at "roadmap delivery." The sales process is a sample of the working relationship — how a firm handles pressure and uncomfortable questions in the pitch reveals how they'll handle the same in the engagement.

How long should an AI consulting engagement take for a mid-market company?

The rule of thumb: if discovery alone is longer than five weeks, ask what is being produced at week five and why it isn't ready earlier. Mid-market engagements can't absorb extended discovery phases without organizational confidence eroding. The firms best suited to mid-market work have compressed their discovery process through repeated pattern recognition — they know what they typically find and can move to implementation faster without sacrificing rigor.


Sources

- Deloitte. The State of AI in the Enterprise — 2026 AI Report.

- Anthropic. 81,000-Person Qualitative AI Research Study.

- ColorWhistle. AI Consultation Statistics 2026: Market Size, Trends and Insights.

- Business Research Insights. Artificial Intelligence (AI) Consulting Market 2026–2035.

- Future Market Insights. AI Consulting Services Market Size & Forecast 2025 to 2035.

- PwC. 2026 AI Business Predictions.

- McKinsey. The State of AI in 2025: Agents, Innovation, and Transformation.

- Gartner. Lack of AI-Ready Data Puts AI Projects at Risk.

- Cloudera & Harvard Business Review. Only 7% of Enterprises Say Their Data Is Completely Ready for AI. March 2026.

- QBSS. 2026: The Year Mid-Market Outpaces Enterprise in AI Adoption.