Question

What are LLM knowledge bases and why do they matter for business?

Quick Answer

LLM knowledge bases represent a shift from storing documents to compiling living intelligence. Large language models now ingest raw sources — articles, meeting notes, research, internal documentation — and synthesize them into structured, self-updating knowledge systems that improve with every query. According to McKinsey, knowledge workers spend 9.3 hours per week searching for information. LLM-compiled knowledge bases don't just reduce that search time — they make knowledge self-organizing, queryable, and compounding. The AI-driven knowledge management market is projected to reach $11.24 billion in 2026, growing at 46.7% year-over-year.

Andrej Karpathy — former head of AI at Tesla, co-founder of OpenAI, and one of the people who shaped how the industry thinks about neural networks — posted something in April 2026 that most business leaders scrolled past. It wasn't a product announcement. It wasn't a research paper. It was a quiet admission about how his own work has changed.

"A large fraction of my recent token throughput," he wrote, "is going less into manipulating code, and more into manipulating knowledge."

Andrej Karpathy

@karpathy

"Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating knowledge (stored as markdown and images). The latest LLMs are quite good at it."

View on X · April 2, 2026

What he described is a pattern worth paying attention to — not because it's new technology, but because it represents a threshold the technology has crossed. Karpathy isn't building a search engine or a chatbot. He's using LLMs to ingest raw sources — articles, research papers, datasets, code repositories — and compile them into a structured, interlinked wiki that the LLM itself writes and maintains. The wiki includes summaries, backlinks, concept categorization, and cross-references. It updates incrementally. It improves with every query. He rarely touches it directly.

Two days after the original post went viral, Karpathy followed up with something even more telling — he published the pattern itself as an open "idea file," designed to be handed directly to an AI agent.

Andrej Karpathy

@karpathy

"In this era of LLM agents, there is less of a point/need of sharing the specific code/app, you just share the idea, then the other person's agent customizes & builds it for your specific needs."

View on X · April 4, 2026 · Full idea file (GitHub Gist)

That's significant. The person who built the pattern is telling the world: you don't need my code, you need my architecture. Give the idea to your AI and let it build the implementation for you. It's a shift in how technical knowledge transfers — from sharing tools to sharing thinking. And it's exactly the pattern we've been advocating for with organizational AI: the architecture is the asset, not the software.

His personal knowledge base has grown to roughly 100 articles and 400,000 words — compiled, organized, and maintained entirely by AI. And the part that should get your attention: he found he didn't need fancy retrieval-augmented generation (RAG) infrastructure to make it work. The LLM maintains its own index files and summaries, reads the relevant data when queried, and handles the complexity at this scale without specialized engineering.

This matters for business leaders because what Karpathy is doing alone, for personal research, is a preview of what organizational knowledge infrastructure will look like within the next three years. The pattern he's describing — ingest, compile, query, enhance — is exactly what companies need for institutional intelligence. The technology is ready. The question is whether your organization understands what's now possible.

The Signal That Got Our Attention

When someone at Karpathy's level says their primary use of AI has shifted from code generation to knowledge compilation, it's worth asking what that implies at scale.

His workflow has five distinct phases. First, he indexes source documents — articles, papers, repos, datasets, images — into a raw directory. Second, he uses an LLM to incrementally "compile" those sources into a wiki: a collection of markdown files organized into a directory structure with summaries, backlinks, concept categories, and interlinked articles. Third, he uses Obsidian as a frontend to view the raw data, the compiled wiki, and derived visualizations. Fourth, he queries the wiki with complex questions, and the LLM researches answers across the compiled knowledge. Fifth — and this is the part that creates compound value — he files the outputs of his queries back into the wiki, so every exploration enriches the base for future queries.

The LLM writes and maintains all of the wiki data. Karpathy rarely edits it directly. The system is self-organizing, self-enhancing, and queryable in natural language.

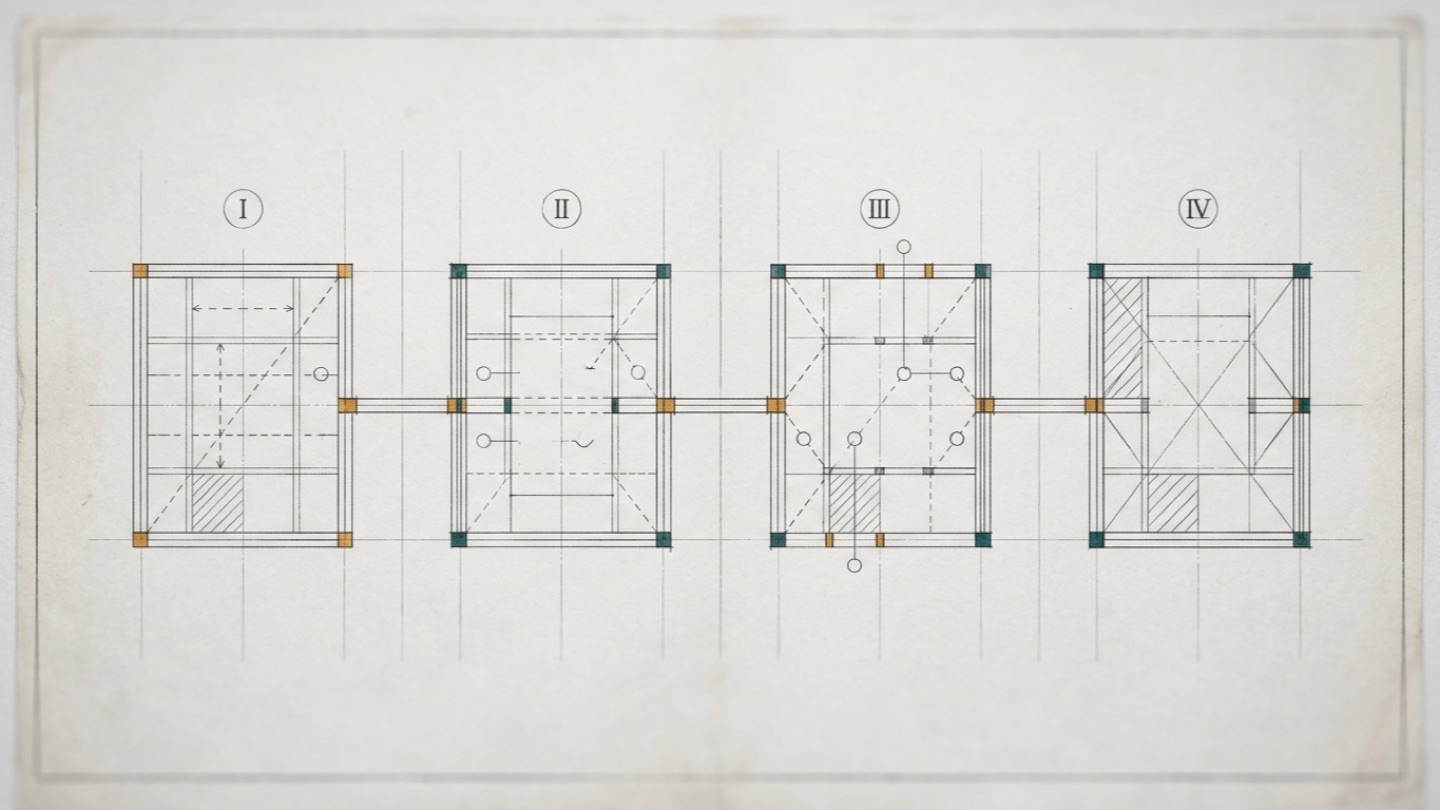

The 5-Layer Knowledge Compilation Pattern

This isn't a productivity hack. It's a new architecture for how knowledge gets created, maintained, and used. The traditional model — humans write documents, store them somewhere, other humans search for them later — has a compounding decay problem. Documents go stale. Search returns noise. The person who wrote the original document leaves the company and takes the context with them. According to research on institutional knowledge, 42% of organizational knowledge resides solely with individual employees. When they leave, that knowledge leaves with them. LLM-compiled knowledge bases solve this by making knowledge extraction and organization continuous, not episodic.

Karpathy also described running LLM "health checks" over his wiki — automated audits that find inconsistent data, impute missing information using web searches, discover unexpected connections between topics, and suggest new research directions. The knowledge base doesn't just store what he knows. It actively identifies what he doesn't know yet and proposes where to look. That is a fundamentally different relationship between a person and their accumulated knowledge than anything a traditional wiki, database, or document management system has offered.

From Search to Synthesis: What's Actually Changing

For twenty years, the dominant model for organizational knowledge has been the same: create documents, store them in a system (SharePoint, Confluence, Google Drive, Notion), and rely on search to retrieve them later. The theory is that if you can find the right document, you can find the right answer.

The practice is different. A Gartner survey found that 47% of digital workers struggle to find the information they need to do their jobs. McKinsey's research puts a number on the cost: knowledge workers spend an average of 9.3 hours per week — nearly 20% of their working time — searching for and gathering information. That's not a search engine problem. It's an architecture problem. The knowledge was never organized in a way that made retrieval reliable.

What's emerging now is a fundamentally different architecture. Instead of storing documents and searching them, LLMs can ingest raw information and compile it into structured, interlinked knowledge — then maintain that structure as new information arrives. The shift is from "store documents, search later" to "ingest raw data, compile living knowledge, query intelligently."

The AI-driven knowledge management market is projected to grow from $7.66 billion to $11.24 billion in 2026, a compound annual growth rate of 46.7%, according to The Business Research Company's Global Report. But the market size is less interesting than the architectural shift driving it. The dominant pattern is moving from document retrieval to knowledge compilation — from systems that help you find what someone already wrote to systems that synthesize new understanding from everything your organization knows. Gartner predicts that enterprises adopting AI knowledge systems will outperform others by at least 25% across key performance metrics.

That 25% advantage isn't about having better search results. It's about having knowledge that is always current, always organized, and always accessible in the form the person needs — not in the form someone happened to save it in three years ago. A compiled knowledge base doesn't decay the way a document library does. It compounds.

This is the distinction that matters for mid-market leaders. The question isn't whether your company needs better search. The question is whether your organization's knowledge infrastructure is designed to compile and compound — or just to store and retrieve.

The Architecture of Living Knowledge

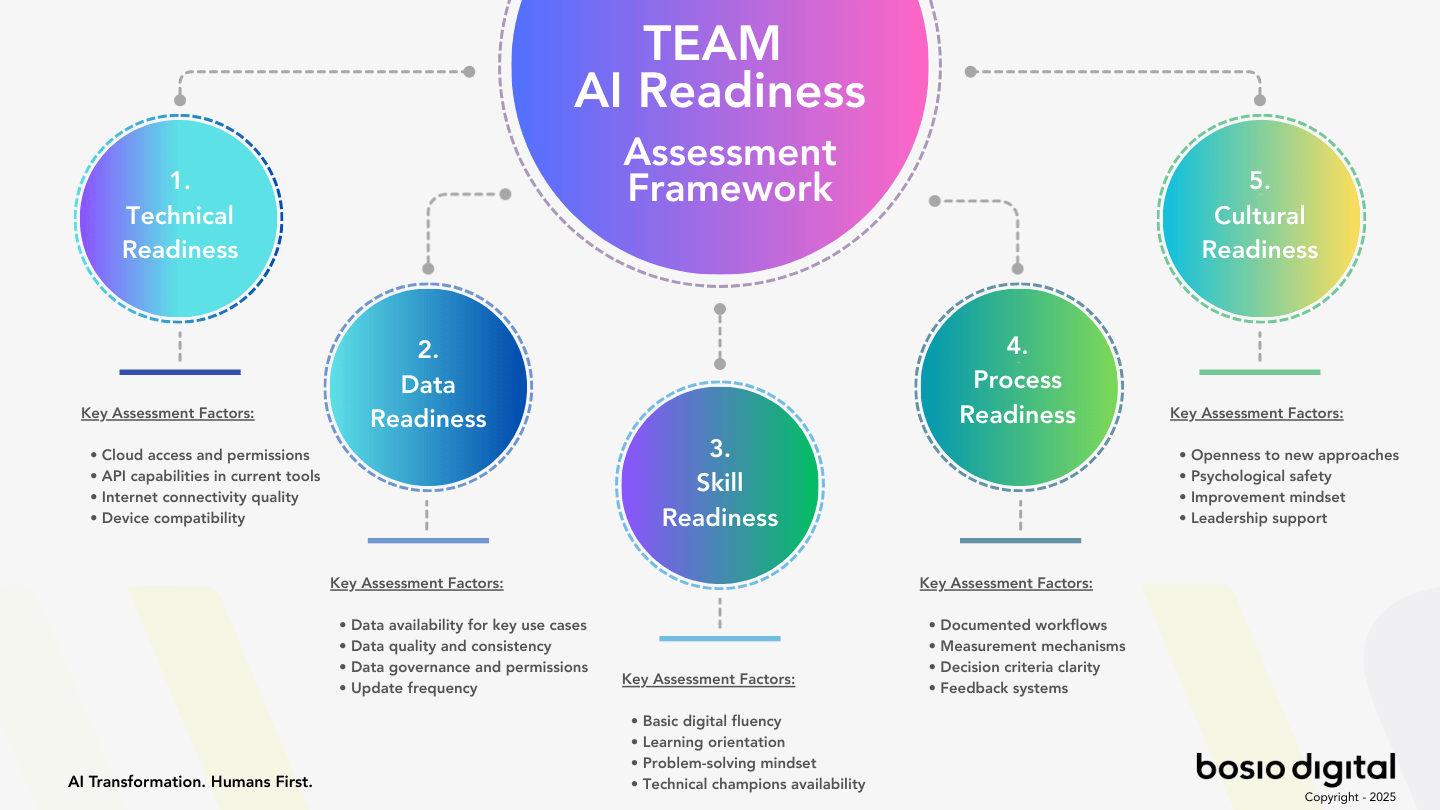

The pattern Karpathy described — and the pattern that's emerging across enterprise knowledge management — operates in five layers. Each layer does something that traditional knowledge management doesn't, and together they create a system that gets smarter over time rather than decaying.

Layer 1: Ingest. Raw sources enter the system — meeting transcripts, client communications, market research, internal documentation, external articles, industry reports, product specifications, support tickets. The key difference from traditional document management: the ingestion layer doesn't just store these files. It processes them. It extracts structure, identifies key concepts, tags relationships, and prepares the raw material for compilation. Think of it as the difference between dropping a box of receipts on your accountant's desk and handing them a categorized ledger.

Layer 2: Compile. This is where the LLM does work that no previous technology could do at this cost or speed. It takes the ingested raw sources and synthesizes them into structured knowledge: concept pages, process documentation, relationship maps, summaries with backlinks to source material. The compiled output is not a copy of the inputs — it's a synthesis. If three different sales calls mention the same competitive concern, the compiled knowledge base creates a single competitive intelligence entry that synthesizes all three observations, links back to the original transcripts, and situates the concern within the broader competitive landscape the system has already mapped.

Layer 3: Index. The compiled knowledge maintains its own index — a navigable map of everything the system knows, with relevance scores, recency weights, and concept clustering. Karpathy noted that he was surprised he didn't need sophisticated RAG infrastructure because the LLM was good at maintaining index files and brief summaries of all documents. At the scale of most mid-market organizations' internal knowledge, this self-indexing capability is sufficient. The LLM knows what it knows and can find what's relevant without a separate retrieval system.

Layer 4: Query. Natural language questions against the compiled knowledge base — not keyword search against stored documents. The difference is profound. A search query finds documents that contain your keywords. A knowledge query synthesizes an answer from everything the system has compiled, draws connections across sources that no single document contains, and presents the answer with citations back to the underlying evidence. An employee asking "What do we know about how enterprise clients evaluate our pricing versus competitors?" gets an answer that synthesizes information from sales call transcripts, competitive analyses, customer feedback, and market research — even if no single document covers all of those angles.

Layer 5: Enhance. This is the compound interest layer — the one that makes the entire architecture worth building. Every query that produces a useful answer gets filed back into the knowledge base, enriching it for future queries. Every health check that identifies inconsistencies or gaps triggers automatic updates. The system doesn't just answer questions — it gets better at answering questions over time. Karpathy described this as his "own explorations and queries always adding up in the knowledge base." For an organization, this means the collective intelligence of every employee's inquiry compounds into institutional knowledge that persists regardless of who stays or leaves.

What This Looks Like Inside a Company

The easiest way to understand this shift is to translate Karpathy's personal workflow into organizational contexts where the same five-layer pattern applies.

Client intelligence. A consulting firm ingests every client communication, meeting note, deliverable, and feedback survey into the knowledge base. The LLM compiles a living client profile that includes relationship history, stated and unstated priorities, engagement patterns, competitive context, and risk factors. When a partner prepares for a quarterly review, they don't search through twelve months of emails. They query the knowledge base: "What are this client's three biggest unresolved concerns, and what have we delivered that addresses each one?" The answer synthesizes a year of interactions into an actionable briefing.

Product knowledge. A manufacturing company ingests product specifications, support tickets, engineering change orders, quality reports, and customer feedback. The compiled knowledge base maintains a living understanding of each product: known issues, performance characteristics, customer usage patterns, and competitive positioning. When a sales engineer needs to answer a technical question during a customer call, the answer is synthesized from the full breadth of the organization's product knowledge — not limited to whatever document the sales engineer happened to have bookmarked.

Market intelligence. A mid-market retailer ingests competitor announcements, industry reports, customer reviews, social media signals, and internal sales data. The LLM compiles a continuously updated competitive landscape that identifies emerging trends, pricing movements, and strategic shifts. When leadership wants to understand how a competitor's recent move affects their positioning, the knowledge base provides a synthesized analysis that draws on months of accumulated intelligence — not a one-time report that was current when it was written and stale by the time it's read.

The practical impact is already measurable. Titansoft, a Singapore-based software company, built a custom knowledge base using LLM compilation and RAG architecture during an internal hackathon. Their product backlog refinement process — which previously took 45 minutes of manual cross-referencing and context gathering — was completed in 30 seconds. Product owners who were initially skeptical became advocates after experiencing the efficiency gains firsthand. That's not an incremental improvement. It's a categorical change in how institutional knowledge flows from where it exists to where it's needed.

IDC research quantifies the broader cost: companies lose $31.5 billion annually due to poor knowledge sharing. Panopto's research found that the average large U.S. business loses $47 million per year from inefficient knowledge sharing, with knowledge workers wasting 5.3 hours every week either waiting for information from colleagues or recreating knowledge that already exists somewhere in the organization. A living knowledge base doesn't eliminate those costs entirely, but it attacks the root cause — knowledge that exists but isn't accessible in the moment it's needed.

Subscribe to our AI Briefing!

AI Insights That Drive Results

Join 500+ leaders getting actionable AI strategies

twice a month. No hype, just what works.

The Context Advantage, Compounded

We've written before about how context compounds over time — how building a persistent AI context layer creates returns that accelerate rather than diminish. LLM knowledge bases are the mechanism through which that compounding actually happens.

Consider the difference between two companies that both adopt AI in 2026. Company A uses AI tools the standard way: employees interact with general-purpose AI assistants, get answers based on the model's training data, and start fresh with every conversation. The AI is helpful but amnesiac. Every session begins at zero. We've described this as the gap between app-level and OS-level AI — the difference between an AI that can answer generic questions and an AI that understands your specific business.

Company B builds a living knowledge base. Every client interaction, every internal decision, every market observation, every product insight gets ingested, compiled, and indexed. Three months in, the knowledge base contains a synthesized understanding of the company's operations that no single employee holds. Six months in, it's catching patterns that humans miss — correlations between customer feedback and product issues, connections between market signals and internal capabilities. A year in, the knowledge base is the most comprehensive record of the company's institutional intelligence that has ever existed.

The gap between Company A and Company B widens every week. Company A's AI usage generates disposable value — helpful in the moment, gone the next session. Company B's AI usage generates cumulative value — every query enriches the system, every enrichment makes the next query better. This is what proprietary knowledge as competitive advantage looks like in practice. The companies that build these systems now are creating a knowledge moat that compounds over time.

According to Gartner, 80% of enterprises will have deployed generative AI applications by 2026, and 40% of enterprise applications will integrate task-specific AI agents. But deployment without persistent knowledge architecture means these tools operate in isolation — powerful in the moment but incapable of learning from the organization's accumulated experience. This is the dynamic behind AI agent sprawl: organizations deploy dozens of agents with no shared context or learning loops, and the system stays static regardless of how much it is used. The competitive advantage belongs not to the companies with the most AI tools, but to the companies whose AI tools share a living understanding of the business. That understanding is the knowledge base.

Why This Isn't RAG (And Why That Matters)

Most business leaders who've encountered AI knowledge management have heard of RAG — retrieval-augmented generation. It's the standard approach: when someone asks a question, the system retrieves relevant documents from a database and feeds them to the LLM as context for generating an answer. RAG is valuable. It grounds AI responses in your actual data rather than relying solely on the model's training knowledge.

But RAG and LLM knowledge compilation are doing fundamentally different things, and the distinction matters for how you think about your organization's knowledge strategy.

RAG searches what you have. It retrieves existing documents — the ones humans wrote and stored — and uses them to inform an answer. The quality of the answer depends on the quality and organization of the original documents. If the knowledge was poorly written, incompletely captured, or scattered across formats, RAG inherits those problems. It's a better search engine, but it's still a search engine. It finds what exists.

LLM knowledge compilation creates what you need. It takes raw inputs — the messy, unstructured, overlapping, contradictory reality of how organizations actually accumulate information — and synthesizes structured knowledge that didn't exist before. The compiled output is a new artifact: cleaner, more organized, more complete, and more queryable than any of the individual inputs. It's not finding a document that answers your question. It's building the understanding that answers your question from everything the organization knows.

The practical difference shows up in a common scenario. A new employee needs to understand how the company prices custom enterprise deals. With RAG, the system might retrieve the pricing guide, a few relevant sales proposals, and an internal memo about a pricing change from six months ago. The employee then has to synthesize those documents themselves. With a compiled knowledge base, the system has already synthesized the pricing methodology, integrated the change from six months ago, mapped exceptions and edge cases from completed deals, and can present a coherent, current answer that reflects the full complexity of how pricing actually works — not just what's in the pricing guide.

Both approaches matter. RAG is the right choice when you need to ground AI responses in specific source documents — legal compliance, regulated industries, situations where traceability to the original document is essential. LLM knowledge compilation is the right choice when you need synthesized understanding that transcends what any individual document contains. The most capable organizations will use both, but the compilation layer is the one that creates compound value — and it's the one most companies haven't built yet.

What Mid-Market Leaders Should Do Now

This isn't a "wait and see" situation. The technology exists. The cost is accessible. And the advantage compounds — meaning the organizations that start building now will have a knowledge infrastructure advantage that widens every quarter.

Three moves to make in the next 90 days.

First: Audit your knowledge landscape. Before building anything, understand what your organization actually knows and where that knowledge lives. Ask the hard question: what do your best people know that isn't written down anywhere? What institutional intelligence would disappear if your top five employees left tomorrow? That gap — between what the organization knows and what the organization has captured — is the compilation opportunity. Every company has it. Most companies have never measured it.

Second: Identify your highest-value compilation target. Don't try to build an everything-knowledge-base. Pick the domain where synthesized, always-current knowledge would create the most immediate value. For most mid-market companies, this is one of three areas: client intelligence (everything you know about your customers, compiled into living profiles), product knowledge (everything your organization understands about what you sell, synthesized across engineering, support, and sales), or competitive intelligence (everything you observe about your market, compiled into a continuously updated landscape). Pick one. Build the pattern. Prove the value. Then expand.

Third: Start small, start now. You don't need a six-figure platform purchase to begin. The Karpathy pattern works at small scale with current LLM tools — a structured directory of raw sources, an LLM that compiles and maintains a knowledge wiki, and a query interface. The architecture matters more than the tooling. Understand the five-layer pattern (ingest, compile, index, query, enhance) and build a proof of concept with your highest-value knowledge domain. The companies that will have the strongest knowledge infrastructure in 2027 are the ones that started compiling in 2026.

The shift from document storage to knowledge compilation isn't a technology upgrade — it's an organizational capability. According to APQC's 2026 Knowledge Management Priorities Survey, 49% of KM teams now prioritize incorporating AI and smart technologies into their programs. But the organizations that will benefit most aren't the ones buying AI-powered search tools. They're the ones building the compilation layer — the living knowledge base that turns raw organizational experience into compounding institutional intelligence. The knowledge your company accumulates is only as valuable as the architecture you build to compound it.

Start Building

Your First Knowledge Layer

Copy this prompt into Claude or ChatGPT. Replace the bracketed sections with your own context. In 30 minutes, you'll have a working Layer 1 and the beginning of Layer 2 — enough to feel how knowledge compilation actually works.

I want to build a living knowledge base for my organization. Start with Layer 1 (Ingest) and Layer 2 (Compile) of the knowledge compilation pattern. Here's my context: — Company: [your company name, what you do, who you serve] — Domain focus: [pick ONE — client intelligence, product knowledge, or competitive intelligence] — Sources: I'm going to paste [3-5] key documents that represent our most important knowledge in this domain. What I need you to do: 1. Ingest each document I paste. For each one, extract: key concepts, decisions, relationships between entities, and anything that contradicts or updates information from previous documents. 2. After all documents are ingested, compile them into a structured knowledge base with: — A summary article for each major concept or entity — Cross-references between related articles — A "conflicts and gaps" section noting where documents disagree or where knowledge is missing — A master index listing every article with a one-line description 3. Output the compiled knowledge base as structured markdown files I can save. Start by confirming you understand the approach, then ask me to paste my first document.

This gets you through Layers 1–2: Ingest and Compile. Layers 3–5 — auto-indexing, cross-domain querying, and the feedback loop that makes it compound — are where the architectural decisions shape whether this becomes a real competitive advantage or just another document library. See where you stand →

Frequently Asked Questions

What is an LLM knowledge base?

An LLM knowledge base is a structured collection of knowledge that is compiled and maintained by a large language model rather than manually authored by humans. Raw sources — documents, meeting notes, research, communications — are ingested and synthesized into organized, interlinked knowledge articles that the LLM updates incrementally as new information arrives. Unlike traditional knowledge bases that decay as documents go stale, LLM knowledge bases are living systems that improve with use.

How do LLMs compile knowledge differently from traditional search?

Traditional search retrieves documents that contain your keywords. LLM knowledge compilation creates new structured knowledge from raw inputs — synthesizing information across sources, identifying relationships, resolving contradictions, and presenting answers that no single document contains. The output isn't a list of documents to read. It's a synthesized understanding built from everything the system has compiled.

Is this the same as RAG (retrieval-augmented generation)?

No. RAG retrieves existing documents to inform an LLM's response — it's a better search engine. LLM knowledge compilation creates new structured knowledge that didn't exist before. RAG searches what you have. Compilation builds what you need. Both are valuable, but compilation creates compound value over time because every query can enrich the knowledge base for future use.

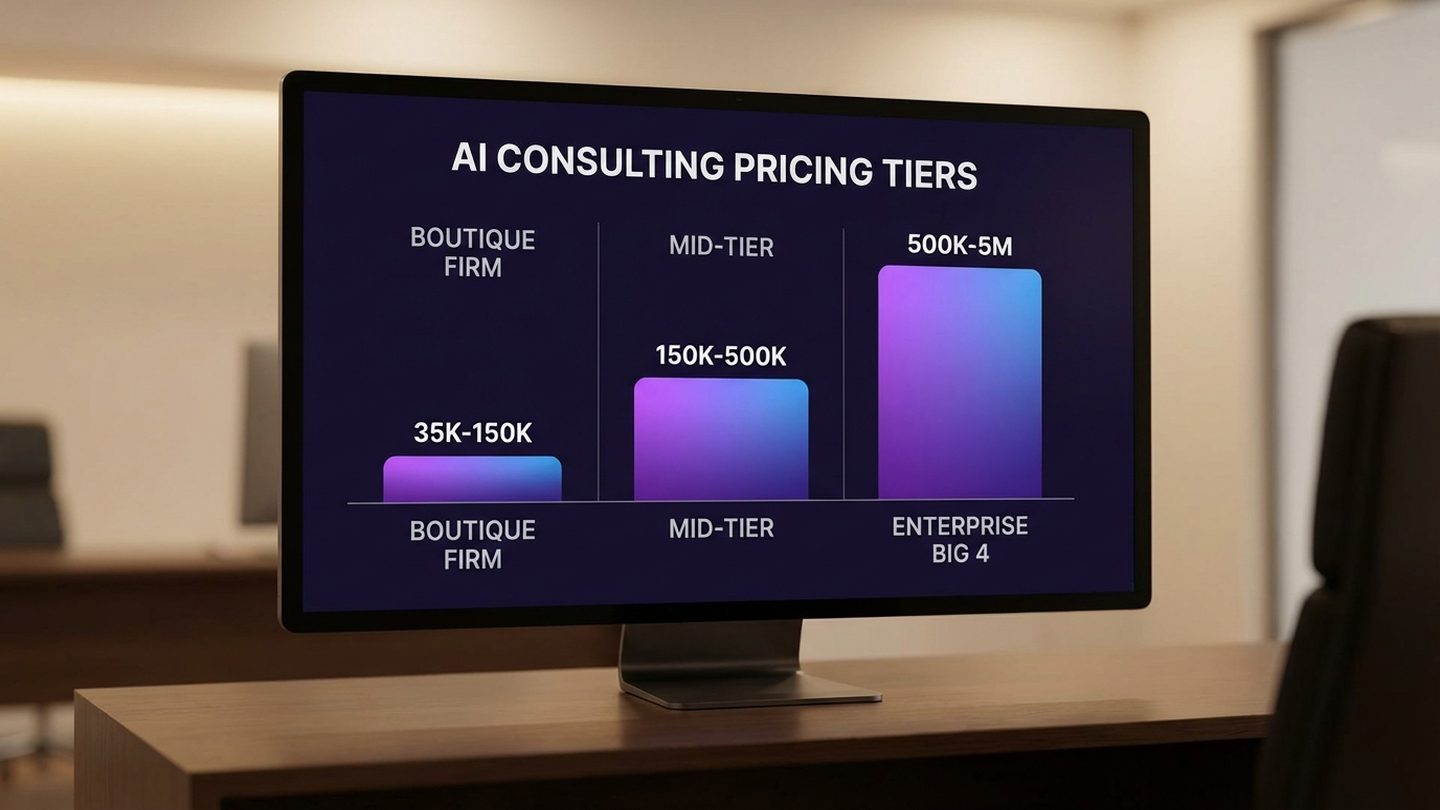

How much does it cost to build an AI knowledge management system?

The range is wide — from near-zero for a proof-of-concept using current LLM tools (following the Karpathy pattern of structured directories and LLM compilation) to six or seven figures for enterprise-grade platforms with security, governance, and integration requirements. The architecture matters more than the price tag. A well-designed compilation system at small scale will outperform an expensive search-only system at any scale.

Can small and mid-market companies do this, or is it only for enterprises?

Mid-market companies are actually better positioned for this than enterprises. Smaller organizations have less knowledge fragmentation, faster decision cycles, and can implement new knowledge architectures without navigating enterprise IT governance. Karpathy's personal knowledge base — 100 articles, 400,000 words — is comparable in scale to many mid-market companies' total institutional knowledge. The pattern works at this scale without specialized infrastructure.

What's the difference between an AI knowledge base and a traditional wiki?

A traditional wiki requires humans to write, organize, and maintain every article — which is why most corporate wikis decay within months of launch. An LLM knowledge base is compiled and maintained by the AI itself: it ingests raw sources, creates structured articles, maintains indexes and backlinks, identifies gaps and inconsistencies, and improves incrementally with every query. The human role shifts from writing and organizing knowledge to curating inputs and asking good questions.

How long does it take to see results from AI knowledge management?

A focused proof-of-concept in a single domain (client intelligence, product knowledge, or competitive intelligence) can demonstrate value within 30-60 days. The compound effect becomes significant at the 90-day mark, when the knowledge base has accumulated enough compiled intelligence to begin surfacing connections and insights that wouldn't have been visible in the raw sources alone. Titansoft's implementation showed measurable results — 45 minutes reduced to 30 seconds — from a hackathon-scale build.

Sources

- LLM Knowledge Bases — Andrej Karpathy, X (formerly Twitter), April 2, 2026

- LLM Wiki — Idea File — Andrej Karpathy, GitHub Gist, April 4, 2026

- The Social Economy: Unlocking Value and Productivity Through Social Technologies — McKinsey Global Institute (9.3 hours/week knowledge worker search time)

- AI-Driven Knowledge Management System Market Global Report 2026 — The Business Research Company ($7.66B to $11.24B, 46.7% CAGR)

- How to Safeguard Institutional Knowledge — Gartner (42% of knowledge resides solely with individual employees)

- More Than 80% of Enterprises Will Have Used Generative AI APIs by 2026 — Gartner

- 40% of Enterprise Apps Will Feature Task-Specific AI Agents by 2026 — Gartner

- Inefficient Knowledge Sharing Costs Large Businesses $47 Million Per Year — Panopto / Censuswide

- Cost of Organizational Knowledge Loss and Countermeasures — Iterators (IDC $31.5B annual loss)

- We Built a Custom Knowledge Base So Our AI Assistant Would Stop Forgetting Everything — Titansoft, March 2026

- Leaders Predict AI to Continue Permeating All Aspects of KM in 2026 — KMWorld / APQC (49% of KM teams prioritize AI)

- LLM Knowledge Base: How Does It Increase Employee Productivity? — Glean

Subscribe to our AI Briefing!

AI Insights That Drive Results

Join 500+ leaders getting actionable AI strategies

twice a month. No hype, just what works.