Question

What is the current state of AI for business, and what comes after the chatbot phase?

Quick Answer

Most businesses are operating at Stage 1 — a chat interface with no memory of your organization. The next three stages are Context (AI that knows your business), Automation (AI that executes without being asked), and Living Intelligence (AI systems that compound organizational knowledge over time). Most organizations believe they're further along than they are. The gap matters because Stages 3 and 4 require architectural decisions that must be made at Stage 2 — decisions most organizations skip.

You probably started with ChatGPT. A browser tab, a question typed in, an answer returned. Maybe you upgraded to the paid plan. Maybe you started encouraging your team to use it. Maybe you've spent the last year figuring out which prompts work and which don't.

Here's what most organizations miss: that's Stage 1. It's where almost everyone starts — and it's where most organizations still are, even if they have a dozen AI tools running across the business.

There are four distinct stages in how AI integrates into an organization — plus a fifth that's already on the horizon. Each one requires a different kind of investment. Each one builds on what came before. And you can't skip Stage 2 without making Stage 3 dangerous — a pattern that's showing up right now in every organization that went straight to agents and automation before building the foundation those systems need to operate on.

This is a map of all the stages: what each one does, what it costs, and what you need in place before you can move to the next. More usefully, it's a way to locate yourself honestly — because most leaders are investing in Stage 3 capabilities on a Stage 1 foundation, and eventually that catches up with them.

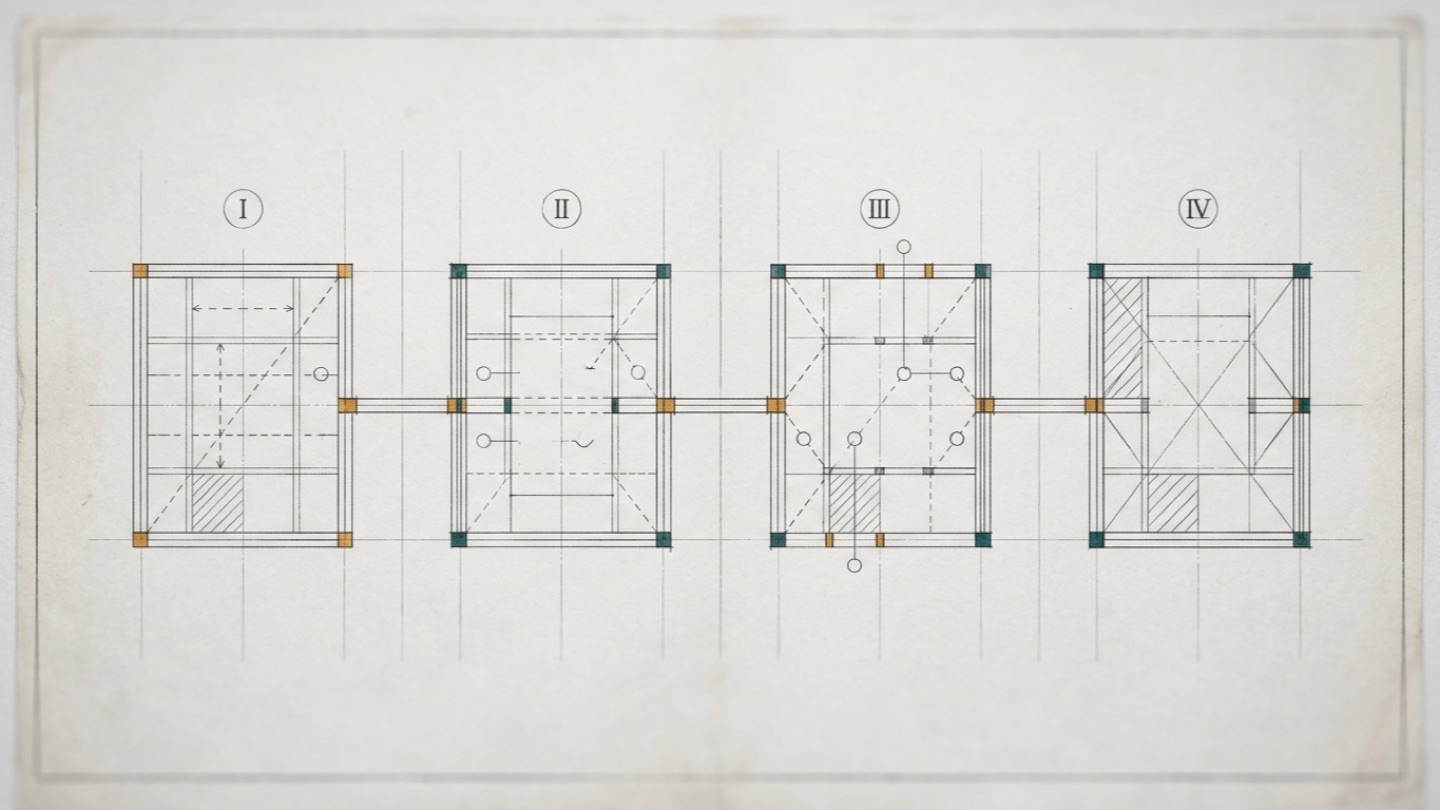

The Four Stages of AI Integration

The four stages aren't product tiers or vendor categories. They describe the relationship between your organization and the AI it uses — specifically, how much the AI knows about your business and whether that knowledge compounds over time.

Here's the architecture at a glance:

- Stage 1 — Conversation: You talk to AI. It answers. Every session starts from zero.

- Stage 2 — Context: AI knows your business. Every session starts informed.

- Stage 3 — Automation: AI executes without being asked. Workflows run on triggers.

- Stage 4 — Living Intelligence: The system compounds. Organizational knowledge accumulates, improves, and becomes a strategic asset.

What most organizations discover — usually the hard way — is that the stages can't be reordered. Stage 3 automation built on a Stage 1 foundation is fast and wrong. Stage 4 without a Stage 2 knowledge architecture isn't Stage 4 — it's expensive Stage 3 that claims to learn.

Here's what each stage actually involves.

Stage 1 — Conversation: Where Almost Everyone Starts

Stage 1 is the default state of AI for most organizations. You open a chat interface, type a question, get an answer. The interaction is one-directional — you bring the context; the AI brings the capability. Every session starts from zero.

This isn't a criticism. Stage 1 AI is genuinely useful. It can draft, summarize, explain, research, and edit faster than any human doing the same work alone. The productivity gains at Stage 1 are real, and significant enough to justify the investment many times over.

The problem isn't what Stage 1 can do. It's what it can never do.

The briefing tax

Every Stage 1 interaction begins with a cost you've probably stopped noticing: the briefing. Before you can ask a useful question, you establish who you are, what you're trying to do, what matters to your organization, what context the AI needs to give you a useful answer.

You do this automatically. You've gotten so good at it that you don't notice you're doing it. But spend a week tracking the first thirty seconds of every AI interaction and you'll find the same pattern: context-setting before the actual request. Who we are. What this project is. What tone we use. What we've already tried.

At Stage 1, that work resets with every conversation. The AI has no memory of yesterday. It doesn't know what your company does, who your customers are, what language you use in client communications versus internal documents, what your risk tolerance is, or what you tried last quarter that didn't work.

More significantly: it never will. Eighteen months of Stage 1 use leaves you exactly where you started, with exactly the same quality of AI interaction. The relationship doesn't compound. The AI doesn't know you better in month 18 than it did in month 1. Every conversation is the first conversation.

The Stage 1 ceiling

This creates a specific kind of frustration that organizations start recognizing around the six-month mark: the productivity gains plateau. Early Stage 1 feels like discovery. You keep finding new things the AI can do, new prompts that work, new use cases that save time. Then the discoveries slow down. The prompts you've learned work well enough that you stop experimenting. The tool becomes part of the workflow — but it doesn't get better.

The ceiling isn't the AI's capability. It's the structure around it. Stage 1 AI is a capable contractor who starts fresh every morning. You get everything they can do in a session; you get none of the institutional memory that makes long-term employees valuable.

The limits you start to notice

There's a specific experience Stage 1 users tend to share. You're two or three hours into a productive conversation with ChatGPT, Claude, or Gemini. You've built up the context, given examples, iterated on several outputs — and then the answers start drifting. The tool seems to forget things you told it earlier in the same session. It contradicts itself. It references instructions that weren't in this conversation at all.

What you're running into is the context window filling up. Depending on the tool, the oldest parts of the conversation are silently dropped or compressed to make room for what's coming. The tool doesn't tell you it happened. Your chat history still shows the full transcript. But the model's working memory has rolled over, and it's operating on a truncated version of what you thought you'd shared.
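To make the rollover concrete, here is a minimal sketch of one way a chat tool could trim history to fit a fixed budget. It is an illustration, not how any specific product works: the `Message` class, `estimate_tokens`, and `fit_to_window` are hypothetical names, word count stands in for a real tokenizer, and real tools may summarize older turns rather than drop them.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str  # "user" or "assistant"
    text: str


def estimate_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: one token per whitespace-separated word.
    return len(text.split())


def fit_to_window(history: list[Message], budget: int) -> list[Message]:
    """Keep the most recent messages whose combined size fits the budget."""
    kept: list[Message] = []
    used = 0
    # Walk backwards from the newest message; stop at the first one that does not fit.
    for message in reversed(history):
        cost = estimate_tokens(message.text)
        if used + cost > budget:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


history = [
    Message("user", "Our brand voice is plain and direct"),
    Message("assistant", "Understood, plain and direct"),
    Message("user", "Draft a renewal email for a long-time client"),
]

visible = fit_to_window(history, budget=12)
dropped = len(history) - len(visible)
print(f"model sees {len(visible)} of {len(history)} messages; {dropped} dropped")
```

In this toy run the message that gets dropped is the one that set the brand voice. The transcript on screen still shows it, but the model no longer does.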

This happens more often than most users realize. Chat history exists, but it's not a knowledge base — you can scroll back through old conversations, but the AI can't, unless you copy the relevant parts into a new session. Shared chats are one-way: you can send someone a link, but they can't collaborate inside the same context. And when you start a new session tomorrow to continue today's work, you're starting over.

These are the tool-level symptoms of the structural problem. Stage 1 isn't just limited by what the AI doesn't know about your business. It's limited by how much it can hold in mind at once, and how little of that carries forward.

Where Stage 1 remains the right choice

One thing worth saying clearly: Stage 1 is not wrong to use. For many tasks, it's the appropriate tool. For one-off research, for drafting a document type you rarely need, for answering a question that needs no organizational context, Stage 1 is efficient and sufficient. There's no reason to invest in Stage 2 for work that doesn't need it.

The mistake is treating Stage 1 as a permanent operating mode for work that requires organizational knowledge. When your AI is helping you write customer communications, develop internal strategy, or support decisions that depend on knowing who you are and how you operate — that's when Stage 1's limitations start compounding rather than just limiting.

The question isn't whether Stage 1 is good or bad. It's whether the AI-supported work your organization cares most about actually needs Stage 2.

Stage 2 — Context: When AI Starts Knowing Your Business

Stage 2 begins with a realization: the AI doesn't need to be smarter. It needs to know more about you.

Context — in the AI sense — is the collection of business-specific knowledge that the system carries into every interaction. Not generic knowledge about your industry. Knowledge about your organization: how you position yourself, how you communicate with customers, what language you use internally versus externally, what decisions you've made and why, what your priorities are right now, what your team structure looks like.

When Stage 2 is working, every AI session starts informed rather than blank. You don't brief the AI on who you are — it already knows. You don't explain your brand voice — it's embedded. You don't remind it of decisions made last month — they're available. The first useful output comes faster, fits better, and requires less editing.

What context actually looks like

Most organizations' instinct about Stage 2 is that it's about tools — that there's a product that provides this capability. That's partly true, but it misses the harder part. Context isn't just a setting you configure; it's an accumulation of organizational knowledge that has to be deliberately captured.

A well-built context layer includes things like your company's voice and communication standards — the actual language you use, not generic "professional but approachable" descriptors. It includes your customer segments, their specific pain points, and the language they use versus the language your team uses. Your product or service positioning against the alternatives your prospects actually consider. Your decision history: what you've tried, what worked, what didn't, and the reasoning that drove each choice. Your organizational priorities — what matters this quarter, what's been deprioritized, where the real constraints are.

None of that exists in a generic AI system. All of it has to be built — either as structured documents, as configured knowledge bases, or as session templates that establish the operating environment before work begins. The work is organizational. The tools are secondary.
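One way to picture a context layer is as structured data that gets rendered into the opening of every session. The sketch below assumes that shape; `OrgContext`, `build_prompt`, and all of the sample values are hypothetical, and a real context layer would more likely live in documents or a knowledge base than in code.

```python
from dataclasses import dataclass, field


@dataclass
class OrgContext:
    voice: str
    segments: dict[str, str]  # segment name -> main pain point
    positioning: str
    decisions: list[str] = field(default_factory=list)  # what was tried, and why
    priorities: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Turn the structured context into a preamble for any session."""
        lines = [
            f"Voice: {self.voice}",
            f"Positioning: {self.positioning}",
            "Customer segments:",
        ]
        lines += [f"- {name}: {pain}" for name, pain in self.segments.items()]
        lines.append("Decision history:")
        lines += [f"- {decision}" for decision in self.decisions]
        lines.append("Current priorities:")
        lines += [f"- {priority}" for priority in self.priorities]
        return "\n".join(lines)


def build_prompt(context: OrgContext, request: str) -> str:
    # The request itself is unchanged; only the knowledge around it differs.
    return f"{context.render()}\n\nRequest: {request}"


# Hypothetical sample values, for illustration only.
context = OrgContext(
    voice="Plain, direct, no jargon",
    segments={"Regional clinics": "scheduling staff are overloaded"},
    positioning="Faster onboarding than the large suites",
    decisions=["Dropped per-seat pricing after a pilot because buyers wanted flat tiers"],
    priorities=["Renewals this quarter"],
)
prompt = build_prompt(context, "Draft a renewal email")
print(prompt)
```

The code is the easy part. Filling in those fields with language your organization actually uses is the work.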

The compound effect

Here's what most organizations discover when they move to Stage 2: the quality improvement isn't additive. It's multiplicative.

Context doesn't just make individual interactions better — it raises the floor for every interaction. A Stage 1 prompt that produced a mediocre result produces a substantially better result with Stage 2 context, without changing the prompt at all. The AI's underlying capability hasn't changed. The knowledge available to apply that capability has.

More importantly, the context you build at Stage 2 compounds over time. Every structured document you add, every decision you record, every piece of organizational knowledge you encode — all of it is available in every future interaction. The investment gets better returns as it grows. An AI system with six months of accumulated context is substantially more useful than one with six weeks, not because the AI improved, but because the foundation you built did.

This is the core competitive mechanic that most organizations building "AI strategy" are missing. They're optimizing prompts. They should be building context.

Why most organizations skip this stage

Stage 2 is less visible than Stage 1 and less exciting than Stage 3. It doesn't produce demos. It doesn't generate all-hands content about your AI transformation. It looks like documentation work, knowledge management work, information architecture work — categories that organizations have historically underfunded and undervalued.

The result: most organizations jump from Stage 1 directly to Stage 3 without building the context layer that Stage 3 requires to function well. They implement agents and workflows on top of systems that don't know who they are or how they operate. The outputs feel generic. The automation requires constant correction. The productivity gains they expected never materialize at scale.

The fix, almost always, is Stage 2. Your AI doesn't know your business, and until you change that, every stage built on top of it will underperform. The architecture for building that context layer (the decisions about what to capture, how to structure it, and how to make it available) is what determines whether the context compounds over time or just sits in a folder nobody updates.

What most organizations try first — and why it falls short

The consumer AI tools have started gesturing at Stage 2. ChatGPT has Projects and Custom GPTs. Claude has Projects. Gemini has Gems. All three let you package instructions, upload reference files, and save a configuration so you don't have to brief the AI from scratch every session. If you've used any of these, you've taken the first real step toward Stage 2 — you've told the AI who you are in a form that persists.

These features are genuinely useful. They solve part of the Stage 1 problem. But they hit a ceiling fast for organizational use, because they were designed for individuals, not businesses.

A Custom GPT or a Gem lives in one person's account. There's no shared version the whole team operates on. Two people on the same team build their own, with their own instructions and their own uploaded files, and the outputs drift. There's no governance — no way to enforce brand voice across the organization, no audit trail of what the AI was told, no way to update the context centrally when the business changes. The knowledge lives in the tool, not in the organization. When the person who built it leaves, the work of building it leaves with them.

That's the difference between personal productivity and organizational capability. Stage 2 isn't one person's custom GPT. It's a shared context layer that the whole organization operates on top of — with versioning, with ownership, with deliberate maintenance, and with connections to the knowledge systems the organization already uses. Getting there usually means outgrowing the consumer tools and building the knowledge architecture explicitly.

What Stage 2 actually requires

Moving from Stage 1 to Stage 2 requires three things.

First, a decision: that your organization's knowledge is worth encoding. This sounds obvious. It isn't. Most organizations treat AI interactions as disposable — ask, receive, move on. Stage 2 requires the opposite orientation: every meaningful interaction is an opportunity to capture something your system should know permanently.

Second, a structure: somewhere for that knowledge to live in a form the AI can access. This might be a knowledge base, a context document, a set of session templates, or a more sophisticated configuration layer. The specifics depend on the tools you're using and the complexity of your organization. What matters is that it exists and that it's maintained.

Third, a habit: consistently updating and refining the context as your organization evolves. Context built once and left unchanged becomes stale. The organizations that get the most value from Stage 2 treat their context layer as a living document — something that reflects who they are right now, not who they were six months ago when they set it up.
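The habit is easier to keep when staleness is visible. As a rough sketch, assuming each piece of context records an owner and a last-reviewed date, a periodic check could flag what needs attention. `ContextEntry`, `stale_entries`, the 90-day threshold, and the sample dates are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class ContextEntry:
    name: str
    owner: str
    last_reviewed: date


def stale_entries(
    entries: list[ContextEntry], today: date, max_age_days: int = 90
) -> list[ContextEntry]:
    """Return entries that have gone longer than max_age_days without review."""
    limit = timedelta(days=max_age_days)
    return [entry for entry in entries if today - entry.last_reviewed > limit]


# Hypothetical sample data.
entries = [
    ContextEntry("Brand voice guide", "marketing", date(2025, 5, 1)),
    ContextEntry("Pricing decisions", "finance", date(2024, 12, 1)),
]

for entry in stale_entries(entries, today=date(2025, 6, 1)):
    print(f"{entry.name} (owner: {entry.owner}) is due for review")
```

The threshold matters less than the fact that every entry has an owner who gets the reminder.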

Free Assessment · 10–15 min

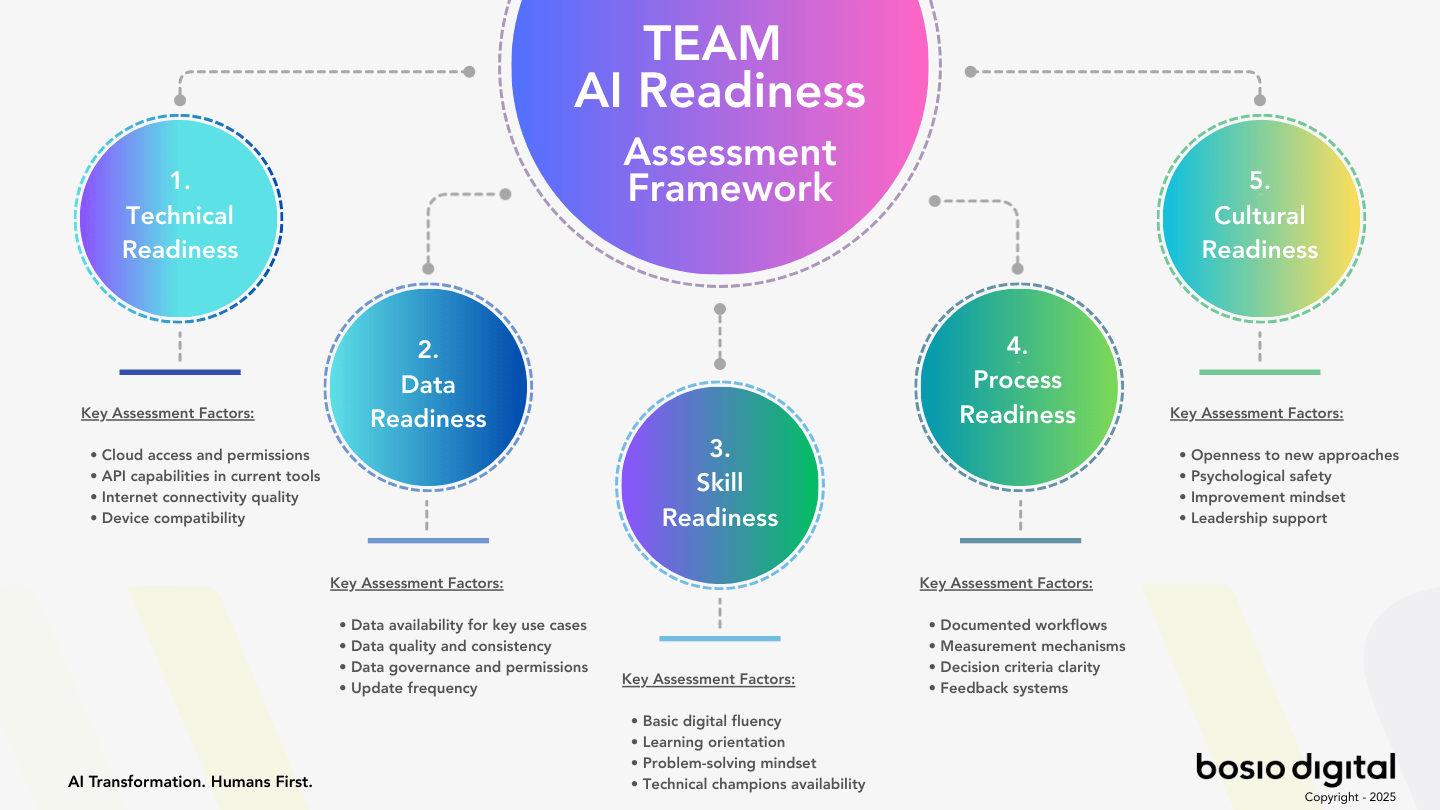

Is Your Business Actually Ready for AI?

Most businesses skip this question — and that's why AI projects stall. The TEAM Assessment scores your readiness across five dimensions and gives you a clear, personalized action plan. No fluff.

Stage 3 — Automation: When AI Stops Waiting to Be Asked

Stage 3 is where AI transitions from a tool you use to a system that operates. Instead of responding to prompts, it executes on triggers. Instead of waiting for a question, it monitors, evaluates, and acts. Reports generate themselves. Workflows run without human initiation. Emails draft and route based on incoming context. The AI doesn't ask what to do — it already knows, because you've defined the conditions under which it should act.

This is the stage that generates the most excitement in the AI market right now. And the most disasters.

What changes at Stage 3

The surface change at Stage 3 is speed and scale: things that took human time and attention happen automatically. But the deeper change is organizational. You're no longer managing tasks — you're managing systems. The work shifts from doing to designing: deciding what should happen automatically, under what conditions, with what guardrails, and how to verify it's working.

Well-designed Stage 3 automation is genuinely transformative. Routine work that previously required constant human attention gets handled without interruption. Decision-support runs in the background rather than on-demand. The team's cognitive energy shifts from execution to judgment — exactly where it should be.

Poorly designed Stage 3 automation is fast and wrong. It executes confidently on bad information. It handles edge cases incorrectly, at scale, faster than humans can catch it. It generates remediation work that costs more than the automation saved.

The variable that determines which version you get is Stage 2.

The consumer-grade version of Stage 3

Stage 3 has its own set of consumer tools that gesture at the capability. ChatGPT has Tasks and Custom Actions. Claude has automations and tool use. Gemini has the Workspace extensions. Zapier and Make have added AI nodes that let you insert an LLM into any workflow. Microsoft Copilot and Google Workspace AI have started bundling automations directly into the productivity suite. If you've set up any of these, you've done Stage 3 in miniature for yourself — a scheduled report, an email triage rule, a workflow that drafts and routes.

The enterprise-grade versions are more ambitious: Anthropic's Model Context Protocol, custom-built agent platforms, dedicated workflow orchestrators that coordinate multiple AI steps across systems. These can do real work at real scale.

What the consumer and enterprise versions share is that the automation is only as good as the context it operates on. Set up an agent that drafts client emails without a Stage 2 brand voice layer, and it will produce drafts that sound like every other AI email in the world. Set up one on top of well-structured organizational context, and the drafts start sounding like your organization. Same technology. Different architecture underneath.

Automation amplifies what's underneath it

This is the insight missing from most discussions about AI agents and workflow automation: automation doesn't create intelligence. It amplifies whatever intelligence is already present in the system.

If you build Stage 3 on a well-developed Stage 2 foundation — a system that knows your business, your customers, your processes, your decision criteria — the automation inherits that knowledge. It acts according to organizational logic, not generic patterns. The outputs fit your context. Edge cases are handled correctly because the AI understands the domain it's operating in.

If you build Stage 3 on a Stage 1 foundation — a system that doesn't know anything specific about your organization — the automation inherits that ignorance. It acts on the best available generic logic, which is often sufficient for low-stakes tasks and insufficient for anything that requires nuance. The errors are polite, well-formatted, and wrong.

The organizations accumulating AI agents without a coherent architecture underneath them are experiencing the Stage 3 failure mode in real time. More automation, more complexity, less clarity about why the outputs don't match expectations. The problem isn't the agents. It's the foundation the agents are running on.

The governance requirement

Stage 3 introduces something Stages 1 and 2 didn't require in the same way: governance. When AI responds to your prompts, you're in the loop for every output. When AI executes autonomously, you're not. That changes the risk profile significantly.

The governance question at Stage 3 isn't just "what can go wrong" — it's "how fast can it go wrong before we notice?" Automation that misidentifies a pattern and acts on it can repeat that mistake hundreds of times before a human catches it. The speed advantage of automation is also the speed risk of automation.

Well-designed Stage 3 architecture includes explicit oversight mechanisms: monitoring that flags unexpected behavior, approval checkpoints for high-stakes decisions, clear boundaries between what the automation can decide independently and what requires human confirmation. These aren't limitations on automation's value. They're requirements for it to have value without also creating liability.
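Those three mechanisms can be sketched in a few lines. The example below is a simplified illustration under stated assumptions: `Action`, `Governor`, the 0.5 approval threshold, and the three-flag limit are hypothetical, and it assumes a risk score has already been assigned to each action upstream.

```python
from dataclasses import dataclass, field


@dataclass
class Action:
    description: str
    risk: float  # 0.0 (trivial) to 1.0 (high stakes), assigned upstream


@dataclass
class Governor:
    approval_threshold: float = 0.5  # at or above this, a human must confirm
    max_flags: int = 3               # flagged outputs tolerated before halting
    flags: int = 0
    halted: bool = False
    executed: list[str] = field(default_factory=list)
    pending_approval: list[str] = field(default_factory=list)

    def submit(self, action: Action) -> str:
        # Boundary: once halted, nothing runs until a human resets the system.
        if self.halted:
            return "halted"
        # Approval checkpoint: high-stakes actions wait for confirmation.
        if action.risk >= self.approval_threshold:
            self.pending_approval.append(action.description)
            return "needs_approval"
        self.executed.append(action.description)
        return "executed"

    def flag_unexpected(self) -> None:
        """Called by monitoring when an output looks wrong."""
        self.flags += 1
        if self.flags >= self.max_flags:
            self.halted = True


gov = Governor()
print(gov.submit(Action("File meeting notes", risk=0.1)))
print(gov.submit(Action("Send pricing change to client", risk=0.9)))
for _ in range(3):
    gov.flag_unexpected()
print(gov.submit(Action("File meeting notes", risk=0.1)))
```

The halt condition is what bounds "how fast can it go wrong": after a fixed number of flagged outputs, the automation stops instead of repeating the mistake.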

The teams doing Stage 3 well aren't the ones who've removed humans from the loop. They're the ones who've thought carefully about exactly which loop humans need to be in.

Stage 3 done right

When Stage 3 is built on a proper Stage 2 foundation with appropriate governance, the results are significant. Teams get back time previously spent on monitoring, routing, and formatting. Decisions that required research get the research done before the human enters the conversation. Routine correspondence that consumed senior attention gets handled without requiring senior attention.

The key is sequencing. Stage 3 should be an extension of Stage 2, not a replacement for it. The automation inherits the context; the context makes the automation useful. Organizations that build in this order — knowledge foundation first, automation second — consistently produce better outcomes than those that pursue automation first and try to add context later. The rearchitecting required to retrofit context onto existing automation is one of the more expensive lessons in AI implementation right now.

Stage 4 — Living Intelligence: When the System Compounds

Stage 4 is harder to name than the previous stages because it's not primarily a capability; it's a property. It's what happens when your AI system stops being a tool that does things and starts being a system that learns.

The difference between Stage 3 and Stage 4 isn't scale. An organization with fifty automated workflows isn't a Stage 4 organization. Stage 4 is when the system gets better at your specific work over time — not because the underlying AI model improved, but because the organizational knowledge encoded into the system accumulates with each interaction and becomes more refined, more accurate, and more useful.

What compounding looks like in practice

At Stage 4, every meaningful interaction contributes to a shared body of organizational intelligence. A client communication that works particularly well informs how similar communications are drafted in the future. A decision process that proved effective gets encoded as a reusable pattern. A mistake that was caught and analyzed updates the system's operating parameters so it doesn't repeat.

The result: the AI system serving your organization in month eighteen is substantially different from the one in month six — not because you upgraded the model, but because the organizational knowledge available to that model has grown, been refined, and been structured in ways that make every interaction more useful.

Most organizations have their institutional knowledge distributed across people's heads, email threads, and documents that nobody can find. Stage 4 changes that. The knowledge lives in the system — structured, accessible, available to every AI interaction from the moment it's encoded. And critically, it survives the turnover that bleeds most organizations of hard-won expertise.

The mechanism: skills, not agents

The most important concept in Stage 4 — and the one most organizations discover last — is that the path to living intelligence isn't more agents. It's skills.

In the current AI discourse, agents get most of the attention. An agent is an AI that can take sequences of actions to accomplish a goal; it provides reasoning and execution capability. Agents are powerful, and they're increasingly available in commercial AI platforms. But agents alone don't create Stage 4. An organization with forty agents and no shared knowledge base has forty separate systems, each starting from whatever it happens to know. Without a skills layer, that means generic information plus whatever context is loaded at runtime.

Skills are different. A skill is a structured unit of domain expertise — your organization's specific knowledge about how a type of work gets done, encoded in a form that any AI interaction can access and apply. Not a script. Not a template. A knowledge module that captures how your organization thinks about and approaches a specific domain: client communications, competitive analysis, financial evaluation, product decisions.

The intelligence isn't in the agent — it's in the skills the agent can draw on. A capable AI agent with access to well-designed skills produces substantially better outputs than a capable AI agent operating without them. The agent provides the reasoning capacity; the skills provide the domain expertise. Together, they produce work that is both intelligent and contextually appropriate — something neither generates alone.
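The split between agent and skills can be sketched as a shared registry that any agent draws on at task time. This is an illustration of the idea, not any platform's skills format: `Skill`, `SkillRegistry`, `agent_prompt`, and the sample guidance are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Skill:
    domain: str
    guidance: str  # how the organization approaches this kind of work


class SkillRegistry:
    """One shared library that every agent reads from."""

    def __init__(self) -> None:
        self._skills: dict[str, Skill] = {}

    def add(self, skill: Skill) -> None:
        self._skills[skill.domain] = skill

    def for_task(self, domains: list[str]) -> list[Skill]:
        # Load only the skills the task names; skip domains with no skill yet.
        return [self._skills[d] for d in domains if d in self._skills]


def agent_prompt(task: str, skills: list[Skill]) -> str:
    """The agent supplies reasoning; the skills supply domain expertise."""
    if not skills:
        return f"Task: {task}"
    expertise = "\n".join(f"[{s.domain}] {s.guidance}" for s in skills)
    return f"{expertise}\n\nTask: {task}"


registry = SkillRegistry()
registry.add(Skill("client communications", "Lead with the outcome, then the ask."))
registry.add(Skill("competitive analysis", "Compare on onboarding time, not feature count."))

task = "Write a follow-up to a stalled proposal"
print(agent_prompt(task, registry.for_task(["client communications"])))
```

Forty agents reading from one registry share what the organization knows. Forty agents with private prompts do not.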

Skills encode what would otherwise leave

What makes skills particularly powerful at Stage 4 is that they're reusable and improvable. A skill built to guide client proposal writing doesn't just inform the next proposal — it informs every proposal. And when a particular approach works especially well, that learning can be incorporated back into the skill, making every future use better.

This is the compounding that defines Stage 4. Skills accumulate organizational expertise over time. The proposal-writing skill in month twelve is better than the one in month three — not because the AI got smarter, but because the skill was refined based on what worked. The competitive analysis skill incorporates lessons from twelve months of analyses. The client communication skill reflects what the organization has learned about how its specific customers respond to specific types of messages.

Consider what this means for the knowledge retention problem that every growing organization eventually confronts. In most organizations, expertise lives in people — and when people leave, the expertise leaves with them. At Stage 4, expertise is encoded into the skills library. When someone who has developed sophisticated judgment about client communications moves on, the patterns of their judgment are preserved. When a new team member joins, they have access to accumulated organizational wisdom from their first day. The skill carries what would otherwise be lost.

This is also where the connection to knowledge bases that actually compound rather than just accumulate becomes critical. The technical architecture underneath the skills layer — how knowledge is structured, maintained, and made queryable — is what separates a system that gets better from a system that just gets bigger. The learning loops that keep the system improving are worth understanding as a design pattern in their own right, because without them, Stage 4 is a static snapshot pretending to be a living system.
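A learning loop, reduced to its simplest form, records what worked and promotes a lesson into the skill once it has been seen often enough. The sketch below assumes that shape; `VersionedSkill`, the two-observation threshold, and the sample lessons are hypothetical, and a real loop would put a human review step before promotion.

```python
from dataclasses import dataclass, field


@dataclass
class VersionedSkill:
    domain: str
    guidance: list[str]
    version: int = 1
    candidates: dict[str, int] = field(default_factory=dict)  # lesson -> times it worked

    def record_outcome(self, lesson: str, worked: bool) -> None:
        # Only approaches that worked become candidates for promotion.
        if worked:
            self.candidates[lesson] = self.candidates.get(lesson, 0) + 1

    def refine(self, min_observations: int = 2) -> list[str]:
        """Promote lessons seen often enough; return what was promoted."""
        promoted = [
            lesson
            for lesson, count in self.candidates.items()
            if count >= min_observations and lesson not in self.guidance
        ]
        if promoted:
            self.guidance.extend(promoted)
            self.version += 1
            for lesson in promoted:
                del self.candidates[lesson]
        return promoted


skill = VersionedSkill("proposal writing", ["Lead with the client's stated goal"])
skill.record_outcome("Include a one-page summary", worked=True)
skill.record_outcome("Include a one-page summary", worked=True)
skill.record_outcome("Open with company history", worked=False)

print(skill.refine())
print(skill.version, skill.guidance)
```

Without the `refine` step, outcomes pile up but the skill never changes. That is the difference between a system that gets better and one that just gets bigger.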

What Stage 4 actually looks like when it's built

The architectural picture of a working Stage 4 system has three components: an AI environment that supports persistent context and reusable skills, a knowledge layer connected to where the organization's information already lives, and a maintenance discipline that keeps both current.

For the AI environment, the options that genuinely support this pattern are narrower than the general AI market would suggest. Anthropic's Claude — through Projects, Skills, Cowork workspaces, and the Model Context Protocol — is designed around it. Claude Desktop with structured skill folders allows for domain expertise that loads on demand rather than living in a prompt. Perplexity Enterprise and its Comet agent point in a similar direction for research and synthesis work. Purpose-built enterprise agent platforms can do it too, though the build cost is significant. Most general-purpose consumer AI tools cannot — not today — even if their marketing suggests otherwise.

For the knowledge layer, the answer is almost always "connect to where the knowledge already is." Your organization's institutional memory lives in SharePoint, OneDrive, Google Drive, Dropbox, Notion, Obsidian, Confluence, or whatever combination has accumulated over the years. A well-designed Stage 4 architecture doesn't replace those systems — it connects to them, structures the knowledge that matters, and makes it available to the AI through a skills library. The knowledge systems are the substrate. The skills library is the interface between them and the AI. The distinction matters, because the organizations that try to rebuild their knowledge base inside a new AI tool end up maintaining two systems; the ones that connect their AI to the knowledge system they already run end up with one.
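The "connect, don't rebuild" pattern amounts to a thin interface over systems that already exist. In the sketch below, `KnowledgeSource`, `InMemorySource`, and `SkillsLibrary` are hypothetical names, and the in-memory source is a stand-in for a real connector, which would call the document system's own search rather than match substrings.

```python
from typing import Protocol


class KnowledgeSource(Protocol):
    name: str

    def search(self, query: str) -> list[str]: ...


class InMemorySource:
    """Stand-in for a real connector to a document system."""

    def __init__(self, name: str, documents: dict[str, str]) -> None:
        self.name = name
        self._documents = documents

    def search(self, query: str) -> list[str]:
        q = query.lower()
        return [title for title, body in self._documents.items() if q in body.lower()]


class SkillsLibrary:
    """Interface between the AI and the systems that already hold the knowledge."""

    def __init__(self, sources: list[KnowledgeSource]) -> None:
        self._sources = sources

    def lookup(self, query: str) -> list[tuple[str, str]]:
        # Return (source name, document title) pairs; the documents stay where they are.
        return [(s.name, title) for s in self._sources for title in s.search(query)]


# Hypothetical sample sources.
wiki = InMemorySource("wiki", {"Onboarding guide": "How we handle client onboarding"})
drive = InMemorySource("drive", {"Q3 plan": "Priorities for renewals and onboarding"})

library = SkillsLibrary([wiki, drive])
print(library.lookup("onboarding"))
```

Nothing is copied into the library. It only knows how to ask each system, so there is still one place where each document is maintained.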

The maintenance discipline is the least glamorous component and the one most organizations fail at. Stage 4 isn't a project with a finish date. It's an ongoing practice of capturing what the organization learns, encoding it into the right skill, and pruning what has become outdated. Organizations that treat this as operational work — with clear ownership, regular cadence, and visible priority — get the compounding. Organizations that treat it as a one-time implementation get a snapshot that decays.

Stage 4 is an architecture decision made at Stage 2

Here's the part that changes how you should think about where you are right now: Stage 4 isn't a future destination you'll arrive at eventually. It's an architectural direction that you either choose or don't choose when you build Stage 2.

Organizations that build Stage 2 with Stage 4 in mind — structuring their context layer as a skills library from the beginning, designing the knowledge architecture to be reusable and improvable — find the path to Stage 4 to be a natural progression. The foundation they laid at Stage 2 scales into Stage 4 capability without a fundamental rebuild.

Organizations that build Stage 2 as an afterthought — or skip it entirely — find that arriving at Stage 4 requires significant rearchitecting of systems they've already built and deployed. That's solvable, but it's expensive in both time and organizational attention. The AI implementation debt accumulates in ways that aren't obvious until you try to move forward and find the foundation won't support where you want to go.

The question isn't whether Stage 4 is your goal. For any organization that treats AI as a strategic asset rather than a convenience tool, Stage 4 is the direction. The question is whether you're building toward it from the beginning — or whether you're building Stage 3 infrastructure that will need to be unwound before Stage 4 is possible.

Stage 5 — What's Coming, and Why It Doesn't Change the Work Today

Here's the honest state of the forecast: much of what looks like Stage 1's central limitation is already being solved at the platform level — right now, not in eighteen months.

ChatGPT has automatic memory that carries facts across conversations, and as of 2026, actively manages priority so the memory doesn't overflow. Claude shipped a three-layer memory architecture in March 2026 — chat memory, project memory, and an API memory tool — available to every user including the free tier, with automatic synthesis of your conversations every 24 hours. Gemini has rolled out Personal Intelligence, which connects to Gmail, Photos, YouTube, and Search to personalize responses, plus a memory import feature that transfers preferences from other AI platforms. This isn't a future prediction. This is the current product surface.

The trajectory is moving faster than most organizations are planning for. The floor is rising for every AI user, automatically, without anyone building anything.

That's the part most AI commentary is focused on. It matters. It's also not the interesting part.

The interesting part is what happens to the gap between Stage 2 and Stage 4 when the floor rises. Automatic memory management means the AI remembers what you told it last week — at the individual user level. That's useful, but it's generic: the same feature, running the same way, for every user of the platform. It does not mean the AI understands your pricing strategy, your customer segmentation, your internal decision-making culture, your compliance requirements, or the twelve months of refinement your team has accumulated on how to handle a particular type of client situation. Personal memory is not institutional knowledge. Automatic context is not organizational context.

More concretely: these memory features are attached to individual accounts, not to your business. The ChatGPT memory that has learned your CEO's preferences doesn't help the marketing coordinator. The Claude chat memory that has absorbed your sales director's communication style isn't available to anyone else. That's not a failure of the tools — it's what they were designed for. They personalize AI for one user at a time. They don't encode organizational knowledge for a team, and they weren't built to.

The organizations that built Stage 4 architecture — structured skills, maintained knowledge systems, deliberate organizational context — still have all of it. Their AI systems get the platform-level memory improvements layered on top of the organizational architecture they already built. The combination compounds. The floor rises for everyone; the ceiling stays where it is, reachable only by organizations that did the architectural work.

The organizations that waited for the tools to catch up — that stayed at Stage 1 assuming the platforms would eventually handle organizational knowledge automatically — have slightly smarter Stage 1 tools than they had last year. Better than before. Not different in the ways that actually matter.

The practical implication: nothing in the current trajectory makes Stage 2 work obsolete. Stage 2 work is what makes whatever ships next actually useful to your specific organization. Every piece of organizational knowledge you encode now is infrastructure the future tools will be able to leverage. Every piece you don't encode is knowledge those tools won't know to ask about.

Start Building

Audit Your AI Maturity Stage

Paste this prompt into any AI tool to get an honest assessment of which stage you're operating at — and the specific next step to move forward.

I want you to help me assess my organization's AI maturity stage.

The four stages:
- Stage 1 (Conversation): We use AI via chat. No memory, starts from zero every session.
- Stage 2 (Context): AI knows our business: our voice, priorities, and decisions.
- Stage 3 (Automation): AI executes tasks without being asked. Workflows run on triggers.
- Stage 4 (Living Intelligence): AI system compounds organizational knowledge over time.

Ask me these questions one at a time:
1. How does your team primarily interact with AI today?
2. Does your AI know your brand voice, key clients, and recent decisions, without you re-explaining each session?
3. Are there tasks your AI handles automatically, without a human initiating them?
4. When a high-performing AI output is created, what happens to that knowledge? Does it update the system?
5. If your most experienced person left tomorrow, how much of their expertise would remain in your AI system?

After I answer all 5, give me:
- My current stage assessment (be honest: most organizations are 1–2 stages behind where they think)
- The single most important thing to build before moving to the next stage
- One specific action I can take this week

This prompt gives you the diagnostic — it won't design the architecture. If the assessment reveals Stage 1 or early Stage 2, the next question is what foundation to build first. See where you stand across all five readiness dimensions →

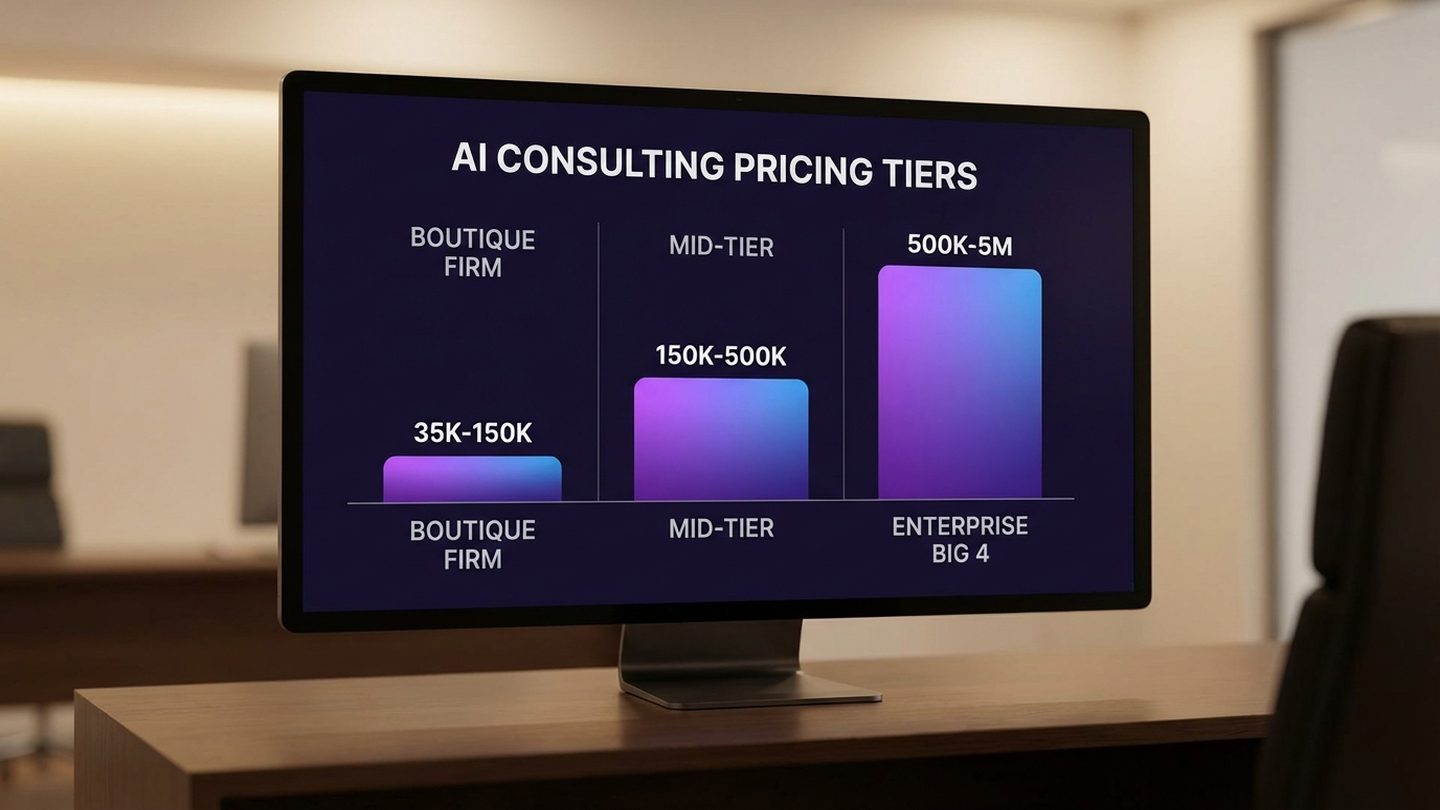

Where Most Organizations Actually Are

Here's the honest version of the maturity curve, based on what consistently shows up in organizations actively investing in AI:

Stage 1 is where the majority of AI activity happens, even in organizations that believe they're at Stage 2 or 3. This reflects how AI tools were designed and deployed, not how thoughtfully organizations are using them. ChatGPT, Copilot, and most enterprise AI tools default to Stage 1 behavior: capable, but context-free. Using them well is valuable. Using them and calling it an AI strategy is something else.

Stage 2 is where real differentiation begins. Organizations that have built a meaningful context layer, encoding their organizational knowledge in a form that AI can access and apply, see consistently better outputs with the same tools as their Stage 1 competitors. It is also the stage most organizations claim before they have actually arrived. "We trained our AI on our documents" is not Stage 2. Stage 2 is a functioning context layer that makes every AI interaction start informed, reflects current organizational priorities, and gets maintained as those priorities change.

Stage 3 is where many organizations are experimenting, often out of sequence. Automation built on Stage 1 or partial Stage 2 foundations produces the most common AI complaints: outputs that require constant correction, agents that handle standard cases and fail at edge cases, workflows that save time on the easy scenarios and create new work on the hard ones. The technology is fine. The foundation it's running on isn't.

Stage 4 is rare — not because the technology doesn't exist, but because it requires architectural decisions that most organizations haven't made yet. The organizations operating at Stage 4 aren't there because they bought a product that delivers it. They're there because they made deliberate decisions at Stage 2 about how to structure, maintain, and improve their organizational knowledge — and those decisions scaled into something that compounds.

The most common gap we encounter isn't between Stage 3 and Stage 4. It's between Stage 1 and Stage 2. Organizations that close that gap — that build a genuine context layer, treat it as infrastructure, and maintain it — find the rest of the maturity curve becomes significantly more tractable. The organizations that skip it find it again at every subsequent stage, as an explanation for why the results they expected haven't materialized.

How to Move Through the Stages Deliberately

The temptation when reading a maturity model is to immediately ask "how do I get to Stage 4?" That's the wrong question. The right question is: what's required to move from where I am to the next stage, and am I ready to invest in it?

Moving from Stage 1 to Stage 2 requires a knowledge project. Not an AI project — a knowledge project. You need to identify what your organization knows that an AI system should know, capture it in a form that can be maintained, and build the discipline of keeping it current. This is slower than buying a new tool. It produces returns that buying a new tool can't.

Moving from Stage 2 to Stage 3 requires a design project. The automation you build should express the context you've accumulated. Every workflow should be designed with the assumption that the AI knows your organization — because if it does, that knowledge can be reflected in how the workflow handles branching points, interprets ambiguous inputs, and manages situations that didn't appear in the original specification. Automation designed this way behaves differently than automation built in ignorance of context.

Moving from Stage 3 to Stage 4 requires a systems design project. You need to think about how the skills library grows, how new organizational knowledge gets incorporated, how learning from individual interactions gets structured and preserved, and who owns the ongoing maintenance of the intelligence architecture. This is organizational design work as much as it is technical work — and it requires someone with enough authority to treat knowledge management as a strategic function, not an operational afterthought.

None of these transitions are primarily technology problems. They're organizational problems with a technology component. The organizations that move through the stages fastest aren't the ones with the most AI tools. They're the ones with the clearest thinking about what their AI system needs to know, and the discipline to build it.

The Map Is Not the Territory

Every organization's AI maturity looks different in practice. Some have built deep Stage 2 context layers in specific functions while remaining at Stage 1 everywhere else. Some have Stage 3 automation that works well in one workflow and fails catastrophically in another, because the context quality varies across the business. The four stages are a framework for thinking, not a precise taxonomy.

What the framework does is give you a vocabulary for honest diagnosis. When AI outputs feel generic — when the automation keeps requiring human correction, when the productivity gains you expected haven't materialized — the question isn't "is our AI good enough?" The question is: what stage is this system actually operating at, and what would it take to move it forward?

Most organizations have the AI capability they need for Stage 4. They're using it at Stage 1. That gap is addressable. It requires organizational will and careful sequencing more than it requires new technology.

The question is whether you're ready to locate yourself honestly — and to invest in what actually comes next.

Frequently Asked Questions

What stage of AI maturity is most common for mid-market businesses right now?

Most mid-market businesses are operating at Stage 1 — using AI through chat interfaces with no persistent organizational memory — even if they have multiple AI tools deployed. Stage 2 is where meaningful differentiation begins, but most organizations that believe they've reached it have only partially built the context layer required to actually operate there. A reliable test: start a new AI session without any context-setting and see if the output could apply to any organization in your industry. If it could, you're at Stage 1.

How long does it take to move from Stage 1 to Stage 2?

For most mid-market organizations, a meaningful Stage 2 context layer takes four to eight weeks to build initially, with ongoing maintenance after that. The investment is primarily organizational — identifying what the AI needs to know about your business, capturing it in a usable structure, and building the habit of keeping it current — rather than technical. Organizations that treat it as a documentation project finish faster than those that treat it as a software deployment.

What is the difference between AI context and AI training?

AI training changes the model itself — a technically complex, expensive undertaking that is generally unnecessary for business applications. AI context provides existing models with business-specific knowledge through structured documents, instructions, and knowledge bases available at runtime. For most organizations, context is the right investment: it doesn't require custom model development, can be built and maintained without deep technical expertise, and produces measurable improvements in output quality without a model change.
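To make "available at runtime" concrete, here is a minimal Python sketch of the idea. It assumes a context layer is nothing more than a small set of maintained documents that get assembled into the instructions sent with every request. The names `ContextDoc` and `build_system_prompt` and the sample document contents are invented for illustration and do not come from any particular product.

```python
from dataclasses import dataclass


@dataclass
class ContextDoc:
    """One maintained piece of organizational knowledge (hypothetical structure)."""
    title: str
    body: str


def build_system_prompt(docs: list[ContextDoc]) -> str:
    """Assemble context documents into one instruction block.

    The model itself is untouched; only the text it receives at runtime changes.
    """
    sections = [f"## {doc.title}\n{doc.body.strip()}" for doc in docs]
    header = "You are assisting our organization. Apply the context below."
    return "\n\n".join([header, *sections])


# Illustrative documents; the content is invented for this example.
context_layer = [
    ContextDoc("Brand voice", "Plain, direct, no jargon."),
    ContextDoc("Current priorities", "Retention over new-logo growth this quarter."),
]

system_prompt = build_system_prompt(context_layer)
print(system_prompt)
```

Updating the organization's knowledge here means editing a document, not retraining anything, which is why context can be maintained without deep technical expertise.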

Can you implement Stage 3 automation before building Stage 2 context?

Technically yes — and many organizations do. The consequence is automation that executes on generic assumptions rather than organizational knowledge: outputs that require constant human correction, workflows that handle standard cases adequately and fail at edge cases, and productivity gains that plateau quickly. Building Stage 2 context before Stage 3 automation consistently produces better outcomes. The automation inherits the context, making it more accurate and requiring less oversight. The organizations retrofitting context onto existing automation report that the rearchitecting costs more than doing it in order would have.

What is an AI skill, and how is it different from an AI agent?

An AI agent is a system that takes sequences of actions to accomplish a goal — it provides reasoning and execution capability. An AI skill is a structured unit of domain expertise: your organization's specific knowledge about how a type of work should be approached, encoded in a reusable form. The key distinction is that agents are process, skills are knowledge. The most effective Stage 4 architectures combine capable agents with rich skill libraries — the agent supplies reasoning capacity, the skills supply domain expertise, and together they produce work that is both intelligent and contextually appropriate.
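One way to picture the split is the rough Python sketch below, under stated assumptions: a skill is modeled as plain data, the agent as a function that selects relevant skills and hands the work to a model. `Skill`, `run_agent`, and `echo_model` are hypothetical names, and `echo_model` is a stand-in that simply returns its prompt where a real model call would go.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Skill:
    """Knowledge: how this organization approaches one type of work."""
    name: str
    applies_to: str  # task type this skill covers
    guidance: str    # the encoded domain expertise


def run_agent(task_type: str, request: str, skills: list[Skill],
              model: Callable[[str], str]) -> str:
    """Process: pick the relevant skills, then hand the work to a model."""
    relevant = [s for s in skills if s.applies_to == task_type]
    guidance = "\n".join(f"- {s.name}: {s.guidance}" for s in relevant)
    prompt = f"Guidance:\n{guidance or '- (none)'}\n\nTask: {request}"
    return model(prompt)


def echo_model(prompt: str) -> str:
    """Stand-in for a real model call; it returns the prompt unchanged."""
    return prompt


# Invented sample skills for illustration.
library = [
    Skill("Proposal structure", "proposal", "Lead with the client's stated problem."),
    Skill("Renewal emails", "email", "Reference the last quarterly review."),
]

result = run_agent("proposal", "Draft the opening section.", library, echo_model)
print(result)
```

The agent function stays the same as the library grows; only the skills change. That separation is what lets the knowledge improve without rebuilding the process.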

How do you know if your organization is actually at Stage 2, or just using Stage 1 AI more efficiently?

The test is practical: open a new AI session with no context-setting. Ask it to draft something typical for your business — a client email, an internal briefing, a proposal section. If the output sounds like it could have come from any organization in your industry, you're at Stage 1 — you've gotten better at briefing, but you haven't built context. If the output reflects your organization's specific voice, priorities, and framing without you providing them, you're at Stage 2. A corollary check: could a new employee use your AI system on day one and produce outputs that sound like your organization? If not, the context layer isn't there.

What does "living intelligence" mean in practical terms for a business?

Living intelligence means your AI system improves at your specific work over time — not because the underlying model changes, but because the organizational knowledge available to that model accumulates and gets refined. In practice: a proposal produced in month twelve is better than one produced in month three, not because you changed your prompts, but because twelve months of effective proposals have updated the skills library the AI draws from. The competitive moat is that the system encodes what your organization has learned — in methodology, in client communication, in analytical frameworks — and that knowledge stays in the system even as the people who developed it come and go.
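The accumulation described above can be sketched in a few lines of Python. This is an illustrative assumption about how a skills library entry might absorb lessons from reviewed outputs, not a description of any specific system; `SkillRecord` and `incorporate` are hypothetical names and the sample lessons are invented.

```python
from dataclasses import dataclass, field


@dataclass
class SkillRecord:
    """A skill whose guidance accumulates lessons over time (hypothetical)."""
    name: str
    guidance: list[str] = field(default_factory=list)
    version: int = 1

    def incorporate(self, lesson: str) -> bool:
        """Add a lesson from a reviewed, effective output; skip duplicates."""
        lesson = lesson.strip()
        if not lesson or lesson in self.guidance:
            return False
        self.guidance.append(lesson)
        self.version += 1
        return True


proposals = SkillRecord("Proposals", ["Lead with the client's stated problem."])
proposals.incorporate("Put pricing after the scope section.")
proposals.incorporate("Put pricing after the scope section.")  # duplicate, ignored
print(proposals.version, proposals.guidance)
```

The model is unchanged between month three and month twelve in this picture; what differs is the guidance the record has accumulated, and that guidance stays in the system when the people who contributed it move on.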

Sources

- Your AI Doesn't Know Your Business — bosio.digital — The organizational context gap and its consequences for AI output quality.

- Context That Compounds — bosio.digital — Architecture and implementation of a Stage 2 context layer.

- From Raw Data to Living Intelligence — bosio.digital — Knowledge compilation vs. document storage; Karpathy's five-layer framework applied to business knowledge architecture.

- The Self-Improving AI — bosio.digital — Learning loop architecture and how feedback paths keep AI systems improving over time.

- AI Agent Sprawl — bosio.digital — Why accumulating agents without a coherent architecture creates Stage 3 failure modes at scale.

- Enterprise AI Adoption Report 2026 — Writer — 79% of organizations report challenges scaling AI systems; data on the gap between AI deployment and measurable value.

- Trustworthy Agents in Practice — Anthropic Research, April 2026 — Progressive trust architecture in AI agent deployment; empirical data on how humans develop appropriate oversight habits over time.

- Barry Zhang & Mahesh Murag, AI Engineer Code Summit — April 2026 — The skills-vs-agents framework; how skills encode domain expertise in reusable, improving units that outperform specialized agent architectures.