Question

What is self-improving AI and how does it work?

Quick Answer

Self-improving AI is a system architecture — not a model property — that routes execution outputs back into the system as improvements to skills, context, or both. Unlike static AI that stays exactly as capable as the day it was deployed, self-improving AI uses feedback loops to update its working instructions, expand its organizational knowledge base, and earn greater autonomy over time. A 2026 learning loop framework identifies three feedback paths that make this possible: silent skill optimization (automatic marginal improvements), a feedback database with human gate (larger changes reviewed before deployment), and context expansion on session close (organizational knowledge encoded at the end of every run). The result is a system that compounds value over time instead of delivering flat returns.

Your AI should be smarter on Friday than it was on Monday. If it isn't, you don't have a learning system — you have an expensive static tool. That distinction — between a system that compounds and one that doesn't — is the real reason enterprise AI initiatives keep failing. Not because the models aren't good enough. Because the architecture around them doesn't learn.

Your AI Is Exactly as Smart as the Day You Deployed It

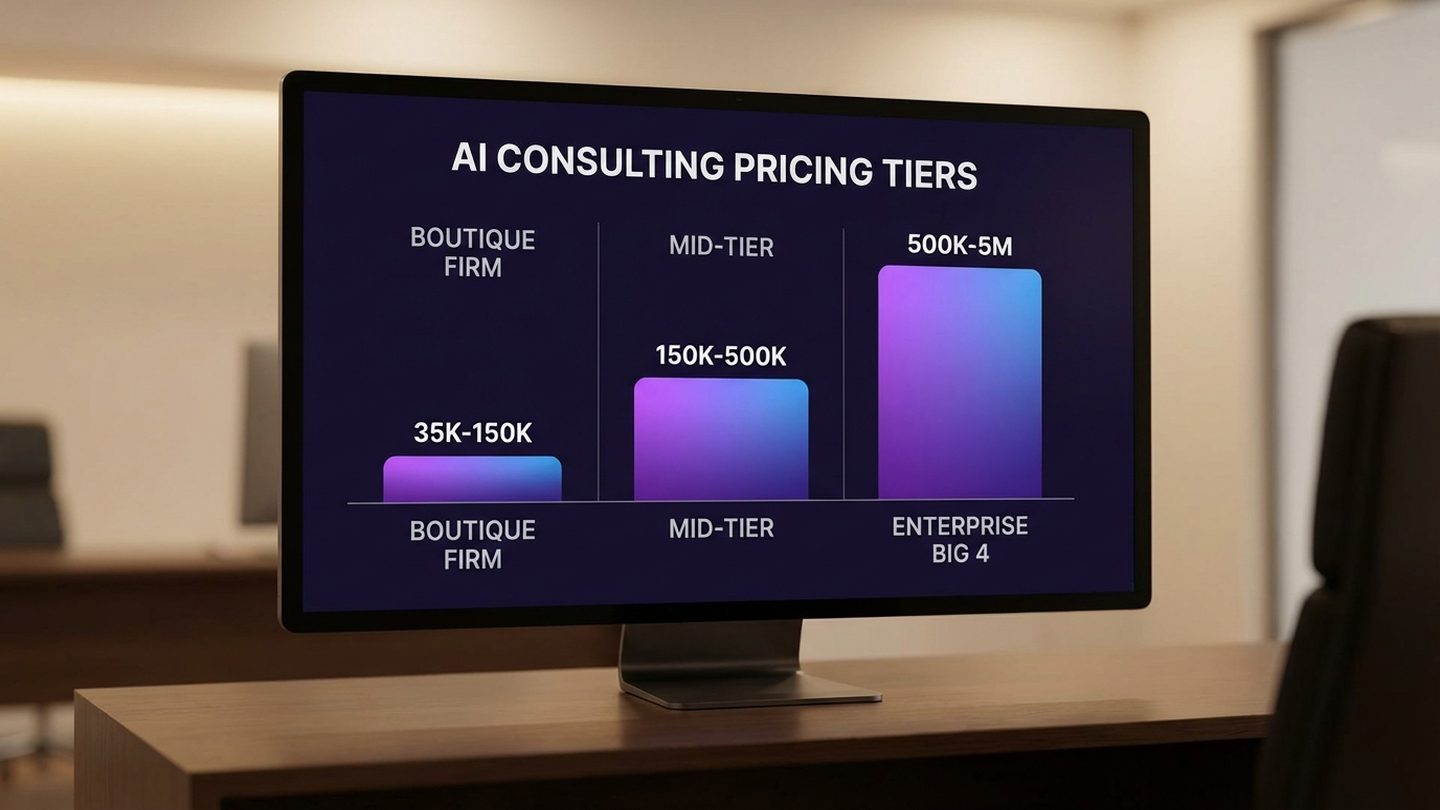

According to MIT research across 300+ AI initiatives, 95% of organizations saw zero measurable return from generative AI. Deloitte's 2025 survey of 1,854 executives found only 15% achieve significant, measurable ROI from generative AI — and only 10% from agentic AI — despite 85% having increased their investment. Those numbers don't reflect a technology problem. They reflect an architecture problem.

Most enterprise AI is deployed, runs, and stays exactly the same. No learning, no improvement. The ROI curve that should be going up is flat. But the problem is actually worse than flat. Unlike traditional software, which remains static until you explicitly change it, machine learning models exist in a state of continuous silent degradation. The world changes. Your business changes. Customer behavior shifts. The AI doesn't know any of it. It's working from a snapshot.

The data on degradation is stark. 91% of ML models degrade over time without active maintenance. EkFrazo's research on enterprise ML deployments found that performance degradation begins almost immediately after deployment — driven by data drift, concept drift, and the simple fact that the world the model was trained on is no longer the world it's operating in. The gap between what your AI knows and what your business actually needs grows every single week.

42% of companies abandoned AI initiatives in 2025 (S&P Global), up sharply from 17% the prior year. That doubling tells you something. These aren't companies that tried AI for a week and gave up — these are organizations that invested, deployed, and then pulled back. When you dig into what those projects had in common, a pattern emerges: most of them were agent sprawl situations — multiple static tools that couldn't learn, couldn't share knowledge, and required constant manual upkeep just to maintain their initial performance level.

This is the default outcome of AI without learning loops. And it's where most organizations are right now — not because their AI failed spectacularly, but because their architecture doesn't improve. The AI they have today is doing exactly what it was trained to do on day one. That's not a bug in the model. That's a design failure in the system.

The companies in Deloitte's top 20% — the ones actually seeing ROI — have one thing in common that the bottom 80% don't: their systems get smarter. Understanding why requires understanding what a learning loop actually is, and what it isn't.

Free Assessment · 10–15 min

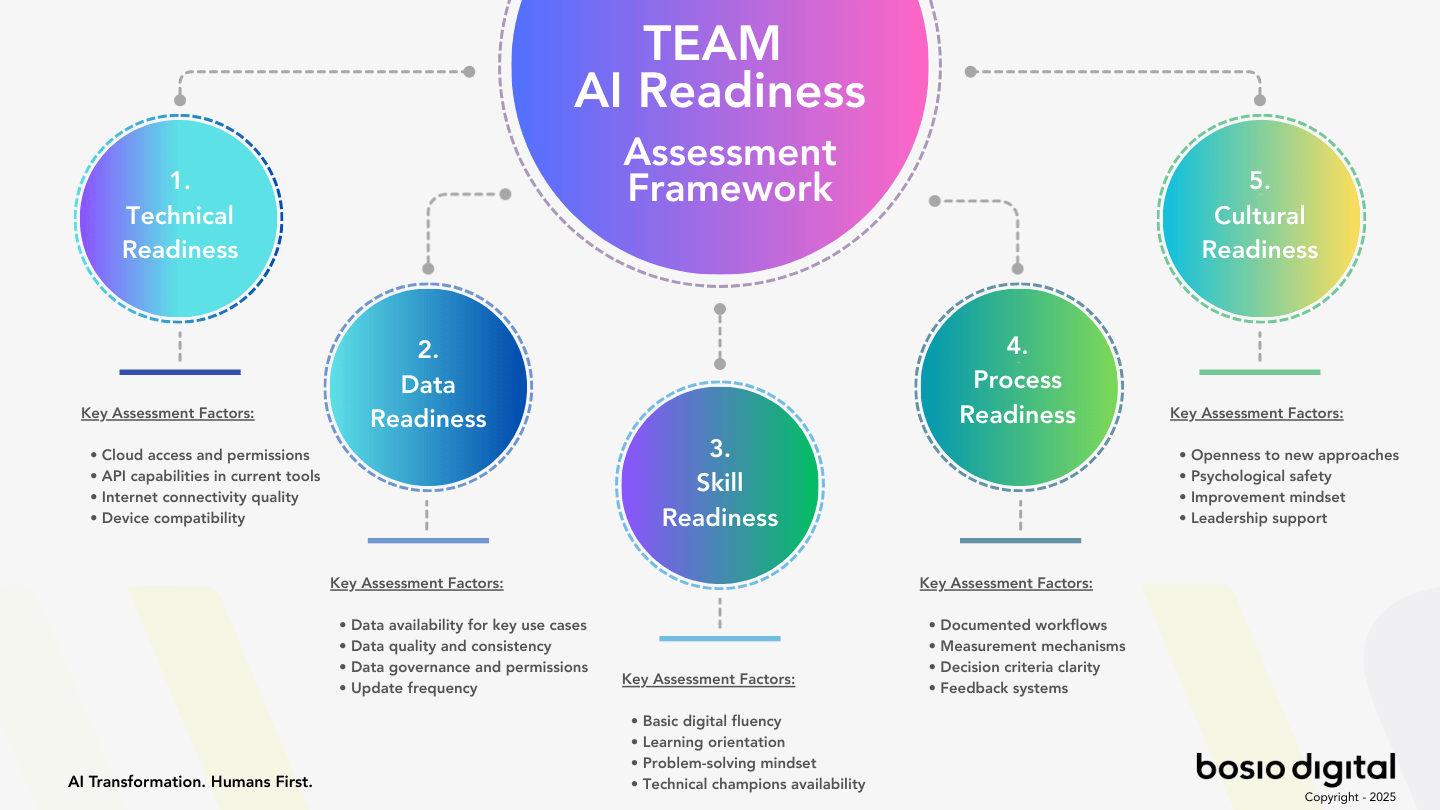

Is Your Business Actually Ready for AI?

Most businesses skip this question — and that's why AI projects stall. The TEAM Assessment scores your readiness across five dimensions and gives you a clear, personalized action plan. No fluff.

What a Learning Loop Actually Is (And What It Isn't)

A learning loop is a feedback architecture that routes execution outputs back into the system as improvements to skills, context, or both. That's the working definition. But what matters as much as the definition is the clarification: this is not model retraining. This is not AGI recursive self-improvement. The architecture we're describing operates entirely at the system layer.

This distinction matters because "self-improving AI" gets conflated with two other concepts that create confusion and, frankly, fear. The first is recursive model self-improvement — the AGI-adjacent idea that an AI system could improve its own underlying intelligence in an unbounded loop. That's a speculative research frontier, not a production architecture. The second conflation is with continual learning in the ML sense — the practice of updating model weights on new data. That's an engineering process requiring significant compute, infrastructure, and rigorous validation cycles. Neither of these is what we're talking about.

In a learning loop architecture, the model itself doesn't change. Claude remains Claude. ChatGPT remains ChatGPT. What changes are the instructions, context, and feedback routing around the model. The improvement happens at the system layer. The model is the engine; the learning loop is what makes the engine smarter about which routes to take, which tools to use, and what the organization actually needs.

The kitchen analogy is useful here. A master chef doesn't become better by getting new taste buds. They improve by refining their recipes, updating their techniques, learning from what worked last service and what didn't, and storing that accumulated judgment in a form that survives their shift. The kitchen is the system. The chef's body of earned knowledge is the context layer. The meal is the execution output. A kitchen without institutional memory produces the same meal in year five as it did in year one, regardless of how talented the chef. A kitchen with strong institutional memory gets better every service.

Most enterprise AI deployments have the chef but not the kitchen knowledge system. They have capable models running tasks without any mechanism for the lessons from those tasks to feed back into future performance. The model is being used, not trained. The sessions happen, and then they end — and everything learned in that session disappears.

The problem is structural. When your AI doesn't know your business — your specific language, your customer preferences, your internal process refinements — it can't improve on what it doesn't know happened. Every session is essentially a cold start from a knowledge perspective. The model brings its training. Your organizational context doesn't compound.

A learning loop changes this by answering a specific architectural question: after an execution completes, where does the learning go? In a static system, the answer is: nowhere. In a learning loop system, there are three distinct paths for that learning — and each one serves a different type of improvement. Understanding those paths is the difference between AI that compounds and AI that flatlines.

The Before State — 25 Agents That Couldn't Learn

A pattern we see repeatedly in enterprise AI: organizations build 25 or more specialized agents — each capable at its specific task. A proposal agent. A customer research agent. A meeting summary agent. Twenty-five distinct capabilities, each tuned for its function. On paper, impressive coverage. In practice, an architecture that couldn't learn from itself.

Here's what the 25-agent world actually looked like to operate. Every improvement required a human to identify the failure, update the instructions, and redeploy the agent. If the proposal agent was generating sections in the wrong order, someone had to notice it, rewrite the prompt, test the change, and push the update. Not once — every time the system drifted from what was needed. Process changes required manual updates across multiple agents simultaneously, because the agents didn't share a knowledge base. New capabilities required creating new agents entirely, compounding the management overhead. The agent sprawl problem isn't just about scale — it's about the maintenance debt that accumulates when each agent is an island.

Most critically, development was entirely human-dependent. The system could only get smarter when a specific human made it smarter. And when that person left, their refined workflows went with them. The institutional knowledge walked out the door.

This is the workforce crisis dimension of static AI that rarely gets discussed. 51% of employees were actively seeking new jobs in 2025. 10,000 Baby Boomers are retiring daily until 2030. US companies spend $900 billion annually replacing employees who quit — a figure that captures salary replacement costs but not the full cost of lost expertise. Synaply's research on the knowledge drain problem found that the tacit knowledge a departing employee carries — the workflow nuances, the client preferences, the hard-won process improvements — typically takes 12-24 months to rebuild in their replacement, if it's rebuilt at all.

Standard AI deployments don't capture the departing knowledge. They can automate the tasks the employee did, but they can't encode the judgment the employee had developed. When that person updates the proposal agent's instructions one last time before handing in their badge, those improvements live in a prompt file somewhere — not in a system that actively learns from every execution going forward.

Learning loop architecture solves this structurally. When organizational knowledge is continuously routed back into the system after every execution, it stops being a property of the person who did the work and becomes a property of the system itself. The knowledge doesn't walk out the door because it was never solely in someone's head — it was encoded at the system level in real time.

The reinforcing feedback loop in learning loop architecture creates the opposite dynamic from the 25-agent world. More execution generates more learning. More learning improves the instructions. Better instructions produce better results. Better results generate more use. More use generates more execution. The loop compounds upward instead of requiring constant human injection to maintain its current level.

But understanding the loop at this level of abstraction isn't enough. The practical question is: what are the specific mechanisms? How does learning actually move from execution output into system improvement in a way that's both automatic and trustworthy? The learning loop framework identifies three distinct paths — and each one solves a different part of the problem.

The Three Feedback Paths

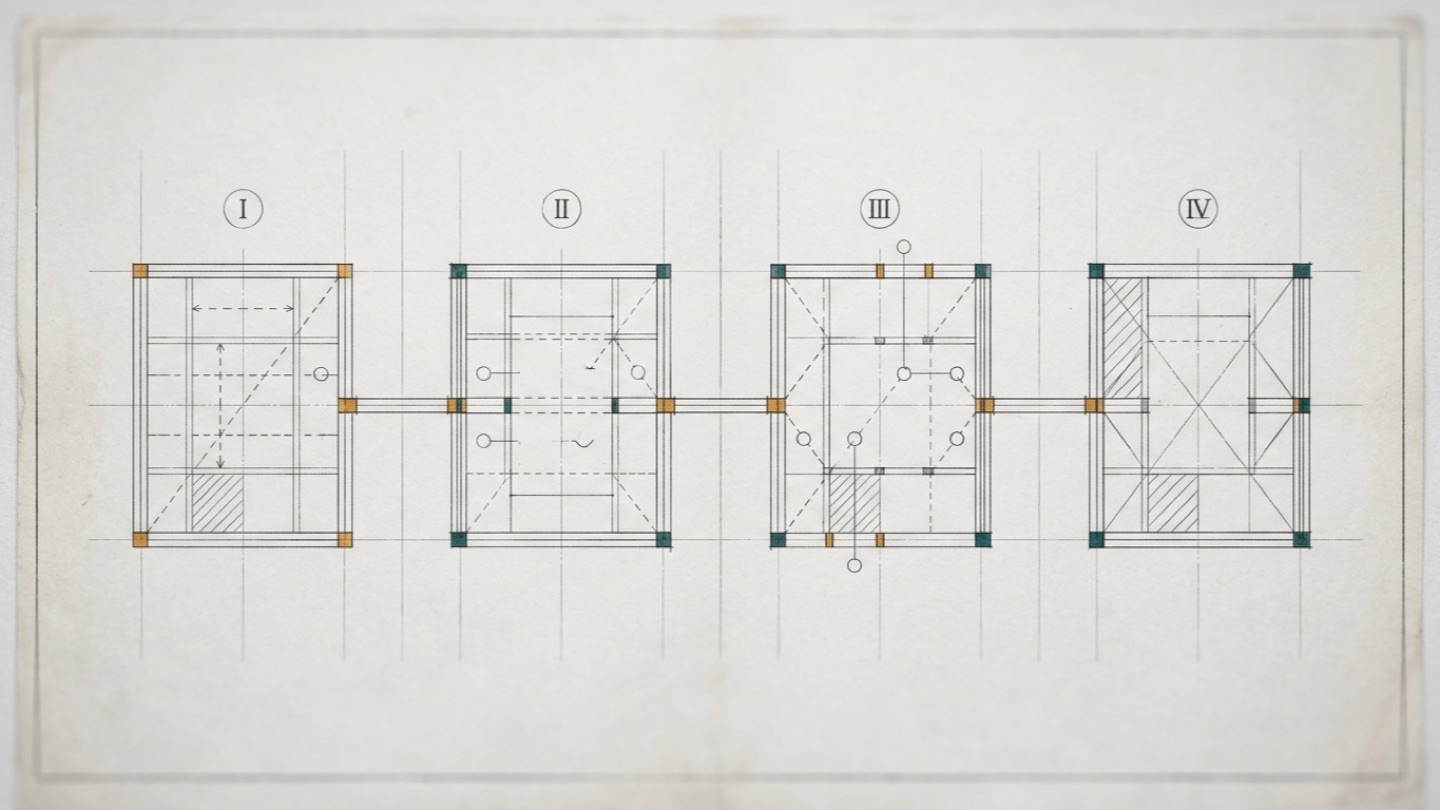

The three-path framework is the most practical architecture for production learning loops we've seen articulated. Each path handles a different type of learning at a different scope and speed. Together, they cover the full spectrum from small in-session corrections to long-term context accumulation.

Path 1 — Silent Skill Optimization

When users provide feedback about skill properties during live execution — tone is off, format is wrong, a specific rule needs to be applied consistently — the AI determines whether that learning is systemic. For unambiguous learnings, it implements three simultaneous actions: correct the current output, modify the skill instructions directly, and document the change in a learning log with a date and a single-sentence rule description.

The critical phrase is "next time, nobody needs to remember." This is the design principle that separates Path 1 from what most organizations actually do, which is: a user notices a recurring problem, mentions it in Slack, someone updates the prompt, and two weeks later the same problem resurfaces because the update was incomplete. Path 1 makes quality assurance a permanent system component rather than a human memory task. The moment a correction is confirmed as systemic, it becomes part of the skill instruction permanently — without requiring any follow-up action from anyone.

Path 1 handles the high-frequency, low-ambiguity improvements. The ones where there's no real debate about whether the learning should be applied. When a user says "always cite sources at the end, not inline" and that's clearly a format preference that applies universally to that skill, the system updates itself and moves on. No ticket. No admin queue. No forgotten follow-up.

Path 2 — Feedback Database with Human Gate

Problems that extend beyond a single skill's scope enter a central feedback system. A Feedback Logger agent runs after every execution, documenting whether issues emerged, whether information was missing, and whether optimization opportunities exist. That structured output enters a ticketing system — not a chat log, but a structured backlog of improvement candidates with context attached.

The critical rule here is human oversight: the AI doesn't independently implement structural feedback. Humans must review and approve every entry before the AI implements changes. Only after a human confirms the change does it deploy as a new skill version. This sounds like friction, but it's actually the mechanism that makes the system trustworthy at scale.

Teams that have attempted full automation in this layer discovered the same failure mode: hundreds of weekly changes flowing so fast that system changes became untraceable. When something broke, there was no clear cause. Multiple rollbacks were required just to restore stability. The human gate is not a concession to skepticism about AI — it's an engineering requirement for maintaining system integrity. You cannot have meaningful rollback capability if you don't have meaningful review.

Path 2 handles the medium-frequency, higher-stakes improvements. The ones where a reasonable person might ask whether the change is correct, or whether it has downstream consequences. Structural changes to how the system routes information, modifications that affect multiple agents, new integrations — these require a human to confirm that the proposed change actually reflects what the system should do. The AI surfaces the candidate. The human decides. The AI implements.

Path 3 — Context Expansion on Session Close

Every execution generates new context that automatically flows into appropriate databases at session close. Meeting transcripts get structured systematically — not just stored, but organized by topic, participant, and decision made. Proposals reference customer records and build on prior engagement history. Project documentation links within the living intelligence context map, so future sessions can query what was decided and why.

The power of Path 3 is invisible during any single session. It becomes visible at month three and month twelve. When creating the next proposal for the same customer, the AI doesn't start from generic best practices — it knows the prior discussions, the pricing sensitivities that came up last quarter, the specific use case they're focused on, and the format preferences the decision-maker responded well to. None of this required anyone to manually update a CRM or document a lesson learned. It happened as a byproduct of execution, automatically encoded at session close.

This is what static AI cannot replicate. The context layer that Path 3 builds is the organizational intelligence that currently lives in experienced employees' heads — and walks out the door when they leave. Path 3 doesn't eliminate the need for human expertise. It ensures that the knowledge generated by human expertise compounds in the system rather than evaporating at the end of each engagement.

Subscribe to our AI Briefing!

AI Insights That Drive Results

Join 500+ leaders getting actionable AI strategies

twice a month. No hype, just what works.

Static vs. Self-Improving — The Architecture Comparison

The difference between a static AI and a learning loop AI isn't visible on day one. Both will execute tasks. Both will produce outputs. The gap reveals itself over time — and by month six, it's significant.

| Dimension | Static AI | Learning Loop AI |

|---|---|---|

| Skill quality | Fixed at deployment | Improves with every execution |

| Organizational knowledge | What you told it at setup | Accumulates with every session |

| Improvement mechanism | Manual prompt updates | Automatic + human gate |

| Knowledge when people leave | Lost | Encoded in context layer |

| Value over time | Flat or declining | Compounding |

| ROI trajectory | Decreasing (cost/output ratio rises) | Increasing (compound returns) |

| Scaling behavior | More agents = more maintenance | More agents = more learning |

At month one, a static AI deployment often looks fine. Output quality is acceptable. The team has adapted to its quirks. The prompts are tuned from the initial setup. There's enthusiasm. At month six, the picture is different. The model is doing exactly what it was doing on day one — but your business has moved. New clients have preferences the system doesn't know. New processes have been developed the AI doesn't follow. The maintenance overhead has accumulated. Someone on the team is spending real hours each week manually updating prompts and redeploying agents.

At month twelve, the static AI's cost-per-useful-output ratio has risen meaningfully. You're not getting more from it — you're getting the same, at higher maintenance cost. Deloitte's research is clear on the timeline: AI ROI takes an average of 2-4 years with static approaches, which is 3-4 times longer than the 7-12 month standard technology payback period that boards expect. Organizations aren't getting patient — they're abandoning projects that look like they'll never deliver.

The learning loop AI follows a different curve. Month one looks similar. But at month six, the skill instructions have been updated dozens of times based on real execution feedback. The context layer has accumulated hundreds of organizational knowledge entries. The system is producing meaningfully better output on the same tasks, with fewer corrections required from the team. Month twelve looks like a different product entirely from what was deployed on day one — because it is. The instructions are better. The context is richer. The corrections are rarer. The value compounds.

Organizations with learning loops are the ones in Deloitte's top 20%. The architecture, not the model, determines which side of that divide you're on.

The Compounding Value Model

Three compounding mechanisms drive the value curve in a learning loop architecture. Understanding each one separately makes it easier to see how they reinforce each other.

Skill compounding works because every marginal improvement becomes the new baseline. A skill that starts at 70% accuracy on a complex task reaches 75% after ten executions, 82% after fifty, and 91% after two hundred — if the learning loop is closing properly. Each correction that gets embedded in the skill instruction raises the floor for every subsequent execution. The compounding happens because you're not fighting the same problem repeatedly; you're building on prior improvements. Skills that improve with each use eventually reach quality levels that would have taken years of manual prompt engineering to achieve through episodic attention.

Context compounding works because organizational knowledge in the context layer creates an ever-richer intelligence base. An agent running its 500th session has access to everything learned in the previous 499 — client preferences, internal vocabulary, process refinements, decision history. A static agent starts from scratch every time. The 500th session is identical in capability to the first. Context compounding is what makes AI actually know your business, rather than just executing tasks in a business context it doesn't understand. The measuring AI return on investment calculation looks completely different when you factor in context depth — a system with 500 sessions of accumulated context is a qualitatively different asset from a freshly deployed agent.

Trust compounding is the mechanism that doesn't get discussed enough. As the system demonstrates reliable, consistently improving performance, human oversight can be calibrated accordingly — concentrating where it matters (structural changes via the Path 2 human gate) and reducing where it's no longer necessary (routine tasks where the system has demonstrated consistent quality). The result: the system gets both smarter and faster as it matures. More autonomous where it's earned that autonomy. More supervised where the stakes justify oversight. This reinforcing cycle is what organizations mean when they talk about AI that genuinely integrates into how work gets done — rather than AI that requires babysitting.

Organizations that move from AI pilots to production deployment achieve a 1.7x revenue growth advantage — and that advantage widens over time as the systems learn (Master of Code, AI ROI Research, 200 B2B deployments). The widening is the key finding. The gap between learning loop organizations and static AI organizations isn't fixed at 1.7x. It grows, because the learning loop organizations are compounding and the static ones aren't.

One more thing worth saying directly: the major enterprise AI platforms are static by default. They execute tasks. They don't learn from them. Any improvement requires a human administrator to update system prompts or reconfigure integrations. The learning loop architecture isn't a feature you can add to these platforms through a settings menu. It's a design decision that has to be made at the architecture level, before deployment, by people who understand what they're building toward. That design decision is the difference between an AI investment that compounds and one that doesn't.

Free Assessment · 10–15 min

Is Your Business Actually Ready for AI?

Most businesses skip this question — and that's why AI projects stall. The TEAM Assessment scores your readiness across five dimensions and gives you a clear, personalized action plan. No fluff.

Our AI Operating System — Learning Loop Architecture in Production

We built our AI operating system as a learning loop architecture from the ground up — not as a design exercise, but because we needed it to work on actual client work, actual articles, actual proposals. What follows isn't aspirational. It's a description of what's running.

Skill versioning is the foundation of Path 1 in our AI operating system. Every skill update is version-tracked. The system knows what changed, when, and why. Each skill file carries a version header. When feedback during execution triggers a skill update, the change is logged with a date, a description of what changed, and the single-sentence rule that was added or modified. Rollback is always available. This matters because skill improvement is only useful if you can also roll back a change that made things worse — and you can only do that if you have a clear record of what changed and when. Without versioning, skill updates are effectively irreversible. With versioning, they're safely iterative.

The feedback queue in the skill management layer is our implementation of Path 2. Improvement candidates surface from execution — either flagged directly by the team during a session, or identified by the system when a pattern of corrections indicates a structural gap. Those candidates enter a review queue. Before any structural change deploys, a human reviews the proposed modification and confirms it should go live. Only then does the change deploy as a new skill version. This gate is non-negotiable. It's not bureaucracy — it's the mechanism that keeps the system trustworthy as it scales.

The context enrichment protocol is our implementation of Path 3, and it runs at session close. Knowledge generated during the session is tagged by source, confidence level, and recency. A running document captures what's currently in progress and what decisions were made. Client files accumulate engagement history — preferences, project context, conversation history — in a form every skill can query. The organizational knowledge base grows with every completed project, which means future work is more informed by what's already been done. A structured close-of-session protocol ensures this happens consistently — skip it, and the session's learning doesn't transfer. Run it consistently, and the context layer builds week over week.

The key differentiator — the one we think about as our most defensible IP — isn't any individual skill. It's the feedback architecture that makes the skills self-improving. The content production skill we use today is meaningfully better than the one we deployed in 2025. It understands our standards more precisely. It handles recurring patterns more naturally. It applies the specific structural rules we've refined through hundreds of executions. None of that required a manual prompt rewrite session. It accumulated through execution, correction, and the learning loop closing after each session.

The gap widens every week. That's the compounding effect in practice. A system with one year of learning loop operation isn't just incrementally better than day one — it's categorically better, because the compounding of small weekly improvements produces a qualitatively different capability level over time. This is why we believe the organizations that invest in learning loop architecture today will have a durable advantage that's genuinely difficult to close — not because they've purchased better tools, but because they've built a system that gets better with use while their competitors' systems stay static.

Building Learning Loops Into Your AI Architecture

Not every organization needs to build from scratch. But every organization needs to answer five questions before deploying AI at scale. These aren't optional diagnostic questions — they're the architectural questions that determine whether your AI investment will compound or flatline.

Question 1: Does your system get smarter with every execution, or does it require manual updates to improve? If the honest answer is "manual updates," you have a maintenance architecture, not a learning architecture. That's not disqualifying — it's a starting point. But you need to know which one you have.

Question 2: Where does organizational knowledge live when a session ends? If the answer is "in the chat history" or "it doesn't really go anywhere," you're starting from zero every session. The knowledge generated in each execution is valuable. The question is whether your architecture captures it or discards it.

Question 3: Who owns the feedback loop, and is there a human gate for structural changes? The lesson from practitioners who've tried full automation is worth learning from secondhand: full automation of structural changes creates an untraceable system. The human gate isn't a limitation — it's a requirement for trustworthy operation at scale. If nobody owns the feedback loop, the loop doesn't close.

Question 4: How do you measure system intelligence growth over time — not just output volume? Output volume is easy to measure and often misleading. The meaningful metrics are correction frequency (are users correcting the same errors month after month?), context layer growth (is your organizational knowledge base expanding?), and skill version count (how many times have your skill instructions been updated?). No updates means no learning.

Question 5: What's your skill versioning strategy, and can you roll back a change that made things worse? If you can't roll back, you can't safely experiment. And if you can't safely experiment, your learning loop will be conservative to the point of ineffectiveness. Version control for AI skills is as necessary as version control for code.

These five questions will tell you more about your AI architecture's long-term ROI potential than any benchmark or capability comparison. The question is not whether to build AI. That decision is already made. The question is whether the AI you build is getting smarter every week — or whether you'll be explaining in 18 months why your $200,000 AI implementation is doing exactly what it was doing on day one. The organizations that can answer yes to the five questions above are the ones building toward compounding returns. The ones that can't are building toward the 42% abandonment rate. The difference is architectural.

Start Building

Map Your AI Learning Loops

Copy this prompt into Claude to audit your current AI architecture for learning loops. You'll get a clear picture of where your system improves automatically — and where it's stuck.

Context: I want to audit my current AI implementation for learning loop architecture. My current setup: — [Describe your current AI tools and agents: which tools, what tasks] — [Describe how your team currently updates or improves AI workflows] — [Describe what happens to knowledge generated in AI sessions — where does it go?] Audit against three learning loop paths: Path 1 — Silent Skill Optimization: Do my AI skills/prompts improve automatically when users give feedback, or does someone have to manually update them? Path 2 — Feedback Database with Human Gate: Is there a structured system for capturing improvement opportunities, with human review before changes go live? Path 3 — Context Expansion on Close: After each session, does new organizational knowledge get captured and made available to future sessions automatically? Output: For each path: current state, gap, and highest-priority action to add this loop to my architecture.

This covers the diagnostic layer. Designing and implementing the full learning loop architecture is where we work together. See where you stand →

Frequently Asked Questions

What's the difference between a self-improving AI and an AI that retrains its model?

Model retraining changes the underlying neural network weights — it's an engineering process that requires significant compute, data, and time. Self-improving AI at the system level is entirely different: the model itself doesn't change. What changes are the instructions (skills), the contextual knowledge, and the feedback routing around the model. The improvement happens at the architecture layer, not the model layer. This is why system-level learning loops can improve continuously in production without any model engineering work.

How do learning loops work without constant human intervention?

The three-path framework answers this directly. Path 1 (silent skill optimization) handles small, unambiguous learnings automatically — the system updates itself without requiring human review. Path 2 (feedback database with human gate) handles larger structural changes: AI identifies candidates, humans approve. Path 3 (context expansion) is fully automatic — organizational knowledge generated during execution flows into the context layer without human action. Human oversight concentrates where it matters (structural changes) and removes itself from routine improvements.

What happens to organizational knowledge when someone leaves if you have learning loop AI?

In a learning loop architecture, institutional knowledge that would normally walk out the door gets continuously encoded into the context layer. Every decision made, every workflow refined, every client preference noted during execution gets captured — not in someone's head, but in the system. When that person leaves, the knowledge stays. This directly addresses what Synaply research calls the $900B annual problem: the cost of replacing employees who take their expertise with them. A context layer built on learning loops retains that expertise structurally.

How long does it take for a learning loop AI to show measurable improvement?

Silent skill optimization (Path 1) shows improvement within the first few sessions — feedback from execution directly updates skill instructions, often within the same work session. Context expansion (Path 3) compounds over weeks as the organizational knowledge base builds. Structural improvements via the feedback database (Path 2) depend on the pace of human review, typically weekly cycles. Most organizations deploying learning loop architecture see measurably better output quality within 30 days, and significant context compounding within 90 days.

Can I add learning loops to my existing AI implementation?

Partially, yes. Context expansion (Path 3) can often be retrofitted — it requires routing session outputs to a structured knowledge store, which can be added to existing workflows. Silent skill optimization is harder to add to platforms with locked prompt structures. The feedback database requires building a review queue, which can be a standalone system. The honest constraint: if your AI platform doesn't allow you to modify skill instructions directly, you're limited in what learning loops you can implement. Architecture-level learning requires architecture-level access.

What's a feedback database and how is it different from a chat history?

Chat history is a log of what happened. A feedback database is a structured system for capturing what should change. Chat history is retrospective; a feedback database is prospective. In the learning loop framework, a Feedback Logger agent runs after every execution and documents: did issues emerge, was information missing, are there optimization opportunities? That structured output enters a ticketing system where humans review and approve changes. It's the difference between a diary and a backlog.

How do I measure whether my AI system is getting smarter over time?

Four metrics worth tracking: (1) Skill version count — how many times have your skill instructions been updated? No updates = no learning. (2) Context layer growth — is your organizational knowledge base expanding? (3) Correction frequency — are users correcting the same types of errors month after month, or are they declining? Persistent corrections signal a static system. (4) Human intervention rate — in a well-functioning learning loop, the rate of human corrections on routine tasks should decrease over time as the system improves. If it doesn't, the learning loop isn't closing.

Sources

- Enterprise AI Adoption in 2026: Why 79% Face Challenges Despite High Investment — Writer, 2026

- The State of AI in 2025: Agents, Innovation, and Transformation — McKinsey & Company, November 2025

- AI ROI: The Paradox of Rising Investment and Elusive Returns — Deloitte Global, 2025

- AI ROI: Why Only 5% of Enterprises See Real Returns in 2026 — Master of Code, 2026

- $900 Billion Knowledge Drain: How AI Is Becoming the Last Line of Defense Against Workforce Exodus — Synaply, 2025

- Continual Learning in AI: What It Is and Why It Matters — Beam.ai, 2026

- How Enterprises Detect Performance Degradation in ML Models Over Time — EkFrazo, 2026

- AI ROI Analysis: Insights from 200 B2B Deployments — ResearchGate, 2025

- Wie wir unser KI-Betriebssystem aufgebaut haben — AI FIRST Podcast, March 2026

- Building Self-Improving AI Agents: Techniques in Reinforcement Learning and Continual Learning — Technology.org, March 2026

Subscribe to our AI Briefing!

AI Insights That Drive Results

Join 500+ leaders getting actionable AI strategies

twice a month. No hype, just what works.