Question

What is the difference between AI skills and AI agents?

Quick Answer

An AI agent is a system designed to execute tasks — it perceives inputs, makes decisions, takes actions. An AI skill is a structured package of domain knowledge, workflow logic, and organizational expertise that teaches an agent how to perform a specific task the way your organization does it. Agents provide capability. Skills provide knowledge. A single capable agent equipped with a library of skills consistently outperforms a fleet of specialized agents — because the differentiator was never the agent architecture. It was always the domain knowledge. Anthropic's Barry Zhang and Mahesh Murag made this case at the AI Engineer Code Summit in November 2025: stop building more agents, start building skills that make your agent extraordinary.

Two Anthropic engineers stood in front of a room full of developers in November 2025 and told them to stop building the thing every AI vendor was selling.

"Stop building agents. Build Skills."

In context — at the AI Engineer Code Summit, in front of an audience that has spent two years competing on agent count, agent autonomy, and agent benchmarks — that's a heretical sentence. It also happens to be correct.

Barry Zhang and Mahesh Murag had sixteen minutes. They used them to argue that the entire industry has been solving the wrong problem: that more agents do not mean more intelligence, that domain knowledge packaged into reusable skills outperforms specialized agents at every level of scale, and that Fortune 100 companies have already figured this out while most of the market is still racing to deploy more agents.

What follows is what they said, why it matters for any organization that depends on AI, and what it changes about how you should invest in AI for the next twelve months. The technical move is small. The architectural implication is not.

The 16 Minutes That Changed How Developers Think About AI

The AI Engineer Code Summit is not a conference where heresy goes over well. The audience is developers and engineering leaders who have been deep in the AI agent space — building, testing, deploying, debugging — for two years. Most of them work for companies whose entire AI roadmap is built around agents. Many of them are paid to ship agents.

Then two Anthropic engineers walked on stage and told them to stop.

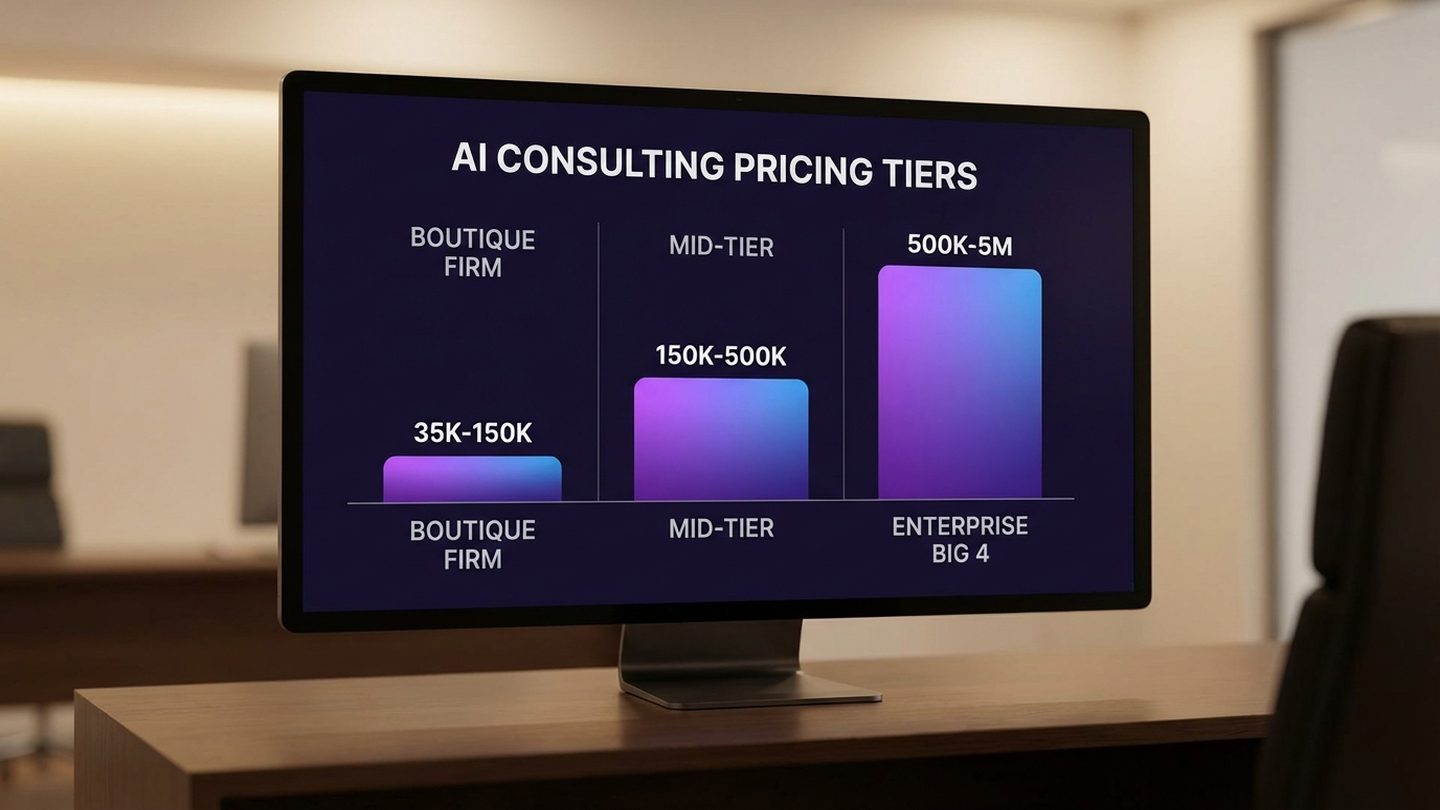

The phrase "stop building agents" lands wrong because the entire AI market is structured around agent count as a proxy for capability. Vendor pitches measure agents per platform. Enterprise buyers evaluate platforms based on how many specialized agents they support. Investor decks show agent fleets as evidence of progress. The unspoken assumption: more agents = more intelligence = more value.

Zhang and Murag spent sixteen minutes dismantling that assumption. Their argument was technically simple and strategically devastating: agents are containers for capability. Skills are containers for knowledge. The industry has been optimizing the containers when the contents are what actually matters.

A 300 IQ generalist agent will give you intelligent-looking answers about taxes. An experienced accountant agent — same model, plus a tax skill — will give you the right answer. The difference is not reasoning capability. It's domain knowledge. And domain knowledge is encoded in skills, not in agents.

What made the talk reverberate was not novelty. It was timing. Engineering teams at scale have been building skill libraries quietly for the last twelve months because their agent count strategy stopped scaling. Zhang and Murag did not introduce a new pattern. They named the one that was already winning.

What Skills Actually Are (It's Simpler Than You Think)

Here's the part that surprises people most: a skill is not code.

A skill is a folder. Inside that folder is a markdown file called SKILL.md (a markdown file is just a plain text document with light formatting — anyone who can write a Google Doc can write one), plus optional reference files. The markdown file describes when the skill should be used and what it does. The reference files contain the deeper domain knowledge — templates, examples, constraints, reasoning patterns — that the skill needs to do its job well.

That's the entire architecture.

When an agent encounters a task, it scans the available skills by their names and descriptions, the short metadata block at the top of each SKILL.md. If a skill is relevant, the agent loads its full SKILL.md. If the SKILL.md references a deeper file, the agent navigates to that file. The agent only carries what it needs for the task at hand.

To make this concrete: imagine a skill called client-proposal-writing. The folder contains a SKILL.md that describes when and how to write a client proposal in your organization's specific style, plus reference files holding your past five winning proposals, your standard pricing logic, and your service descriptions and scope language.

When someone in your firm asks the AI to draft a proposal, the agent finds client-proposal-writing, loads SKILL.md, applies the patterns, references the winning examples and pricing logic, and produces a draft that reflects how your organization actually writes proposals. Not generic. Not approximate. The specific language, the specific structure, the specific positioning.
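To show how small the structure is, here is a minimal Python sketch that writes such a folder to disk. The `name` and `description` fields at the top of SKILL.md follow Anthropic's documented format. Everything else is a hypothetical placeholder for illustration: the `create_skill` helper, the `references/` layout, the file names, and the instruction text.

```python
from pathlib import Path
import tempfile

# Hypothetical SKILL.md content. Only the name/description header is
# part of the documented format; the body is whatever your team writes.
SKILL_MD = """\
---
name: client-proposal-writing
description: Draft client proposals in our house style. Use when asked to write or revise a proposal.
---

# Client proposal writing

1. Read references/pricing-logic.md before quoting any price.
2. Mirror the structure of the examples in references/winning-proposals/.
3. Take scope wording from references/service-descriptions.md.
"""


def create_skill(root: Path) -> Path:
    """Create the skill folder, its SKILL.md, and empty reference placeholders."""
    skill_dir = root / "client-proposal-writing"
    refs = skill_dir / "references"
    (refs / "winning-proposals").mkdir(parents=True, exist_ok=True)
    (skill_dir / "SKILL.md").write_text(SKILL_MD, encoding="utf-8")
    for name in ("pricing-logic.md", "service-descriptions.md"):
        (refs / name).touch()
    return skill_dir


# Build the skill in a temporary directory and list what was created.
skill_dir = create_skill(Path(tempfile.mkdtemp()))
created = sorted(p.relative_to(skill_dir).as_posix() for p in skill_dir.rglob("*"))
print("\n".join(created))
```

In practice nobody needs a script for this. The same folder can be created by hand in a file browser, which is the point of the format.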

The radical simplicity is the point. Skills are not a new technology — they're a discipline for organizing what your organization already knows, in a form an AI can use. If your firm has ever written an onboarding guide, a sales playbook, or a "how we run client proposals" document, you've already done the editorial work of a skill. The difference is that the AI reads the skill every single time it does that kind of work — not just on day one, not just when someone remembers to consult the wiki.

The hard work is not technical. It's editorial — deciding what your skills library should contain, how each skill should be structured, and what counts as "doing this work the way our organization does it."

That's a knowledge problem. It's the problem most organizations have been trying to solve for decades through documentation, wikis, training programs, and process books. None of those tools were ever read consistently. Skills are different because the AI reads them every time.

Why One Agent + Skills Outperforms a Dozen Specialized Agents

Here's the move that takes a minute to absorb but reshapes how you think about AI architecture: a single capable agent equipped with a library of skills consistently outperforms a fleet of specialized agents.

This is counterintuitive in 2026 because the AI market has been organized around the opposite assumption — that you need a sales agent, a marketing agent, a customer service agent, a finance agent, each purpose-built and separately maintained. The pitch is that specialization equals quality.

In practice, specialization equals fragmentation.

Twelve specialized agents means twelve places where organizational context needs to be loaded, twelve places where it can drift, twelve places where it has to be updated when something changes. Each agent starts with whatever context it happens to have access to — usually generic, occasionally tuned, rarely shared. When something works particularly well in one agent, that learning does not transfer to the others. Each agent gets better, or worse, on its own trajectory. The fleet is twelve separate AI systems pretending to be one. We've covered the consequences of this pattern in detail in AI Agent Sprawl — the short version is that more agents without a coherent architecture makes the organization less intelligent, not more.

Skills work differently. A skill encodes domain knowledge once and makes it accessible to whatever agent needs it. The client-proposal-writing skill is the same skill whether your generalist agent uses it for a sales draft, a marketing case study, or an internal capability deck. When you refine the skill — better examples, sharper reasoning, updated pricing logic — every future use of that skill benefits. Improvements compound across uses, not just within an agent.

The architectural mechanism that makes this work is called progressive disclosure. The agent does not load every skill into its working memory at the start of a task. Instead:

- It scans skill names and descriptions only — a single line per skill — to identify what's relevant.

- It pulls the full SKILL.md for any skill that matches the current task.

- It navigates to deeper reference files only when the SKILL.md tells it to.

The agent carries what it needs and very little else. Because only names and descriptions are held up front, each additional skill costs a short entry of context, not a full document. An organization with a hundred skills works much the same as an organization with five: the agent reads in full only the skills relevant to what it's doing right now.
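The three loading levels above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not how any particular agent implements it: the function names are hypothetical, the frontmatter parser handles only simple `key: value` lines, and the word-overlap `select` function is a crude stand-in for the relevance judgment a real model would make.

```python
from __future__ import annotations

from dataclasses import dataclass
from pathlib import Path
from typing import Optional
import tempfile


@dataclass
class SkillSummary:
    name: str
    description: str
    path: Path  # location of the skill's SKILL.md


def read_frontmatter(skill_md: Path) -> dict:
    """Parse simple 'key: value' lines between the leading '---' markers."""
    lines = skill_md.read_text(encoding="utf-8").splitlines()
    meta = {}
    if lines and lines[0].strip() == "---":
        for line in lines[1:]:
            if line.strip() == "---":
                break
            key, _, value = line.partition(":")
            meta[key.strip()] = value.strip()
    return meta


def scan(library: Path) -> list[SkillSummary]:
    """Level 1: collect only the name and description of every skill."""
    summaries = []
    for skill_md in sorted(library.glob("*/SKILL.md")):
        meta = read_frontmatter(skill_md)
        summaries.append(SkillSummary(meta.get("name", skill_md.parent.name),
                                      meta.get("description", ""), skill_md))
    return summaries


def select(task: str, summaries: list[SkillSummary]) -> Optional[SkillSummary]:
    """Stand-in for the model's relevance judgment: naive word overlap."""
    words = set(task.lower().split())

    def score(s: SkillSummary) -> int:
        return len(words & set(s.description.lower().split()))

    best = max(summaries, key=score, default=None)
    return best if best is not None and score(best) > 0 else None


def load_body(summary: SkillSummary) -> str:
    """Level 2: read the full SKILL.md, only for the selected skill."""
    return summary.path.read_text(encoding="utf-8")


def load_reference(summary: SkillSummary, relative_path: str) -> str:
    """Level 3: read a reference file only when SKILL.md points to it."""
    return (summary.path.parent / relative_path).read_text(encoding="utf-8")


# Demo library with two hypothetical skills.
library = Path(tempfile.mkdtemp())
for name, desc in [
    ("client-proposal-writing", "Draft a client proposal in house style"),
    ("expense-categorization", "Categorize expenses for monthly financial reports"),
]:
    folder = library / name
    folder.mkdir()
    (folder / "SKILL.md").write_text(
        f"---\nname: {name}\ndescription: {desc}\n---\nSee pricing.md for details.\n",
        encoding="utf-8")
    (folder / "pricing.md").write_text("Placeholder reference content.\n", encoding="utf-8")

summaries = scan(library)
chosen = select("draft a proposal for a new client", summaries)
print(chosen.name if chosen else "no matching skill")
```

Only `scan` touches every skill, and it reads just the header of each file. The full body and the reference files are read for the one skill that was selected.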

"But isn't the answer both?"

The most common reaction to the skills-first argument is a softening one: "the answer is both." Yes, we need agents. Yes, we need skills. Why frame it as a choice?

The framing matters because the question isn't whether you need both — every skill has to run inside an agent, and every agent benefits from skills. The question is which one you treat as the strategic asset and which you treat as the runtime. The market has spent two years investing in agents as the asset: the procurement decision, the platform decision, the integration project. Skills, when they show up at all, are tactical accessories. Zhang and Murag's reordering — and ours — is that the asset is the skills library, and the agent is the runtime that uses it. The components are the same. The investment posture is reversed. The compounding goes to the side that gets treated as the asset.

This is why skill libraries scale where agent fleets do not. Adding a new skill costs almost nothing — a folder, a markdown file. Adding a new specialized agent costs significant rebuild work. The cost curves diverge, and the organization that treats skills as the asset wins.

Free Assessment · 10–15 min

Is Your AI Strategy Built Around Agents or Skills?

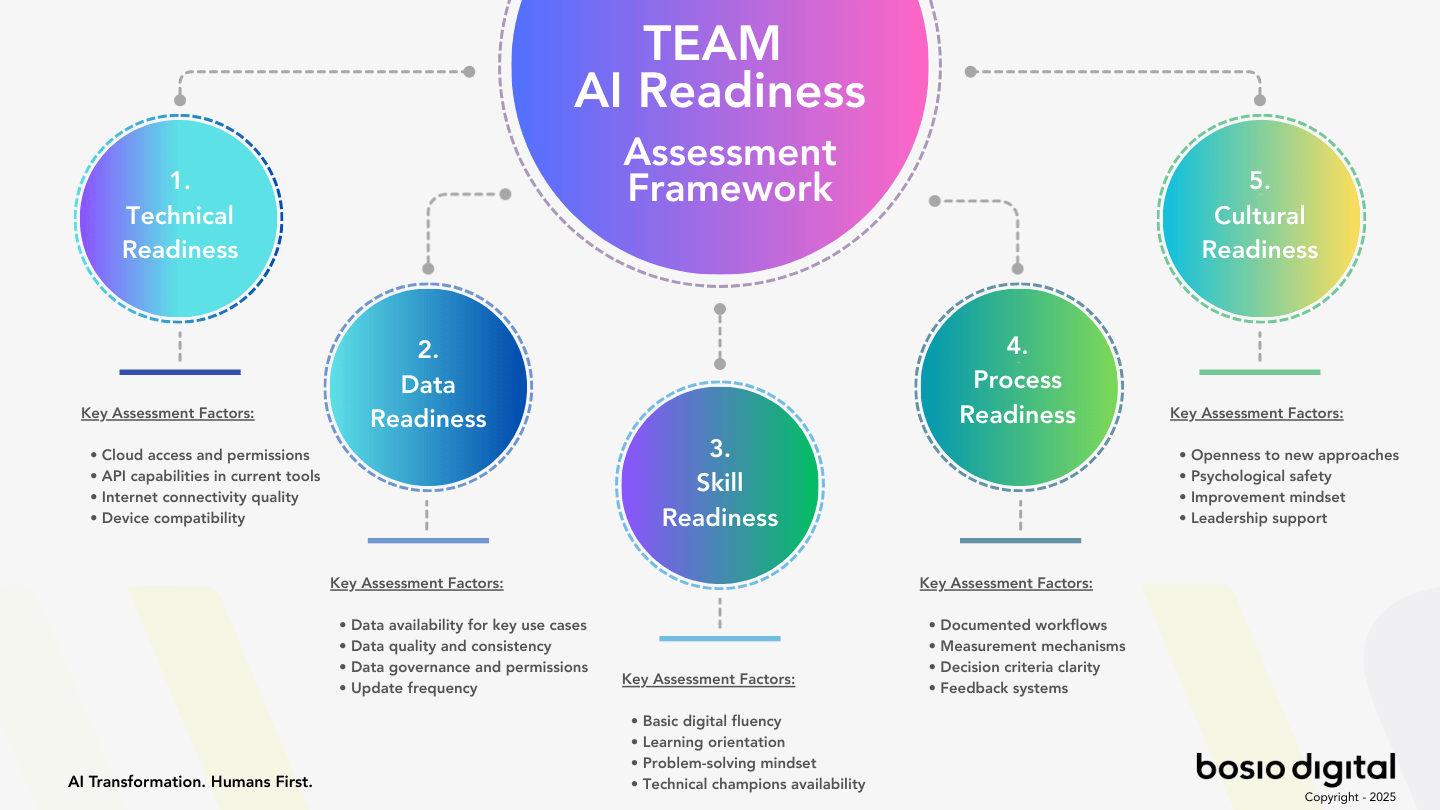

Most organizations are still investing in agents when the leverage is in skills. The bosio Architecture Assessment scores your AI readiness across five dimensions — and tells you, in ten minutes, whether your investment is compounding or fragmenting.

The Accountant Analogy: Intelligence Without Expertise Is Entertainment

Zhang and Murag used a simple analogy to make the entire argument land: who do you want doing your taxes?

Option A: a 300 IQ genius who has never read a single tax document. Maximum reasoning capability. Zero domain knowledge.

Option B: an accountant who has been doing taxes for twenty years. Average reasoning capability. Deep domain knowledge.

Almost everyone picks B. Even though A would be smarter in the abstract sense, when the stakes are real, you want the person who has done the work, who knows the regulations, who has seen the edge cases, who understands which deductions hold up under audit.

This is the choice every organization is making, mostly unconsciously, when they invest in AI.

The 300 IQ genius is the agent without skills. Powerful general capability. Excellent reasoning. No domain expertise. It can produce something that looks like a tax return — articulate, thorough, confidently formatted — and it can be wrong in ways that would never occur to a beginner, because it does not know what it does not know.

The accountant is the same agent, plus a skills library. Same general capability. The difference is that the agent now has access to encoded domain expertise: regulations, organizational pricing structures, product specifications, historical patterns, escalation rules. It produces work that reflects how the organization actually operates — not how an articulate stranger imagines it might.

The reformulation: intelligence without expertise is entertainment. Expertise packaged up is productivity.

This applies to every AI implementation decision. When you evaluate an AI tool, the question is not "how smart is the underlying model." Most current models are smart enough. The question is: how easily does this tool let me encode my organization's domain expertise into a form the AI can use? If the answer is "we don't really do that" — if the tool's value proposition is the model's intelligence in the abstract — you're being sold the genius. You probably want the accountant.

The market is currently saturated with geniuses. The competitive opportunity is in becoming the kind of organization that builds accountants — that takes the expertise locked in your most experienced people, your historical decisions, your hard-won workflow knowledge, and encodes it into skills an AI can apply consistently, at scale, every time.

What Fortune 100s Already Figured Out

One part of Zhang and Murag's talk was missed by most of the AI press and shouldn't have been: Fortune 100 companies are already running this pattern at scale.

Productivity teams at organizations with ten thousand or more developers are using Skills to standardize how code gets written across the entire engineering organization. One skill that encodes the organization's coding standards, deployed to every developer's AI environment, used in every code generation and review. The result: code quality stops being a function of which developer wrote it and becomes a function of the standards encoded in the skill. New hires produce code that fits the organization's patterns from day one, not month six.

Compliance teams use Skills to encode regulatory workflows — what to check, in what order, against which rules, with which escalation paths. Underwriting teams use Skills to capture decision logic that previously lived in three senior people's heads. Legal review teams use Skills to standardize how contracts get marked up. Customer service teams use Skills to encode how the organization handles particular situation types — refunds, escalations, edge cases — so that the front-line agent's response matches institutional standards.

Notice what's not on this list: building more agents.

The pattern across Fortune 100 deployments is consistent: one capable AI agent, or a small number, plus a growing library of skills that encode the organization's specific expertise. The skills library is the strategic asset. The agent is the runtime that uses it. This is the same architectural argument we've made about knowledge bases that compound rather than just accumulate — only now the skill format gives that knowledge a structure the AI can use directly.

This is the gap mid-market companies have not yet closed. The mid-market AI conversation in 2026 is still anchored around agent procurement — which platform, which vendor, which specialized agents to deploy first. The Fortune 100 conversation is past procurement. The procurement decision was made. The current conversation is about how to scale the skills library.

The strategic implication for any organization not yet at Fortune 100 scale: the competitive moat is not the AI model you license, the platform you choose, or the agents you deploy. Those are commodities. The moat is the body of organizational expertise you've encoded into skills — the knowledge your AI uses, that your competitors cannot easily replicate, that compounds in value every time you refine it.

Mid-market organizations that recognize this in 2026 will be operating in a different competitive category by 2027. The ones that don't will be deploying their fourth specialized agent platform and wondering why nothing has structurally changed.


The Architecture Insight Anthropic Actually Made

There's a deeper insight underneath Zhang and Murag's talk that's worth pulling out, because it changes how you should evaluate AI tooling.

Anthropic's working architecture for Claude Code — the agentic coding tool that has been used to build production software at scale — comes down to three components: a model, a runtime, and a filesystem. The model is the reasoning engine. The runtime executes actions. The filesystem stores knowledge. That's the entire pattern.

What's interesting is what doesn't change. The model can be upgraded. The runtime can be tuned. New tools can be added. But the structural pattern — model + runtime + filesystem — is constant. Every agentic AI system, when you strip it down, is a variation on this pattern.

What changes between a mediocre implementation and an extraordinary one is not the components. It's the contents of the filesystem.

Specifically: the domain knowledge you've encoded into the files the model reads when it's working. The skills. The reference materials. The accumulated patterns of how your organization approaches its work. The model is the same. The runtime is the same. The difference is the knowledge architecture you've built around it. And as we've covered in how learning loops keep that knowledge architecture improving over time, the architectural decision to make the system self-refining is what separates a snapshot from a living asset.
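A toy Python sketch makes the claim concrete. It is an illustration only, not a description of how Claude Code is implemented: the `model` function is a stub standing in for a real language model, `runtime` is a hypothetical helper, and the file name and its contents are invented. The model and runtime are identical in both runs. Only the filesystem contents differ.

```python
from pathlib import Path
import tempfile


def model(task: str, context: str) -> str:
    """Stub reasoning engine. A real system would call a language model here."""
    if context:
        return f"{task}, following: {context.strip()}"
    return f"{task}, using generic defaults"


def runtime(task: str, filesystem: Path) -> str:
    """Gather any knowledge files, hand them to the model, return the result."""
    context = "".join(
        p.read_text(encoding="utf-8") for p in sorted(filesystem.glob("*.md"))
    )
    return model(task, context)


# Same model, same runtime, two different filesystems.
empty_fs = Path(tempfile.mkdtemp())
rich_fs = Path(tempfile.mkdtemp())
(rich_fs / "pricing.md").write_text("quote fixed-fee, never hourly\n", encoding="utf-8")

generic = runtime("Draft proposal", empty_fs)
informed = runtime("Draft proposal", rich_fs)
print(generic)
print(informed)
```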

This reframes the most common AI question we hear in client conversations — "which AI tool should we use?" — almost entirely.

The tool matters much less than most evaluation processes assume. Within the current generation of capable AI platforms, the gap between the best and the merely good model is relatively narrow for most business applications. The gap that actually predicts outcomes is the knowledge architecture. An organization with a robust skills library running on a competent AI platform will dramatically outperform an organization with a marginal skills library running on the most capable AI platform.

That's not a controversial claim if you think about it from first principles. Smart people without domain knowledge produce mediocre work. The same is true of capable AI models. The knowledge architecture is doing the work. The model just executes it.

The implication for AI strategy: stop evaluating AI primarily on the basis of model capability. Evaluate it on the basis of how well the platform supports the knowledge architecture you need to build — how flexibly you can encode domain expertise into reusable, accessible, improvable skills. That's the variable that compounds. The model is just the runtime that uses what you've built.

What Skills-First Architecture Looks Like in Production

Here's the part of this article that risks sounding self-promotional, but is too central to the argument to skip:

bosio.digital has been operating our internal AI operating system — the same one we deliver to clients — on skills-first architecture since early 2026. We adopted the pattern shortly after Zhang and Murag named it in November 2025, restructured our entire internal stack around it, and have been running production workloads on it every day since. Most of the market is still arguing about agents.

We didn't read about this at a summit and write a think piece. We shipped it.

Concretely: our operating system is structured as a library of skills that encode organizational context — brand voice, client knowledge, financial logic, content production, service delivery patterns — alongside operational skills that handle workflow tasks. Every workflow we've built for ourselves and for clients is a skill. A folder. A SKILL.md. Reference files that capture the specifics. Each skill is reusable across the entire system. Each skill compounds in value as it's used and refined.

When we publish an article, the writing process is shaped by a skill that encodes how we write articles — voice, structure, citation discipline, internal linking patterns. When we send a client proposal, the proposal is drafted using a skill that encodes our positioning, pricing logic, and service architecture. When we run financial reports, those move through a skill that encodes how we categorize expenses, what we measure, what's considered material. The agent is generic. The skills are specific. The combination produces outputs that reflect how bosio.digital actually operates.

The architecture is identical to what Zhang and Murag described. The implementation followed the talk within months. If you want to see where this fits inside the broader maturity arc, where Stage 4 living intelligence is reached only when the skills library compounds, we covered that in the current state of AI for business.

This isn't said as a victory lap. It's said because it changes what we can credibly tell you about adopting skills-first architecture — and what we can show you, not just describe.

For a mid-market organization considering this shift, the primary risk is not technical. The architecture is small. The risk is editorial: deciding what your skills library should contain, how to structure it, what counts as "this is how our organization does this work," and how to maintain it without it becoming another stale documentation project. That work cannot be outsourced to a tool or a generic vendor. It has to be done by the organization, with help from someone who has done it before.

We've done it before. We did it for ourselves first. That's the offer.

What Skills-First Means for Your AI Strategy in the Next 90 Days

If skills-first architecture is the direction — and the evidence above strongly suggests it is — then the practical question becomes: what does this mean for the next ninety days of your AI investment?

Three questions, each answerable by any leader, that diagnose where you are and what to build next.

Question 1: What workflows in your organization are currently locked in someone's head?

Every organization has them. The senior person who knows exactly how to handle the difficult client situation. The operations lead who knows which approval shortcuts are safe and which aren't. The veteran salesperson who knows how to position against the toughest competitor. This expertise is the most valuable knowledge your organization owns — and the most fragile, because it leaves when the person leaves. The first skills to build are usually the ones that capture this kind of knowledge before it walks out the door.

Question 2: What expertise would become ten times more valuable if it were reusable?

Some knowledge is valuable when one person uses it. Some knowledge becomes radically more valuable when every relevant interaction can use it. Brand voice. Client communication standards. Decision frameworks. Pricing logic. Quality criteria. These are the categories where encoding into skills produces the largest leverage — because the skill applies everywhere the work happens, not just where the original expert is in the room.

Question 3: What does your AI skills library look like right now — and if you don't have one, what are you building instead?

Most organizations, asked this question honestly, will answer that they don't have one. They have AI tools. They have prompt libraries, maybe. They have a few custom GPTs or Projects floating around in individual accounts. They don't have a skills library — a centrally maintained collection of structured domain knowledge that any AI agent in the organization can use.

That's the architectural gap. The next ninety days of AI investment, for most organizations, should be about closing it.

Not by buying a new platform. Not by deploying more agents. By identifying the most valuable organizational expertise, encoding it into skills, and building the institutional discipline to maintain and expand the library over time. The technology is small. The organizational work is significant. The competitive separation between organizations that do this and organizations that don't is going to be visible by the end of 2026.

Start Building

Identify Your First Five Skills

Paste this prompt into any AI tool to identify the highest-leverage skills your organization should encode first — the ones that capture the most knowledge with the least risk of obsolescence.

I want help identifying the first skills my organization should build for an AI skills library. Ask me these questions one at a time:

1. What are the three most valuable workflows in our organization that depend on knowledge living in one or two senior people's heads?
2. What are three repetitive expert decisions our team makes (e.g. how to handle a client objection, how to structure a proposal, how to evaluate a candidate)?
3. What's a piece of organizational expertise that, if every team member could access it consistently, would 10x our quality or speed?
4. What document, template, or pattern do new hires take the longest to internalize?
5. Where do outputs from generic AI tools currently miss our voice, standards, or context?
6. If our most senior person left tomorrow, what is the single most important piece of expertise that would walk out the door?

After I answer all 6, give me:

- A prioritized list of 5 skills to build first, ranked by leverage and risk-reduction
- The structure each skill should have (SKILL.md outline + reference files needed)
- A 30-day plan to capture and validate the first three skills

This prompt gives you the diagnostic; it won't design the architecture or build the discipline. The hard part of skills-first is editorial: maintaining the library so it doesn't become stale documentation.

The time to start is before the rest of your industry does.

The Architectural Choice

Stop building agents. Build skills.

The technical translation: stop optimizing the runtime. Start encoding the knowledge.

The strategic translation: stop competing on AI procurement. Start competing on the body of organizational expertise you've made accessible to your AI.

The honest translation, the one Zhang and Murag earned by saying it plainly: stop hiring 300 IQ generalists who've never read your tax code. Start training accountants.

The architecture is small. The work is real. The advantage compounds. The window for being early is narrow.


Frequently Asked Questions

What are Claude Skills and how do they work?

Claude Skills are structured units of organizational knowledge — folders containing a SKILL.md file and optional reference files — that teach an AI agent how to perform a specific type of work the way your organization does it. When the agent encounters a relevant task, it loads SKILL.md to understand the approach and pulls additional reference files only when needed. This pattern is called progressive disclosure. The radical simplicity is the point: skills are not a new technology. They're a discipline for organizing what your organization already knows in a form an AI can actually use.

Why does a single agent with skills outperform multiple specialized agents?

Specialized agents fragment organizational knowledge across multiple separately maintained systems. Each agent starts with its own context, drifts on its own trajectory, and improvements in one don't transfer to the others. A single capable agent with a skills library has access to centrally maintained domain expertise that compounds in value over time. When a skill is refined, every future use of that skill benefits — not just one agent. Progressive disclosure ensures the agent only loads what it needs for the current task, so the library scales without performance degradation.

What is progressive disclosure in AI skills architecture?

Progressive disclosure is the loading pattern that makes skills libraries scalable. Instead of loading every skill into the agent's working memory, the agent first scans only the names and descriptions of available skills. When a skill matches the current task, the agent loads the full SKILL.md. When the SKILL.md references deeper material, the agent navigates to those reference files. The result is very little context waste: beyond the short descriptions, the agent carries only what it needs for the task at hand. This is what allows an organization to maintain a hundred-skill library without meaningful performance degradation.

How do Fortune 100 companies use AI skills at scale?

Fortune 100 productivity teams use Skills to standardize how work is performed across thousands of employees. Engineering teams encode coding standards into skills used in every code generation. Compliance teams encode regulatory workflows into skills used in every review. Underwriting teams encode decision logic that previously lived in senior people's heads. Customer service teams encode how the organization handles particular situation types. The consistent pattern: one capable AI agent (or a small number) plus a growing library of skills that encode organizational expertise — with the skills library treated as the strategic asset and the agent treated as the runtime.

What's the difference between a prompt and a skill?

A prompt is an instruction given in the moment, usually for a single interaction. A skill is structured organizational knowledge maintained as a permanent asset, accessible to any AI interaction that needs it. Prompts live in individual conversations and disappear when the conversation ends. Skills live in a library and improve over time as they're refined. A prompt asks the AI to do something. A skill teaches the AI how your organization does something. The distinction matters because prompts can't compound in value across an organization. Skills can.

How do I start building a skills library for my organization?

Begin with the workflows currently locked in individual people's heads — the institutional knowledge most at risk if those people leave. Capture each workflow as a folder with a SKILL.md describing when and how the work should be done, plus reference files containing the relevant examples, templates, and constraints. Start with five to ten skills covering the highest-leverage workflows in your business. Build the discipline of maintaining and refining them as the organization learns. The technical part is small — the organizational discipline is what determines whether the library becomes valuable or stale.
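The maintenance discipline can be partly supported by a simple check. The Python sketch below is one possible approach, not an established tool: the `audit` function is hypothetical, the 90-day staleness threshold is an arbitrary assumption, and the test for `name:` and `description:` is a crude substring match, not a real parser. It flags skill folders that lack a SKILL.md, lack the expected header fields, or have not been touched recently.

```python
from pathlib import Path
import os
import tempfile
import time


def audit(library: Path, max_age_days: int = 90) -> dict:
    """Return {skill folder name: [problems]} for folders that need attention."""
    now = time.time()
    report = {}
    for folder in sorted(p for p in library.iterdir() if p.is_dir()):
        problems = []
        skill_md = folder / "SKILL.md"
        if not skill_md.exists():
            problems.append("missing SKILL.md")
        else:
            text = skill_md.read_text(encoding="utf-8")
            # Crude substring check for the two expected header fields.
            for field in ("name:", "description:"):
                if field not in text:
                    problems.append(f"no {field[:-1]} field")
            age_days = (now - skill_md.stat().st_mtime) / 86400
            if age_days > max_age_days:
                problems.append(f"not updated in {int(age_days)} days")
        if problems:
            report[folder.name] = problems
    return report


# Demo library: one healthy skill, one empty folder, one stale skill.
library = Path(tempfile.mkdtemp())
good = library / "brand-voice"
good.mkdir()
(good / "SKILL.md").write_text(
    "---\nname: brand-voice\ndescription: How we write.\n---\n", encoding="utf-8")
(library / "sales-playbook").mkdir()
stale = library / "refund-handling"
stale.mkdir()
stale_md = stale / "SKILL.md"
stale_md.write_text(
    "---\nname: refund-handling\ndescription: Refund rules.\n---\n", encoding="utf-8")
old = time.time() - 200 * 86400  # pretend the file was last edited 200 days ago
os.utime(stale_md, (old, old))

report = audit(library)
for skill, problems in report.items():
    print(skill, "->", "; ".join(problems))
```

A check like this catches neglect, not quality. Whether a skill still reflects how the work is actually done remains an editorial judgment.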

Is skills-first architecture only for developers, or can business teams use it?

Skills-first architecture started in developer-focused contexts but applies equally — often more powerfully — to business teams. Skills can encode brand voice for marketing teams, sales playbooks for revenue teams, financial logic for finance teams, client communication standards for customer-facing teams, decision frameworks for executive teams. The format (folders with markdown files) is accessible to anyone who can write down how their work gets done. The harder problem is editorial — deciding what should be encoded and how — and that work is fundamentally about the business, not about the technology.

Sources

- Barry Zhang & Mahesh Murag, AI Engineer Code Summit — November 21, 2025 — "Don't Build Agents, Build Skills Instead." The source talk; the accountant analogy; the architecture argument.

- Extend Claude with Skills — Anthropic Documentation — Official documentation of the SKILL.md folder structure, progressive disclosure loading, and reference-file architecture.

- Claude Code Overview — Anthropic Documentation — Model + runtime + filesystem architectural pattern; agentic implementation reference.

- AI Agent Sprawl — bosio.digital — The architectural problem skills-first solves: agents accumulating without coherent organization.

- The Self-Improving AI — bosio.digital — Learning loops and how skills compound when paired with refinement discipline.

- From Raw Data to Living Intelligence — bosio.digital — Knowledge compilation as the substrate skills are built on.

- The Current State of AI for Business: A Practitioner's Map — bosio.digital — Where skills-first architecture sits inside the broader AI maturity arc.

- Trustworthy AI Agents — bosio.digital — Governance layer for agentic deployments running on skills.

- bosio.digital internal AI operating system — production reference implementation of skills-first architecture, operating since early 2026.