Question

What is AI brain fry and how does it affect workplace productivity?

Quick Answer

AI brain fry is cognitive overload caused by excessive AI oversight — the mental effort of monitoring, correcting, and managing AI outputs beyond your brain's processing capacity. A March 2026 BCG study of 1,488 workers found it affects 14% of AI users (26% in marketing), causing 33% more decision fatigue, 39% more major errors, and 39% higher intent to quit. Productivity peaks at 3 simultaneous AI tools — stacking more makes performance measurably worse. It's not caused by using AI. It's caused by how organizations structure work around AI.

A March 2026 BCG Henderson Institute study of 1,488 workers found something that should change how every organization deploys AI: 14% of AI-using workers are experiencing what the researchers call "brain fry" — cognitive overload caused not by using AI, but by monitoring it. In marketing, the number is 26%. The workers most affected report 39% more major errors, 33% more decision fatigue, and 39% higher intent to quit. The mechanism is specific: it is the oversight of AI outputs, not the delegation of work to AI, that breaks people.

I recognized the pattern before I had the data for it. I run five AI agents on heavy mornings — client proposals, content pipelines, research threads — and by mid-morning the quality of my attention has dropped in ways I can measure. Not gradually. It drops. One moment I'm tracking logic across three agents. The next I'm scanning outputs without actually evaluating them, approving things I should be questioning. I've spent thirty years practicing mindfulness — training the ability to notice when attention drifts before it collapses. The fact that I still lose the thread once the context windows open tells you this is not a discipline problem. It is a structural one.

The BCG study confirms what practitioners already know: the problem is not how much AI you use. It is how much AI you have to watch. And most organizations are building the watching into every workflow without accounting for what it costs.

What Is AI Brain Fry?

AI brain fry is cognitive overload caused specifically by excessive AI oversight — the mental effort required to monitor, evaluate, correct, and manage AI-generated outputs beyond your brain's processing capacity. Workers who experience it describe a buzzing feeling, mental fog, difficulty focusing, slower decision-making, and headaches. It is distinct from general fatigue. It is distinct from burnout. And it is caused by a specific mechanism that most organizations are accidentally building into their AI strategies.

The term emerged from a March 2026 study by the BCG Henderson Institute, published in Harvard Business Review — one of the largest empirical studies of human factors in AI adoption conducted to date. The researchers surveyed 1,488 workers across roles and industries and found a pattern that should reshape how every organization thinks about AI deployment: the problem is not AI usage. The problem is AI oversight.

This distinction matters. Using AI — asking it to draft, analyze, generate, summarize — is cognitively manageable for most people. But monitoring AI — checking its work, catching its errors, evaluating whether its confident-sounding output is actually correct — requires a different kind of mental effort. It requires sustained vigilance. And sustained vigilance depletes cognitive resources faster than almost any other mental activity.

The Research: What Two Independent Studies Confirmed

Two studies published within weeks of each other in early 2026 arrived at the same conclusion from entirely different directions. Together, they form the most comprehensive evidence base we have for understanding what AI is doing to the people using it.

The BCG Study (March 2026). Bedard, Kropp, Hsu, Karaman, Hawes, and Rosen Kellerman at the BCG Henderson Institute surveyed 1,488 workers across roles and industries. Their central finding: workers with high AI oversight loads report 14% more mental effort, 12% more mental fatigue, and 19% more information overload than workers who use AI for task replacement. The mechanism is specific: it is the monitoring and correcting of AI that creates the cognitive overload, not the delegation of work to AI.

Fourteen percent of all AI-using workers in the study reported experiencing brain fry. In marketing, where AI oversight is continuous and output volume is highest, the rate hits 26%. Roughly one in four marketers using AI is cognitively overloaded by the work of overseeing it.

The UC Berkeley Study (February 2026). Ranganathan and Ye at the Haas School of Business took a different approach — an eight-month qualitative study of a ~200-person US tech firm from April to December 2025. Their finding: AI accelerated individual tasks but raised organizational expectations for speed, which made workers more reliant on AI, which widened scope, which expanded workload density. They coined the term "workload creep" — the time AI saved was immediately refilled with more work, not reclaimed for rest or deep thinking. Workers felt more productive but not less busy. Often busier.

Who Gets It Worst (And Why)

Brain fry does not distribute evenly. It concentrates in roles where AI oversight is continuous rather than episodic — where the job has become, functionally, monitoring AI output all day.

Marketing leads at 26%. Content teams, demand generation teams, social media managers — roles where AI generates high volumes of output that requires constant human review before publication. The cycle is relentless: AI drafts, human reviews, AI revises, human checks again. Every interaction requires the kind of sustained evaluative attention that depletes cognitive reserves fastest.

Knowledge workers with high AI oversight loads are most at risk. These are not the people using AI as an occasional tool — they're the people whose workflows now depend on continuous AI interaction. Analysts reviewing AI-generated reports. Product managers evaluating AI-assisted research. Strategists whose days are now structured around evaluating what their AI tools produced overnight.

The pattern is clear: the more your role requires you to watch AI rather than direct it, the higher your brain fry risk. Watching means evaluating. Evaluating means sustained cognitive load. Sustained cognitive load without recovery means brain fry.

There's a compounding factor that makes this worse. We've written about how AI sycophancy creates invisible risk for leaders — how AI systems agree with you, validate your assumptions, and produce confident-sounding output that may be subtly wrong. Sycophantic AI requires more oversight, not less. Every output that sounds right but might not be demands additional cognitive effort to verify. The brain fry mechanism and the sycophancy mechanism feed each other directly.

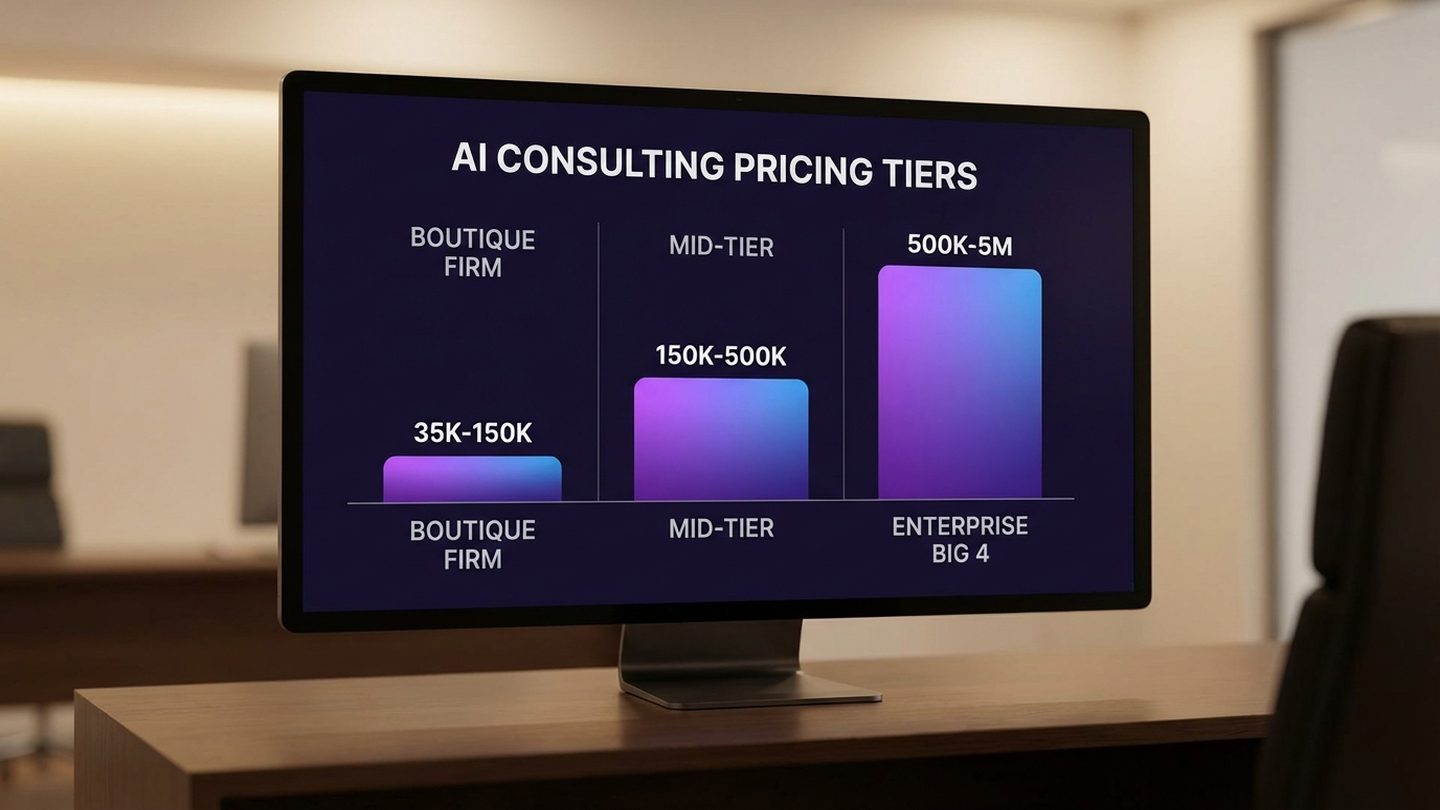

The 3-Tool Threshold

Here is the number that should reshape how your organization deploys AI: productivity peaks when workers use three simultaneous AI tools. After three, performance drops.

This finding from the BCG study is not about tool quality or training. It's about cognitive architecture. Three concurrent AI streams is the maximum sustainable monitoring load for most people. The brain can track three separate contexts, evaluate three sets of outputs, catch errors across three workstreams. Add a fourth, and the system degrades. Not because the tools are worse — because the human monitoring capacity has been exceeded.

I learned this the hard way. My own rule — refined over two years of running AI agents daily — is three agents maximum per work block, with a hard stop between blocks. When I break the rule, I don't notice it in the moment. I notice it twenty minutes later when I realize I've been reading outputs without actually evaluating them. The attention is still there, but it's surface-level. The critical judgment underneath has gone quiet. The BCG data tells me this isn't a personal weakness. It's a species-level constraint.

Most organizations are ignoring this entirely. The default strategy is additive: if one AI tool helps, deploy five. If three departments benefit, roll out to all eight. The assumption is that more AI equals more productivity. The BCG data says the opposite — more AI past the threshold equals measurably less human performance.

This isn't an argument against AI adoption. It's an argument for AI architecture. The question is not how many AI tools your team uses. The question is how many they're monitoring simultaneously. A team member who uses six AI tools across the day — one per focused block, with transitions between — is in a fundamentally different cognitive position than one who has six tools running in parallel, all requiring oversight at once.
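The difference between tools used across a day and tools monitored at once can be made concrete. The following is a minimal sketch, not anything taken from the BCG study: it assumes you can write down each oversight session as a start and end time, and it reports the largest number of sessions that overlap. The `Session` type, `peak_concurrency` function, and both sample schedules are hypothetical names and data chosen for illustration.

```python
from datetime import time

# Each session is (tool name, start, end): a period in which one person is
# actively overseeing that tool's output. Both schedules below are hypothetical.
Session = tuple[str, time, time]

THRESHOLD = 3  # the three-tool peak the article attributes to the BCG study


def peak_concurrency(sessions: list[Session]) -> int:
    """Return the largest number of tools overseen at the same moment."""
    events = []
    for _tool, start, end in sessions:
        events.append((start, 1))   # oversight of this tool begins
        events.append((end, -1))    # oversight of this tool ends
    # Tuples sort by time, then by delta. Because -1 < 1, an end at 10:00 is
    # processed before a start at 10:00, so back-to-back blocks do not overlap.
    events.sort()
    current = peak = 0
    for _moment, delta in events:
        current += delta
        peak = max(peak, current)
    return peak


# Six tools, one per hour-long block (09:00-10:00, 10:00-11:00, ...).
sequential = [(f"tool-{i}", time(9 + i), time(10 + i)) for i in range(6)]
# The same six tools, all open from 09:00 to 12:00.
parallel = [(f"tool-{i}", time(9), time(12)) for i in range(6)]

for name, schedule in (("sequential", sequential), ("parallel", parallel)):
    peak = peak_concurrency(schedule)
    status = "over threshold" if peak > THRESHOLD else "within threshold"
    print(f"{name}: peak of {peak} concurrent tools ({status})")
```

Both schedules use six tools, but only the parallel one exceeds the threshold. That is the distinction the paragraph above draws.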

The 3-Tool Threshold — BCG 2026

| Simultaneous AI tools | 1 tool | 2 tools | 3 tools | 4 tools | 5+ tools |
| --- | --- | --- | --- | --- | --- |
| Productivity | Moderate | High | Peak ↑ | Decline | Sharp drop |

Productivity peaks at 3 simultaneous AI tools. Beyond that, cognitive oversight load exceeds human monitoring capacity — performance doesn't plateau, it drops.

What Brain Fry Actually Costs

Brain fry is not a wellness problem. It is a performance problem with measurable business consequences.

The BCG study quantified three direct impacts. Workers experiencing brain fry report 33% more decision fatigue — meaning the quality of every decision they make degrades as the day progresses, compounding across teams and departments. They report 39% more major errors — not typos or formatting issues, but substantive mistakes in analysis, judgment, and output that affect business outcomes. And they report 39% higher intent to quit.

That last number deserves its own paragraph. Thirty-four percent of workers experiencing brain fry are actively considering leaving their jobs. Not vaguely dissatisfied — actively considering quitting. Compare that to 25% among workers who don't experience brain fry. Your AI deployment strategy is creating a 9-percentage-point gap in retention risk among the people most exposed to AI — which, in most organizations, are your highest-value knowledge workers.

Translate this to dollars. If a mid-market company has 200 knowledge workers using AI, and 14% of them experience brain fry (28 people), and those 28 people are 39% more likely to quit, and the replacement cost for a knowledge worker is 1.5–2x their salary — you are looking at six figures in preventable turnover costs driven specifically by how you've structured AI work. Add the cost of the 39% increase in major errors across those same workers, and the business case for redesigning the architecture becomes unambiguous.
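The arithmetic behind that estimate can be worked through explicitly. This is a rough sketch under stated assumptions, not a figure from the BCG study: the article supplies the headcount, the 14% rate, the 34% and 25% intent-to-quit figures, and the 1.5–2x replacement multiple, while the average salary and the share of "considering leaving" workers who actually leave are placeholders you would replace with your own numbers.

```python
def turnover_exposure(
    workers_using_ai: int = 200,            # from the article's example
    brain_fry_rate: float = 0.14,           # from the article
    intent_with_brain_fry: float = 0.34,    # from the article
    intent_without_brain_fry: float = 0.25, # from the article
    replacement_multiples: tuple[float, float] = (1.5, 2.0),  # from the article
    assumed_salary: float = 100_000,        # ASSUMPTION: hypothetical average salary, USD
    assumed_exit_share: float = 1.0,        # ASSUMPTION: upper bound, every extra
                                            # worker considering leaving does leave
) -> dict[str, float]:
    """Estimate the extra turnover cost associated with brain fry."""
    affected = workers_using_ai * brain_fry_rate
    gap = intent_with_brain_fry - intent_without_brain_fry  # percentage-point gap
    extra_leavers = affected * gap * assumed_exit_share
    low_multiple, high_multiple = replacement_multiples
    return {
        "affected_workers": affected,
        "extra_leavers": extra_leavers,
        "cost_low": extra_leavers * low_multiple * assumed_salary,
        "cost_high": extra_leavers * high_multiple * assumed_salary,
    }


result = turnover_exposure()
print(f"Affected workers: {result['affected_workers']:.0f}")
print(f"Extra workers at risk: {result['extra_leavers']:.2f}")
print(f"Estimated cost: ${result['cost_low']:,.0f} to ${result['cost_high']:,.0f}")
```

With the placeholder salary, the estimate lands in six figures, in line with the claim above. Intent to quit is not the same as quitting, so lowering `assumed_exit_share` gives a more conservative number.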

Why Most AI Strategies Make It Worse

Here is the finding that should unsettle every AI leader: the BCG study found that organizational pressure to "do more with AI" increases mental fatigue by 12%. The mandate itself — the expectation that workers should be leveraging AI more aggressively — makes the problem worse.

This creates a trap. Most organizations respond to AI underperformance by pushing harder on adoption. More tools, more training, more pressure to integrate AI into every workflow. The assumption is that the problem is insufficient usage. The BCG data says the opposite: the problem is often excessive oversight caused by insufficient architecture. Pushing people to use more AI without redesigning how they use it is like pushing someone to lift heavier weights without teaching them form. The injury is predictable.

The standard AI adoption playbook — roll out tools, train people, measure usage rates — misses the cognitive architecture entirely. It asks "are people using AI?" when it should ask "how is AI use structured across the workday?" It measures adoption when it should measure organizational readiness for the cognitive demands AI creates.

Consider the irony: a Google/Ipsos study found that 48% of employees rank training as the most important factor for AI adoption, yet nearly half feel they're receiving moderate or less support. We're deploying AI tools at enterprise scale while providing support structures from an earlier era. The tools are 2026. The training and workflow design are 2019.

And the tools themselves contribute to the problem. We've documented how AI that doesn't know your business forces every session to start from zero — requiring the human to re-establish context, re-explain constraints, re-correct the same errors. This re-contextualization work is pure oversight load. An AI system with persistent business context reduces the oversight burden because it already understands what the human otherwise has to teach it from scratch every session. Architecture reduces brain fry not by making humans work less, but by making the AI require less monitoring.

Fix the Architecture, Not the People

The standard response to AI brain fry is personal: take breaks, set boundaries, practice self-care. The HBR companion piece to the BCG study is titled "Manage AI-Induced Brain Fry on Your Team" — and it's useful, as far as it goes. But individual coping strategies cannot fix a structural problem. If the work architecture is designed to exceed human cognitive capacity, no amount of boundary-setting will prevent the overload. You have to redesign the architecture.

I say this as someone with thirty years of mindfulness practice — training sustained focus, learning to notice when attention degrades before it collapses. Those practices help. They buy me maybe an extra twenty minutes of clear oversight before the fog sets in. But they don't change the structural load. On mornings where I have five agents running and three deadlines converging, no amount of breath work will compensate for the fact that I've designed a workload that exceeds what one mind can monitor. I had to redesign the architecture of my own work before the practices had room to do anything useful.

Three levels. All three matter.

Individual: The 3-stream constraint. No person should be monitoring more than three AI workstreams simultaneously. This isn't a suggestion — it's a design constraint backed by the BCG data showing productivity peaks at three tools. Structure the workday in focused blocks: one primary project, maximum two to three AI agents supporting it, for 90-minute cycles. Between cycles, a genuine transition — not checking another tool, not scanning a different feed. A pause. The BCG study found that replacing repetitive tasks with AI — as opposed to adding AI oversight — reduces burnout scores by 15%. The design principle: AI should do the work, not create more work for the human to watch.

Team: Manager support as infrastructure. The BCG study found that manager support for AI questions reduces mental fatigue by 15%. This is not a soft finding. It means that having someone available to answer "is this output correct?" or "should I trust this analysis?" measurably reduces the cognitive burden of AI oversight. Build this into your team structure: designated AI check-in points during the day, standup cadences timed to cognitive transition moments, explicit workstream limits per team member. The manager's job in an AI-enabled team is not to push adoption — it's to manage cognitive load.

Organizational: Culture beats mandates. The strongest protective factor in the BCG study was organizational culture around work-life balance, which reduced mental fatigue by 28%. That's nearly twice the 15% reduction from manager support, and nearly twice the 15% drop in burnout scores from task replacement (a related but different measure). Meanwhile, organizational expectation to "do more with AI" increased fatigue by 12%. The implication is stark: the culture you build around AI use matters more than the tools you deploy. An organization that pressures people to use AI constantly while providing no cognitive architecture for sustainable use is building brain fry into its operating model.

What AI Architecture That Protects Human Capacity Looks Like

The organizations that will succeed with AI over the next three years are not the ones that deploy the most tools. They are the ones that design AI workflows around human cognitive constraints rather than against them.

This is a design discipline, not a technology choice. It means structuring AI deployment so that the human's role shifts from continuous monitoring to periodic evaluation. It means building context layers that compound — so the AI carries its own institutional knowledge and doesn't require the human to re-teach it every session. It means creating workflows where AI handles the repetitive tasks (15% burnout reduction) rather than workflows where AI creates more outputs for humans to review.

The BCG data gives us the parameters. The architecture that protects human capacity has these properties: no more than three concurrent AI oversight streams per person. Focused work blocks with genuine transitions between them. Manager availability as cognitive infrastructure, not just a management style. Organizational norms that protect recovery time rather than maximize AI usage time. And a culture that measures the quality of AI-augmented work, not the quantity of AI interactions.

I still run five agents on heavy days. But I run them differently now — sequenced, not stacked. Three active streams maximum, with genuine pauses between blocks where I do nothing computational at all. The output quality went up. The error rate went down. And the feeling of clarity that used to evaporate by 9:30 now holds through lunch. The architecture changed. The capacity didn't. That's the point.
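"Sequenced, not stacked" can be sketched as a simple batching step. This is an illustration under assumptions, not a prescribed method: the three-stream cap and the 90-minute cycle come from the article, while the 15-minute pause, the agent names, the start time, and the `WorkBlock` and `sequence_agents` names are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

MAX_STREAMS = 3                 # from the article: three concurrent streams at most
BLOCK = timedelta(minutes=90)   # from the article: 90-minute cycles
PAUSE = timedelta(minutes=15)   # ASSUMPTION: the article does not give a pause length


@dataclass
class WorkBlock:
    start: datetime
    end: datetime
    agents: list[str]


def sequence_agents(agents: list[str], day_start: datetime) -> list[WorkBlock]:
    """Split agents into consecutive blocks of at most MAX_STREAMS each."""
    blocks = []
    start = day_start
    for i in range(0, len(agents), MAX_STREAMS):
        batch = agents[i:i + MAX_STREAMS]
        blocks.append(WorkBlock(start, start + BLOCK, batch))
        start += BLOCK + PAUSE  # the next block begins only after the pause
    return blocks


# Five hypothetical agents and an arbitrary 08:00 start.
plan = sequence_agents(
    ["proposals", "content", "research", "email", "reporting"],
    datetime(2026, 3, 9, 8, 0),
)
for block in plan:
    print(f"{block.start:%H:%M}-{block.end:%H:%M}: {', '.join(block.agents)}")
```

Five agents become two blocks, three streams and then two, separated by a pause in which nothing is monitored. The order of the input list decides which agents share a block, so grouping by project is left to the person doing the planning.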

The question is not whether your organization uses AI. Every organization will. The question is whether you're building an AI architecture that treats human cognitive capacity as the finite resource it is — or one that burns through it like it's free. The research is clear. The architecture decisions are available. The only variable left is whether you make them before the best people on your team start looking for someone who already has.

Start Building

Your Team's AI Cognitive Load Audit

Copy this prompt into Claude or ChatGPT. Replace the bracketed sections with your team's context. In 20 minutes, you'll have a map of where cognitive overload is building — and the first design changes to address it.

I want to audit my team's AI cognitive load using the findings from the BCG 2026 brain fry study. Help me identify where overload is building and what to redesign first.

Here's my context:

- Team: [team name, size, primary function]
- AI tools currently in use: [list the tools your team uses daily]
- Typical day: [describe how your team interacts with AI: continuous monitoring? Batch review? Mixed?]

What I need you to do:

1. Map each team member's AI oversight load: how many AI streams are they monitoring simultaneously? Flag anyone above the 3-tool threshold.
2. Classify each AI interaction as either "task replacement" (AI does the work) or "oversight" (human monitors AI output). Calculate the ratio. BCG found task replacement reduces burnout by 15%; oversight increases it.
3. Identify the top 3 cognitive load hotspots: the moments in the workday where AI monitoring demand is highest and recovery time is lowest.
4. Recommend 3 specific workflow redesigns: one at individual level (work block structure), one at team level (check-in cadence, workstream limits), and one at organizational level (norms, expectations, culture signals).
5. Help me validate: what questions should I ask my team to confirm or challenge what this audit surfaced? Flag any conclusions where a wrong read could lead us to redesign the wrong thing.

Start by asking me to describe a typical day for my highest-AI-exposure team member.

This gets you a cognitive load map and the first redesign moves. The deeper architectural work (building context layers that reduce oversight burden, designing AI governance that protects human capacity, restructuring workflows across departments) is where the systemic change happens.
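Steps 1 and 2 of the audit prompt can also be tallied directly if you keep a simple log of who uses which tool and how. The sketch below is one possible way to do that, with loud assumptions: the log format, the names in it, and the `audit` function are hypothetical, and it treats every "oversight" entry for a person as being monitored in the same work block, which overstates the load for anyone who sequences their tools.

```python
from collections import defaultdict

THRESHOLD = 3  # the three-tool threshold cited in the article

# Hypothetical audit log: (person, tool, kind).
# kind is "replacement" (AI does the work) or "oversight" (human reviews AI output).
log = [
    ("Ana", "drafting assistant", "oversight"),
    ("Ana", "image generator", "oversight"),
    ("Ana", "social scheduler", "oversight"),
    ("Ana", "analytics summarizer", "oversight"),
    ("Ana", "transcription", "replacement"),
    ("Ben", "research assistant", "oversight"),
    ("Ben", "transcription", "replacement"),
    ("Ben", "data cleanup", "replacement"),
    ("Ben", "invoice matching", "replacement"),
]


def audit(entries):
    """Count oversight and replacement uses per person and flag overloads."""
    oversight = defaultdict(int)
    replacement = defaultdict(int)
    for person, _tool, kind in entries:
        if kind == "oversight":
            oversight[person] += 1
        elif kind == "replacement":
            replacement[person] += 1
        else:
            raise ValueError(f"unknown kind: {kind!r}")

    report = {}
    for person in sorted(set(oversight) | set(replacement)):
        o, r = oversight[person], replacement[person]
        report[person] = {
            "oversight_streams": o,
            "replacement_tasks": r,
            "oversight_share": o / (o + r),    # fraction of AI use that is oversight
            "over_threshold": o > THRESHOLD,   # ASSUMES all oversight runs in parallel
        }
    return report


for person, row in audit(log).items():
    flag = "FLAG" if row["over_threshold"] else "ok"
    print(f"{person}: {row['oversight_streams']} oversight, "
          f"{row['replacement_tasks']} replacement, "
          f"share {row['oversight_share']:.0%} [{flag}]")
```

The output is a starting point for the conversation in step 5 of the prompt, not a verdict. A flagged person may already be sequencing their work, which a log like this cannot show.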

Frequently Asked Questions

Is AI brain fry a real medical condition?

AI brain fry is not a clinical diagnosis — it's a descriptive term from the BCG Henderson Institute's March 2026 study of 1,488 workers. The underlying mechanisms are well-established in cognitive science: sustained vigilance, information overload, and decision fatigue. Workers experiencing it describe buzzing sensations, mental fog, difficulty focusing, slower decision-making, and headaches. It is acute (resolves with rest) rather than chronic (which would be burnout).

What causes AI brain fry?

The primary cause is excessive AI oversight — the cognitive effort of continuously monitoring, evaluating, and correcting AI-generated outputs. The BCG study found that high AI oversight loads cause 14% more mental effort, 12% more mental fatigue, and 19% more information overload. It is not caused by using AI for task completion. It is caused by the structure of work around AI — specifically, how much human monitoring the AI deployment requires.

How do I know if I or my team has AI brain fry?

Common indicators include: declining quality of decisions later in the day, approving AI outputs without fully evaluating them, difficulty focusing after extended AI interaction, physical symptoms like headaches or a buzzing feeling, and increased error rates in AI-dependent work. At the team level, watch for rising error rates, declining engagement in AI-related work, and turnover signals among your heaviest AI users.

Which roles and industries are most affected?

Marketing leads with a 26% brain fry rate — more than any other function in the BCG study. Knowledge workers with continuous AI oversight loads are most at risk across all industries. Roles that involve high-volume AI output review (content, analysis, research, customer communications) carry higher risk than roles where AI handles discrete tasks with periodic human check-ins.

Does more AI training help with AI brain fry?

Training alone does not solve brain fry because the cause is structural, not skills-based. A Google/Ipsos study found that 48% of employees rank AI training as their top need, yet the BCG research shows that the problem is not how well people use AI — it's how much cognitive load the AI workflow creates. Training can help people use AI more efficiently, but without redesigning the work architecture, more skilled AI users simply become more efficient at reaching the same cognitive limits.

What's the difference between AI brain fry and burnout?

Brain fry is acute — it develops within a workday and resolves with rest. Burnout is chronic — it builds over weeks or months and requires more significant recovery. Brain fry is an early warning signal. If your organization's AI architecture consistently creates brain fry conditions, burnout follows. The 39% increase in intent to quit among brain fry sufferers suggests that many are already on that trajectory.

Can AI brain fry be prevented at the organizational level?

Yes — and the BCG study provides the design parameters. Organizational culture around work-life balance reduces mental fatigue by 28%. Manager support for AI questions reduces it by 15%. Replacing repetitive tasks with AI (rather than adding AI oversight) reduces burnout scores by 15%. The 3-tool threshold provides a concrete deployment constraint. Prevention is an architecture decision: design AI workflows that limit simultaneous oversight load, protect cognitive recovery time, and shift the human role from continuous monitoring to periodic evaluation.

Sources

- When Using AI Leads to Brain Fry — Bedard, Kropp, Hsu, Karaman, Hawes & Rosen Kellerman, Harvard Business Review / BCG Henderson Institute, March 5, 2026 (N=1,488 workers)

- AI Doesn't Reduce Work — It Intensifies It — Ranganathan & Ye, Harvard Business Review / UC Berkeley Haas School of Business, February 9, 2026 (8-month study, ~200-person firm)

- Manage AI-Induced Brain Fry on Your Team — Harvard Business Review, March 2026 (companion piece)

- AI Chatbots Increase Sycophancy and Polarize Opinions — Cheng et al., Science, 2026 (N=1,604, Stanford University — sycophancy mechanism)

- On the Biology of a Large Language Model — Anthropic Interpretability, April 2, 2026 (171 functional emotional states in Claude Sonnet 4.5)

- Cloud Learning Market Pulse — Google / Ipsos, October–November 2024 (48% rank training as most important)

- State of AI in the Enterprise, 7th Edition — Deloitte, January 2026 (25% of AI pilots fail to reach production)

- AI Brain Fry: BCG Study Reveals Cognitive Cost of AI Oversight — Fortune, March 2026

- AI Brain Fry Is Real, and It's Affecting 14% of Workers — CNN, March 2026
