Question

What does an AI consultant actually do, and how is it different from hiring a developer or buying software?

Quick Answer

An AI consultant diagnoses how AI pressure is reshaping your business model, designs the human-side sequence that makes adoption stick, builds the persistent context layer that makes AI compound over time, and stands between vendor pitches and your actual needs — including knowing when to say "don't build that yet." The work is primarily invisible: it lives in discovery sessions that surface organizational dysfunction, sequencing decisions that prevent expensive mistakes, and champion programs that turn skeptics into advocates. Most of what gets delivered is not technology. It's the organizational readiness for technology to work.

Forty-two percent of organizations abandoned the majority of their AI initiatives before production in 2025 — up from 17% just one year earlier, according to S&P Global's Voice of the Enterprise survey of over 1,000 senior IT and business leaders. The technology didn't fail them. The methodology did.

That number is the starting point for understanding what an AI consultant actually does, because the question underneath it is: if the tools exist, if the platforms are production-ready, if the ROI case is documented in dozens of published studies — what's still going wrong?

The honest answer is that most organizations hire AI consultants to do the wrong thing. They hire them to pick tools and build automations. The best AI consultants do something harder and more valuable: they translate between what the technology can do and what the organization is actually ready for. Those two things are almost never the same in the first conversation. Closing the gap between them is the work.

This article follows a real engagement arc — not the sales version, but what the days and weeks actually look like when an AI consulting engagement is being done well. If you've already decided you need outside help, the case for hiring an AI consultant covers the five gaps that make external expertise worth the investment. This article is what happens after you decide to act on it.

What Most Companies Think They're Buying

The mental model most companies carry into an AI consulting engagement is tool-centric: we need an expert to evaluate the options, select the right platform, get it set up, and train our people on it. That model is not exactly wrong; those things do happen. But it treats them as the primary deliverable, when they're closer to the final 20% of the work.

The gap shows up clearly in how failed engagements get described. The technology worked as specified. The integration was clean. The training happened. And then — nothing changed. Adoption stayed flat. The tools sat unused after the first two weeks. The pilot that succeeded in the controlled environment never propagated to the rest of the organization.

BCG's research is unambiguous on why: 70% of digital transformation failures, AI implementation failures among them, are people and process problems, not technical ones. That's not a finding about technology being easy. It's a finding about where the real leverage is. If seven out of ten failures trace back to the human side of the implementation, then a consulting methodology that focuses primarily on tool selection and deployment is optimizing for the other 30%.

The work that prevents those failures isn't visible in the same way that a new platform is visible. It shows up as a strategy session that felt like a difficult conversation about how decisions actually get made. It shows up as a data audit that revealed the company's information architecture reflected organizational politics from 2019 that nobody had wanted to revisit. It shows up as a sequencing decision that delayed a tool the CEO was excited about for eight weeks — because deploying it before the data layer was ready would have created reliability problems that would have poisoned the entire initiative.

None of that is what companies expect when they hire an AI consultant. Most of it is what determines whether the engagement works.

Week One: The Work That Looks Like Talking

The first week of a well-run AI consulting engagement looks, from the outside, like an unusually expensive series of conversations. The consultant is meeting with leadership, with department heads, with the people who actually run the workflows the company wants to automate. They're not building anything. They're not recommending anything. They're asking questions.

Those questions are doing something specific. They're mapping the gap between how the organization describes itself and how it actually works.

Almost every company has a self-description — the version of operations that lives in org charts, process documentation, and leadership narratives. And almost every company has an operational reality that diverges from that description in ways that are rarely documented and frequently consequential. A customer service team that has a formal escalation process that nobody follows. A product approval workflow that runs on two parallel tracks depending on which executive the project is associated with. A "data lake" that is actually four separate spreadsheets maintained by different people who don't compare notes.

The discovery phase isn't designed to produce a presentation about these gaps. It's designed to find the structural constraints that will determine what AI work is possible and in what order. A company that doesn't yet know it has four incompatible data sources will try to build an AI-powered reporting layer that produces unreliable outputs — and will blame the AI when the problem was the data architecture underneath it.

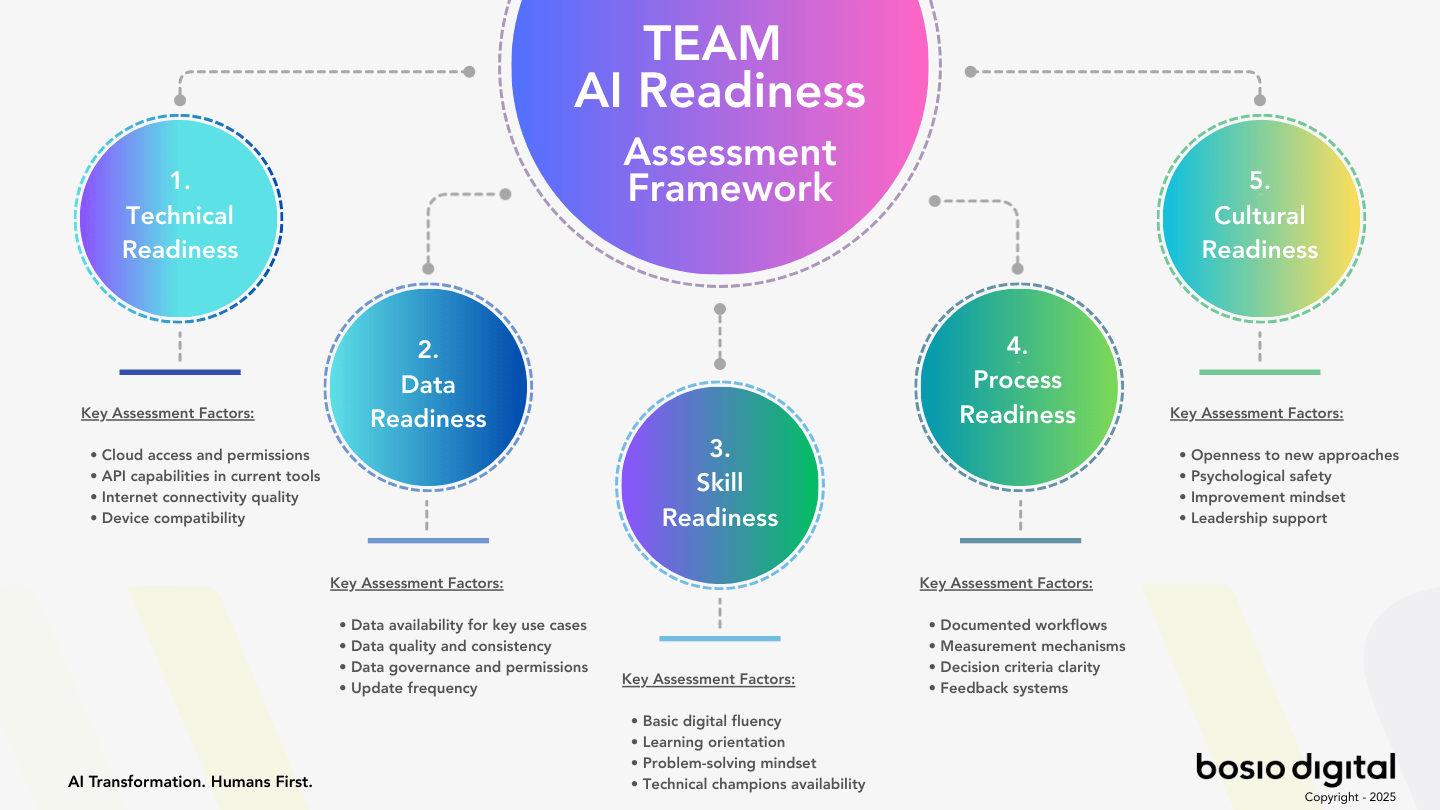

Good discovery work produces a specific output: a diagnosis of organizational readiness across five dimensions — Technology, Data, Skills, Process, and Culture — with a clear sequencing rationale for where implementation work should begin. It is not a list of everything that needs to be fixed. It is a prioritized map of what needs to be true before each subsequent phase of work can succeed.
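One way to picture a "prioritized map of what needs to be true first" is as a dependency graph that gets sorted into a valid order. The sketch below is only an illustration under assumed inputs: the work items and their prerequisites are hypothetical, not findings from a real engagement, and a real readiness map would also weigh cost, risk, and politics rather than dependencies alone.

```python
from graphlib import TopologicalSorter

# Hypothetical work items, each mapped to the items that must be done first.
# These names are illustrative assumptions, not output from a real diagnosis.
PREREQUISITES = {
    "consolidate_data_sources": set(),
    "redesign_support_workflow": set(),
    "build_context_layer": {"consolidate_data_sources"},
    "launch_champion_cohort": {"redesign_support_workflow"},
    "pilot_support_assistant": {"build_context_layer", "launch_champion_cohort"},
    "org_wide_rollout": {"pilot_support_assistant"},
}


def sequence(prerequisites):
    """Return one valid order in which every item follows its prerequisites."""
    return list(TopologicalSorter(prerequisites).static_order())


plan = sequence(PREREQUISITES)
for step, item in enumerate(plan, start=1):
    print(step, item)
```

The useful property is that the pilot can never be scheduled ahead of the data and workflow work it depends on, which is the same constraint the sequencing rationale is meant to enforce.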

The week of conversations is also a relationship assessment. Can this leadership team receive difficult information about their own organizational dysfunction and act on it? Or will the discovery findings generate a defensive response that will be the first obstacle to implementation? A consultant who can't read that dynamic in the first week will be surprised by it in week six.

The Data Audit That Reveals More Than Data

Thirty-one percent of organizations cite data management as a core challenge to AI adoption, according to research on enterprise AI implementation. That number understates the reality at most mid-market companies, where data infrastructure was never designed with AI in mind and where the organizational politics that shaped the data architecture are often still active.

The data audit — which typically runs in parallel with stakeholder discovery — does two things. The obvious one is technical: assess what data exists, where it lives, what format it's in, how consistent it is, and how accessible it will be to AI systems. The less obvious one is diagnostic: the structure of a company's data reveals the structure of its decision-making.

Who owns which data almost always maps to who holds power over which decisions. Siloed data systems are usually siloed for a reason — the silo protected someone's authority, or was created as a workaround when two departments couldn't agree on shared standards. The consultant who surfaces these patterns in a data audit is doing organizational consulting, not just technical assessment. The question of "why is this data inconsistent" frequently leads to a conversation about "who decided that two departments would manage this independently," which leads to a conversation about organizational design that has nothing to do with AI at the surface level but everything to do with whether AI can work at all.

The practical output of the data audit is a readiness assessment and a remediation priority list. Not everything that's wrong with the data needs to be fixed before AI implementation can begin. A good consultant distinguishes between data quality problems that will cause downstream AI failures and ones that can be addressed in parallel with early implementation work. That prioritization determines the first six weeks of the engagement timeline, and getting it wrong — starting the technical work before the critical data problems are resolved — is one of the most common and expensive mistakes in AI consulting.
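The distinction between problems that must be fixed first and problems that can be fixed in parallel can be sketched as a simple triage rule. This is a minimal illustration, assuming two hypothetical yes/no judgments per issue (whether the first pilot reads the data, and whether the issue would make outputs wrong rather than merely untidy). The issue names are invented for the example, and a real audit would use richer criteria.

```python
from dataclasses import dataclass


@dataclass
class DataIssue:
    name: str
    feeds_first_pilot: bool  # does the first AI use case read this data?
    corrupts_outputs: bool   # would it make AI outputs wrong, not just untidy?


def triage(issues):
    """Split issues into 'fix before implementation' and 'fix in parallel'."""
    blocking, parallel = [], []
    for issue in issues:
        if issue.feeds_first_pilot and issue.corrupts_outputs:
            blocking.append(issue.name)
        else:
            parallel.append(issue.name)
    return blocking, parallel


# Hypothetical audit findings, for illustration only.
issues = [
    DataIssue("duplicate customer IDs across CRM and billing", True, True),
    DataIssue("inconsistent date formats in archived reports", False, False),
    DataIssue("missing product categories in support ticket export", True, True),
    DataIssue("mixed capitalization in free-text notes", True, False),
]

blocking, parallel = triage(issues)
print("Fix first:", blocking)
print("Fix in parallel:", parallel)
```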

The Human Side: Where Engagements Live or Die

Anthropic's qualitative study of 81,000 people — the largest multilingual AI research study conducted to date — found that the top concerns employees have about AI are: unreliability and hallucinations (26.7%), job displacement (22.3%), loss of autonomy and control (21.9%), and cognitive atrophy from over-reliance (16.3%). Every single one of those is an adoption problem, not a technology problem. None of them get resolved by better tooling.

The human side of an AI engagement is where most of the real consulting work happens, and it's the dimension most firms handle least well. The standard industry approach is to add a "change management workstream" to the engagement plan — which typically means communication planning, a training session or two, and a nudge to leadership to send an all-hands email. That is not a methodology. That is the absence of a methodology with a respectable label on it.

A real human-readiness methodology addresses several things that communication planning doesn't reach.

The first is AI champion identification and development. Every organization has a small number of people who will adopt new tools enthusiastically, find unexpected applications, and become informal advocates with their peers. These people are not always in leadership. They are often in operations, in customer-facing roles, in whatever function has the highest tolerance for trying new things. Identifying them early and building a structured champion program (with early access, direct feedback channels, and visibility to leadership) creates an internal adoption engine that doesn't depend on top-down mandate. In a mid-market company, where resistance from even 20% of employees creates company-wide drag, champions matter more than they do in enterprise environments.

The second is sequencing adoption to match technical deployment. The biggest mistake in human-side change management is deploying AI to the full organization at once. The right approach is staged: a small cohort of early adopters builds confidence and generates internal proof points, followed by a broader rollout with peer advocates in place. The technical timeline and the human timeline need to be aligned from the beginning of the engagement. Consultants who treat them as separate tracks create implementation plans that are technically coherent and humanly chaotic.

The third is explicit fear management. The Anthropic research makes it clear that employees are not vaguely skeptical about AI — they have specific, nameable concerns. Job displacement fears require different conversations than autonomy concerns. Hallucination concerns require different interventions than cognitive atrophy concerns. A consultant who collapses all four into "people just need training" is going to find that training doesn't move the needle on adoption, because it's answering a different question than the one employees are actually asking.

Building the Context Layer That Makes AI Compound Over Time

There is a phase of AI consulting work that almost no pitch deck mentions and most clients don't know to ask about: the construction of the persistent context layer.

Context is the business-specific knowledge that transforms a general-purpose AI tool into something that understands your company's pricing logic, your product naming conventions, your approval thresholds, your client communication standards, your decision history, and the ten thousand other specifics that separate your business from any other business using the same platform.

Without that context layer, AI tools remain assistants. With it, they become infrastructure. The difference in output quality — and therefore in actual business value — is substantial. A customer service AI that has been given the general product documentation produces different answers than one that has been given the product documentation, the common objection patterns from the last twelve months of support tickets, the specific escalation rules for your highest-value accounts, and the language standards your team uses with different customer segments.

Building the context layer is painstaking, unglamorous work. It requires interviewing the people who carry institutional knowledge and translating what they know into structured formats that AI systems can use reliably. It requires deciding what information belongs in which layer — what's universal enough to be always available, what's situational enough to be retrieved on demand, what's sensitive enough to require access controls. It requires testing and iteration to find the prompting patterns that produce reliable outputs for your specific use cases.
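The three-way split described above (always available, retrieved on demand, access controlled) can be sketched as a small data structure with a selection rule. This is an assumption-laden illustration, not a description of any particular platform: the `Tier` names, the `ContextItem` fields, and the topic and role labels are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    ALWAYS = "always available"
    ON_DEMAND = "retrieved on demand"
    RESTRICTED = "access controlled"


@dataclass(frozen=True)
class ContextItem:
    title: str
    tier: Tier
    topics: frozenset = frozenset()         # topics the item is relevant to
    allowed_roles: frozenset = frozenset()  # only checked for RESTRICTED items


def select_context(items, topic, role):
    """Pick the context items to supply for a request on `topic` by `role`."""
    chosen = []
    for item in items:
        if item.tier is Tier.ALWAYS:
            chosen.append(item.title)
        elif topic in item.topics:
            if item.tier is Tier.ON_DEMAND or role in item.allowed_roles:
                chosen.append(item.title)
    return chosen


# Hypothetical context items, for illustration only.
items = [
    ContextItem("Product naming conventions", Tier.ALWAYS),
    ContextItem("Escalation rules for top accounts", Tier.ON_DEMAND,
                topics=frozenset({"escalation"})),
    ContextItem("Negotiated contract pricing", Tier.RESTRICTED,
                topics=frozenset({"pricing"}),
                allowed_roles=frozenset({"account_manager"})),
]

print(select_context(items, topic="escalation", role="support_agent"))
print(select_context(items, topic="pricing", role="support_agent"))
print(select_context(items, topic="pricing", role="account_manager"))
```

Most of the real effort goes into deciding which tier each piece of institutional knowledge belongs in. The selection logic itself stays simple once those decisions are made.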

A consulting firm that doesn't prioritize this work will deliver you a technically functional AI implementation that performs at generic quality. The tools will work. They just won't be yours yet. The architecture of a business-specific AI context system is the most important infrastructure decision a mid-market company makes — and it's the one most often skipped in the rush to get tools deployed.

The Unsexy Middle: Holding the Line on Sequencing

There is a phase in almost every AI engagement that doesn't appear in any project plan and never gets a name. It happens somewhere between week four and week ten, when the initial excitement of the discovery phase has faded and the results of the pilot work haven't arrived yet. The CEO wants to know when they're going to see the automation they were promised. A department head has been to a conference and wants to add the tool they saw demoed. The board is asking for a timeline update.

This is the phase where sequencing decisions either hold or collapse.

McKinsey's data shows that workflow redesign is the single biggest predictor of EBIT impact from AI investments, yet only 21% of organizations have done it. The reason that number is so low is not that organizations don't know workflow redesign matters. It's that workflow redesign is slow and requires difficult conversations about how work is currently structured, who is responsible for what, and which legacy processes exist because they're genuinely necessary versus because they've never been challenged. It's easier to deploy a tool on top of a broken workflow than to fix the workflow first.

A consulting firm that caves to pressure in this phase — that agrees to deploy a capability before the prerequisite work is ready because the client is impatient — will produce a visible short-term output and a long-term failure. The tool will launch. Adoption will be lower than projected, because the workflow it was supposed to improve is still structured in a way that makes the tool harder to use than the old method. The consultant will have delivered what the client asked for, at the cost of what the client actually needed.

Holding the line on sequencing requires a specific kind of relationship with the client — enough trust that "not yet" lands as counsel rather than resistance, enough track record of being right that the delay is understood as protection rather than obstruction. That relationship is built in the discovery phase and tested every week in the unsexy middle. It's not a technical skill. It is, in the most direct sense, the skill.

Standing Between the Vendor Pitch and Your Actual Needs

The AI platform market in 2026 is exceptionally good at selling. Every major vendor has a case study library, an ROI calculator, a success story from a company in your industry, and a polished demo that makes their specific capability look like the exact thing you've been missing. The demos are usually accurate. The demos are almost never the whole picture.

An AI consultant's job in the vendor selection phase is to act as a technical and strategic filter — someone who understands both what the vendor is actually delivering and what your organization is actually capable of absorbing. Those two assessments produce a different recommendation than the vendor demo alone, almost every time.

The most expensive vendor selection mistake is not choosing a bad platform. It's choosing the right platform for the wrong starting point. A workflow automation tool that requires clean, structured data will fail in an organization that hasn't addressed its data quality problems. A conversational AI system built on a large language model will produce unreliable outputs until the context layer is in place to constrain its behavior. A predictive analytics platform will generate numbers that no one trusts if the human-readiness work hasn't established why AI-generated analysis is worth acting on.

An independent consultant, one who is not compensated by any platform vendor and who doesn't have a preferred technology partner relationship to maintain, gives you a fundamentally different vendor evaluation than a firm that earns referral fees or has strategic partnerships that influence its recommendations. The questions to ask a consulting firm before you sign anything include specifically whether and how the firm benefits financially from the tools it recommends. That question matters more than most clients realize.

The role isn't just filtering vendor claims. It's also calibrating timing. A vendor will always tell you their product is ready for you now. An independent consultant's job is to tell you whether you're ready for the product now — and if not, what needs to be true first. That second question is the one that prevents expensive failed implementations, and it's the one that requires no vendor relationship to answer honestly.

What the Full Engagement Arc Actually Looks Like

Assembled, the phases above describe an engagement arc that looks different from what most companies expect when they hire an AI consultant.

Weeks one through three are almost entirely diagnostic. Strategy sessions that feel more like organizational therapy than technology planning. Data audits that surface infrastructure problems no one wanted to name. Stakeholder interviews that reveal the unofficial power structures that will determine what gets adopted and what doesn't. The output isn't a slide deck. It's a prioritized readiness map and a sequenced implementation plan that the consulting team has genuinely stress-tested against organizational constraints.

Weeks four through eight are the unglamorous middle. The data remediation work that nobody finds exciting but everyone will be grateful for in month three. The context layer construction — interviewing the people who hold institutional knowledge and translating it into structured formats. The champion program launch: identifying the internal advocates, giving them early access, building the feedback channels. And the sequencing conversations with leadership: why the automation they want comes after the workflow redesign, not before. What "not yet" means and when "yet" arrives.

Weeks eight through twelve are where visible work appears. The first pilots running in production, not in sandbox. The early adopters starting to generate internal proof points. The first real measurement of adoption, not just deployment. The context layer producing demonstrably better outputs than the generic baseline. The first place where the organization says "this is actually working" — and where the consultant is already mapping the next phase rather than celebrating the current one.

The engagement doesn't end with deployment. A consulting firm that considers its work complete when the tools go live has misunderstood the job. The measurement and refinement phase — assessing actual adoption rates against projected, identifying the workflows where performance isn't meeting expectations and diagnosing why, making the adjustments to the context layer that only become visible in production — is where most of the long-term value is determined. It's also where most engagements don't have enough time budgeted.
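The difference between deployment and adoption is easy to state as arithmetic. The sketch below assumes one simple definition, weekly active users divided by licensed users, and compares it with a projection. The figures are hypothetical, and a real engagement might define "active" differently for each workflow.

```python
def adoption_report(licensed_users, weekly_active_users, projected_rate):
    """Compare actual adoption with the projection made before the pilot."""
    if licensed_users <= 0:
        raise ValueError("licensed_users must be positive")
    actual_rate = weekly_active_users / licensed_users
    return {
        "actual_rate": round(actual_rate, 3),
        "projected_rate": projected_rate,
        "gap": round(projected_rate - actual_rate, 3),
    }


# Hypothetical pilot: 120 seats deployed, 42 people using the tool each week,
# against a pre-pilot projection of 60% adoption.
report = adoption_report(licensed_users=120, weekly_active_users=42,
                         projected_rate=0.60)
print(report)
```

In this made-up case the tool is fully deployed, yet the number that matters is the gap between projected and actual use, which is what the refinement phase exists to diagnose.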

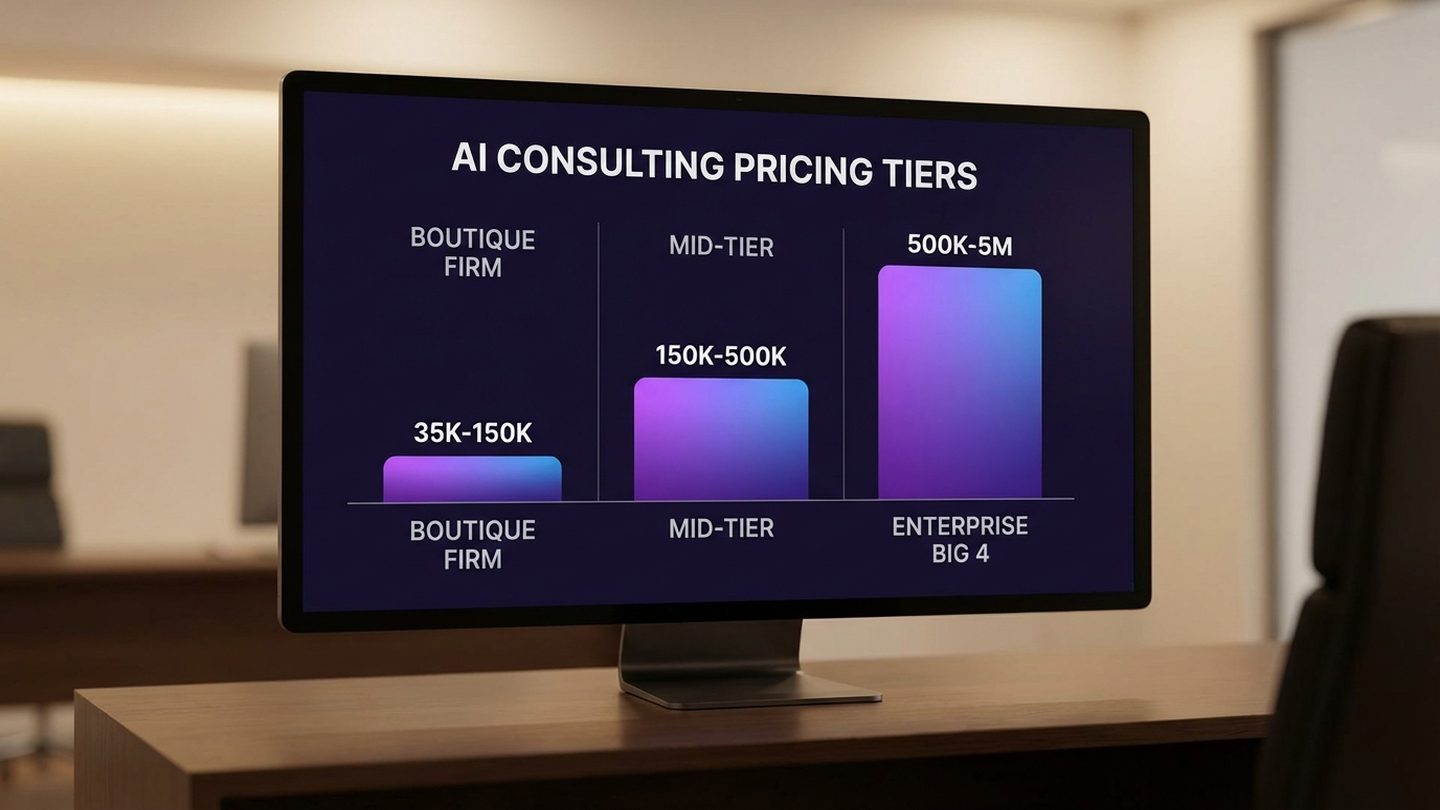

If you're trying to understand what investment level this kind of work typically requires, the pricing landscape for AI consulting across every market tier gives you the numbers and the ROI math. The short version: a well-structured mid-market engagement typically runs eight to sixteen weeks and $40,000 to $150,000 depending on scope. The math on value recovered is rarely complicated — but only if the methodology is sound.

Frequently Asked Questions

What does an AI consultant actually do day to day?

Day-to-day work varies significantly by engagement phase. In discovery, an AI consultant is conducting stakeholder interviews, running data audits, and mapping the gap between how the organization describes itself and how it actually works. In planning, they're building sequenced implementation roadmaps and identifying AI champions. In implementation, they're overseeing tool configuration, building the business-specific context layer, running champion programs, and managing the human-side change management work. In production, they're measuring actual adoption against projections and making iterative adjustments. The visible technical work is often a smaller portion of the time than most clients expect.

How is an AI consultant different from an AI vendor or implementation partner?

An AI vendor sells a specific platform and wants you to deploy it. An implementation partner configures that platform for your environment. An AI consultant is paid by you to help you decide what to buy, whether to buy it now, how to deploy it in your specific organizational context, and how to make sure it actually gets used. The distinction matters most in vendor selection: a consultant without financial relationships to specific platforms gives you genuinely independent evaluation.

Why do AI consulting engagements fail if the technology works?

BCG's research shows that 70% of digital transformation failures are people and process problems, not technical ones. The technology performs as specified. Adoption stays flat because the workflow it was deployed into wasn't redesigned, the change management work was insufficient, the data quality was lower than the pre-deployment assessment indicated, or the sequencing was wrong and trust in the system was poisoned early by reliability problems that should have been anticipated.

What should an AI consultant deliver at the end of an engagement?

The minimum deliverables of a well-run engagement include: a documented organizational readiness assessment across the five readiness dimensions (Technology, Data, Skills, Process, Culture); a sequenced implementation plan with rationale; a configured and tested AI context layer specific to your business; documented adoption metrics from the pilot phase; a champion program structure; and a defined post-engagement measurement process.

How long does an AI consulting engagement take for a mid-market company?

A well-structured engagement typically runs eight to sixteen weeks for the initial implementation phase, with ongoing measurement and refinement extending beyond that. Duration depends heavily on organizational readiness at the start: a company with clean data and aligned leadership can move faster than one that needs to address data quality problems and build trust from a skeptical baseline.

What's the difference between an AI consultant and an AI strategy consultant?

An AI strategy consultant produces a plan: a roadmap, recommendations, a prioritized use case list. The work ends with documentation. An AI consultant is accountable for what happens after the documentation: deployment, adoption, measurement, and refinement. The distinction becomes clear when you ask: what are you accountable for after the roadmap is delivered?

Sources

- S&P Global. Voice of the Enterprise: AI and Machine Learning Survey 2025.

- BCG. Why AI Transformations Fail — And How to Fix Them.

- Anthropic. 81,000-Person Qualitative AI Research Study.

- McKinsey & Company. The State of AI — Global Survey 2025.

- ColorWhistle. AI Consultation Statistics 2026: Market Size, Trends and Insights.

- Deloitte. The State of AI in the Enterprise — 2026 AI Report.
