Question

What is AI agent sprawl?

Quick Answer

AI agent sprawl is what happens when organizations deploy AI agents reactively, team by team and tool by tool, without a unifying architecture. Each agent operates in isolation, with no shared memory, no governance rules, and no feedback loop that makes it smarter over time. A 2026 OutSystems survey of nearly 1,900 global IT leaders found that 94% are concerned sprawl is increasing complexity, technical debt, and security risk, while only 12% have implemented a centralized platform to manage it. The fix isn't fewer agents. It's intentional architecture: shared skills, a context layer, governance, and learning loops that turn isolated agents into an AI operating system.

Ninety-four percent. That is the share of enterprise IT leaders who told researchers in April 2026 that they are concerned AI agent sprawl is increasing complexity, technical debt, and security risk inside their organizations. Not eventually. Now. While the experiment is still running.

That number is not surprising if you have been paying attention. But the second stat from the same research is the one that matters more: only 12% of those enterprises have implemented a centralized platform to manage the sprawl they are already reporting as a problem.

This is where most companies are in 2026. Building faster than they are governing. Deploying more than they are designing. Accumulating agents without accumulating intelligence.

Everyone's selling you agents. Nobody's designing your operating system.

That is not a critique of the technology. It is a description of a pattern — the default outcome of AI adoption without architecture. This article names it, measures it, and shows you what the alternative looks like.

What Is AI Agent Sprawl?

AI agent sprawl is the uncontrolled proliferation of AI agents across an organization — without centralized visibility, governance, or a shared intelligence layer. It is the AI equivalent of shadow IT, but faster to accumulate and deeper in consequence.

The typical pattern: one team builds a ChatGPT workflow to handle customer queries. Another deploys Copilot for content creation. A third hires a contractor to build custom agents for data analysis. Each initiative solves a real problem. None of them talk to each other. None share context. None have governance rules that specify what the AI can do autonomously versus what requires human review. And none of them improve automatically — each stays static at the quality level it had on deployment day.

Then the person who built the contractor agents leaves. Their knowledge leaves with them.

This is not an edge case. This is the default outcome of AI adoption without architecture. The California Management Review put it plainly in its 2026 analysis of enterprise AI governance: the absence of a unifying design is not a neutral position. It is itself a structural choice — one that compounds risk with every agent deployed.

Agent sprawl produces five specific failure modes. Redundant integrations, where different teams independently connect to the same tools and each integration requires its own maintenance, monitoring, and security review. Compliance exposure, as agents operate without audit trails or permission frameworks. Zero compounding value, because each agent stays static at deployment quality rather than improving with use. ROI fragmentation, where costs accumulate while results scatter across teams. And knowledge loss — the specific, expensive consequence of context living inside people rather than inside the system.

The term "agent sprawl" is entering the mainstream. AWS named it directly when launching Agent Registry in April 2026. Unframe AI published a cost analysis. California Management Review addressed its governance implications. The concept is circulating. What it lacks is a firm that has both diagnosed the problem at the architecture level and built the solution from the inside out. That is what this article establishes.

The Numbers: Where Most Companies Actually Are

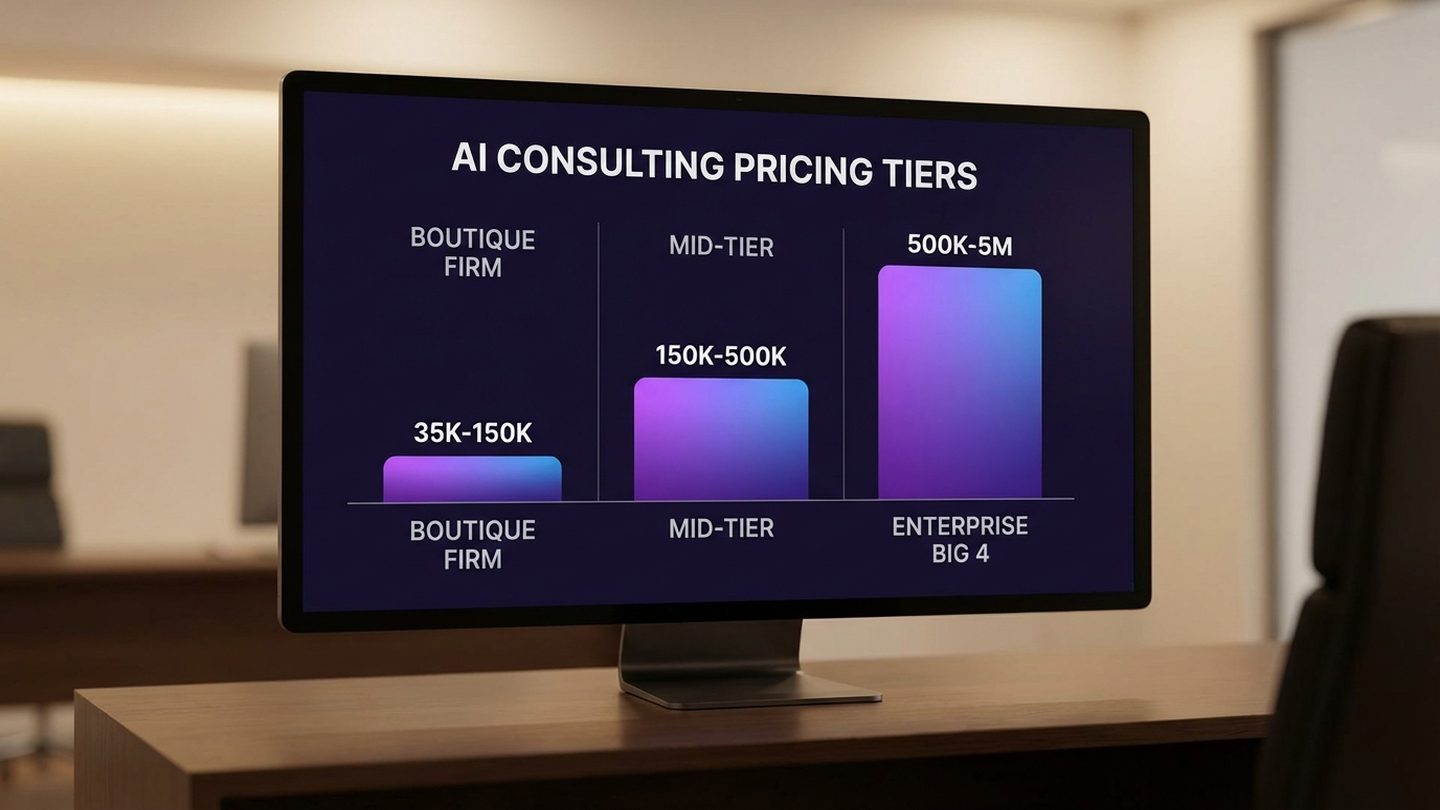

The research picture for 2026 is consistent across every major study. The headline figures tell the same story: adoption has outpaced architecture, and the gap between deploying agents and governing them is widening.

McKinsey's State of AI (N=1,993 respondents, 105 countries, June–July 2025) found that 23% of organizations report they are actively scaling an agentic AI system in at least one business function. That sounds like progress until you read the next line: 62% say their organizations are at least experimenting with agents — meaning the majority of those experiments are not reaching production scale. Nearly two-thirds of organizations have not yet scaled AI across the enterprise.

Deloitte's State of AI in the Enterprise 2026 (N=3,000+ senior leaders, 24 countries) confirms the governance gap that makes the adoption numbers alarming rather than encouraging. Twenty-three percent of companies report using agents at least moderately today. That figure is expected to reach 74% within two years. Only 21% of companies currently have a mature governance model for autonomous agents. In other words, roughly three in four companies expect to be running agents within two years, while only about one in five has the governance infrastructure to manage them safely.

A March 2026 Digital Applied survey of 650 enterprise technology leaders found that 78% have at least one AI agent pilot running, but only 14% have successfully scaled an agent to organization-wide operational use. The scaling gap is not technical. It is architectural and organizational.

And then there is the OutSystems research from April 2026, which surveyed nearly 1,900 global IT leaders and found 96% of enterprises already using AI agents in some capacity — with 94% reporting concern that sprawl is increasing complexity, technical debt, and security risk. Only 12% have implemented a centralized platform to manage it.

Gartner adds the trajectory: 40% of enterprise applications will embed AI agents by end of 2026, up from less than 5% in 2025. That is at least an eightfold increase in a single year. Every new deployment has the potential to compound the sprawl problem, or to avoid it entirely if the architecture comes first.

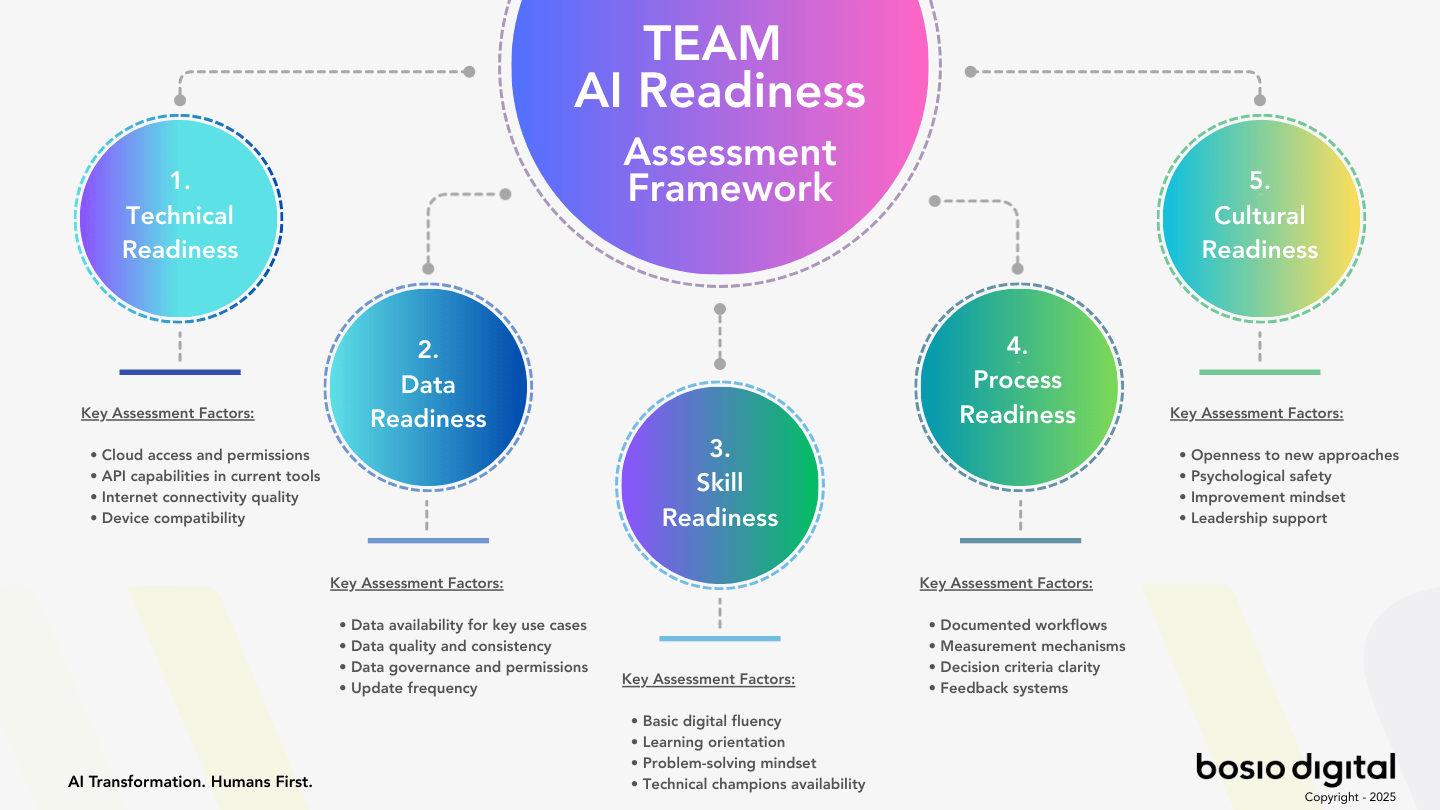

What Agent Sprawl Is Actually Costing You

Sprawl is not just disorganized. It is expensive. And the costs are not one-time — they compound.

The first cost is redundant integration. When different teams build agents independently, they each build their own connections to the same tools: the same CRM, the same data sources, the same calendar systems. Each integration requires maintenance, monitoring, and security review. Multiplied across twenty or fifty independent agent deployments, the maintenance burden becomes a hidden tax on every system it touches.

The second cost is compliance exposure. A 2026 governance analysis found that 80% of organizations deploying AI agents are doing so without the governance infrastructure to manage them safely at scale. When AI operates without explicit permission frameworks, audit trails, or documented governance rules, every action the agent takes is a potential compliance event that cannot be explained after the fact. For regulated industries — financial services, healthcare, professional services — this is not a theoretical risk. It is a liability accumulating with every ungoverned deployment.

The third cost is the most invisible: no compounding value. Most AI implementations are static. They do not improve after deployment. Each agent stays at the quality level it reached on day one. Every agent that operates without a learning loop — a structured feedback path that flows execution results back into the system — is an investment that depreciates rather than compounds. Companies deploying static agents are not building an asset. They are renting a fixed capability that grows relatively weaker as the market matures around it.

The fourth cost is ROI fragmentation. When AI initiatives scatter across teams without centralized tracking, measuring the aggregate return becomes nearly impossible. Costs accumulate on infrastructure, maintenance, and tooling across every team. Results appear in individual team reports. Nobody is looking at the consolidated picture — which means nobody can make the investment decisions that the consolidated picture would support.

There is also the organizational cost that nobody budgets for. When the knowledge of how an AI system works lives primarily in the head of the person who built it, and that person leaves, the institutional investment walks out the door with them. This is not a technology problem. It is an architecture problem — one that a well-designed context layer would have prevented.

Companies that use AI governance tools get over 12 times more AI projects into production than those that do not. That ratio deserves attention. It is not saying governance creates better ideas. It is saying that ungoverned AI investments fail on the path from pilot to production. The sprawl problem is not just a governance problem. It is a compounding execution problem.

Why the Platforms Can't Fix It

The major technology platforms are not ignoring agent sprawl. They have each announced infrastructure responses to it. None of them solve the design problem — and the design problem is where the actual business value lives or fails to appear.

AWS launched Agent Registry in April 2026: a centralized catalog and discovery layer for agents, tools, skills, and resources across the enterprise. "As enterprises scale to hundreds or even thousands of AI agents," AWS wrote at launch, "tracking what exists, who owns it, and whether it's approved for use has become an operational crisis in itself." Agent Registry addresses the discoverability crisis directly. It tells you what is running and who owns it.

Microsoft's response is the governance layer embedded in Agent 365: centralized agent identity through Entra Agent ID, policy enforcement, and security monitoring across Microsoft's ecosystem. Google's answer is Workspace Studio, bringing agent orchestration into the familiar Google Cloud environment. Anthropic launched Claude Managed Agents the same week: composable APIs that handle sandboxing, scoped permissions, identity management, and execution tracing — the infrastructure layer for running production agents with governance built in. ServiceNow added an AI Control Tower for compliance and business strategy oversight.

These are real infrastructure improvements. They are the right responses to a platform-level problem.

But none of them solve the design problem. And the design problem is prior to everything else.

An agent registry tells you what agents exist and who owns them. It does not tell you how to structure your skills — the versioned working instructions that define what each agent actually does and how it improves over time. It does not define your context architecture: what organizational knowledge every agent draws from, how that knowledge is maintained, how it compounds as the organization learns. It does not set your governance rules: what AI can do autonomously at which trust levels, what requires human approval, what is off-limits. And it does not build your learning loops: the feedback paths that flow execution results back into the system so it improves with every use rather than staying static at deployment quality.

These are not platform problems. They are design problems. Design problems require design decisions — about your organization, your workflows, your risk tolerance, and your organizational knowledge. No platform can make those decisions for you.

We have seen this pattern before. Enterprise software vendors built ERP infrastructure. The companies that got value from ERP did the process design work first — they decided how their business would operate before the platform was configured. The companies that skipped the design work ended up with expensive systems that automated their existing chaos.

The agent infrastructure platforms are building better ERP. The operating system question — the design work — remains with the organization. That is the consulting problem bosio was built to solve.

25 Agents, Zero Intelligence: The Before State

Here is a pattern we see in nearly every company we talk to. A team has twenty, twenty-five, sometimes fifty specialized AI agents. Each one is purpose-built for a specific task: research, drafting, analysis, client communication, content production. On paper, it looks like a sophisticated AI operation. Broad coverage. Deep specialization. Multiple workflows automated.

In practice, the system works like a filing cabinet that requires constant human maintenance to stay useful. Each agent only improves when someone actively updates it. None of them share context. None of them learn from each other's executions. When a team member leaves, the knowledge they built around their agents — the prompts they refined, the edge cases they accounted for, the undocumented workflows they maintained — leaves with them.

More agents than intelligence. That is the phrase we keep coming back to.

This is not a story about building the wrong technology. These agents are typically well-designed for their individual functions. The failure is architectural: no shared context, no feedback loop, no governance layer, no mechanism for the system to improve automatically. Every improvement is a human intervention. The system's ceiling is the maintainer's bandwidth.

This is not an edge case. It is the representative outcome of enterprise AI adoption in 2025 and 2026. The organizations operating twenty or fifty agents face the same structural problem: agents that accumulate without compounding. More tools. The same organizational intelligence. And no architecture connecting any of it into something that gets smarter over time.

The question is not whether you have too many agents. The question is whether your agents are connected to a system that makes them more intelligent with every use — or whether they are static, siloed, and waiting for a human to manually update them. Most organizations cannot yet answer that question. That is the problem.

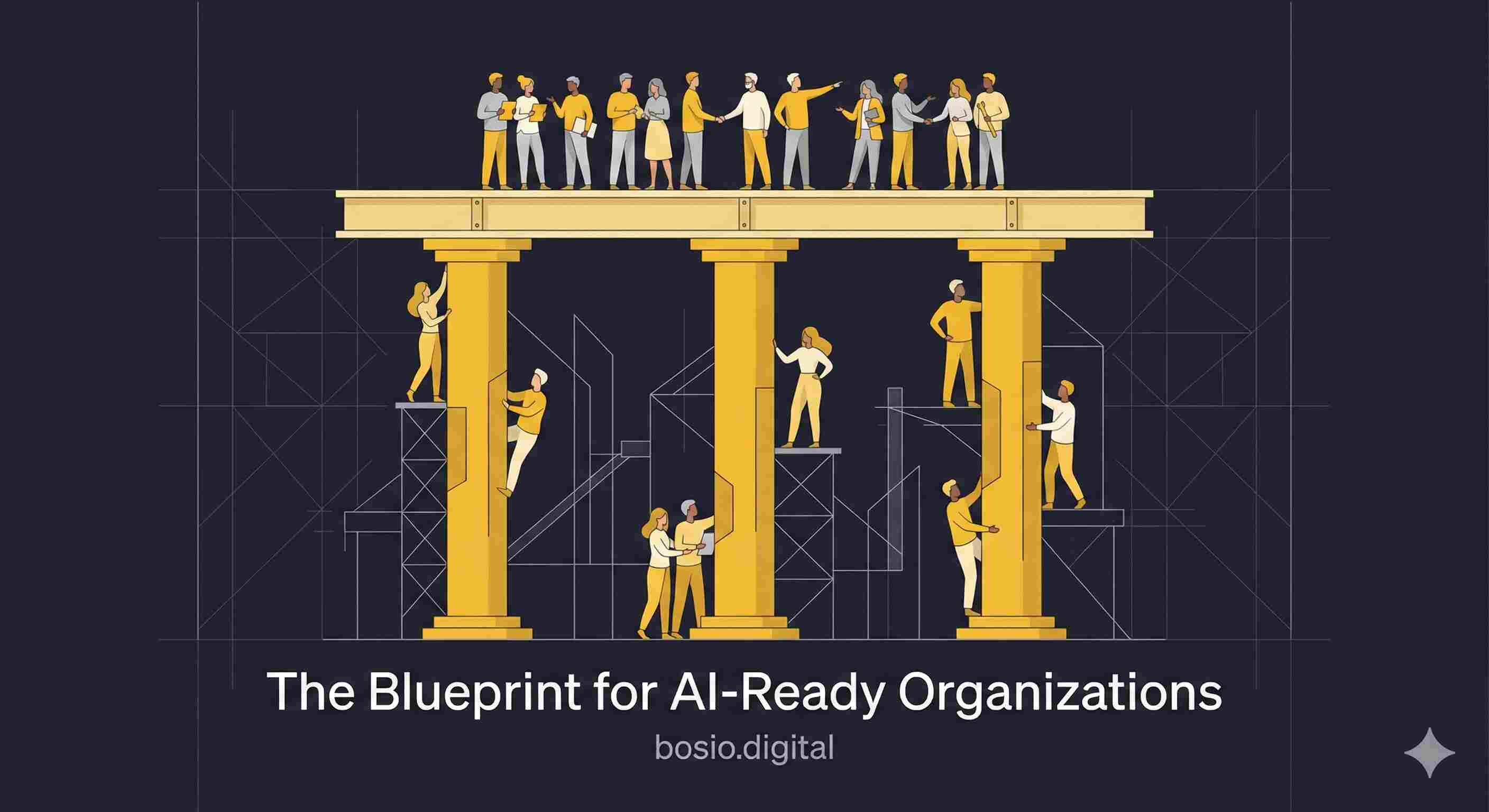

What an AI Operating System Actually Looks Like

The alternative to agent sprawl is not fewer agents. It is intentional architecture from day one. Four components turn isolated agents into an operating system — and each one addresses a specific sprawl failure directly.

Skills — structured, versioned working instructions for every repeatable task. Not prompts saved in a notes document. Not ad hoc workflows that depend on whoever created them remembering the context. Skills are explicitly designed, version-controlled working instructions that define exactly what the agent does, how it does it, and what decisions it escalates to humans. A skill for client proposal writing contains the firm's methodology, the voice standards, the approval logic, and the improvement record from every execution. It gets better the more it is used — not because the model gets smarter, but because the instructions do.

The difference between a skill and a prompt is the difference between institutional knowledge and personal knowledge. Prompts live in someone's ChatGPT history. Skills live in the system — and they stay there when people leave.
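To make the skill-versus-prompt distinction concrete, here is a minimal sketch of what a versioned skill record could look like. The `Skill` class, its field names, and the example values are hypothetical, chosen for illustration only; a real skills library would more likely live in version-controlled files than in memory.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Skill:
    """Hypothetical record for one versioned working instruction."""
    name: str
    steps: List[str]                # what the agent does, in order
    escalation_triggers: List[str]  # decisions handed to a human
    version: int = 1
    learning_log: List[str] = field(default_factory=list)

    def revise(self, new_steps: List[str], note: str) -> None:
        """Replace the steps, bump the version, and record why."""
        self.steps = list(new_steps)
        self.version += 1
        self.learning_log.append(f"v{self.version}: {note}")


# Illustrative skill; the contents are made up for this example.
proposal = Skill(
    name="client-proposal-writing",
    steps=["Load firm methodology", "Draft in house voice"],
    escalation_triggers=["Pricing above standard rate card"],
)
proposal.revise(
    proposal.steps + ["Check draft against approval logic"],
    note="Added approval check after a missed sign-off",
)
print(proposal.version, proposal.learning_log)
```

The point of the sketch is that the improvement record travels with the instructions, so the reason for each change survives the person who made it.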

Context — a shared intelligence layer every agent can access. This is the organizational knowledge base: who the firm's clients are, how decisions get made, what the company's methodology actually is, what it learned from its last twenty projects. Every agent draws from the same context, which means every agent starts from the same organizational intelligence rather than from zero.

Most organizations have this knowledge. It lives in people's heads, in emails, in old project folders. The context layer makes it available to every AI agent in the system — and keeps it current as the organization evolves. This is what we mean when we talk about AI that actually knows your business. The agent is only as intelligent as the context it can draw from. Building that context layer is architectural work that no platform does for you. How you structure and compile it determines whether your AI compounds over time or stays static — which is exactly what we explored in detail in our piece on building living intelligence from organizational knowledge.
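As a rough illustration of "every agent draws from the same context", the sketch below shows two agents assembling their starting briefing from one shared store. `SharedContext`, `build_briefing`, and the topic names are assumptions made for this example, and the in-memory dictionary stands in for whatever persistent store an organization would actually use.

```python
from typing import Dict, List


class SharedContext:
    """Hypothetical in-memory context layer; a real one would be persistent."""

    def __init__(self) -> None:
        self._entries: Dict[str, List[str]] = {}

    def add(self, topic: str, fact: str) -> None:
        self._entries.setdefault(topic, []).append(fact)

    def lookup(self, topic: str) -> List[str]:
        # Return a copy so agents cannot mutate shared state by accident.
        return list(self._entries.get(topic, []))


def build_briefing(agent_name: str, topics: List[str], context: SharedContext) -> str:
    """Assemble the organizational background an agent starts from."""
    lines = [f"Briefing for {agent_name}:"]
    for topic in topics:
        for fact in context.lookup(topic):
            lines.append(f"- [{topic}] {fact}")
    return "\n".join(lines)


context = SharedContext()
context.add("methodology", "Discovery always precedes build")
context.add("clients", "Client A prefers weekly written updates")

# Two different agents start from the same organizational knowledge.
research_briefing = build_briefing("research-agent", ["methodology"], context)
drafting_briefing = build_briefing("drafting-agent", ["methodology", "clients"], context)
print(research_briefing)
print(drafting_briefing)
```

Because both briefings are built from the same store, a fact added once is available to every agent on its next run.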

Governance — explicit rules that determine what AI can do autonomously, what requires approval, and what is off-limits. The traffic light model maps neatly onto practice: green for autonomous execution, yellow for proceed with notification, red for stop and escalate. The specific categories matter less than the principle: every AI action in the system has a documented authorization level before the action is taken.

Without governance, every agent deployment is a trust question the organization is resolving informally — through the intuitions of whoever built the agent, which may or may not reflect the organization's actual risk appetite. Effective AI governance is not a constraint on AI capability. It is the mechanism through which capability can responsibly grow. Organizations with mature governance frameworks get more AI projects into production, not fewer. That is the opposite of the intuition most teams have when they hear the word governance.
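The traffic light model can be sketched as a lookup that runs before any action is taken. The action names in `POLICY` are hypothetical, and defaulting undocumented actions to red is a design choice of this sketch rather than something the model itself prescribes; it simply follows from the principle that every action needs a documented level first.

```python
from enum import Enum


class Level(Enum):
    GREEN = "execute autonomously"
    YELLOW = "proceed and notify a human"
    RED = "stop and escalate"


# Hypothetical policy table; the action names are illustrative only.
POLICY = {
    "summarize_meeting_notes": Level.GREEN,
    "send_client_email": Level.YELLOW,
    "issue_refund": Level.RED,
}


def authorize(action: str) -> Level:
    """Look up the documented level; undocumented actions default to RED."""
    return POLICY.get(action, Level.RED)


for action in ["summarize_meeting_notes", "send_client_email", "delete_records"]:
    level = authorize(action)
    print(f"{action}: {level.name} ({level.value})")
```

Adjusting autonomy as trust builds then becomes a reviewed change to the policy table instead of an informal decision by whoever built the agent.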

Learning loops — feedback paths that flow execution results back into the system. When an agent completes a task, what happens to that output? In most deployments, the answer is: nothing systematic. The output goes to the human, the human acts on it, the agent starts fresh next time. The execution generated no intelligence that the system retained.

A learning loop captures that intelligence. Three mechanisms in practice: silent skill optimization, where systematic feedback patches the skill file directly; a structured feedback queue with a human gate, where observations that go beyond individual skill corrections are logged for review and approval; and automatic context enrichment, where key decisions and learnings from each session flow back into the shared context layer. The system gets smarter with every use, rather than staying static at deployment quality. That compounding is the return on architecture investment that agent sprawl makes impossible to achieve.
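The three mechanisms can be sketched as one routing step that runs after each execution. Everything here is an assumption made for illustration: the `System` and `ExecutionResult` structures, their field names, and the idea that corrections arrive already sorted into the three categories. How feedback gets classified in the first place is the hard part and is not shown.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class System:
    skills: Dict[str, List[str]] = field(default_factory=dict)  # skill name -> instructions
    review_queue: List[str] = field(default_factory=list)       # awaits human approval
    context: List[str] = field(default_factory=list)            # shared context entries


@dataclass
class ExecutionResult:
    skill: str
    skill_corrections: List[str] = field(default_factory=list)
    broader_observations: List[str] = field(default_factory=list)
    decisions: List[str] = field(default_factory=list)


def run_learning_loop(system: System, result: ExecutionResult) -> None:
    # 1. Skill optimization: corrections patch the skill's instructions directly.
    system.skills.setdefault(result.skill, []).extend(result.skill_corrections)
    # 2. Human gate: broader observations are queued for review, not applied.
    system.review_queue.extend(result.broader_observations)
    # 3. Context enrichment: key decisions flow into the shared context.
    system.context.extend(result.decisions)


system = System(skills={"weekly-report": ["Summarize KPIs"]})
run_learning_loop(system, ExecutionResult(
    skill="weekly-report",
    skill_corrections=["Lead with the metric that changed most"],
    broader_observations=["Reporting cadence may be too frequent"],
    decisions=["Client A reports now go out on Mondays"],
))
print(system.skills["weekly-report"])
print(system.review_queue)
print(system.context)
```

Only the first and third paths change the system without review; anything larger than a skill correction waits in the queue for a human decision.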

These four components — skills, context, governance, learning loops — are what turn a collection of agents into an operating system. bosio.digital has been running this architecture in production since 2025. Every pattern described here, we have built for ourselves first. When we design it for clients, we are transferring an architecture we live inside every day. That is the proof of concept. That is what makes the claim credible rather than theoretical.

Start Building

Audit Your Current AI Architecture

Run this prompt with Claude or ChatGPT to map your current agent landscape and surface where you have sprawl before it compounds further.

Context: I'm mapping the AI agents and tools currently in use across my organization to identify where I have sprawl.

Step 1 (Inventory): Help me list every AI tool, agent, or automated workflow in use. For each one: What does it do? Who owns it? What systems does it connect to? Does it have documented governance rules?

Step 2 (Sprawl Diagnosis): For each agent, answer: Is there a shared context layer it draws from? Does it improve automatically, or only when a human updates it? Can it be audited after the fact?

Step 3 (Architecture Gaps): Based on the inventory, identify: redundant integrations, agents operating without governance, missing feedback loops, and context that lives only in individual tools or people.

Output: A table with the columns Agent | Owner | Governance (Y/N) | Learning Loop (Y/N) | Shared Context (Y/N), and a list of the three most urgent architectural gaps to address first.

This gets you the map. The architecture decisions (what to consolidate, what governance model fits your risk profile, how to build the context layer) require organizational context this prompt can't provide on its own.
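Once the inventory exists, part of the gap analysis is mechanical. The sketch below assumes the inventory has been captured as simple records; `AgentRecord`, its field names, and the sample agents are hypothetical. Two agents connecting to the same system is treated here as a candidate redundancy to review, not proof of one.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class AgentRecord:
    """One inventory row; the field names are hypothetical."""
    name: str
    owner: str
    systems: List[str]    # external tools the agent connects to
    governance: bool      # documented governance rules?
    learning_loop: bool   # improves without manual updates?
    shared_context: bool  # draws from a shared context layer?


def yes_no(flag: bool) -> str:
    return "Y" if flag else "N"


def to_table(agents: List[AgentRecord]) -> str:
    """Render the inventory as pipe-separated rows."""
    rows = ["Agent | Owner | Governance | Learning Loop | Shared Context"]
    for a in agents:
        rows.append(" | ".join([
            a.name, a.owner, yes_no(a.governance),
            yes_no(a.learning_loop), yes_no(a.shared_context),
        ]))
    return "\n".join(rows)


def redundant_integrations(agents: List[AgentRecord]) -> Dict[str, List[str]]:
    """Return each system that more than one agent connects to."""
    by_system: Dict[str, List[str]] = defaultdict(list)
    for a in agents:
        for system in a.systems:
            by_system[system].append(a.name)
    return {s: names for s, names in by_system.items() if len(names) > 1}


def ungoverned(agents: List[AgentRecord]) -> List[str]:
    return [a.name for a in agents if not a.governance]


# Made-up sample inventory.
inventory = [
    AgentRecord("support-bot", "Customer Success", ["CRM", "Helpdesk"], False, False, False),
    AgentRecord("content-drafter", "Marketing", ["CMS"], True, False, False),
    AgentRecord("pipeline-analyst", "Sales Ops", ["CRM"], False, False, True),
]
print(to_table(inventory))
print(redundant_integrations(inventory))
print(ungoverned(inventory))
```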

How to Audit Your Current AI Architecture

You do not need a consultant to tell you whether you have agent sprawl. Five questions will tell you — and the answers will show you exactly where the architecture work needs to start.

Do your agents share context? When one agent completes a task, does the output — the learning, the decision, the edge case — become available to other agents in the system? Or does each agent start from zero with every execution? If your agents are not drawing from a shared context layer, the organizational intelligence your AI generates is scattered and inaccessible. The system has no memory.

Do your skills improve automatically? When an agent performs a repeatable task, does the quality of that task improve over time without a human manually updating the instructions? If every improvement requires someone to rewrite the prompt or update the workflow, your AI infrastructure is a depreciating asset. It is not learning. It is waiting for maintenance.

Do you have a governance layer? Is there a documented framework specifying what each agent can do autonomously, what requires a human review step, and what is off-limits entirely? Or do different teams operate on different assumptions, so that the only thing keeping an agent within its intended scope is the hope that nobody asks it to go further? Governance is not bureaucracy. It is the precondition for expanding AI autonomy safely and demonstrably.

Can you audit what your AI did and why? If a decision was made with AI assistance last week, can you reconstruct the inputs, the reasoning, and the output? In regulated industries, this is an emerging compliance requirement. The absence of auditability is the absence of accountability — and accountability is what makes it possible to expand AI's role with confidence rather than anxiety.

Does your system get smarter with every execution? The compounding value of an AI operating system comes from its learning architecture. Every execution should contribute something back: a skill improvement, a context update, a governance refinement. If your system is not getting smarter, it is not an operating system. It is a collection of tools that requires the same human effort to produce the same output every time.

Most organizations that run through these five questions find two or three clear failures. That is not a crisis — it is a map. And connecting your context layer so it actually compounds is typically where the highest leverage is, because it is the work that every platform tool assumes you have already done. Organizations that have built it are in a fundamentally different competitive position than those that haven't.
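The five questions can be turned into a simple self-check. The sketch below uses the rule of thumb from the FAQ in this article (two or more "no" answers indicate some degree of sprawl); the function name and the sample answers are illustrative assumptions.

```python
from typing import List, Tuple

QUESTIONS = [
    "Do your agents share context?",
    "Do your skills improve automatically?",
    "Do you have a governance layer?",
    "Can you audit what your AI did and why?",
    "Does your system get smarter with every execution?",
]


def diagnose(answers: List[bool]) -> Tuple[List[str], bool]:
    """Return the questions answered 'no' and whether they indicate sprawl.

    answers holds one boolean per question, True meaning yes.
    """
    if len(answers) != len(QUESTIONS):
        raise ValueError("Expected one answer per question")
    gaps = [q for q, ok in zip(QUESTIONS, answers) if not ok]
    # Threshold taken from the article's FAQ: two or more 'no' answers.
    return gaps, len(gaps) >= 2


# Sample answers: no shared context, no automatic improvement, no compounding.
gaps, has_sprawl = diagnose([False, False, True, True, False])
print(has_sprawl)
for question in gaps:
    print("Gap:", question)
```

The list of gaps matters more than the yes/no verdict, since it shows where the architecture work should start.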

Building vs. Buying: The Architecture Decision

Not every company should build its AI operating system from scratch. The build-versus-buy question matters far less than it is usually framed. What matters — and what most organizations are currently skipping — is the architecture question itself.

In practice, the realistic options for building an AI operating system in 2026 come down to four paths. Anthropic's Claude — specifically Cowork for strategy and reasoning, Code for execution and automation — is the most architecture-ready option today, with native support for persistent context, skills, and agent orchestration. Microsoft Copilot is building similar capabilities into its ecosystem, with Cowork-style features emerging across the 365 stack. Google requires more custom development through Workspace Studio and Vertex AI, but offers deep integration for organizations already running on Google Cloud. And then there is the custom-built path: stitching together multiple tools — an LLM here, an automation layer there, a knowledge base somewhere else — into something that functions as a system. Every path works. None of them exempt you from the architecture decisions.

The same four design questions apply regardless of which path you choose: How are your skills structured and maintained? What context does every agent draw from? What governance rules determine AI autonomy levels? How does each execution feed back into the system?

Platforms determine where these decisions get implemented. They do not make the decisions for you. A well-designed operating system on any of these platforms is an operating system. A poorly designed one is expensive, governed agent sprawl — better tracked, but still fundamentally static and siloed.

The role of a consulting firm in this work is not to pick the platform. It is to help you make the architecture decisions that determine whether the platform delivers its potential. That includes defining your skill library, designing your context architecture, setting your governance framework, and building your learning loops. All of these are organizational decisions that require understanding how your business actually works — decisions that cannot be outsourced to a platform vendor whose incentives end at configuration.

The platform competition between OpenAI, Anthropic, and Google is making the infrastructure better and cheaper every quarter. That acceleration makes the architecture question more urgent, not less — because more organizations will be deploying more capable agents into systems that still have no design behind them.

2026 is the year the collision arrives. The ungoverned agents deployed in 2025 are starting to create real problems: redundant costs, compliance exposure, ROI fragmentation. The organizations that navigate this cleanly are the ones that use the collision as a forcing function — not to slow down, but to start designing.

Everyone's selling you agents. Fewer are asking whether you have the operating system to run them.

That question is where the real work starts.

Frequently Asked Questions

What's the difference between AI agents and an AI operating system?

Individual AI agents are purpose-built tools that handle specific tasks — writing, research, data analysis, customer communication. An AI operating system is the architecture that connects them: a shared context layer every agent draws from, a skills library of structured working instructions, a governance framework defining AI autonomy levels, and learning loops that feed execution results back into the system so it improves over time. Without an operating system, agents are powerful but isolated. With one, each agent contributes to cumulative organizational intelligence rather than operating as a standalone tool.

How do I know if my company has AI agent sprawl?

Five questions reveal it: Do your agents share context with each other? Do your skills improve automatically without human intervention? Do you have documented governance rules specifying what AI can do autonomously? Can you audit AI decisions after the fact? Does your AI system get smarter with every execution? If the answer to two or more is no, you have some degree of sprawl. The OutSystems 2026 research found 94% of enterprise organizations are concerned about sprawl — most mid-market companies will find three or four of the five questions expose clear architectural gaps.

What does AI governance actually mean in practice?

Governance is a documented framework that specifies what each agent can do autonomously (green), what it can proceed with as long as a human is notified (yellow), and what is off-limits or must stop and escalate for explicit approval (red). In practice, it means knowing the authorization level of every AI action before it is taken, being able to audit what the AI did and why after the fact, and having a mechanism to adjust those authorization levels as trust builds over time. Companies that use AI governance tools get over 12 times more AI projects into production than those that do not. Governance expands AI capability; it does not restrict it.

Can I fix agent sprawl without starting over?

In most cases, yes. The architecture work does not require replacing existing agents — it requires adding the connective tissue that turns isolated agents into a system: a shared context layer the agents can draw from, structured working instructions (skills) for your most repeatable tasks, governance rules for the agents you already have, and feedback paths that capture execution learnings. The consolidation of redundant agents follows naturally once the architecture is clear. Starting over is rarely necessary and usually counterproductive — the goal is to design around what you already have.

How is an AI operating system different from Copilot or Salesforce Agentforce?

Copilot makes Microsoft applications smarter. Agentforce automates Salesforce workflows. Both are application-level AI — excellent within their respective platforms, but not a unifying architecture for the organization as a whole. An AI operating system sits at a layer above individual platforms: the shared context, skills library, governance framework, and learning infrastructure that every agent draws from regardless of which platform runs it. Copilot and an AI OS are not competing — they run side by side. The operating system layer makes every platform tool more valuable, not redundant.

What does an AI skills library look like?

A skills library is a version-controlled collection of structured working instructions — one document per repeatable task. Each skill contains the exact steps the AI should follow, the decision rules for edge cases, the human escalation triggers, the quality standards the output must meet, and a learning log where improvements are captured after each execution. A mature skills library might contain dozens of skills: client proposal writing, weekly reporting, research synthesis, competitor analysis, onboarding documentation. The key difference from a prompt library is versioning and improvement — skills get better with every use because the instructions are updated, not just the model.

How long does it take to build a functioning AI operating system?

For a company of up to 25 people, bosio.digital's ClaudeOS implementation runs six to eight weeks: two weeks of discovery and architecture design, two to three weeks of build, and one week of training and handover. The more important number is the ROI timeline: a 20-person company recovering five hours per person per week at $80 per hour generates $8,000 in weekly value. At that rate, a $35,000 implementation breaks even after roughly four and a half weeks of fully realized savings, or five to seven weeks once you allow for ramp-up. The constraint is not time. It is the willingness to treat AI as infrastructure rather than a software purchase.
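The payback arithmetic in this answer can be checked with a short sketch. The headcount, hours, rate, and cost come from the answer above; the `realization` parameter is an assumption of this sketch, added to show how the payback window stretches when only part of the recovered time turns into realized value.

```python
def weekly_value(people: int, hours_per_person: float, hourly_rate: float) -> float:
    """Value of recovered time per week."""
    return people * hours_per_person * hourly_rate


def payback_weeks(cost: float, value_per_week: float, realization: float = 1.0) -> float:
    """Weeks to recover the cost; realization is the share of value actually captured."""
    if not 0 < realization <= 1:
        raise ValueError("realization must be in (0, 1]")
    return cost / (value_per_week * realization)


value = weekly_value(people=20, hours_per_person=5, hourly_rate=80)
print(value)                               # 8000
print(payback_weeks(35_000, value))        # 4.375
# Hypothetical 70% realization, which lands at about 6.25 weeks.
print(payback_weeks(35_000, value, realization=0.7))
```

At full realization the figures give a payback of just under four and a half weeks. A window of five to seven weeks corresponds to capturing somewhere between roughly 60% and 90% of the recovered time in the early weeks.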

Sources

- Agentic AI Goes Mainstream in the Enterprise, but 94% Raise Concern About Sprawl — OutSystems State of AI Development 2026 (N=~1,900 global IT leaders), April 2026

- The State of AI in 2025: Agents, Innovation, and Transformation — McKinsey (N=1,993 respondents, 105 countries), November 2025

- The State of AI in the Enterprise 2026 — Deloitte (N=3,000+ senior leaders, 24 countries), 2026

- The Future of Managing Agents at Scale: AWS Agent Registry Now in Preview — Amazon Web Services, April 2026

- AI Agent Scaling Gap March 2026: Pilot to Production — Digital Applied (N=650 enterprise technology leaders), March 2026

- Writer Enterprise AI Adoption 2026 — 79% of organizations report challenges scaling AI (double-digit increase from 2025)

- Scaling Managed Agents: Decoupling the Brain from the Hands — Anthropic, April 2026

- AI Agent Sprawl: Security Risks and Governance Challenges for Enterprises — Reco.ai, 2026

- Agent Sprawl Is the New IT Sprawl — Dataiku, 2026

- AWS Agent Registry: Taming Enterprise AI Sprawl — The Letter Two, April 2026

- The AI Governance Mandate: Scaling Agentic AI on Google Cloud in 2026 — Covasant, 2026