Question: Why doesn't my AI tool remember anything about my business between sessions?

Quick Answer: Most AI tools operate at the app level — designed to be stateless, resetting with every new session. OS-level AI is different: it maintains persistent context about your business, reads what matters before every interaction, and gets more useful over time. The gap isn't about which AI you're using — it's about what layer it operates at, and what that layer knows about your organization before you've said a word.

Every session, your AI starts over.

You open the tool. You brief it on who the client is, what you're working on, what matters right now. It helps — sometimes brilliantly. You close the tab.

Tomorrow, you open it again. Same briefing. Same context from scratch. The AI doesn't remember the client. It doesn't know about the strategic pivot your team discussed last Tuesday, the decision that came out of the board meeting, or the proposal that's been through three rounds of revision. Every session begins cold. Every interaction starts at zero.

Most people assume this is temporary. A limitation the tool will eventually fix. A feature on some roadmap.

It isn't. It's a design choice — and once you understand why that choice was made, you start to see exactly what it's costing you.

The Reset Is a Design Choice — and It Has a Cost

App-level AI was built to be stateless. This wasn't an oversight — it was intentional. Stateless design means each session is isolated, each conversation is its own container, and nothing carries forward. This protects privacy. It scales predictably. It keeps the product simple.

For most of what app-level AI was built to do — drafting emails, summarizing documents, answering questions — stateless works fine. You don't need the tool to remember yesterday's meeting to help you rewrite a paragraph today.

But here's what stateless design actually means for a business: you become the context layer.

Every time your team uses AI, someone re-establishes context. They paste in the company background. They re-explain the client relationship. They summarize the project history, the constraints, the tone that matters here. They do this before every conversation worth having. They do it again the next day. And the day after that.

Research from Microsoft's Work Trend Index found that knowledge workers spend more than half their working week on communication and coordination rather than creation — meetings, email, the overhead of keeping everyone in the loop. AI tools running at the app level don't reduce this burden. They add to it. They're one more thing that needs to be kept current, one more system that doesn't know what it should.

Multiply the briefing time across your team. Multiply it across a week. Then ask yourself what percentage of your AI investment is going toward actually doing work versus explaining the work to the tool before it can help.
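To make that multiplication concrete, here is a rough back-of-the-envelope sketch in Python. The function name and every input number are hypothetical placeholders, not measurements; the ten-minute briefing is borrowed from the client-prep example later in this piece. Swap in your own figures.

```python
def weekly_briefing_hours(team_size: int, sessions_per_person_per_day: float,
                          minutes_per_briefing: float, workdays_per_week: int = 5) -> float:
    """Hours per week the whole team spends re-establishing context for the AI."""
    total_minutes = (team_size * sessions_per_person_per_day
                     * minutes_per_briefing * workdays_per_week)
    return total_minutes / 60


# Hypothetical inputs: 8 people, 3 AI sessions each per day, 10 minutes per briefing.
hours = weekly_briefing_hours(team_size=8, sessions_per_person_per_day=3,
                              minutes_per_briefing=10)
print(f"{hours:.1f} hours per week spent briefing")  # 20.0 hours per week spent briefing
```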

There's a deeper cost that's harder to see. App-level AI can get smarter about language, reasoning, coding, writing. It cannot get smarter about your business. Because your business, to that tool, doesn't exist between sessions. Every interaction is first contact. No matter how long you've been using the tool, no matter how much your team has built, the AI wakes up each morning with no memory of you at all.

That ceiling — not the tool's capability ceiling, but the organizational knowledge ceiling — is what OS-level AI removes.

Two Kinds of AI — and Only One Knows Where You Left Off

The difference between app-level and OS-level AI is not about capability. Both can write, reason, code, and analyze. The difference is about what the AI knows before it opens its mouth.

App-level AI operates inside a specific tool, for a specific task, in a specific session. The product is the AI. The session is the container. When the session ends, the container empties. This is how most enterprise AI deployments work right now — standalone tools that do impressive things in isolation while remaining ignorant of the broader organization they're supposedly serving.

OS-level AI is something different. It operates across the whole business — across tools, across sessions, across time. It maintains a persistent understanding of who you are, what you're building, what decisions you've made, and where you are right now. It doesn't need you to brief it at the start of every conversation. It already knows.

Here's the practical distinction. App-level AI knows everything about language, reasoning, and the task in front of it. OS-level AI knows all of that — and it also knows your strategy, your clients, your voice, your constraints, and the conversation you had last week that's still shaping what you're doing today.

Think about what "knows your business" actually means in practice. It means the AI knows that you're mid-market B2B, that you have three active client engagements right now, that one of them is in a sensitive negotiation phase, that your quarterly board presentation is in six weeks, that your team has a specific framework for how you scope projects, and that when you say "keep it sharp" you mean something specific that's different from what another leader means when they say the same words.

App-level AI can be taught any of these things — once, in a session, and then it forgets. OS-level AI carries them forward. Every session picks up where the last one left off.

The AI landscape is moving fast. The race between AI labs right now isn't really about which model writes better prose or solves harder math problems. It's about which AI can become genuinely useful inside an organization — useful in the way a long-tenured colleague is useful, because they know the terrain. Context is becoming the competitive moat.

What This Looks Like in Practice

The value of persistent AI context isn't abstract. It shows up in the specific moments that matter — the ones where you're under time pressure, working with partial information, trying to produce something that reflects the full weight of your organization's knowledge rather than just the thirty seconds you had to brief the tool.

Consider what happens when you need to prepare for a major client conversation. With app-level AI, you start by pasting in the client background. Who they are, what they've engaged you for, where the relationship stands, what's sensitive right now, what tone works best with their leadership team. This might take ten minutes if you're thorough. If you're in a hurry, you paste less. The AI works with what it gets. The quality of the output reflects the quality of the briefing you had time to give.

With OS-level AI, you open the session and the AI is already current. It knows the client. It knows the context. It knows what you agreed to in the last meeting and what still needs resolution. Your ten-minute briefing becomes a thirty-second orientation — "we're talking with them tomorrow, they pushed back on scope in our last call, help me think through how to approach that." The AI can actually help with the strategic question because it already has everything it needs to understand why the question matters.

The same pattern holds for onboarding. When a new team member joins, they spend weeks acquiring context — understanding clients, projects, history, the way your organization thinks. Most of that context lives in people's heads. Some of it gets written down. A lot of it gets lost. OS-level AI holds that context persistently. A new person asking "what do I need to know about this client before my first call" gets a comprehensive, current answer — not because someone had time to write a brief, but because the AI has been present for every relevant conversation and knows what matters.

We've found in our work with mid-market teams that context loss is one of the most underestimated costs in AI adoption. Teams that build and maintain structured context files for their AI tools tell us that new projects ramp up faster and need less rework, because the AI understands not just the task but the reasoning behind the decisions that shaped it. The context advantage compounds the way institutional knowledge compounds: slowly at first, then all at once.

Or consider a more common, more mundane moment. You're following up on a decision you made three months ago. What was the rationale? What alternatives did you consider? What had you planned to review after ninety days? With app-level AI, you search your notes, your email, your memory. You piece it together. You might be missing half the story. With OS-level AI, you ask — and the AI tells you, accurately, because it was there.

These aren't edge cases. They're the ordinary texture of organizational work. They happen dozens of times a week in every business that's trying to move fast on things that actually matter. The cost of handling them without persistent context isn't always visible, but it accumulates.

The real competitive advantage of persistent AI context isn't any single conversation. It's what happens when you multiply that advantage across every project, every client, every team member, every quarter. That's not a productivity tool. That's an intelligence infrastructure.

The Running Log — AI That Stays Current

There's a concept we've started calling the running log — and it's the piece that transforms AI from a capable tool into something that actually functions like a colleague who's been paying attention.

Most approaches to persistent AI context focus on what the AI knows at the start: a company brief, a client background file, a set of documents that establish the fundamentals. This is valuable. But it's static. It captures who you were at the time it was written. It doesn't capture what happened yesterday.

The running log is different. It's a living document — updated at the end of every significant work session, read at the beginning of the next one. It captures what happened, what was decided, what shifted, what needs to carry forward. It's the AI's memory of recent reality, not just the established context of the business.

Here's how it works in practice. At the end of a working session, before closing the conversation, the AI summarizes: what problems were worked on, what decisions were made, what was left unresolved, what context emerged that matters for next time. This summary goes into the running log — a structured document that grows with your work.

The next session starts by reading that log. The AI is current before you've said a word. It knows that the proposal you submitted three days ago hasn't gotten a response yet and you're planning to follow up. It knows that the internal debate about pricing has landed on a direction. It knows that the thing you called urgent last Thursday turned out not to be urgent at all, and the thing that seemed minor has become the focus. None of this needs to be re-explained. The AI was there. Or rather, it reads what was written as if it were there.
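The write-at-the-end, read-at-the-start cycle described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of any particular tool: the function names, the `## Session` heading, and the `max_entries` cutoff are all hypothetical, and the summary text itself is assumed to come from the AI (or from you) at the end of the session.

```python
from datetime import date
from pathlib import Path
from typing import Optional

ENTRY_MARKER = "## Session "  # hypothetical heading that starts each log entry


def close_session(log_path: Path, summary: str, session_date: Optional[date] = None) -> None:
    """Append the end-of-session summary to the running log under a dated heading."""
    stamp = (session_date or date.today()).isoformat()
    with log_path.open("a", encoding="utf-8") as handle:
        handle.write(f"{ENTRY_MARKER}{stamp}\n{summary.strip()}\n\n")


def open_session(log_path: Path, max_entries: int = 10) -> str:
    """Return the most recent entries, oldest first, to load before the next session."""
    if not log_path.exists():
        return ""
    entries: list[str] = []
    for line in log_path.read_text(encoding="utf-8").splitlines():
        if line.startswith(ENTRY_MARKER):
            entries.append(line)        # a dated heading starts a new entry
        elif entries:
            entries[-1] += "\n" + line  # any other line belongs to the current entry
    return "\n\n".join(entry.strip() for entry in entries[-max_entries:])
```

In use, you would call `close_session(Path("running_log.md"), summary_text)` before closing the conversation, then paste or upload the string returned by `open_session(Path("running_log.md"))` at the start of the next one. Capping the number of entries is one possible way to keep the log short; keeping the full history is equally valid.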

The running log solves a problem that static context files can't: the gap between who you were when you wrote the file and who you are now. Most organizational knowledge — the real, current, decision-level knowledge — lives in the conversations that happen day to day. The running log captures those conversations systematically and makes them available to AI on an ongoing basis. It's the difference between briefing your AI assistant on your company once and briefing them after every meaningful development.

We use this approach ourselves at bosio.digital. At the start of every content production session, we load a running document that captures where we are — what's in progress, what's been approved, what decisions have shaped recent work. The AI doesn't need a ten-minute recap. It reads the document and it's current. At session end, we update it. The next session starts with that update already loaded.

What makes the running log genuinely powerful is that it compounds. The first time you write it, it's a simple summary. Three months later, it's a detailed record of how your thinking has evolved, which decisions have held up, which assumptions have been revised, which clients have become central and which have shifted to the periphery. The AI that reads a running log from a mature, well-maintained context isn't just current — it has institutional memory that most organizations never manage to build at all.

A few things that belong in a running log:

- Recent decisions and their rationale — not just what was decided, but why, because the why matters when you revisit the decision later

- Active projects and their current state — a one-line status for anything with ongoing momentum

- Emerging context — things that are becoming more important, relationships that are developing, patterns that are showing up

- Open questions — things you're still figuring out, so the AI knows to hold them lightly rather than treat them as settled

- What changed — specifically the things that shifted since the last update, because change is often more informative than current state
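One way to keep those five categories consistent from entry to entry is to give each log update a fixed shape. The sketch below is an assumption about how you might structure it, not a required format: the class name, field names, section headings, and the sample entries are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class RunningLogEntry:
    """One update to the running log; field names are illustrative."""
    decisions: list[str] = field(default_factory=list)         # decision plus its rationale
    active_projects: list[str] = field(default_factory=list)   # one-line status each
    emerging_context: list[str] = field(default_factory=list)  # things gaining importance
    open_questions: list[str] = field(default_factory=list)    # not yet settled
    what_changed: list[str] = field(default_factory=list)      # shifts since the last update

    def render(self) -> str:
        """Render with the same headings every time so entries stay easy to scan."""
        sections = [
            ("Decisions and rationale", self.decisions),
            ("Active projects", self.active_projects),
            ("Emerging context", self.emerging_context),
            ("Open questions", self.open_questions),
            ("What changed", self.what_changed),
        ]
        blocks = []
        for title, items in sections:
            body = "\n".join(f"- {item}" for item in items) if items else "- (nothing new)"
            blocks.append(f"{title}:\n{body}")
        return "\n\n".join(blocks)


# Hypothetical sample content for illustration only.
entry = RunningLogEntry(
    decisions=["Hold pricing flat this quarter, because two renewals are in negotiation"],
    open_questions=["Whether to follow up on the submitted proposal this week"],
)
print(entry.render())
```

Printing an explicit "(nothing new)" for empty sections is a design choice: it tells the reader, human or AI, that the category was considered and not simply forgotten.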

The running log isn't a project management tool. It's not a CRM. It doesn't replace your note-taking system or your documentation. It's specifically for your AI — structured so the AI can read it quickly and understand what matters right now, not just what was true when you set up the system.

The distinction matters because a document written for humans reads differently from one written for AI. The running log is concise, structured, and current. It prioritizes what's active over what's historical. It uses consistent language so the AI can parse it reliably. Done well, the log takes the AI about thirty seconds to read, and it arrives in the conversation ready to help.

Where Most Teams Start

You don't need a custom AI deployment, a new platform, or a six-month implementation project to get the benefits of persistent AI context. You need a structured document and the discipline to keep it current.

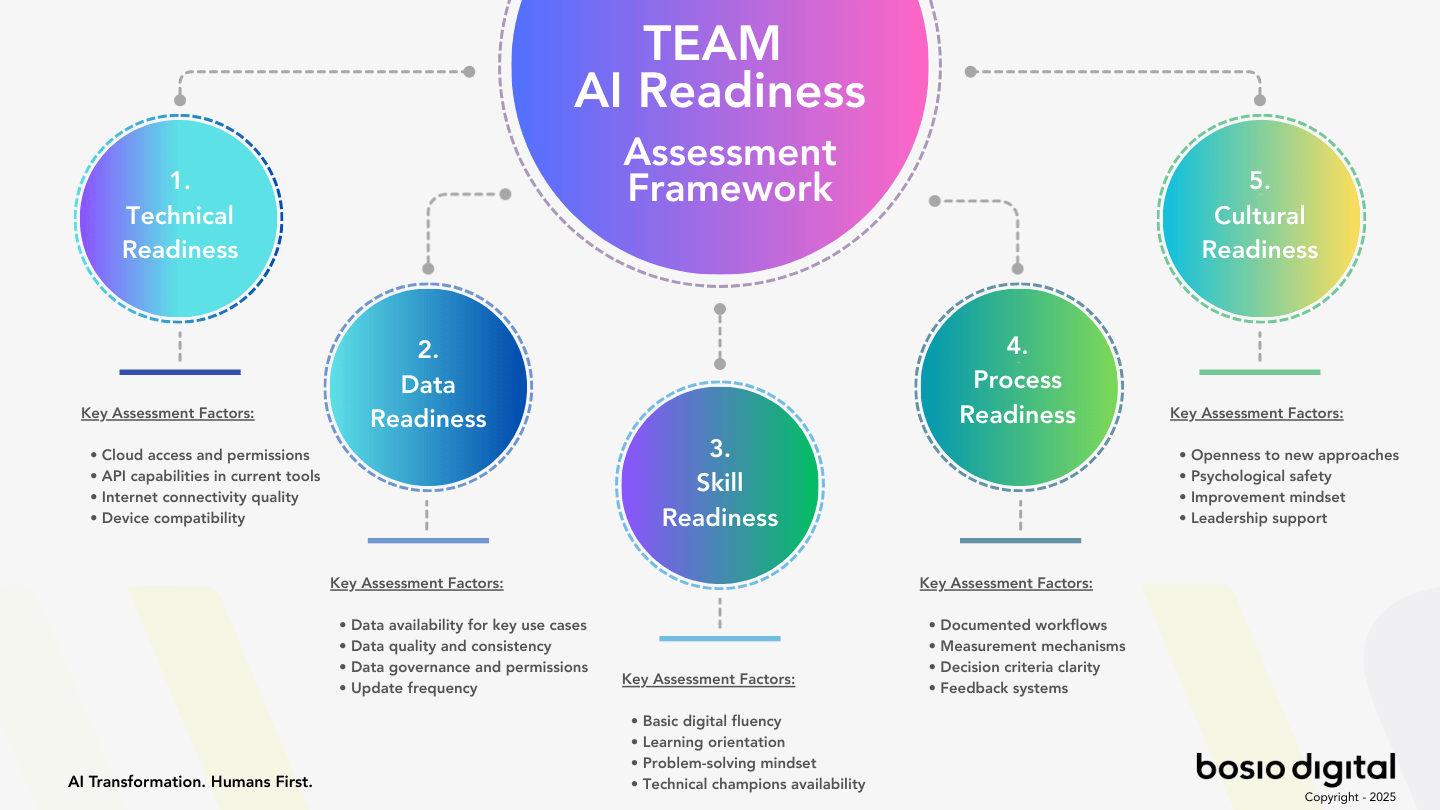

Most teams start with three layers.

The first layer is business context. This is the document that establishes who you are — your business model, your clients, your team structure, your strategic priorities, your voice and tone, the frameworks you use, the language that matters inside your organization. Write it once. Keep it honest. Update it when something fundamental shifts. This document is what turns generic AI into AI that sounds like it actually understands your business. We typically see this run 500–1,000 words — dense with specifics, light on aspiration.

The second layer is project context. For any significant engagement or initiative, a brief document that captures the specifics: what we're trying to accomplish, the constraints we're working within, what's been decided so far, who the key stakeholders are, what good looks like. This isn't a project plan. It's a context brief — the information the AI needs to be genuinely useful for this particular body of work.

The third layer is the running log. This is the one that most organizations skip, and the one that makes the difference. Everything in layers one and two tells the AI what's true in general. The running log tells it what's true right now.
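If the three layers live as plain files, stitching them into one block of text to load at the start of a session is straightforward. The following is a minimal sketch, assuming hypothetical file names (`business_context.md`, `project_context.md`, `running_log.md`) and a hypothetical `=== Title ===` section marker; nothing here is tied to a specific AI tool.

```python
from pathlib import Path

# Hypothetical file names, ordered from most general to most current.
LAYERS = (
    ("Business context", "business_context.md"),
    ("Project context", "project_context.md"),
    ("Running log", "running_log.md"),
)


def build_session_context(context_dir: Path) -> str:
    """Concatenate whichever layer files exist into one labeled block of text."""
    sections = []
    for title, filename in LAYERS:
        path = context_dir / filename
        if not path.exists():
            continue  # a missing layer is skipped rather than treated as an error
        text = path.read_text(encoding="utf-8").strip()
        if text:
            sections.append(f"=== {title} ===\n{text}")
    return "\n\n".join(sections)
```

The returned string is what you would paste into custom instructions or a system prompt, or upload as a file, depending on what your tool supports. Putting the running log last is an assumption on our part: it keeps the most recent information closest to the conversation.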

Getting started is less work than it sounds. The business context document typically takes a few hours to write, then gets refined over the sessions that follow. The project context for your first active engagement might take an hour. The running log starts small, with a few bullet points after your first AI-assisted session, and builds from there.

In our experience, the organizations that see the fastest and most durable returns from AI aren't the ones that deployed the most sophisticated tools. They're the ones that did the unglamorous work of building context: writing down what they know, structuring it for AI, and keeping it current. The 63% of AI implementations that fail at the human level (the subject of "The 63% Problem", listed in Sources) fail not because the technology wasn't powerful enough, but because the AI was never given the organizational knowledge it needed to do anything beyond generic tasks.

The good news: this is fixable. It's not a technology problem. It's a documentation and discipline problem — and those are well within reach for any team that decides to take them seriously.

A few principles worth holding onto as you build:

- Write for AI, not for humans. The context documents that work best are structured, specific, and direct. They're not marketing copy or corporate narrative. They're operational truth.

- Current beats comprehensive. A running log that's updated every session is more valuable than a business context document that's perfectly written but six months stale. Recency matters more than completeness.

- Start with what causes the most re-briefing. If you're explaining the same client background in every session, that client background belongs in a context file. Build the documents that eliminate the most repeated work first.

- Make the running log a closing ritual. Five minutes at the end of every significant AI session. What happened. What was decided. What needs to carry forward. It sounds small. It accumulates into something that changes how AI works inside your organization.
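The "current beats comprehensive" principle is easy to agree with and easy to let slip. One lightweight guard, sketched here as an assumption rather than a recommendation of any specific tooling, is to flag context files that have not been touched in a while. The function name, the `.md` file pattern, and the age threshold are hypothetical choices.

```python
import time
from pathlib import Path
from typing import Optional


def stale_context_files(context_dir: Path, max_age_days: float,
                        now: Optional[float] = None) -> list[str]:
    """List Markdown files in context_dir last modified more than max_age_days ago."""
    current = time.time() if now is None else now
    cutoff = current - max_age_days * 86_400  # seconds per day
    return sorted(
        path.name for path in context_dir.glob("*.md")
        if path.stat().st_mtime < cutoff
    )
```

For example, `stale_context_files(Path("context"), max_age_days=30)` would return the names of any files in a `context` folder that have gone a month without an edit. File modification time is only a proxy: a file can be recent and still wrong, so this complements the closing ritual rather than replacing it.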

This is what "Humans First" AI actually looks like in practice — not replacing people with tools, but building the infrastructure that lets your people work with AI in ways that reflect everything they know. The AI isn't the intelligence here. Your people are. The AI is the system that holds that intelligence between sessions, so it doesn't get lost every time someone closes a tab.

The Question Worth Asking

Most AI conversations in organizations focus on what the tool can do. Can it write? Can it code? Can it analyze? The answers to these questions are largely the same across the major tools now — impressive, and converging toward each other.

The question that actually separates organizations making real progress from those running in place is different: what does your AI know about your business when it shows up to work?

If the answer is "nothing, until we brief it again today" — that's the ceiling you're working under. The tool is powerful. The organizational layer it's operating at is artificially limited.

Building persistent context doesn't require a major project. It requires a document, a discipline, and a decision to treat your organizational knowledge as infrastructure worth maintaining. The compounding starts the day you begin. The only thing that delays it is the assumption that the AI will figure it out on its own.

It won't. It doesn't know your business. That's still your work. But building the context layer is among the most impactful work available to most organizations right now — and the results of getting it right are the kind that make every other AI investment perform better.

What this looks like in practice — the actual setup, the discipline that makes it stick, and the patterns that separate context that compounds from context that gets abandoned — is what the next piece in this series covers.

Frequently Asked Questions

What's the difference between app-level and OS-level AI?

App-level AI operates within a specific tool for a specific task, resetting between sessions. OS-level AI maintains persistent context across tools, sessions, and time — it knows your business before you brief it. The difference isn't about which AI model is more powerful; it's about what layer the AI operates at in your organization and how much of your organizational knowledge it carries forward.

Do I need a special AI tool to give my AI persistent context?

No. You can build persistent context with most modern AI tools using structured context documents — a business context file, project briefs, and a running log. Tools like Claude, ChatGPT, and others support custom instructions or system prompts where this context can be loaded at the start of each session. The discipline of maintaining the context is more important than which specific tool you use.

What should go in a business context file for AI?

A useful business context file typically covers: who you are and what you do, your key clients and active engagements, your team structure and how decisions get made, your strategic priorities, your voice and tone, and the frameworks and language that are specific to your organization. Keep it specific and operational — this is not a mission statement, it's a briefing document written for an AI that will read it at the start of every session.

What is a running log and how is it different from a business context file?

A business context file captures what's true in general about your organization. A running log captures what's true right now — what happened in recent sessions, what was decided, what shifted, what needs to carry forward. The running log is updated at the end of every significant work session and read at the beginning of the next one. It's the AI's mechanism for staying current rather than just informed, and it's the piece most organizations skip and most regret not having.

How long does it take to set up persistent AI context?

The initial business context document typically takes a few hours to write and a few sessions to refine. A project brief for an active engagement might take thirty to sixty minutes. The running log starts as a few bullet points after your first session and builds over time. Most teams can have a functional context system operational within a single week — and the return on that investment compounds with every subsequent session.

Will persistent AI context work across different AI tools?

Yes, though the implementation varies by tool. The documents themselves — your business context file, project briefs, and running log — are tool-agnostic. Most AI tools support loading context at the start of a session through custom instructions, system prompts, or file uploads. The context lives in your documents, not inside any particular AI. This means you're not locked into a specific tool, and your context investment is portable.

How does AI context affect team collaboration?

Shared context files mean every team member is working with AI that has the same organizational knowledge. When context is personal and ad-hoc, AI quality varies by who did the briefing and how much time they had. When context is shared and maintained, the AI becomes a team resource with consistent knowledge of the business — more like shared infrastructure than individual productivity software. Teams that establish shared context also find that the act of writing it down surfaces valuable alignment conversations about what's actually true in the organization.

Sources

- The State of AI — McKinsey & Company

- Work Trend Index Annual Report — Microsoft

- What It Takes to Make AI Work in the Enterprise — Gartner

- The Organizational Learning Challenge of AI — Harvard Business Review

- Why Do Most Transformations Fail? — McKinsey & Company

- Worldwide Artificial Intelligence Spending Guide — IDC

- State of Teams Report — Atlassian

- AI Predictions 2025 — PwC

- Advances in Context Window and Memory — Anthropic

- The Context Advantage: Why Company Knowledge Is Your AI Superpower — bosio.digital

- The 63% Problem: Why AI Fails at the Human Level — bosio.digital

- The Agent Arms Race: What OpenAI, Anthropic, and Google Are Really Building — bosio.digital