Question

What does it mean that Claude is becoming an operating system, and what should businesses do about it?

Quick Answer

In early 2026, Anthropic shipped a sequence of products — Claude Cowork (GA April 9), Claude Design (preview April 17), Routines (preview April 14), Scheduled Tasks (GA February), Skills, the Model Context Protocol, and the Plugin Marketplace (February) — that together turn Claude from a chatbot into an operating system. An operating system manages persistent state, schedules tasks, runs plugins, and governs permissions. Claude now does all of these. But the platform Anthropic shipped is substrate, not solution. Out of the box, Claude does not know your business, does not know your skills, does not enforce your governance, and does not improve over time. Those have to be built. The companies that built them are seeing AI ROI. The companies that did not are using Claude like a chatbot and wondering why nothing changes.

Think about the difference between using Microsoft Word and using Microsoft Windows. Word is a tool you open to do a specific job — write a memo, edit a letter, draft a proposal. Windows is the environment Word runs inside. It schedules tasks, manages files, runs programs, handles permissions. You do not usually think about Windows. It is just there, doing the work that lets everything else work.

Claude was Microsoft Word through 2024 and 2025 — an AI tool you opened for a specific job. Sometime in the first four months of 2026, Anthropic quietly turned it into Microsoft Windows: not a tool, but the environment other tools run inside.

Most of the AI commentary is still arguing about which model is best. That conversation matters for some decisions. It has also crowded out the more important question: what happens to your AI strategy when the AI you have been using stops being a tool and starts being infrastructure?

This article is about that category change — what Claude has become, what it does not give you out of the box, and what the small number of companies seeing real AI returns have built on top of it.

The Category Line Anthropic Crossed in Early 2026

Operating systems do not get announced as operating systems. They get assembled, usually quietly, over a sequence of releases that, taken individually, look like incremental product updates. By the time the assembly is complete, the category has already shifted. Most observers were looking through the wrong frame.

That is what happened with Claude between February and April 2026. Look at the sequence:

- February 2026 — Scheduled Tasks shipped. Claude could now run recurring jobs autonomously, on its own clock. Not a feature of a chatbot.

- February 2026 — The Plugin Marketplace launched. Third parties could now build integrations into Claude's runtime. An ecosystem move.

- April 9, 2026 — Claude Cowork went GA on macOS and Windows. An AI agent with file access, task planning, parallel execution, and a sandboxed VM, with enterprise analytics, OpenTelemetry, and role-based access controls. The desktop manifestation of the OS.

- April 14, 2026 — Routines launched in research preview. A cloud-hosted automation tier that runs when your laptop is closed. Cron for AI.

- April 17, 2026 — Claude Design launched in research preview. Visual asset creation that reads your codebase and design system. The OS now produces work product, not just text.

- Through the period — Skills and MCP matured. Reusable domain expertise modules plus an open standard for tool integration. The OS now has applications and a driver model.

Read these as isolated features and the picture is small. Read them as components of a single architecture, and the picture is something else. Claude has a runtime (the model). It has a process scheduler (Scheduled Tasks, Routines). It has a file system (Cowork's access to your local files). It has applications (Skills, Claude Design). It has an extension architecture (the Plugin Marketplace). It has an integration standard (MCP). It has a permissions model (enterprise RBAC, sandboxed execution).

The components of an operating system, in other words. Shipped in a sequence that obscured the assembly until the assembly was complete.


What Makes Something an Operating System

The term "operating system" gets thrown around loosely. For an executive deciding what to invest in, the distinction matters. Most software is an application: it does one thing inside an environment that someone else built. An operating system is the environment. It is what other things run inside.

The functional components of an operating system, set out plainly:

- Persistent state. The system remembers what it knows across sessions. You do not start from zero every time you open it.

- A file system. The system can read and write to organized storage. Information persists in a queryable form.

- Process scheduling. Multiple tasks can run in parallel, in the background, on a schedule. The system does not stop when you stop watching.

- An application layer. Other programs run inside the environment. Each program has a defined purpose. The system orchestrates them.

- An extension architecture. Third parties can build new applications without rewriting the operating system. The ecosystem grows beyond what the original vendor builds.

- A permissions model. Who can do what is defined by the system. Some actions require authorization. Others happen automatically within scope.

- An integration standard. The system talks to external services through a known protocol, so anyone can extend its reach without custom plumbing.

That is the working definition. A plain chatbot has none of those. ChatGPT as most people first used it had none of those. The Claude that most executives still think of as "the AI we use for writing" has none of those.

The Claude that exists in May 2026 has all of them. Persistent state lives in skills and configured context. The file system is Cowork's local access. Process scheduling is Scheduled Tasks plus Routines. The application layer is Skills plus Claude Design. The extension architecture is the Plugin Marketplace. The permissions model is enterprise RBAC plus sandboxed execution. The integration standard is MCP.

This is not a stretched analogy. This is the standard structural definition of an operating system applied to what Anthropic shipped. The category change is technical, not rhetorical.
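The component-to-feature mapping above can be written down as a simple checklist. The sketch below is illustrative only: the dictionary restates the mapping from this section, while `missing_components` and the sample deployment are hypothetical names introduced here, not part of any Anthropic API.

```python
# Illustrative only: the OS components from this section mapped to the
# Claude features the article names for each one.
OS_COMPONENTS = {
    "persistent_state": ["Skills", "configured context"],
    "file_system": ["Cowork local file access"],
    "process_scheduling": ["Scheduled Tasks", "Routines"],
    "application_layer": ["Skills", "Claude Design"],
    "extension_architecture": ["Plugin Marketplace"],
    "permissions_model": ["enterprise RBAC", "sandboxed execution"],
    "integration_standard": ["MCP"],
}


def missing_components(enabled_features: set[str]) -> list[str]:
    """Return the OS components that no enabled feature currently backs."""
    return [
        component
        for component, features in OS_COMPONENTS.items()
        if not any(feature in enabled_features for feature in features)
    ]


# Hypothetical deployment: Cowork installed, one Routine scheduled, nothing else.
deployment = {"Cowork local file access", "Routines", "sandboxed execution"}
for component in missing_components(deployment):
    print("not yet in use:", component)
```

Running a check like this against what your team has actually switched on shows how much of the operating system is in use, as opposed to merely available.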

The Difference Between a Platform and an Architecture

Here is where most of the conversation about "Claude as an OS" goes wrong, and where the bosio argument starts.

What Anthropic built is a platform. A platform is a generic environment. It is designed to work for everyone, which means it works for no one specifically. The same Cowork install that runs on a marketing executive's laptop in Chicago runs identically on a finance team's machine in Frankfurt. The platform makes no assumptions about who you are, what you do, or how your organization makes decisions. That is a feature, not a flaw. Platforms have to be generic to be platforms.

What your organization needs is an architecture. An architecture is what makes a generic platform behave as if it were built specifically for your business. It is the layer between the operating system and the people using it that captures what your organization knows, does, and decides. The platform does not have this. You have to build it. And whether you build it well is the variable that separates the companies seeing real AI returns from the companies that are not.

This distinction is concrete, not abstract. Take a single use case: drafting a client proposal. On a platform-only deployment, the executive opens Cowork, types a description of what they want, and gets a proposal that is articulate, well-structured, and indistinguishable from what every other business using AI is producing. The output is generic because the platform is generic. On an architecture deployment, the same executive types the same description, and the system reaches for the proposal-writing skill, which loads the past five winning proposals, your standard pricing logic, your service descriptions, and your positioning against the alternatives the client is considering. The output reflects how your firm writes proposals — voice, structure, pricing, scope — because the architecture taught it to.

The platform is the same in both cases. The difference is what was built on top.

This is why the same gap shows up everywhere the industry tracks AI returns. The mid-market firms that built the architecture are seeing returns. The ones that bought the platform and stopped there are not. We covered the structural reasoning behind that gap in our article on why 80% of AI investments aren't paying off and what the 20% built. The short version: the platform is necessary but not sufficient. The architecture is what determines what the platform does in your hands.

What Claude Out of the Box Doesn't Give You

Stand the platform up in your organization tomorrow. Claude Cowork installs in fifteen minutes. Connect a few plugins from the Marketplace. Schedule a routine or two. By the end of the first afternoon you have an AI environment that, on paper, has all the components of an operating system running in your business.

Here is what it still does not have.

It does not know your business. The model has read most of the public internet. It has never read your client roster, your pricing decisions, your service descriptions, your past wins, your past losses, or the reasoning behind any of it. None of that is part of the platform. None of it shows up in Claude's outputs unless someone explicitly puts it there. The first session your team has with Cowork is a session with a capable stranger.

It does not have skills. The Skills feature exists in the platform — the capability to package domain knowledge into reusable units that Claude can apply. The skills themselves do not. There is no proposal-writing skill, no compliance-review skill, no client-communication skill. You write them. If you do not, the agent has the runtime to apply skills it does not have, which is to say, it has nothing.

It does not enforce your governance. Cowork runs in a sandbox with enterprise role-based access controls. The platform supports the concept of permissions. The platform does not know what your organization considers approvable versus what requires escalation. It does not know which decisions the AI can make autonomously and which require a human checkpoint. It will do whatever the user tells it to do, within the runtime sandbox, with no understanding of organizational risk thresholds. The governance layer has to be designed and configured. Until then, every user is making personal judgment calls about what the AI should do — which means there is no shared governance at all.

It does not improve over time. The model improves when Anthropic ships an upgrade. The platform improves when Anthropic adds features. Your organization's specific use of the platform does not improve unless someone has designed a feedback loop that captures what worked, what failed, and what should be encoded back into the architecture for the next round. Without that loop, your AI in month twelve is the same as your AI in month two. The platform got better. Your use of it did not.

This is what Cowork's adoption looks like in most organizations where the architecture was never built. The platform is in production. The behaviors are unchanged. The investment is real. The compounding is not.

Subscribe to our AI Briefing!

AI Insights That Drive Results

Join 500+ leaders getting actionable AI strategies twice a month. No hype, just what works.

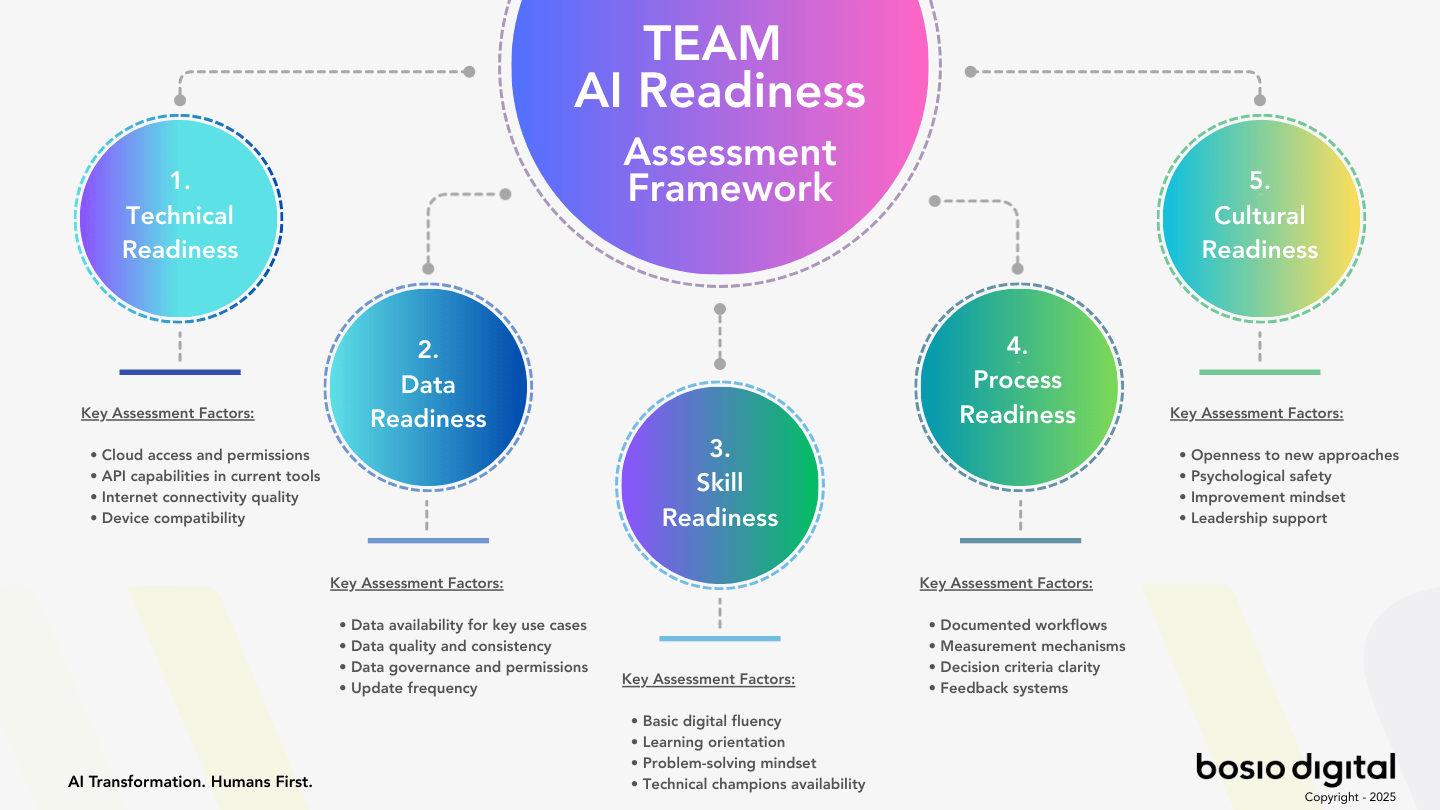

The Three Architectural Decisions

The companies that are seeing AI compound made the same three decisions before deploying anything substantial on the platform. Each decision is about what to build, not what to buy. None of the three is gated by capital or by technical access. All three are gated by organizational will.

Decision 1 — Build the context layer before the agents.

Encode what the organization actually knows in a maintainable, queryable form. The context layer is not a marketing document. It is the operating knowledge of the business — customer segments, decision history, brand voice, pricing logic, service definitions, what worked last quarter, what failed and why. Most of this knowledge currently lives in individual people's heads, in slide decks nobody opens, and in wikis nobody updates. The first job of architecture is to move it into a form that the platform can read every time the platform does work.

The companies that skipped this step deployed Cowork on top of an organization the platform did not know. The outputs are competent and generic. The work it produces could have come from any firm. That is the signature of a context-free deployment.

Decision 2 — Build skills, not tool stacks.

The platform supports Skills as a feature. Skills are reusable units of domain knowledge that Claude can apply to any task that needs them. A proposal-writing skill knows how your firm writes proposals. A compliance-review skill knows how your firm reviews contracts. A client-communication skill knows your voice with different segments. Build a library of these and the system gets more useful every time you refine one. The same skill is used by every agent, every Routine, every Scheduled Task that needs it. The case for skills over specialized agents follows the design pattern Anthropic itself surfaced in late 2025. Most companies are still buying agents. The 20% are encoding skills.

The difference compounds. A specialized agent improves only when someone updates that particular agent. A skill is refined once, in one place, and every agent that uses it benefits. Twelve specialized agents are twelve separately maintained systems. Twelve skills used by one capable agent are one knowledge base that improves under shared discipline.
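A minimal sketch of why a shared skill compounds, under stated assumptions: `Skill`, `SkillLibrary`, and `Consumer` are hypothetical classes invented for this illustration and do not reflect how Anthropic's Skills feature is implemented. The point it shows is structural: consumers look a skill up by name at run time, so one refinement reaches all of them.

```python
from dataclasses import dataclass


@dataclass
class Skill:
    name: str
    version: int
    guidance: str


class SkillLibrary:
    """Hypothetical single source of truth for organizational skills."""

    def __init__(self) -> None:
        self._skills: dict[str, Skill] = {}

    def publish(self, name: str, guidance: str) -> Skill:
        """Add a skill, or refine an existing one and bump its version."""
        current = self._skills.get(name)
        version = current.version + 1 if current else 1
        skill = Skill(name, version, guidance)
        self._skills[name] = skill
        return skill

    def get(self, name: str) -> Skill:
        return self._skills[name]


@dataclass
class Consumer:
    """Anything that does work: an agent, a Routine, a Scheduled Task."""

    label: str
    skill_name: str

    def build_instructions(self, library: SkillLibrary, task: str) -> str:
        # The skill is looked up at run time, never copied into the consumer.
        skill = library.get(self.skill_name)
        return f"[{skill.name} v{skill.version}] {skill.guidance}\nTask: {task}"


library = SkillLibrary()
library.publish("proposal-writing", "Lead with the client's problem.")
consumers = [
    Consumer("desktop agent", "proposal-writing"),
    Consumer("weekly routine", "proposal-writing"),
]

# One refinement, made once, in one place.
library.publish(
    "proposal-writing",
    "Lead with the client's problem. Quote pricing as a range.",
)

for consumer in consumers:
    header = consumer.build_instructions(library, "Draft a proposal").splitlines()[0]
    print(consumer.label, "->", header)
```

Had each consumer carried its own copy of the guidance, the same refinement would have required two separate updates, which is the maintenance cost the twelve-agent comparison describes.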

Decision 3 — Design governance before you need it.

For every domain where the AI will do work, define three things: what the AI does autonomously, what requires human approval before action, and what requires human review after action. These three answers may differ by domain. The discipline of having answered them at all is what separates organizations that can safely expand AI autonomy from organizations that cannot.

This is what Anthropic's research on trustworthy agent deployment made explicit: organizations granting agents more autonomy over time can only do that if the governance framework is built first. Trust is not a feeling. It is a layered set of controls that makes expansion possible without losing oversight. Organizations that bolt governance on after an agent misbehaves have to roll the autonomy back. Organizations that designed governance in from the start can confidently expand it.
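The three answers from Decision 3 can be captured as a small lookup table. This is a sketch under stated assumptions: the domains, action names, and helper functions below are hypothetical examples, and the default of requiring approval for anything unlisted is a design choice made here, not something the platform enforces.

```python
from enum import Enum


class Mode(Enum):
    AUTONOMOUS = "autonomous"          # the AI acts without a checkpoint
    APPROVE_BEFORE = "approve_before"  # a human approves before action
    REVIEW_AFTER = "review_after"      # the AI acts, a human reviews afterwards


# Hypothetical rules; a real table would come from your own governance design.
RULES: dict[tuple[str, str], Mode] = {
    ("finance", "routine_report"): Mode.AUTONOMOUS,
    ("client_comms", "send_email"): Mode.APPROVE_BEFORE,
    ("content", "publish_draft"): Mode.REVIEW_AFTER,
}


def decide(domain: str, action: str) -> Mode:
    """Look up the rule; unlisted work falls back to the most restrictive mode."""
    return RULES.get((domain, action), Mode.APPROVE_BEFORE)


def may_run_now(domain: str, action: str, approved: bool = False) -> bool:
    """True if the action can proceed at this moment."""
    if decide(domain, action) is Mode.APPROVE_BEFORE:
        return approved
    return True


def needs_review_after(domain: str, action: str) -> bool:
    """True if a human must review the result once the action has run."""
    return decide(domain, action) is Mode.REVIEW_AFTER


print(decide("finance", "routine_report").value)
print(may_run_now("client_comms", "send_email"))
print(may_run_now("legal", "sign_contract"))  # unlisted, so approval is required
```

Defaulting unlisted work to approval means that expanding autonomy is always an explicit edit to the table, which is what lets it be expanded deliberately rather than rolled back after an incident.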

Those are the three. The same three decisions show up in every analysis of why companies are or are not seeing AI returns. They are not novel. They are not complicated. They are what gets skipped when the temptation is to deploy first and design later.

The Fourth Dimension: Learning Loops

When the three architectural decisions are in place, a fourth dimension becomes possible, but it only materializes if you design for it explicitly. Without intent, even a well-built architecture can stop improving.

Learning loops are the mechanism that turns a static architecture into a compounding one. The pattern is simple in concept and disciplined in execution: every time the system produces work, capture what worked, what failed, and why; encode the lesson back into the skill, the context, or the governance rule that governed it; the next instance of that work starts from a better baseline.

This is the difference between an architecture that decays and an architecture that compounds. A self-improving AI system is a strategic asset; a static one is a depreciating one. The platform Anthropic ships does not include this loop. You can run Cowork for a year and the model will not have learned anything specific about your business unless you designed the mechanism that captures the learning and feeds it back.
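A minimal sketch of such a capture-and-feed-back mechanism, assuming a plain in-memory log. `Lesson`, `LearningLog`, and the sample entries are hypothetical; the sketch only shows the shape of the loop: capture what worked or failed and why, tag the layer that should absorb it, and hand the grouped lessons to whoever owns that layer.

```python
from collections import defaultdict
from dataclasses import dataclass

# The three layers a lesson can be encoded back into.
TARGETS = {"skill", "context", "governance"}


@dataclass
class Lesson:
    work_item: str
    outcome: str      # "worked" or "failed"
    why: str
    target: str       # which layer should absorb the lesson
    target_name: str  # which skill, context document, or rule


class LearningLog:
    def __init__(self) -> None:
        self._open: list[Lesson] = []

    def capture(self, lesson: Lesson) -> None:
        if lesson.target not in TARGETS:
            raise ValueError(f"unknown target layer: {lesson.target}")
        self._open.append(lesson)

    def weekly_review(self) -> dict[tuple[str, str], list[str]]:
        """Group open lessons by the artifact they should update, then clear the queue."""
        grouped: dict[tuple[str, str], list[str]] = defaultdict(list)
        for lesson in self._open:
            key = (lesson.target, lesson.target_name)
            grouped[key].append(f"{lesson.outcome}: {lesson.why}")
        self._open.clear()
        return dict(grouped)


log = LearningLog()
log.capture(Lesson("proposal A", "failed", "pricing read as fixed", "skill", "proposal-writing"))
log.capture(Lesson("proposal B", "worked", "opened with client problem", "skill", "proposal-writing"))
log.capture(Lesson("client email", "failed", "sent without approval", "governance", "client_comms"))

for (layer, name), notes in log.weekly_review().items():
    print(layer, name, notes)
```

The code is the easy part. The loop only closes if someone owns the review and actually writes the grouped lessons back into the skill, the context, or the rule.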

The reason most architectures stall in month six is not that the foundation was wrong. It is that nobody designed how the foundation gets refined. Skills that were good in month one do not get sharper. Context that was accurate in February does not get updated when the business changes in May. Governance rules written for one set of use cases do not adapt as new domains come online. The compounding stops, and the architecture starts to feel inert.

Learning loops are not technical infrastructure. They are an organizational practice. Someone owns the question of what changed this week that should be reflected in the architecture. Someone owns the discipline of writing those changes into the skills, the context, the governance. Without that ownership, the loops do not close. With it, the architecture gets more valuable every month.

This is why the fourth dimension emerges from the three — not as a separate decision, but as the quality that distinguishes architecture that lives from architecture that ossifies. Done well, the three decisions produce a system that learns. Done badly, the three decisions produce a snapshot that ages.

What This Looks Like in Production

bosio.digital has been operating an internal AI operating system on this architecture — context, skills, governance, and learning loops — since the start of 2026. The same system we deliver to clients runs every day inside our own business. We did not invent the pattern. We adopted it for ourselves first because it was the only honest way to recommend it.

What it looks like, in practice, is unglamorous and specific.

The context layer is a maintained set of structured documents — the firm's brand voice, our client roster, our service descriptions, our financial logic, our content patterns, our decision history. None of it lives in one person's head. All of it lives in a queryable form that Cowork reads every time it does work. The maintenance discipline is real: someone owns each piece of context, someone is accountable for keeping it current, and the updates happen on a defined cadence rather than when somebody remembers.
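The maintenance discipline described above (an owner per document, a defined review cadence) is easy to make checkable. The sketch below is illustrative, not our production tooling: `ContextDoc`, `stale_docs`, the owner labels, the dates, and the cadences are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class ContextDoc:
    name: str
    owner: str
    last_reviewed: date
    review_every_days: int

    def is_stale(self, today: date) -> bool:
        """A document is stale once its review cadence has lapsed."""
        return today - self.last_reviewed > timedelta(days=self.review_every_days)


def stale_docs(docs: list[ContextDoc], today: date) -> list[ContextDoc]:
    """Return the documents whose owners owe a review."""
    return [doc for doc in docs if doc.is_stale(today)]


# Hypothetical context layer with placeholder owners and cadences.
docs = [
    ContextDoc("brand_voice", "owner-a", date(2026, 1, 15), review_every_days=90),
    ContextDoc("pricing_logic", "owner-b", date(2026, 4, 20), review_every_days=30),
    ContextDoc("service_descriptions", "owner-a", date(2026, 3, 1), review_every_days=90),
]

for doc in stale_docs(docs, today=date(2026, 5, 1)):
    print(f"{doc.name}: review overdue, owner {doc.owner}")
```

A check like this could run as a scheduled job, so that stale context surfaces on a cadence instead of when somebody happens to notice a wrong output.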

The skills library is a folder structure where each skill is a unit of organizational expertise. When we write articles, an articles skill encodes our voice, structure, and citation discipline. When we draft proposals, a proposals skill encodes our positioning, pricing, and scope language. When we run financial reports, a finance skill encodes our categorization standards. The skills are not scripts. They are knowledge modules that the platform applies when relevant.

The governance layer is explicit and small. For every category of work, we have defined what gets done autonomously, what requires Sascha or Laura's approval before action, and what gets escalated. Communications to specific client segments require approval. Routine financial reports do not. Skill updates require a review before they ship to production. Context updates can be made unilaterally by the owner of that context piece. The rules are written down because written-down rules can be applied consistently and changed deliberately.

The learning loops are the part that makes the rest of it worth doing. Every Friday, we audit what shipped that week — what landed, what missed, what required correction. The lessons go into skill updates, context refinements, or governance changes the following week. The architecture in month five is better than the architecture in month one because we treat refinement as operational work, not an optional improvement.

This is not a transformation program. It is a knowledge project, a design project, and a maintenance discipline executed in order. For a mid-market organization, the architecture is buildable in eight to twelve weeks. The technology is small. The organizational work is significant. The compounding is real.

Three Questions Before You Build on Claude

The platform is in your hands either way. The architectural choice is what determines whether the next twelve months produce returns or just produce activity. Three diagnostic questions surface the choice before it becomes architectural debt.

1. Does Claude already know your business, or does every session start from zero?

Open a new Cowork session with no context-setting and ask it to draft something typical for your firm — a client email, an internal briefing, a section of a proposal. Read the output critically. If it could have come from any organization in your industry, your context layer does not yet exist. If it reflects how your firm specifically thinks, writes, and decides, your context layer is real. Most organizations fail this test. The architecture starts here.

2. Do you have a skills library — or do you have prompts?

If your team's accumulated expertise lives in individual people's prompt notebooks, in browser tabs, in custom GPTs nobody else can use — you have prompts. Useful, but not compounding. If the way your firm writes proposals, reviews contracts, or analyzes financials lives in a structured skill that any agent can apply, you have a skills library. The platform supports both. The architecture only starts when the second exists.

3. Can you name your governance rules, or are you discovering them by accident?

For your three highest-leverage AI use cases, can you write down in one paragraph what the AI does autonomously, what requires approval before action, and what gets reviewed after action? If those rules exist on paper, you have governance. If they exist as informal team norms that vary by user, you are discovering them by accident — usually after the AI does something that prompts a hallway conversation about what should not have happened.

Start Building

Audit Your AI Architecture in Twelve Minutes

Paste this prompt into Claude. The diagnostic surfaces whether your AI deployment is building architecture or just running on the platform — and gives you the next step.

I want to assess whether my organization is building architecture on top of Claude or just running on the platform. Ask me these questions one at a time:

1. If I open a new Claude session with no context-setting and ask for a typical piece of work from my firm, will the output reflect how we specifically think and write, or will it sound generic?

2. Where does the expertise that takes our senior people years to develop currently live? In their heads, in personal prompt notebooks, in shared and maintained skills, or somewhere else?

3. For my top three AI use cases, can I write down in one paragraph what the AI does autonomously, what requires approval, and what gets reviewed after?

4. When the AI produces something especially good or especially bad, where does that lesson get encoded so the next instance is better?

5. If my most experienced person left tomorrow, what percentage of their judgment would remain accessible to the rest of the team through our AI system?

After I answer all 5, give me:

- Honest read: am I building architecture on top of the platform, or just running on it?

- The single architectural decision I should make next

- A 30-day plan to begin building that piece into my operating model

The diagnostic shows the gap. Closing it is a different project.

The Architectural Choice

Anthropic crossed a category line in early 2026. Claude is no longer a chatbot. The product surface has the structural components of an operating system, and the platform is in production in most organizations that take AI seriously.

The line that separates the next twelve months for your business is not whether you adopted the platform. Most organizations did. The line is whether you are building the architecture that makes the platform behave as if it were built for you — or whether you are using a generic operating system to produce generic work and wondering why nothing compounds.

The platform is the substrate. The architecture is the differentiator. The choice is the only one that matters this year.


Frequently Asked Questions

Is Claude really an operating system, or is that an analogy?

It is a structural claim, not an analogy. An operating system, defined functionally, has persistent state, a file system, process scheduling, an application layer, an extension architecture, a permissions model, and an integration standard. Claude in May 2026 has all of these — Skills and configured context (persistent state), Cowork's local file access (file system), Scheduled Tasks and Routines (process scheduling), Skills and Claude Design (applications), the Plugin Marketplace (extension architecture), enterprise RBAC plus sandboxed execution (permissions), and the Model Context Protocol (integration standard). The category change is technical, not rhetorical.

What is the difference between Claude as a platform and Claude as an architecture?

The platform is what Anthropic shipped — a generic environment that works the same way for every customer. The architecture is what you build on top of the platform to make it behave as if it were designed specifically for your organization. The platform is necessary but generic. The architecture is what captures organizational knowledge, encodes domain expertise as reusable skills, defines governance rules, and designs the learning loops that make the system improve over time. Most companies have the platform. Few have the architecture. That gap is the difference between organizations seeing AI ROI and the rest.

What does Claude Cowork actually do?

Claude Cowork is a desktop AI agent that runs on macOS and Windows, with direct access to local files, the ability to plan and execute multi-step tasks, parallel execution of subagents, and a sandboxed virtual machine for safety. It went generally available on April 9, 2026, with enterprise analytics, OpenTelemetry, and role-based access controls. Cowork is the desktop manifestation of the operating system — the surface where the platform's components show up as a working environment.

What are AI skills and why do they matter more than tools?

An AI skill is a structured unit of organizational knowledge — your specific way of doing a category of work — encoded in a reusable form that any AI agent can apply. The skill knows how your firm writes proposals, reviews contracts, analyzes financials, or communicates with specific client segments. The value compounds because a single skill is used by every agent that needs it, and improvements to the skill benefit every future use. Tool stacks fragment knowledge across separately maintained systems. Skill libraries concentrate it.

How long does it take to build the architecture on top of Claude?

For a mid-market organization, building the initial context layer, an opening skills library covering the highest-leverage workflows, and the governance rules for the first wave of use cases takes eight to twelve weeks. The technology is small. The organizational work — deciding what to encode, who owns what, how the maintenance discipline runs — is what determines the timeline. After the initial build, the architecture compounds as long as the learning loops are maintained.

Do I need to be on Claude specifically, or does this apply to other AI platforms?

The architectural principles — context, skills, governance, learning loops — apply to any operating-system-grade AI platform. As of May 2026, Claude is the platform that has shipped the most complete set of OS components, which is why the article focuses there. Microsoft and Google are building similar surfaces. The architectural decisions transfer; the specific implementation depends on the platform. Choosing the platform is the smaller decision. Building the architecture is the bigger one.

What is the relationship between this and the "humans above the loop" concept?

"Humans above the loop" describes the operating model that becomes possible once the architecture is in place. With context, skills, and governance in place, humans can step back from executing tasks within an AI process and instead judge outcomes the system produces. The shift only works when the architecture is trustworthy enough that humans can step back without losing control. Without the architecture, humans stay in the loop by necessity — which limits the leverage AI can produce. The architecture is the precondition for the operating model the next stage of AI-enabled work depends on.

Sources

- Claude Cowork — Anthropic product page. GA on macOS and Windows, April 9, 2026. Desktop AI agent with file access, task planning, parallel execution, sandboxed VM, enterprise analytics.

- Get Started with Claude Cowork — Anthropic Help Center. Setup, capabilities, enterprise controls.

- Introducing Claude Design — Anthropic, April 17, 2026. Visual asset creation, design system integration, powered by Claude Opus 4.7.

- Anthropic launches Claude Design — TechCrunch, April 17, 2026. Independent coverage of the launch and target users.

- Don't Build Agents, Build Skills Instead — Barry Zhang & Mahesh Murag, Anthropic, November 21, 2025. The skills-first architectural framework that underpins Decision 2.

- 80% of Companies Aren't Seeing AI ROI — bosio.digital. The three architectural decisions in the context of why most AI investments don't pay off.

- Stop Building Agents. Build Skills. — bosio.digital. Why skills outperform specialized agents.

- The Self-Improving AI — bosio.digital. Learning loop architecture as the mechanism that makes architecture compound.

- Trustworthy AI Agents — bosio.digital. Governance architecture for safe agent autonomy expansion.

- The Current State of AI for Business: A Practitioner's Map — bosio.digital. The four-stage maturity arc and where operating-system-grade AI fits.

- AI Agent Sprawl — bosio.digital. The failure pattern when the platform is deployed without architecture.

- bosio.digital internal AI operating system — production reference implementation of context + skills + governance + learning loops, operating since the start of 2026.