Question

What does Google's AI optimization guide mean for mid-market AI strategy?

Quick Answer

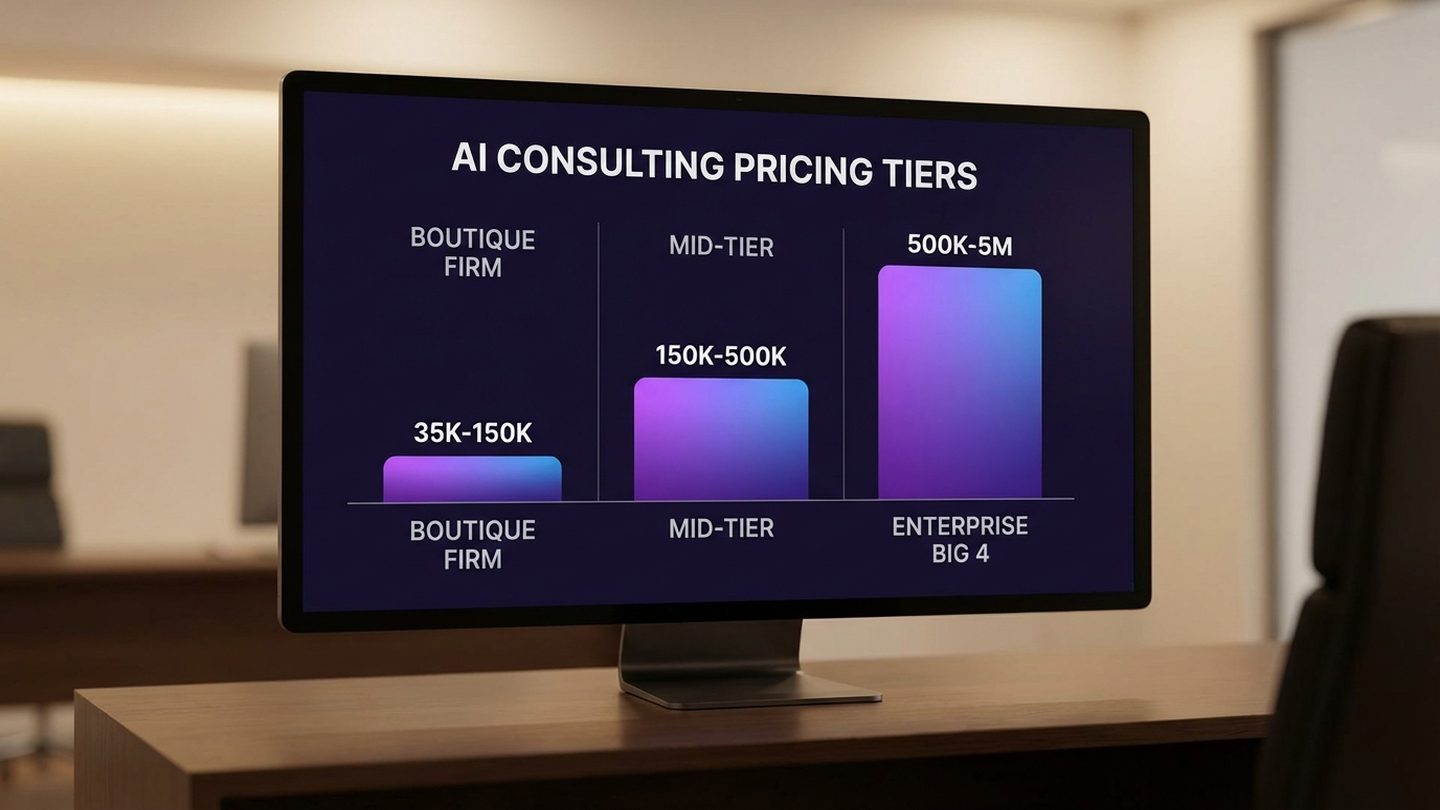

Google published its consolidated guide to optimizing for AI search on May 15, 2026, stating plainly: "There are no additional requirements to appear in AI Overviews or AI Mode." The guide eliminates years of speculation about a separate AI-SEO discipline — and quietly exposes a harder truth that mid-market leaders need to act on. AI citation is no longer determined by content quality alone. The overlap between pages that rank and pages that AI cites has collapsed from roughly 75% in mid-2025 to a measured 17–38% in early 2026 (Ahrefs and BrightEdge studies aggregated by ALM Corp). Good pages are being skipped. The question is why — and what architecture, not tactics, is the answer.

Google published a consolidated guide to optimizing for its AI search features on May 15, 2026. The SEO community read it in about twenty minutes and reached a verdict: nothing new here. Barry Schwartz at Search Engine Land described the document as a summary of "a lot of the advice Google has posted on its blogs, videos, spoken about at in-person events and more."

That read is correct. And it misses what actually matters.

For mid-market leaders, the significance of this document is not what it teaches. It is what it ends. Google has formally closed the door on a category of vendor spend that has been growing since 2024. And in doing so, it has quietly pointed toward a strategic question that is far more expensive to ignore than any SEO budget line.

What Google Actually Published Today (and Did Not Publish)

The guide lives at developers.google.com/search/docs/fundamentals/ai-optimization-guide and consolidates Google's guidance on appearing in AI Overviews, AI Mode, and what the company is calling "agentic experiences." The central claim is direct: "There are no additional requirements to appear in AI Overviews or AI Mode, nor other special optimizations necessary."

The recommendations themselves are ten-year-old Google best practice: E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness), structured data via Schema.org markup, solid page experience, clear internal linking, and the consistent production of substantive, non-thin content. Nothing in the guide requires a new budget line, a new vendor, or a new workflow.

The mythbusting section is particularly pointed. The guide explicitly states that llms.txt files are not required for AI Overviews or AI Mode. It warns against AI-specific content rewrites and content chunking pipelines as unnecessary. It does not endorse any form of prompt injection or crawler-targeted content. What it does endorse is the same body of practice Google has recommended for a decade, now also applied to the surfaces where AI generates responses.

A thin section on "agentic experiences" gestures at Universal Commerce Protocol and agentic browsing, but offers no concrete recommendations. That section is a placeholder. The rest of the document is consolidation.

The question the guide does not answer, and where the real work begins, is why the overlap between pages that rank well and pages that AI cites has collapsed so sharply. Two 2026 studies make the point: Ahrefs research found that 38% of Google AI Overview citations come from top-10 ranked pages, while BrightEdge measured the same overlap at roughly 17%. Both were aggregated and analyzed by ALM Corp. Less than a year earlier, that overlap stood at roughly 75% (Ahrefs, mid-2025). Good content is still ranking. The same content is increasingly not being cited.

That is not a content quality problem. It is an architecture problem.
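To make the overlap metric concrete: as described above, it is the share of AI Overview citations that also appear in the top 10 organic results for the same query. The Python sketch below computes that share for your own sample, assuming you have already recorded, per query, the URLs an AI answer cited and the top-10 ranked URLs. The function names and the URL-normalization rule (ignore the scheme, a leading `www.`, and a trailing slash) are illustrative assumptions; this is not the methodology Ahrefs or BrightEdge used.

```python
from urllib.parse import urlsplit


def normalize(url: str) -> str:
    """Reduce a URL to host + path so trivial variants compare equal.

    Ignores the scheme, a leading "www.", and a trailing slash (an assumption;
    adjust to how your own site canonicalizes URLs).
    """
    parts = urlsplit(url.strip())
    host = parts.netloc.lower().removeprefix("www.")  # Python 3.9+
    return host + parts.path.rstrip("/")


def citation_overlap(citations: dict[str, list[str]],
                     top10: dict[str, list[str]]) -> float:
    """Share of all AI citations whose URL also ranks in the top 10 for the same query."""
    cited_total = 0
    in_top10 = 0
    for query, cited_urls in citations.items():
        ranked = {normalize(u) for u in top10.get(query, [])[:10]}
        for url in cited_urls:
            cited_total += 1
            if normalize(url) in ranked:
                in_top10 += 1
    return in_top10 / cited_total if cited_total else 0.0


if __name__ == "__main__":
    # Hypothetical sample: two queries, three citations in total.
    citations = {
        "ai readiness checklist": ["https://example.com/a", "https://other.com/x"],
        "is llms.txt required": ["https://example.com/b/"],
    }
    top10 = {
        "ai readiness checklist": ["https://www.example.com/a", "https://third.com/y"],
        "is llms.txt required": ["https://example.com/b"],
    }
    print(round(citation_overlap(citations, top10), 2))  # prints 0.67
```

On a handful of queries this number is noisy; it only becomes meaningful across a larger, consistent query set that you re-measure over time.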

Free Assessment · 10–15 min

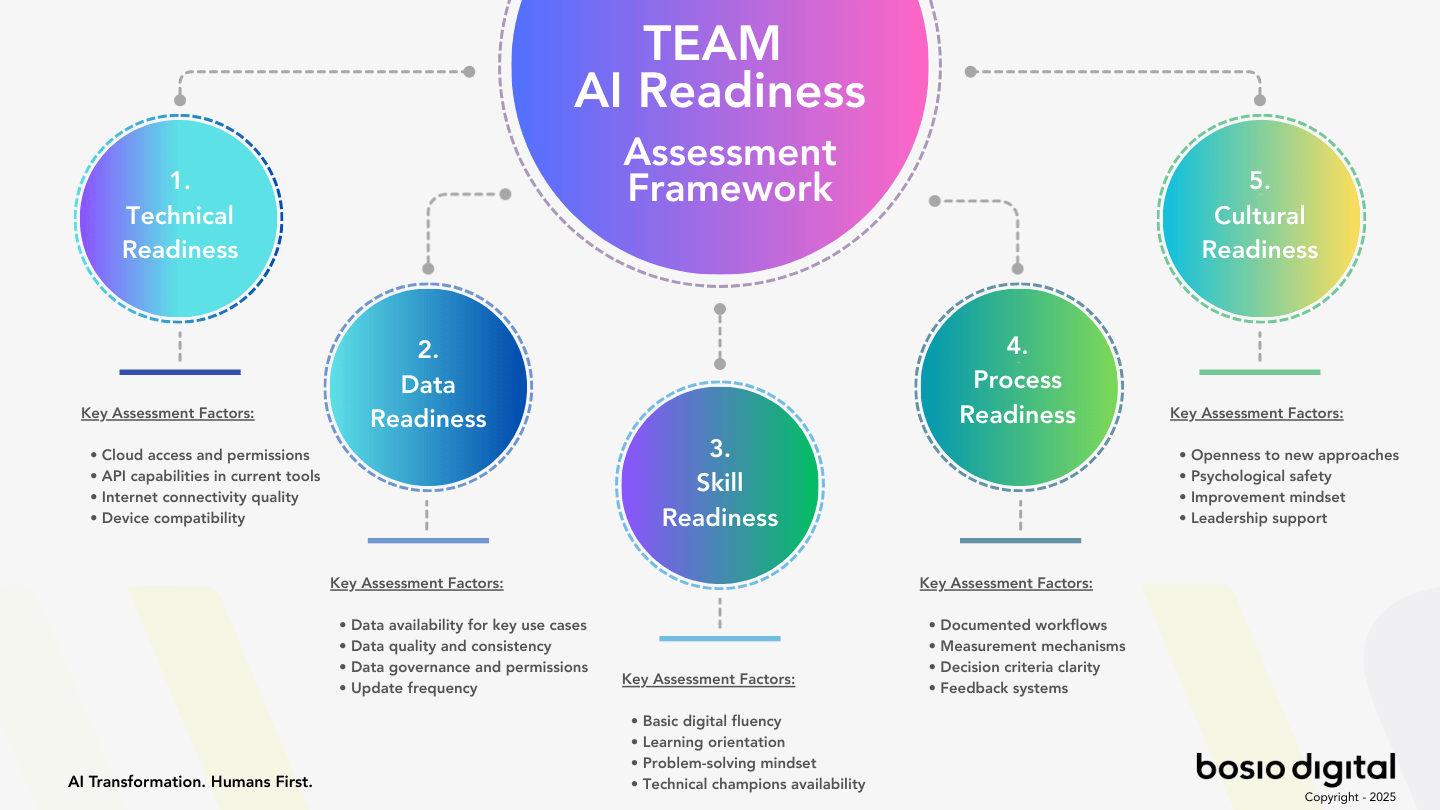

Is Your Business Actually Ready for AI?

Most businesses skip this question — and that's why AI projects stall. The TEAM Assessment scores your readiness across five dimensions and gives you a clear, personalized action plan. No fluff.

The Permission Structure Hiding Inside the Guide

The most strategically significant part of Google's guide is not its recommendations. It is its prohibitions.

For eighteen months, a category of vendor services has been growing around the premise that AI search requires new optimization work: specialized llms.txt implementations, content chunking pipelines designed for AI parsing, rewrites targeting AI summaries rather than human readers, and various forms of "AI SEO" that operate on the assumption that ranking for AI is fundamentally different from ranking for humans. Some of this work has been sold to mid-market companies at meaningful budget levels.

Google's guide is an answer key. Four categories of AI-optimization spend it effectively cancels:

- llms.txt implementation projects. Google explicitly states these files are not required and do not affect AI Overview or AI Mode inclusion.

- AI-specific content rewrites. The guide is unambiguous: the same practices that produce quality for human readers produce quality for AI. Separate AI rewrites serve no purpose in Google's system.

- Content chunking pipelines for AI parsing. The guide treats these as unnecessary rather than beneficial. What it recommends instead is clear information architecture (internal linking and structured data), which is standard practice, not a new capability.

- Prompt injection or crawler-targeted optimization. Google does not endorse any strategy that creates content specifically for AI crawlers rather than human readers. The E-E-A-T standard exists precisely to prevent this.

For mid-market leaders, the practical implication is direct: if you have been pitched any of these services in the last two years, you now have Google's formal position that they were not necessary. The question worth asking your current vendors is what, exactly, the budget has been buying.

The consolidation itself matters as a signal. Google formalizing this position ends two years of speculation about whether there would ever be a separate AI-SEO discipline. There will not be. That clarifies the field and, critically, it redirects resources. Budget freed from phantom tactics is budget available for architecture.

The Architecture Read: Why Good Pages Are Now Invisible to AI

The gap that Google's guide does not explain — and that mid-market leaders most need to understand — is the citation paradox. Good pages are ranking. The same pages are not being cited in AI Mode or AI Overviews at anything close to their previous rate.

The ranking-to-citation overlap measured by Ahrefs (38%) and BrightEdge (17%), aggregated by ALM Corp in 2026, is not noise. It is a structural change in how AI systems select what to ground their responses in.

The conventional explanation from SEO commentators is that AI systems are simply citing less overall, or are drawing on broader training data rather than live search. Both are partly true. But they miss the mechanism. What AI assistants do when they ground a response is not the same as what Google's ranking algorithm does when it surfaces a page. Ranking rewards relevance to a query at a moment in time. Citation rewards structural authority — the cumulative signal that a source knows what it is talking about, has a coherent body of work, and has organized its knowledge in a way that makes it trustworthy to reproduce.

Pages get found. Architectures get cited.

This distinction has direct operating implications. A company that publishes five well-written articles on AI adoption but has no internal linking structure connecting them, no published methodology that grounds the claims, and no clear signal of who is responsible for the knowledge it shares — that company has content. It does not have an architecture. AI systems will find the pages. They will not reliably cite them.

Now consider a company that has published a coherent body of work: articles that link to each other purposefully, a framework that is named and referenced consistently across the site, and expertise signals such as author credentials and company history that give the content context. That company has something much closer to what AI systems treat as a citable source. The work is not about any single page being perfectly optimized. It is about whether the whole body of content signals an authoritative knowledge structure.
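One way to make the difference between content and architecture inspectable is to check a site's own pages for two of the signals named here: whether related articles actually link to each other, and whether the named framework is referenced consistently. The Python sketch below assumes you have already exported each page's internal links and body text (for example from a crawler or CMS export); the data shape, the function name, and the example framework name "Readiness Ladder" are hypothetical. It detects structural gaps; it does not measure whether any AI system will cite the pages.

```python
from collections import defaultdict


def architecture_gaps(pages: dict[str, dict], framework: str) -> dict[str, list[str]]:
    """Flag two structural gaps across a site's own pages.

    `pages` maps each internal path to {"links": [internal paths it links to],
    "text": page body text}. Returns pages that no other page links to
    ("orphans") and pages whose text never names the framework.
    """
    inbound: dict[str, int] = defaultdict(int)
    for source, page in pages.items():
        for target in set(page["links"]):
            # Count each linking page once; ignore self-links and unknown paths.
            if target != source and target in pages:
                inbound[target] += 1
    orphans = sorted(p for p in pages if inbound[p] == 0)
    missing = sorted(p for p, page in pages.items()
                     if framework.lower() not in page["text"].lower())
    return {"orphans": orphans, "missing_framework": missing}


if __name__ == "__main__":
    # Hypothetical three-page site and framework name.
    pages = {
        "/ai-adoption": {"links": ["/ai-readiness"], "text": "How the Readiness Ladder works."},
        "/ai-readiness": {"links": ["/ai-adoption"], "text": "Step two of the Readiness Ladder."},
        "/ai-budget": {"links": ["/ai-adoption"], "text": "Where to spend in 2026."},
    }
    print(architecture_gaps(pages, "Readiness Ladder"))
    # prints {'orphans': ['/ai-budget'], 'missing_framework': ['/ai-budget']}
```

A simple substring match is deliberately crude; it is enough to surface pages that never mention the framework, which is the gap worth fixing first.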

We have been building this kind of architecture for bosio.digital and for the clients we work with. The approach is consistent with what Google's guide recommends — E-E-A-T, structured data, clear internal linking — but the goal is explicitly architectural rather than tactical. The shift from rankings to citations is not a content quality problem. It is an organizational knowledge structure problem.

Subscribe to our AI Briefing!

AI Insights That Drive Results

Join 500+ leaders getting actionable AI strategies twice a month. No hype, just what works.

What This Means for the Mid-Market AI Budget

The practical translation of Google's guide for mid-market leaders has two parts: what you can stop spending money on, and where that budget should go instead.

What stays the same is the foundational work. E-E-A-T is not bureaucratic box-checking — it is the signal that the people behind a website have genuine expertise, actual experience with the subjects they write about, and a track record that gives readers reason to trust what they say. Structured data via Schema.org markup helps both search engines and AI systems understand the content and context of a page. These are not optional. They are the floor that everything else stands on.
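For readers who have not seen structured data in practice: Schema.org markup is usually embedded in a page as a JSON-LD script block. The Python sketch below builds one for an article, with author and publisher details as machine-readable expertise signals. It assumes the standard Schema.org `Article`, `Person`, and `Organization` types; the names and values in the example are hypothetical, and which properties to include for your own pages should be checked against Google's structured data documentation. Markup like this helps systems understand a page; on its own it does not guarantee a citation.

```python
import json


def article_jsonld(headline: str, published: str, author_name: str,
                   author_title: str, org_name: str, org_url: str) -> str:
    """Return a Schema.org Article as a JSON-LD <script> block for a page's HTML."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": published,  # ISO 8601 date, e.g. "2026-05-15"
        "author": {
            "@type": "Person",
            "name": author_name,
            "jobTitle": author_title,  # makes the author's role machine-readable
        },
        "publisher": {
            "@type": "Organization",
            "name": org_name,
            "url": org_url,
        },
    }
    return ('<script type="application/ld+json">\n'
            + json.dumps(data, indent=2, ensure_ascii=False)
            + "\n</script>")


if __name__ == "__main__":
    # Hypothetical author and company; replace with your own details.
    print(article_jsonld(
        headline="What Google's AI optimization guide means for mid-market AI strategy",
        published="2026-05-15",
        author_name="Jane Example",
        author_title="Head of AI Strategy",
        org_name="Example Co",
        org_url="https://example.com",
    ))
```

Generating the block from one function, rather than hand-editing it per page, keeps author and publisher details consistent across the whole site, which is the point of treating them as architecture rather than page-level tweaks.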

What changes is the frame for why you do this work. For the last decade, content strategy has been primarily a ranking play — produce content that performs well in search and traffic follows. That logic still holds. But AI citation is not a ranking outcome. It is an architecture outcome. Publishing content is not the same as publishing a knowledge structure.

For mid-market businesses whose customer acquisition depends on organic discovery, the 93% zero-click rate in AI Mode, measured by Seer Interactive across 25.1 million impressions in 2026, presents a real challenge. AI Mode does produce higher-quality traffic, in the sense that users who click through have higher intent. But 93% of those AI Mode impressions end without a click at all. The implication for businesses that rely on organic clicks to drive acquisition is not subtle: if your business is not the source that AI cites when it generates a response, you have been removed from the consideration set before the user ever sees your name.

The budget reallocation that makes sense given this reality is not dramatic. Stop spending on llms.txt builds, AI content chunking, and AI-specific rewrites. Redirect that budget toward three things: published methodology (a documented, named framework your content consistently references), a deliberate internal linking structure that maps to how your knowledge actually fits together, and governance documentation that signals who is responsible for the expertise your site represents. None of this requires a new vendor. All of it requires an architectural decision about how your organization's knowledge is organized and published.

The architecture engagement framework we use with clients addresses exactly this sequence — the structural decisions that determine whether your AI investment compounds over time or stays flat. The content work and the AI architecture work are not separate. They are the same decision made at different layers of the organization.

The Osmani Contradiction and What It Tells You About Vendor Advice

On April 11, 2026 — thirty-four days before Google's consolidated guide — Addy Osmani, Director of Engineering at Google Cloud AI, published a piece advocating for what he called "agentic engine optimization." His recommendation included implementing llms.txt files and AGENTS.md files to improve discoverability for AI agents. He was describing a best practice from inside Google's own walls.

Google Search Central's guide, published May 15, 2026, says you do not need llms.txt files.

Two Google teams. Contradictory advice. Thirty-four days apart.

This is not a reason to distrust Google. It is a reason to distrust certainty. The honest interpretation is that Google — like every organization working at the frontier of AI deployment — does not yet have a unified position on what AI-content infrastructure should look like. The agentic AI surface and the search AI surface are being built by different teams, evolving on different timelines, and optimized for different use cases. Osmani's guidance is probably right for agentic browsing contexts. The Search Central guide is probably right for AI Overviews and AI Mode. They are not the same surface.

For mid-market leaders, the lesson is not about which Google to believe. The lesson is about the half-life of vendor recommendations in this environment. Any vendor — SEO agency, AI consultant, or specialist firm — whose recommendations rest on a reading of what one platform team said in one post is selling a position that may be obsolete before the contract is signed. The Osmani-versus-Search-Central gap is not an anomaly. It is a preview of the next eighteen months.

This is precisely where the Humans First principle we operate by at bosio.digital becomes a strategic asset rather than a philosophical position. When platform recommendations have a thirty-day half-life, architectural judgment cannot be rented. The leaders who will navigate this period well are the ones who build internal architectural capability — who understand enough about why AI systems work the way they do to evaluate vendor claims against first principles, not just against the most recent blog post. Outsourcing that judgment to vendors whose recommendations are contingent on platform stability is a risk that compounds every quarter.

The question to ask of any AI-adjacent recommendation — from vendors, from consultants, from inside your own organization — is not "did someone authoritative say this?" but "does this rest on an architectural principle or a platform quirk?" Architectural principles survive platform updates. Platform quirks become liabilities.

What to Do in the Next 90 Days

The practical checklist for mid-market leaders responding to this moment is short. Resist the urge to make it longer.

Audit your current AI citation footprint. Before changing anything, know where you stand. Search for your company name, key frameworks, and core service descriptions in AI Mode, ChatGPT, and Perplexity. Are your pages being cited? If not, is the gap in content quality, in content structure, or in the architecture that connects your content? The answer determines what work actually needs to happen.

Identify three architecture gaps. Using the findings from the citation audit, identify the three most concrete structural gaps: missing internal links between related articles, a framework that is referenced but never formally documented and published, author or company expertise signals that are mentioned internally but not visible on the site. Concrete gaps produce concrete fixes. Vague dissatisfaction produces vendor spend.

Stop spending on phantom tactics. If you are currently paying for llms.txt implementation, AI-specific content rewrites, or content chunking services, you now have Google's formal position that these are not required for AI search inclusion. Redirect that budget; the guide is unambiguous on this point.

Decide which of your published content is structured for citation versus structured for ranking. These are not always the same. Ranking-optimized content is designed to perform on a specific query. Citation-optimized content is designed to represent a coherent body of knowledge. The organizations seeing ROI from AI have figured out that publishing is an architectural act, not just a content production act. Make that decision explicitly for your next five pieces.

Make one architectural commitment and ship it in 90 days. A skills library that encodes your firm's domain knowledge. A knowledge layer that connects your team's expertise to your published content. A governance document that signals who is accountable for what your organization claims to know. Pick one and ship it. The organizations building on AI as a platform rather than using it as a tool are the ones making commitments like this — not launching new content campaigns, but investing in the structure that makes all future content worth citing.
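The first step on this list, the citation audit, is done by hand: you run the searches yourself in AI Mode, ChatGPT, and Perplexity. Logging every check in the same shape lets you rerun the audit at the end of the 90 days and compare like with like. A minimal Python sketch of that log and its per-surface tally follows; the field names are hypothetical, the sample rows are invented for illustration, and nothing here calls any of those products.

```python
from dataclasses import dataclass


@dataclass
class AuditRow:
    surface: str  # where you searched, e.g. "AI Mode", "ChatGPT", "Perplexity"
    prompt: str   # the question you entered
    cited: bool   # did the answer cite one of your pages?


def citation_rate_by_surface(rows: list[AuditRow]) -> dict[str, float]:
    """Share of audited prompts, per surface, whose answer cited your site."""
    totals: dict[str, int] = {}
    hits: dict[str, int] = {}
    for row in rows:
        totals[row.surface] = totals.get(row.surface, 0) + 1
        hits[row.surface] = hits.get(row.surface, 0) + int(row.cited)
    return {surface: hits[surface] / totals[surface] for surface in totals}


if __name__ == "__main__":
    # Invented sample rows for illustration only.
    log = [
        AuditRow("AI Mode", "what is <your framework name>", False),
        AuditRow("AI Mode", "<your core service> for mid-market firms", True),
        AuditRow("Perplexity", "what is <your framework name>", True),
    ]
    print(citation_rate_by_surface(log))  # prints {'AI Mode': 0.5, 'Perplexity': 1.0}
```

With only a few prompts per surface, these rates are a baseline for your own before-and-after comparison, not a benchmark against the industry figures cited earlier.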

The Architecture Read

Google's guide tells you what to do tactically. Ten years of best practice, consolidated into one document, endorsed again. The SEO community is not wrong that nothing in it is new.

What the guide does not say — and what mid-market leaders need to hear — is what is actually happening at the strategic level. The practices that produce ranking and the practices that produce AI citation have converged. But convergence does not mean equivalence. You can do everything Google recommends for ranking and still be invisible in AI Mode, because citation requires something that the ranking algorithm does not: evidence that your published knowledge hangs together as a coherent, trustworthy body of work.

Pages get found. Architectures get cited.

The Osmani-Search-Central contradiction — two Google teams, contradictory advice, thirty-four days apart — is not a reason to stop paying attention to what Google says. It is a reason to build the kind of internal judgment that can evaluate what any platform says against first principles. Leaders who outsource architectural judgment to vendors whose recommendations expire in a quarter are accumulating a liability, not building a capability.

Stop optimizing pages. Start architecting authority. The two activities look similar from the outside. They compound very differently over time.

Frequently Asked Questions

What did Google's AI optimization guide actually say?

Google's consolidated guide, published May 15, 2026, states that "there are no additional requirements to appear in AI Overviews or AI Mode." It recommends E-E-A-T, structured data via Schema.org, strong page experience, clear internal linking, and substantive non-thin content — the same practices Google has recommended for a decade. The guide explicitly says llms.txt files, AI-specific content rewrites, and content chunking pipelines are not required for AI search inclusion.

Do I need to implement llms.txt files for Google's AI search?

Google's May 15, 2026 guide directly addresses this: llms.txt files are not required for inclusion in AI Overviews or AI Mode. Addy Osmani of Google Cloud AI recommended llms.txt for agentic discovery contexts thirty-four days earlier, which reflects the distinction between search AI surfaces and agentic AI surfaces. For Google Search specifically, the answer is no.

Why are good pages not being cited in AI Mode even when they rank well?

Two 2026 studies — Ahrefs (38%) and BrightEdge (17%), aggregated by ALM Corp — show the overlap between top-10 ranked pages and AI Overview citations has fallen from approximately 75% in mid-2025 to the 17–38% range. The mechanism is architectural: ranking algorithms reward relevance to a specific query, while AI citation systems reward structural authority — the signal that a body of content represents coherent, trustworthy expertise, not just a well-optimized page. Pages get found. Architectures get cited.

What should mid-market leaders do differently after this guide?

Stop spending on AI-specific optimization tactics that Google has now formally said are unnecessary: llms.txt builds, AI content rewrites, and content chunking pipelines. Redirect that budget toward published methodology, deliberate internal linking structure, and expertise signals. Then audit your current citation footprint across AI Mode, ChatGPT, and Perplexity to identify whether the gap is in content quality, content structure, or knowledge architecture.

How does the Osmani-Search-Central contradiction affect vendor advice?

It reveals that even within Google, different teams are working from different positions on what AI-content infrastructure should look like. Any vendor recommendation that rests on a single platform team's blog post has a shelf life measured in weeks, not quarters. The useful filter is whether a recommendation rests on an architectural principle — which survives platform updates — or a platform quirk, which becomes a liability. Architectural judgment, in this environment, cannot be outsourced.

What is the zero-click problem in AI Mode and how should businesses respond?

Seer Interactive's 2026 analysis of 25.1 million AI Mode impressions found a 93% zero-click rate. Users receive a generated response and do not click through to source pages. For businesses whose acquisition depends on organic clicks, the implication is direct: if you are not the source being cited in the AI-generated response, you have been removed from the consideration set before the user sees your name. The response is not to optimize pages more aggressively — it is to build the architectural authority that makes you the cited source.

What is the difference between content for ranking and content for AI citation?

Ranking-optimized content is designed to match a specific query and outperform competitors on that query at a moment in time. Citation-optimized content is designed to represent a coherent body of knowledge that AI systems can reliably attribute and reproduce. The best content does both, but the architectural decisions that produce citable content — published methodology, consistent internal linking, expertise signals, named frameworks — are distinct from the decisions that produce ranking gains. Both matter. They require different investments.

Sources

- Google's Guide to Optimizing for Generative AI Features on Google Search — Google Search Central, May 15, 2026

- AI Features on Google Search — Overview — Google Search Central, May 2026

- A New Resource for Optimizing for AI on Google Search — Google Search Central Blog, May 15, 2026

- Google publishes guide on optimizing for generative AI features — Search Engine Land (Barry Schwartz), May 15, 2026

- Agentic Engine Optimization — Google AI Director Addy Osmani — Search Engine Land, April 11, 2026

- AI Visibility and Customer Acquisition Cost Statistics — Demand Local, 2026 (38% organic click drop measurement; 12% YoY CPC rise; $2.96 average CPC Q1 2026)

- Seer Interactive AI Mode analysis — Seer Interactive, 2026 (analysis of 25.1 million AI Mode impressions; 93% zero-click rate in AI Mode)

- Google AI Overview Citations Drop From 76% to 38%: The Data Every SEO Needs to Act On in 2026 — ALM Corp, 2026 (aggregating Ahrefs 38% + BrightEdge 17% findings)

- Creating Helpful, Reliable, People-First Content — Google Search Central (E-E-A-T framework)

- Schema.org Structured Data Reference — Schema.org

- From Rankings to Citations: The AI Search Economy Playbook — bosio.digital

- 80% of Companies Aren't Seeing AI ROI. Here's What the Other 20% Built. — bosio.digital

- Claude Is Becoming an Operating System. Are You Building on It or Just Using It? — bosio.digital

- Stop Building Agents. Build Skills. — bosio.digital
