Question

What does a mid-market AI consulting engagement actually produce, and what do you own when it's done?

Quick Answer

A bosio.digital architecture engagement runs in four phases — Discovery (2 weeks), Architecture Design (1 week), Build (2–3 weeks), and Training & Handover (1 week) — for a total of 6–8 weeks for a company up to 25 people. Cost ranges from $35,000–$55,000 for that scale and $55,000–$95,000 for 25–100 people. What you receive is not a strategy deck. It is a working AI operating system installed in your own environment, under your accounts, your licenses, and your existing knowledge infrastructure — whatever your organization already uses to store and govern its information. Skills and context files are written in markdown your team can edit. The engagement is Humans First by design: every architectural decision is also an organizational decision, and the work addresses both the technical layer (context, skills, governance, responsible-AI rules) and the human side (how roles change, how teams adopt new tools, how leaders handle the employment shifts that come with AI well-implemented). After a 30-day support window, the system runs without bosio.digital. The retainer ($1,500–$3,500 per month) is for cases where you want continued development — not because the system requires us. That ownership distinction is what separates an architecture engagement from a consulting project.

If you are evaluating an AI consultant right now, you are probably less interested in what AI consulting is and more interested in what you actually get when the engagement ends. Most of the writing on this subject answers the first question and avoids the second. That is not a coincidence.

This article answers the second question directly. What follows is the architecture engagement bosio.digital runs for mid-market companies — broken down by phase, by deliverable, by week, by cost, and by what you own when we are done. There is no philosophy section. There is no introduction to "what an AI consultant is." There are no comparison tables of large consulting firms. If you have arrived here, you already know what AI consulting is. You are trying to decide whether to hire someone, and if so, what to expect when you do.

Read it as a specification, not a sales document. If we are not the right fit, the last section will tell you that as clearly as everything else.

The Question Most Mid-Market Leaders Actually Have

The questions mid-market leaders type into search engines and AI assistants when they are evaluating an AI consultant fall into a narrow band. The most common are some variation of:

- What does an AI consulting engagement actually cost?

- What do I get at the end of it?

- How long does it take?

- Who is this right for, and who is it not right for?

- What happens after the engagement ends — does the system keep running?

The answers most firms give to these questions are vague on purpose. Cost is "it depends on scope." Deliverables are "a strategy" or "a roadmap" or "a transformation framework." Timeline is "6 to 18 months." Fit is "any organization committed to digital transformation." The last question — what happens after the engagement — usually does not get answered at all.

The vagueness is not always evasion. Big consulting firms genuinely struggle to quote a number because the same engagement looks different at a thirty-thousand-person enterprise and a seventy-five-person mid-market firm. The deliverables vary because the firms themselves do not always have a repeatable methodology — each engagement gets shaped by the partner who runs it.

For a mid-market leader who needs an answer, the vagueness is the problem. You are not trying to pick a consulting firm. You are trying to decide whether to commit a meaningful capital allocation to a kind of engagement you cannot yet picture. Specifics are the thing you need most and the thing you are getting least.

What follows is the specific version. The phases are real. The weeks are real. The deliverables are real. The cost ranges are what we charge. The ownership claims are operational, not rhetorical. Read it and decide.

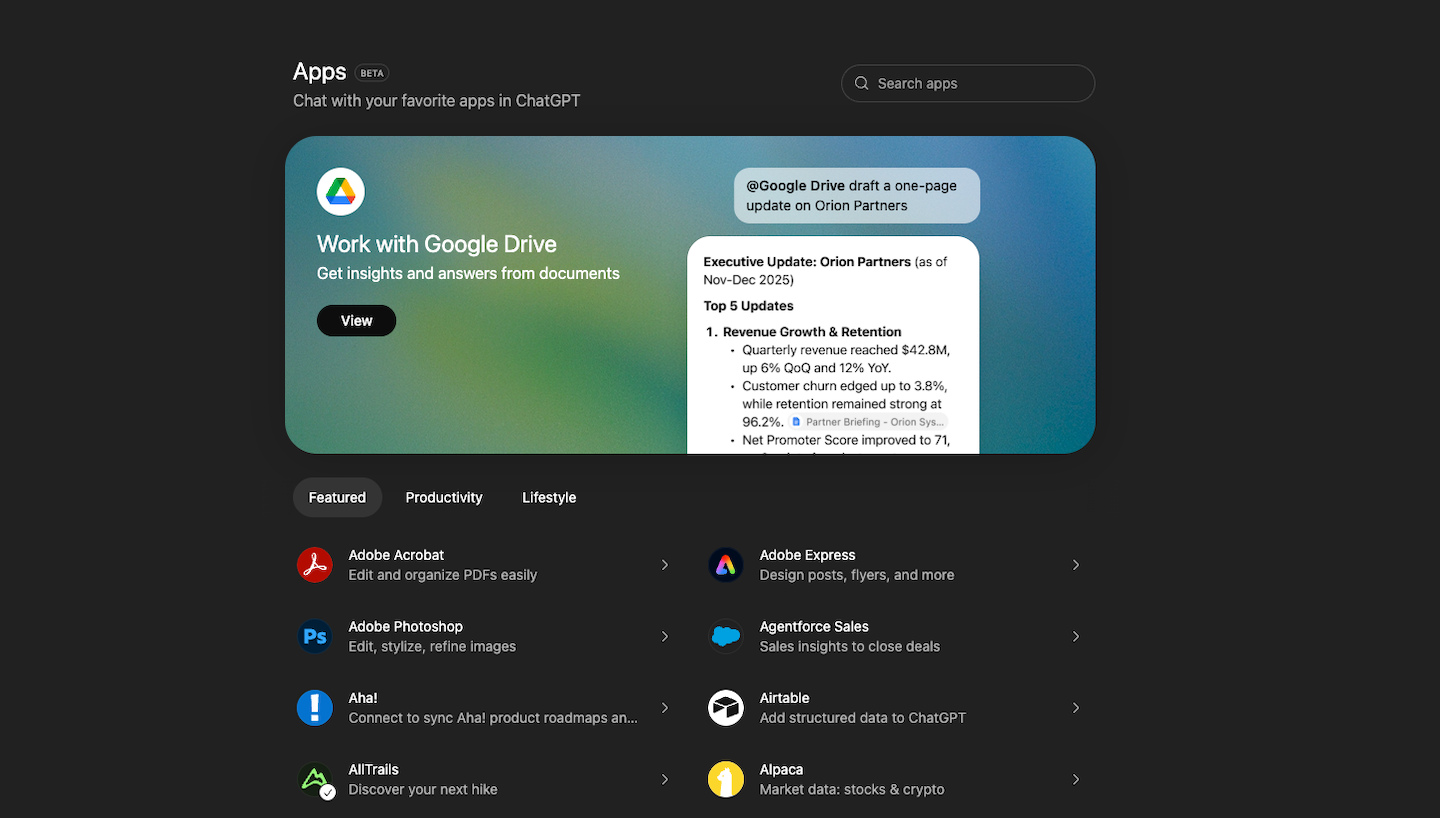

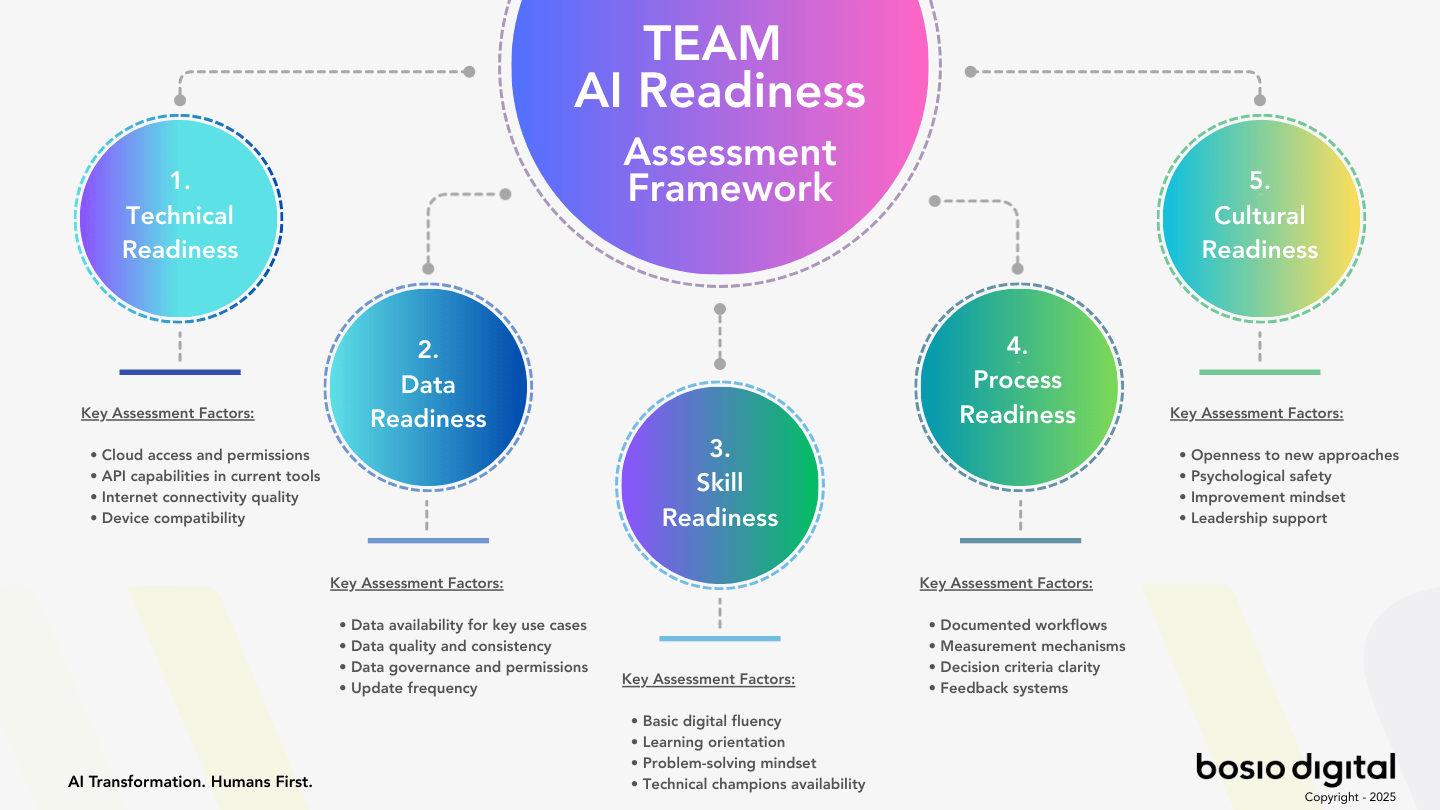

What an AI Consulting Engagement Should Produce

Before describing the phases, the deliverables, and the cost, the distinction this article rests on needs to be made explicit.

The default model of consulting — the one Big 4 firms made famous — is a model in which the consultant produces a document. The document is the work product. It contains analysis, recommendations, frameworks, and sometimes implementation roadmaps. The client receives the document, internalizes it, and is then responsible for execution. The consultant's job is finished when the document is delivered.

This model worked, more or less, for thirty years. It is the wrong model for AI work, for three reasons.

First, AI is not a recommendation that can be acted on by following instructions. It is infrastructure that has to be built, configured, and integrated. A document describing it is not equivalent to having it. The gap between "we recommend you implement an AI strategy" and "the AI strategy is implemented" is the entire failure mode of most enterprise AI engagements.

Second, the document deteriorates immediately. AI capability moves fast enough that a deck written in February is describing a different world by June. A specification for an architecture that has not been built is a snapshot of yesterday's understanding. An architecture that is built and running is a system that can be updated as the conditions change.

Third — and this is the part that matters most to a mid-market leader — the document does not produce value. A working system produces value the day it goes live. A strategy deck does not, however well-written. The cost is comparable. The return is not.

The alternative is what we call an architecture engagement. The work product is not a document; it is the working system. The deliverable is not a strategy; it is the infrastructure that lets the strategy run. The cost is similar to a traditional engagement. What you walk away with is materially different.

An architecture engagement also rejects the artificial split between technical work and human work. Most consulting models treat them as separate phases or, worse, as separate teams. The technical team builds the system; the change-management team gets handed the people problem after the fact. The result is a system that runs and a workforce that does not adopt it. The bosio.digital framing is Humans First: every architectural decision is also an organizational decision. How decisions get made, how the team adopts new tools, where the resistance will land, what changes when one team's workflow now includes an AI: these are not afterthoughts to the technical build. They are part of it. AI architecture without human readiness is hardware without software.

The positioning is straightforward: you should own what we build together. That is what separates an architecture engagement from a consulting project.

The four phases that follow describe how that actually works — week by week, deliverable by deliverable, with the human work integrated into each phase rather than appended at the end.

Phase 1 — Discovery (Weeks 1–2)

Discovery is the part of the engagement that, in most consulting projects, gets compressed into a kickoff workshop and a list of questions. We treat it as a two-week phase of its own because the architecture that comes out of Phases 2 and 3 is only as good as the understanding established in Phase 1.

What happens during Discovery:

- Structured stakeholder interviews with the leadership team and the operators closest to the workflows AI will touch.

- A current-state audit of how decisions are made today: what is documented, what lives in people's heads, what the team relies on without saying it out loud.

- An MCP (Model Context Protocol) assessment: which systems your AI will need to read and write to, what authentication exists, and what does not.

- A data readiness evaluation: not whether your data is "clean enough" (a question that is usually unanswerable) but whether the data your AI will need is reachable in a form it can use.

The stakeholder interviews are also where the human dynamics get mapped. Who actually makes which decisions. Where change typically lands well in your organization and where it lands hard. What roles will shift, what roles will compress, what new work will be created. This is not change-management theater — it is the operational understanding the architecture has to encode in Phase 2 so that the system the team adopts in Phase 3 is one the team can actually adopt.

What you provide during Discovery: access to people, access to existing process documentation (whatever exists), and three to five hours per stakeholder for interviews. We design the schedule around your team's availability rather than the other way around.

What you receive at the end of Phase 1: an Architecture Blueprint. The Blueprint is a written document — not a deck — that names the specific architecture you should be building. It includes the context layer your AI needs (what your business actually knows that should be encoded), the skills library that will encode your domain expertise, the governance rules that will define what the AI does autonomously versus what requires human approval, and the set of connectors that will plug your AI into your existing systems. The Blueprint is not the system. It is the specification of the system that will be built in Phases 2 and 3.

For an organization that decides not to continue past Discovery — which happens, and is fine — the Blueprint is a deliverable that has standalone value. You can take it to another implementation partner. You can use it as the basis for an internal build if you have the team for that. You can use it to evaluate other proposals against. The Blueprint is yours.

For organizations that do continue, Phase 1's Blueprint becomes the input to Phase 2.

Phase 2 — Architecture Design (Week 3)

Phase 2 is a single week, and it is the most concentrated week of the engagement. The architecture decisions that determine what the next two phases produce all get made here.

What gets decided in Phase 2: the folder structure of your knowledge base (in whatever file infrastructure your organization already uses — Drive, OneDrive, or your equivalent), the inventory of context files your AI will read each session, the permission matrix governing which team members can configure which parts of the system, the session ownership model that defines how multiple users share an AI environment without stepping on each other, and the responsible-AI rules that determine what the AI does autonomously, what requires human approval, and what gets escalated.

The reason this phase is so concentrated is that the decisions are interdependent. The permission model influences the folder structure. The folder structure influences the context inventory. The session ownership model depends on how the team is organized to use the system. Trying to make these decisions separately produces an architecture that does not hold together. Making them in a single intensive week produces a coherent specification.

This is also the phase where the four architectural decisions we cover in depth in our piece on why 80% of AI investments aren't paying off, and what the 20% built, become concrete for your specific organization. Context, skills, governance, and learning loops are not abstract principles by the end of this week. They are specific files in specific folders with specific access patterns.

What you receive at the end of Phase 2: a Full Specification. The Specification is the architectural blueprint translated into a buildable plan — which files get written, where they live, who can edit them, what the permission boundaries are, and what the governance defaults will be. You sign off on the Specification before Build begins. After sign-off, what you receive in Phase 3 is what the Specification described.
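To make "who can edit them" and "permission boundaries" less abstract, here is a minimal Python sketch of how a permission matrix could be written down as an explicit, checkable table. The role names, area names, and the `can_edit` helper are all hypothetical illustrations, not the actual format of a bosio.digital Specification.

```python
# Hypothetical permission matrix: which role may edit which part of the
# knowledge base. Role and area names are illustrative assumptions only.
PERMISSIONS: dict[str, set[str]] = {
    "admin": {"context", "skills", "governance"},
    "team_lead": {"context", "skills"},
    "member": {"context"},
}


def can_edit(role: str, area: str) -> bool:
    """Return True if the role may edit the area.

    Roles that are not listed get no edit rights (deny by default).
    """
    return area in PERMISSIONS.get(role, set())


if __name__ == "__main__":
    for role in ("admin", "team_lead", "member", "contractor"):
        print(f"{role} can edit governance: {can_edit(role, 'governance')}")
```

The deny-by-default lookup is a design choice in this sketch: anyone not named in the matrix cannot change the system until someone decides they should.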

Phase 3 — Build (Weeks 4–6)

Phase 3 is the longest phase and the one where the working system comes into existence. The Specification produced in Phase 2 is now executed.

The first part of the build is the knowledge layer. The folder structure designed in Phase 2 gets created in your file infrastructure — your Drive, your OneDrive, or whatever your team already uses. The context files described in the Blueprint get written with your organization's actual knowledge — not lorem ipsum, not templates. Your brand voice, your customer segments, your service descriptions, your pricing logic, your decision history. The writing of these files is a collaborative process: we draft, you correct, we iterate. By the end of week 4, your AI has a knowledge base that reflects your business specifically, not a generic version of your industry.

The second part of the build is the skills library. The reusable units of organizational expertise — proposal writing, compliance review, financial categorization, client communication, whatever your highest-leverage workflows are — get encoded as skills. The architecture argument for why skills outperform specialized agents is the design principle this build follows. Each skill is a folder with a markdown SKILL.md file and reference materials. They are yours to read, edit, and extend at any point during or after the engagement.
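As a rough picture of what "a folder with a markdown SKILL.md file and reference materials" can look like on disk, the sketch below scaffolds one skill folder and archives another. The `references/` subfolder, the `_archive/` convention, the frontmatter fields, and both function names are assumptions made for illustration; your own library may be laid out differently.

```python
from pathlib import Path
import tempfile


def scaffold_skill(library: Path, name: str, description: str) -> Path:
    """Create <library>/<name>/ with a SKILL.md and an empty references/ folder."""
    skill_dir = library / name
    (skill_dir / "references").mkdir(parents=True, exist_ok=True)
    skill_md = skill_dir / "SKILL.md"
    # The frontmatter fields below are an assumed, illustrative layout.
    skill_md.write_text(
        f"---\nname: {name}\ndescription: {description}\n---\n\n"
        "# Instructions\n\nDescribe the steps for this workflow here.\n",
        encoding="utf-8",
    )
    return skill_md


def retire_skill(library: Path, name: str) -> Path:
    """Archive a skill by moving its folder under <library>/_archive/."""
    archive = library / "_archive"
    archive.mkdir(exist_ok=True)
    target = archive / name
    (library / name).rename(target)
    return target


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        library = Path(tmp) / "skills"
        library.mkdir()
        path = scaffold_skill(
            library, "proposal-writing", "Drafts client proposals in our voice."
        )
        print(path.read_text(encoding="utf-8"))
```

Because a skill is only files and folders, editing one needs nothing more than a text editor and access to the storage it lives in.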

The third part of the build is the connection layer. The MCP connectors that link your AI to your existing systems — HubSpot, Gmail, Calendar, QuickBooks, whatever your stack includes — get configured under your accounts. Authentication runs through your credentials. The connectors are not pooled through a bosio.digital environment. They are direct integrations into your business systems, set up to work as your team uses them.

The fourth part of the build is the execution layer. Claude Cowork gets configured on the relevant team members' machines. Routines and Scheduled Tasks get set up for the workflows that should run autonomously. The governance rules from the Specification get translated into actual permission boundaries — what the AI does on its own, what requires approval, what gets escalated.
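One way to picture governance rules becoming permission boundaries is a small routing table that maps each action to one of the three outcomes named above. This is a sketch under stated assumptions: the action names, the `Decision` enum, and the `route` function are hypothetical, and it does not represent how Claude Cowork itself is configured.

```python
from enum import Enum


class Decision(Enum):
    AUTONOMOUS = "autonomous"          # the AI acts on its own
    NEEDS_APPROVAL = "needs_approval"  # a human signs off first
    ESCALATE = "escalate"              # handed to a named owner


# Hypothetical rule table; the action names are illustrative only.
RULES: dict[str, Decision] = {
    "summarize_meeting_notes": Decision.AUTONOMOUS,
    "draft_client_email": Decision.NEEDS_APPROVAL,
    "change_invoice_amount": Decision.ESCALATE,
}


def route(action: str) -> Decision:
    """Look up how an action is handled; unlisted actions need approval."""
    return RULES.get(action, Decision.NEEDS_APPROVAL)


if __name__ == "__main__":
    for action in ("summarize_meeting_notes", "change_invoice_amount", "delete_records"):
        print(f"{action}: {route(action).value}")
```

Defaulting unlisted actions to human approval keeps the sketch conservative: anything nobody thought about in advance waits for a person.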

Throughout the three weeks, we test as we build. Every workflow gets exercised before it goes into production use. The team starts using the system in week 5, in parallel with continued building. This is also when the human side of the architecture starts producing visible signal — which workflows the team adopts naturally, which ones produce hesitation, where the AI is doing useful work and where someone is quietly working around it. We coach individual users through the friction as it surfaces, because adoption is not a Phase 4 problem; it is built or broken during Phase 3. By the end of Phase 3, you have a working AI operating system installed in your business, running on your accounts, with documentation in your knowledge base, deployed for your team and being used by them.

What you receive at the end of Phase 3: a working AI operating system. Not a specification of one. Not a prototype. A production system, running daily, that your team is already using.

Phase 4 — Handover (Weeks 7–8)

Phase 4 is the part that distinguishes an architecture engagement from a consulting project. The system is built. The question is what happens next.

What happens in Phase 4: role-based training for the team. The people who will use the system day-to-day get walked through the workflows that affect them. An admin deep-dive for whoever owns the AI infrastructure in your organization — how to add a new skill, how to update a context file, how to change a permission, how to roll back a configuration change that did not produce the expected result. The runbook gets written together: a living document, in your knowledge base, that captures the operational knowledge needed to maintain the system.

The training is also where the human transformation work becomes explicit. The bosio.digital framing has been Humans First throughout — every architectural decision is also an organizational decision — and Phase 4 is where that lands in practice. Roles change. Some of the work that used to fill a job description gets done by the system; what remains is judgment, relationship, and the kind of work that benefits from a human in the loop rather than at the keyboard. We discuss this directly with the team. Not as a euphemism. The honest version is: AI well-implemented changes employment, and the leaders who handle that with intention end up with a stronger organization than the ones who pretend nothing changed. The training prepares the team for the system; the conversation prepares the team for the change.

At the end of Phase 4, the 30-day post-handover support period begins. During those 30 days, we are available for issues, edge cases, and the unexpected situations that always emerge in the first weeks of any new system running in production. The support is included in the engagement fee.

What you own at this point is specific:

- Your files live in your own knowledge infrastructure — Google Drive, OneDrive, or whatever your organization already uses — under your account. Not in a bosio.digital-controlled environment. If your IT team wants to migrate, audit, or back up the files, they can.

- Your Claude license is yours. The team uses Claude Cowork through your organization's subscription. If you ever decide to switch AI providers — though we will tell you straightforwardly when that is and is not a good idea — the work product transfers because it lives in your storage.

- The MCP connectors run under your accounts. HubSpot, Gmail, Calendar, QuickBooks — every connector is authenticated through your credentials and configurable from your admin panels.

- Skills and context are markdown files your team can edit. If you want to refine a skill, you open the file and change it. If you want to add a new skill, you create a folder with a markdown file and reference materials. If you want to retire one, you archive the folder. No bosio.digital interface stands between you and your knowledge.

- The governance rules are documented in your knowledge base. What the AI does autonomously, what requires approval, what gets escalated — all written down, in your hands, changeable by you.

After the 30-day support period, the system runs without bosio.digital. The retainer is for the cases where you want continued development — not because the system requires us.

That sentence is the ownership claim, and we want to be precise about what it means. The retainer is a legitimate offering: ongoing strategic development, new workflow builds, executive advisory, system health monitoring as the business evolves. Many clients choose it because they value the continued partnership. The point is that they are choosing it. The system does not stop working if they do not. That distinction is what makes it an architecture engagement rather than a managed service.

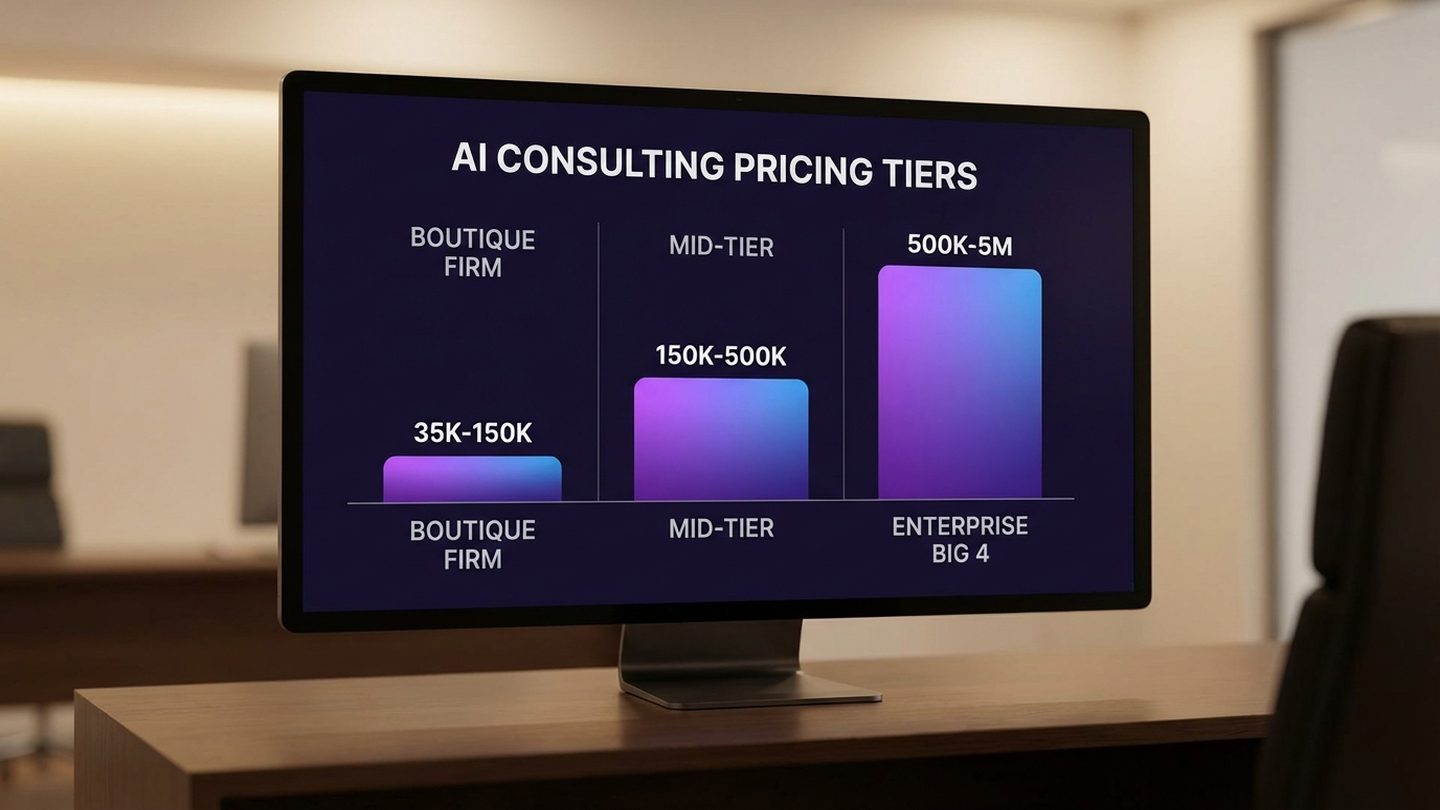

What It Costs at Mid-Market Scale

The pricing for a bosio.digital architecture engagement at mid-market scale is the following.

Implementation, up to 25 people: $35,000–$55,000. The variance depends on the complexity of your knowledge base, the number of MCP integrations required, and whether your team is starting from a clean slate or migrating from existing AI tools that have to be evaluated and selectively retained.

Implementation, 25–100 people: $55,000–$95,000. The variance scales with team complexity rather than headcount alone — a 75-person company with three distinct workflows is a simpler build than a 40-person company with seven.

Implementation, 100+ people: Custom. At that scale, the engagement becomes shaped by your specific organizational structure, the regulatory environment you operate in, and the degree of existing AI infrastructure that needs to be assessed before it is replaced or retained.

Maintenance retainer (optional): $1,500–$2,500/month. Covers system health monitoring, context updates as the business evolves, connector monitoring, and minor additions. For organizations that want bosio.digital's continued presence but do not have ongoing strategic development needs.

Extended governance retainer (optional): $2,000–$3,500/month. Everything in the maintenance retainer plus ongoing strategic development, new workflow builds as the organization identifies them, and executive advisory. For organizations that view the partnership as continuing rather than transactional.

To put those numbers in operating terms: a twenty-person company recovering five hours per person per week (the conservative end of what we see from the architecture once it is running) at a blended $80/hour fully loaded cost recovers $8,000 per week. Against the $35,000–$55,000 implementation fee for a company of that size, the engagement pays back in roughly four and a half to seven weeks. The math is in your favor because the system is durable. The recovered hours compound across years, not weeks.
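The payback arithmetic is simple enough to rerun with your own figures. The short Python sketch below uses the numbers from this section (20 people, 5 hours per person per week, $80 per hour, and the $35,000–$55,000 fee range); the function names are illustrative, and the model ignores ramp-up time and third-party software costs.

```python
def weekly_recovered_value(people: int, hours_per_person: float, hourly_cost: float) -> float:
    """Dollar value of the hours recovered across the team in one week."""
    return people * hours_per_person * hourly_cost


def payback_weeks(fee: float, weekly_value: float) -> float:
    """Weeks of recovered value needed to cover the implementation fee."""
    return fee / weekly_value


weekly = weekly_recovered_value(people=20, hours_per_person=5, hourly_cost=80)
print(f"Recovered per week: ${weekly:,.0f}")  # Recovered per week: $8,000

for fee in (35_000, 55_000):
    print(f"${fee:,} fee pays back in {payback_weeks(fee, weekly):.1f} weeks")
# $35,000 fee pays back in 4.4 weeks
# $55,000 fee pays back in 6.9 weeks
```

Changing any one input (fewer recovered hours, a lower blended rate) moves the payback proportionally, which makes it easy to stress-test the claim against your own numbers.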

What is not included in the engagement fee, and is sometimes mistaken for included: third-party software subscriptions you need anyway (Claude, your existing CRM, your file storage) and any specialty tools added during Phase 3 that your business will continue to use. These are configured through your accounts and billed by the vendors directly. The reason for separating them is the ownership principle: software that runs in your name is yours. If we bundled it into the engagement fee, the line between "our service" and "your tooling" would blur in exactly the way that produces lock-in over time.

Who This Is Right For (And Who It Isn't)

The architecture engagement is built for a specific kind of organization at a specific stage. Honest disqualification protects everyone's time, including yours.

Right fit — three criteria, all required

1. Strategic urgency. Either a recent diagnostic exercise has revealed pressure on the business model that demands a serious response, or leadership has already accepted that AI is infrastructure for the next phase of the business rather than a tool to be evaluated. Without urgency, the engagement gets deferred at every decision point and the architecture never gets built.

2. Operational complexity. Knowledge that lives in people's heads. Decisions that get made the same way every week but are not documented. Workflows that could be systematized but have not been. If there is nothing to systematize, the architecture engagement has nothing to build. The richer the operational complexity, the more value the engagement produces.

3. Leadership buy-in. Someone at the top of the organization sees this as an infrastructure investment, not a software purchase. They will participate in Phase 1, sign off on the Specification in Phase 2, and own the operating model that emerges in Phase 4. Without this, the project stalls at Phase 3 even if the budget is approved — because someone has to make the decisions that the architecture documents will encode.

Not a fit — three signals to watch for

You want to "try AI first" with no commitment to process change. The architecture engagement requires changing how decisions get made, how knowledge is documented, and how the team works with AI. Organizations that want AI to slot into existing workflows without disrupting them get the wrong outcomes. This is not a criticism. It is a fit signal.

You expect ROI without operational adjustment. The recovered hours come from the team adopting the new system into how they work. If the system is built but the team continues to work the way they did before, the system produces activity rather than returns. We will tell you this in Discovery if we sense it; we will tell you again in Phase 4 if it has not been addressed.

Leadership has not accepted urgency yet. Some organizations are not ready to commit. They will be in twelve months, when the business model pressure has become unavoidable. They are not a client today. This is also not a criticism. The architecture engagement requires the work that follows it; the organization has to be ready to do that work.

Start Building

Audit Whether You're Ready for an Architecture Engagement

Paste this prompt into Claude. The diagnostic surfaces whether your organization is positioned to get the full value from an architecture engagement — and where to invest before you commission one.

I want to assess whether my organization is ready to commission a mid-market AI architecture engagement. Ask me these questions one at a time:

1. What is the specific business pressure that is making AI feel urgent right now, or is it still an exploratory conversation?

2. Name three workflows in our organization where the same decision gets made over and over but is not documented anywhere a new hire could read.

3. Who at the top of the organization will personally own the operating-model change that comes out of the engagement? (Not approve. Own.)

4. What are we likely to have to stop doing in order to start doing AI well? (If the answer is "nothing," that is a flag.)

5. If the engagement ended in twelve weeks and the system was running, what specifically would have to be different in the business for us to call it a success?

After I answer all 5, give me:

- Honest assessment: am I in the right-fit category, or one of the three not-a-fit signals?

- The single biggest readiness gap I should close before commissioning an engagement

- A 30-day plan to close that gap (or to confirm I am ready)

The diagnostic shows the gap. Closing it is a different project.

The Architectural Choice

An architecture engagement is not a different kind of consulting. It is a different category of work. The output is a running system, not a recommendation. The deliverable is operational infrastructure, not a strategic document. The retainer is for continued development, not for keeping the lights on.

What separates an architecture engagement from a consulting project is the answer to one question, asked twelve weeks after kickoff: does the system run without us? If the answer is yes, you have an architecture engagement. If the answer is no, you have something else — and you are paying for it.

You should own what we build together. Everything described above is the operational version of that sentence.

Frequently Asked Questions

How long does a bosio.digital architecture engagement take from kickoff to handover?

Six to eight weeks for a company up to 25 people. Phase 1 (Discovery) is two weeks. Phase 2 (Architecture Design) is one week. Phase 3 (Build) is two to three weeks. Phase 4 (Training and Handover) is one week, followed by a 30-day post-handover support period. Larger organizations take longer because the architecture has more variations to encode, not because the methodology changes.

What does a mid-market AI architecture engagement cost?

For organizations up to 25 people, $35,000–$55,000. For 25–100 people, $55,000–$95,000. For 100+ people, custom — the variance comes from organizational structure and regulatory environment more than headcount. Maintenance retainer (optional, post-engagement): $1,500–$2,500 per month. Extended governance retainer (optional): $2,000–$3,500 per month. Third-party software your business will use (Claude license, your existing CRM, file storage) is billed separately by the vendors so the line between our engagement and your tooling stays clear.

What deliverables do I receive at each phase?

Phase 1 produces the Architecture Blueprint — a written specification of the architecture your organization should build. Phase 2 produces the Full Specification — the Blueprint translated into a buildable plan with folder structures, permission boundaries, and governance defaults. Phase 3 produces the working AI operating system installed in your accounts. Phase 4 produces a team trained to run it and a runbook documenting the operational knowledge needed to maintain it. Each phase deliverable has standalone value if you decide not to proceed to the next phase.

What do I own when the engagement ends?

The files live in your own knowledge infrastructure — Drive, OneDrive, or whatever your organization already uses — under your account. Your Claude license is yours. The MCP connectors run under your accounts and authentications. Skills and context files are markdown your team can read, edit, and extend. The governance rules are documented in your knowledge base. The system runs without bosio.digital after the 30-day support period. The retainer is for cases where you want continued development — not because the system requires us. That ownership distinction is the difference between an architecture engagement and a consulting project.

What is the difference between a maintenance retainer and an extended governance retainer?

The maintenance retainer ($1,500–$2,500 per month) covers system health monitoring, context updates as the business evolves, connector monitoring, and minor additions. For organizations that want continued bosio.digital presence without active strategic development. The extended governance retainer ($2,000–$3,500 per month) covers everything in maintenance plus ongoing strategic development, new workflow builds as the organization identifies them, and executive advisory. For organizations that view the partnership as continuing rather than transactional. Both are optional. The system runs without either.

Who is this engagement right for, and who is it not right for?

Right fit: organizations with strategic urgency around AI, operational complexity worth systematizing, and leadership willing to own the change. Not a fit: organizations that want to "try AI" without changing process, leaders expecting ROI without operational adjustment, and organizations where senior leadership has not yet accepted AI as infrastructure rather than tooling. The not-a-fit cases are not criticisms — they are timing signals. Some of those organizations will be ready in twelve months.

What happens if I decide to stop after Phase 1 instead of completing the full engagement?

You keep the Architecture Blueprint — the written specification of what your organization should build. It has standalone value: you can use it to evaluate other implementation proposals, to brief an internal build, or to make the case for a future engagement when timing is right. Discovery is the phase organizations sometimes choose to complete and then pause. The pricing reflects the standalone value of the Blueprint; if you decide to continue later, Phase 1 work does not get repeated.

How is responsible AI implementation handled in a mid-market engagement?

Responsible AI is not a Phase 5 add-on. It is encoded into the architecture during Phase 2 as the rules that govern what the AI does autonomously, what requires human approval, and what gets escalated. Those rules are written down, version-controlled in your knowledge base, and visible to anyone who needs to audit them. The human side is built in the same way: the architecture assumes a human-in-the-loop pattern by default for any decision that touches customers, employees, money, or legal exposure, and the team is trained in Phase 4 to recognize when the AI is operating outside its lane and when to override it. Responsible AI at mid-market scale is mostly a clarity problem — making decisions about boundaries explicit, in writing, before the system ships — rather than a tooling problem.

Sources

- Beyond the Big 4: A Mid-Market Leader's Guide — bosio.digital. The competitive positioning context for why a different engagement model exists for mid-market firms.

- What Does AI Consulting Actually Cost? — bosio.digital. Deeper context on industry pricing benchmarks and ROI math.

- What to Ask an AI Consulting Firm Before You Sign Anything — bosio.digital. The diagnostic questions that should precede a commitment.

- What Does an AI Consultant Actually Do? — bosio.digital. The companion piece on the feel and quality of the work, complementing this article's focus on the specs and deliverables.

- Claude Is Becoming an Operating System — bosio.digital. The platform context that makes an architecture engagement possible.

- 80% of Companies Aren't Seeing AI ROI — bosio.digital. The architectural-decision framework (context, skills, governance, learning loops) this engagement implements.

- Stop Building Agents. Build Skills. — bosio.digital. The design principle behind Phase 3's skills library build.

- bosio.digital architecture engagement — production methodology, run for our own firm since the start of 2026 and delivered to clients on the same architecture.